NVIDIA GeForce 6800 Ultra: The Next Step Forward

by Derek Wilson on April 14, 2004 8:42 AM EST. Posted in GPUs

Anisotropic, Trilinear, and Antialiasing

There was a great deal of controversy last year over some of the "optimizations" NVIDIA included in some of their drivers. We have

NVIDIA's new driver defaults to the same adaptive anisotropic filtering and trilinear filtering optimizations they are currently using in the 50 series drivers, but users are now able to disable these features. Trilinear filtering optimizations can be turned off (doing full trilinear all the time), and a new "High Quality" rendering mode turns off adaptive anisotropic filtering. This means that anyone who wants (or needs) accurate trilinear and anisotropic filtering can now have it. The disabling of trilinear optimizations is currently available in the 56.72

Unfortunately, it seems that NVIDIA will be switching to a method of calculating anisotropic filtering based on a weighted Manhattan distance calculation. We appreciated the fact that NVIDIA's previous implementation of anisotropic filtering employed a Euclidean distance calculation, which is less sensitive to the orientation of a surface than a weighted Manhattan calculation.
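To make the orientation sensitivity concrete, here is a minimal sketch comparing the two kinds of distance calculation on a unit-length vector as it rotates about the z-axis. The function names and the weights (1.0 and 0.5) are assumptions chosen for illustration; neither vendor's actual hardware formula is given in this article.

```python
import math


def euclidean_length(dx: float, dy: float) -> float:
    """Exact length of the vector (dx, dy)."""
    return math.hypot(dx, dy)


def weighted_manhattan_length(dx: float, dy: float,
                              w_major: float = 1.0,
                              w_minor: float = 0.5) -> float:
    """Cheap length estimate: a weighted sum of the absolute components.

    The larger component gets w_major and the smaller gets w_minor.
    Both weights are illustrative assumptions, not hardware values.
    """
    a, b = abs(dx), abs(dy)
    return w_major * max(a, b) + w_minor * min(a, b)


def angle_sweep(step_degrees: int = 15):
    """Measure a unit-length vector at several rotations about the z-axis."""
    rows = []
    for deg in range(0, 91, step_degrees):
        theta = math.radians(deg)
        dx, dy = math.cos(theta), math.sin(theta)
        rows.append((deg,
                     euclidean_length(dx, dy),
                     weighted_manhattan_length(dx, dy)))
    return rows


if __name__ == "__main__":
    for deg, exact, approx in angle_sweep():
        print(f"{deg:3d} deg  euclidean={exact:.3f}  weighted_manhattan={approx:.3f}")
```

In this sketch the Euclidean result stays the same at every angle, while the weighted Manhattan estimate drifts as the vector rotates. A filtering level derived from the second kind of measurement would therefore vary with surface orientation, which is the behavior the paragraph above describes.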

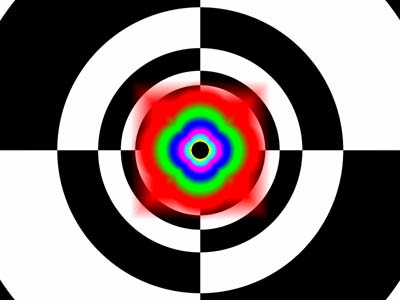

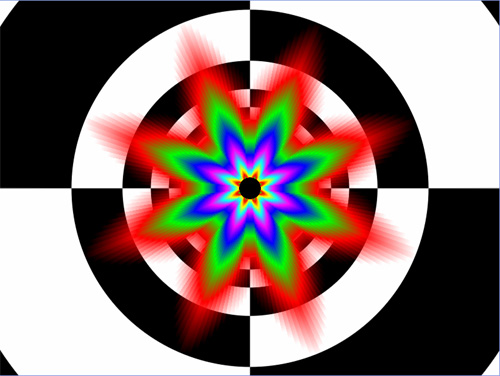

This is how NVIDIA used to do anisotropic filtering.

This is anisotropic filtering under the 60.72 driver.

This is how ATI does anisotropic filtering.

The advantage is that NVIDIA now takes a smaller performance hit when anisotropic filtering is enabled, and we will also be doing a more apples-to-apples comparison when it comes to anisotropic filtering (ATI also makes use of a weighted Manhattan scheme for distance calculations). In games where angled, textured surfaces rotate around the z-axis (the axis that comes "out" of the monitor) in a 3D world, both ATI and NVIDIA will show the same fluctuations in anisotropic rendering quality. We would have liked to see ATI alter their implementation rather than NVIDIA, but there is something to be said for both companies doing the same thing.

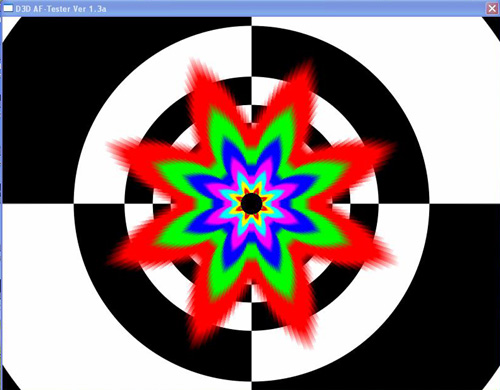

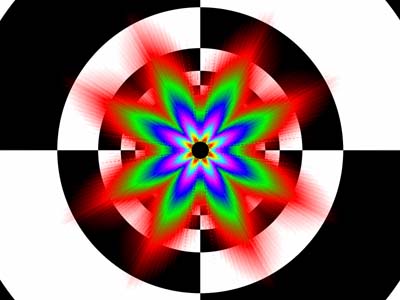

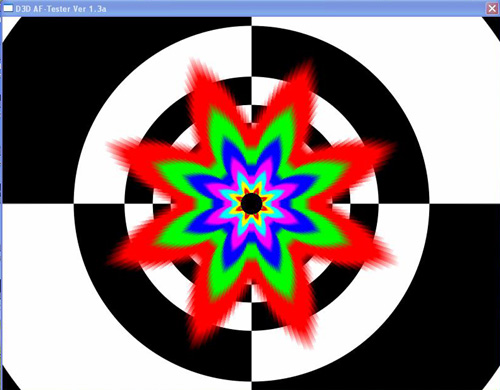

We had a little time to play with the D3D AF Tester that we used in last year's image quality article. We can confirm that turning off the trilinear filtering optimizations results in full trilinear being performed all the time. Previously, neither ATI nor NVIDIA did this much trilinear filtering, but check out the screenshots.

Trilinear optimizations enabled.

Trilinear optimizations disabled.
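For readers unfamiliar with what "full trilinear" means in practice, the sketch below contrasts a full blend between two mip levels with a reduced blend that only mixes levels near the transition. The reduced version is a hypothetical stand-in for a trilinear optimization; the article does not describe how NVIDIA's optimization works, and the `band` parameter is an assumption made purely for illustration.

```python
import math


def full_trilinear_weight(lod: float) -> float:
    """Blend weight toward the next (smaller) mip level.

    Full trilinear uses the fractional part of the level of detail,
    so the two mip levels are always mixed in proportion.
    """
    return lod - math.floor(lod)


def reduced_trilinear_weight(lod: float, band: float = 0.25) -> float:
    """Hypothetical optimized blend (an assumption, not NVIDIA's method).

    Only blends inside a window of width `band` centered on the midpoint
    between two mip levels; outside it, a single mip level is used,
    which behaves like plain bilinear filtering.
    """
    frac = lod - math.floor(lod)
    low, high = 0.5 - band / 2, 0.5 + band / 2
    if frac <= low:
        return 0.0
    if frac >= high:
        return 1.0
    return (frac - low) / (high - low)


if __name__ == "__main__":
    for lod in (1.0, 1.25, 1.5, 1.75, 1.9):
        print(f"lod={lod:.2f}  full={full_trilinear_weight(lod):.2f}  "
              f"reduced={reduced_trilinear_weight(lod):.2f}")
```

Under these assumptions, the reduced blend spends most of its range on a single mip level, which saves texture samples but can leave visible bands where the levels meet. Turning the optimization off corresponds to using the full blend everywhere.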

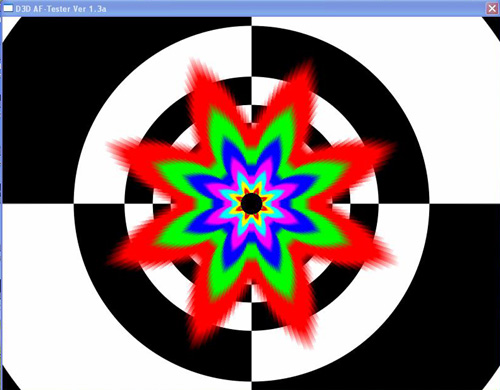

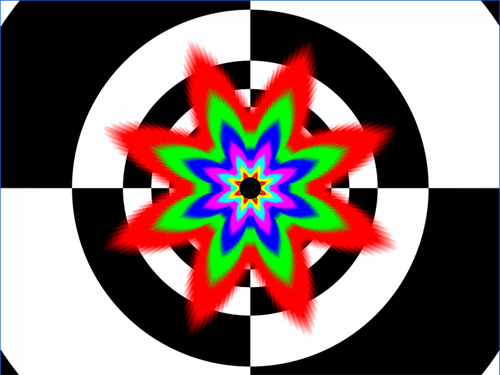

When comparing "Quality" mode to "High Quality" mode, we didn't observe any difference in the anisotropic rendering fidelity. Of course, this is still a beta driver, so everything might not be doing what it's supposed to be doing yet. We'll definitely keep on checking this as the driver matures. For now, take a look.

Quality Mode.

High Quality Mode.

On a very positive note, NVIDIA has finally adopted a rotated grid antialiasing scheme. Here we can take a glimpse at what the new method does for their rendering quality in Jedi Knight: Jedi Academy.

Jedi Knight without AA

Jedi Knight with 4x AA

It's nice to finally see such smooth near-vertical and near-horizontal lines from a graphics company other than ATI. Of course, ATI has yet to throw its offering into the ring, and it is very possible that they've raised their own bar for filtering quality.
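As a rough illustration of why a rotated grid helps near-vertical and near-horizontal edges, the sketch below counts how many distinct coverage values a 4x pattern can produce as an axis-aligned edge sweeps across one pixel. The sample positions are assumed, textbook-style layouts; the actual sample positions used by the 6800 Ultra are not given in this article.

```python
def ordered_grid_4x():
    """Assumed 2x2 ordered-grid sample positions inside a unit pixel."""
    return [(0.25, 0.25), (0.75, 0.25), (0.25, 0.75), (0.75, 0.75)]


def rotated_grid_4x():
    """Assumed rotated-grid layout: every sample has its own row and column."""
    return [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]


def coverage_levels(samples, axis):
    """Distinct coverage fractions as an axis-aligned edge crosses the pixel.

    axis=0 sweeps a vertical edge left to right; axis=1 sweeps a
    horizontal edge top to bottom.
    """
    n = len(samples)
    offsets = sorted({s[axis] for s in samples})
    levels = {0.0}
    for edge in offsets:
        covered = sum(1 for s in samples if s[axis] <= edge)
        levels.add(covered / n)
    return sorted(levels)


if __name__ == "__main__":
    for name, grid in (("ordered", ordered_grid_4x()),
                       ("rotated", rotated_grid_4x())):
        print(name, "vertical edge:", coverage_levels(grid, 0))
        print(name, "horizontal edge:", coverage_levels(grid, 1))
```

With these assumed positions, the ordered grid offers only one intermediate shade between "uncovered" and "fully covered" for an axis-aligned edge, while the rotated grid offers three. More intermediate shades mean smoother gradients on exactly the near-vertical and near-horizontal lines mentioned above.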

77 Comments


mkruer - Wednesday, April 14, 2004

Well I hope this card is on par with ATi's or vice versa. ATi is planning to sell their best at $500 a pop and Nvidia is selling theirs at $400. How long do you think ATi is going to sell their card for that price if the performance is virtually identical? Finally, in terms of power, it makes me wonder why PCI-Ex doesn't include enough voltage from the socket. VPUs are getting to the point that they are just as powerful and complex as their CPU brethren, and will have the same power requirements as the CPU. Someone didn't do their homework, I guess. Well, here's hoping that it will be in the next specification.

quikah - Wednesday, April 14, 2004

Can you post some screen shots of Far Cry? The demo at the launch event was pretty striking so I am wondering if PS 3 were actually enabled since you didn't see any difference.

Novaoblivion - Wednesday, April 14, 2004

Wow nice looking card I just hope the new ATI doesnt kick its ass lol

Rudee - Wednesday, April 14, 2004

When you factor in the upgrade price of a power supply and a top of the line CPU, this is going to be one heck of an expensive gaming experience. People will be wise to wait for ATI's newest flagship before they make any purchase decisions.

Pete - Wednesday, April 14, 2004

Nice review, Derek. Some impressive performance, but now I'm expecting more from ATi in both performance (due to higher clockspeed) and IQ (I'm curious if ATi improved their AF while nV dropped to around ATi's current level). I also have a sneaking suspicion nV may clock the 6800U higher at launch, but maybe they're just giving themselves room for 6850U and beyond (to scale with faster memory). But a $300 12-pipe 128MB 6800 should prove interesting competition to a ~$300 256MB 9800XT.

The editor in me can't refrain from offering two corrections: I'm pretty sure you meant to say Jen Hsun (not "Jensen") and well nigh (not "neigh").

Mithan - Wednesday, April 14, 2004

Looks like a fantastic card, however I will wait for the ATI numbers first :)

PS: Thanks for including the 9700 Pro. I own that and it was nice to see the difference.

dawurz - Wednesday, April 14, 2004

Derek, could you post the monitor you used (halo at 2048 rez), and any comments on the look of things at that monstrous a resolution?

Thanks.

rainypickles - Wednesday, April 14, 2004

does the size and the power requirement basically rule out using this beast of a card in a SFF machine?

Damarr - Wednesday, April 14, 2004

It was nice to see the 9700 Pro included in the benchmarks. Hopefully we'll see the same with the X800 Pro and XT so there can be a side-by-side comparison (should make picking a new card easier for 9700 Pro owners like myself :) ).

DerekWilson - Wednesday, April 14, 2004

We are planning on testing the actual power draw, but until then, NVIDIA is the one that said we needed to go with a 480W PS ... even making that suggestion limits their target demographic.

Though, it could simply be a limitation of the engineering sample we were all given... We'll just have to wait and see.