ATI Radeon X800 Pro and XT Platinum Edition: R420 Arrives

by Derek Wilson on May 4, 2004 10:28 AM EST. Posted in GPUs

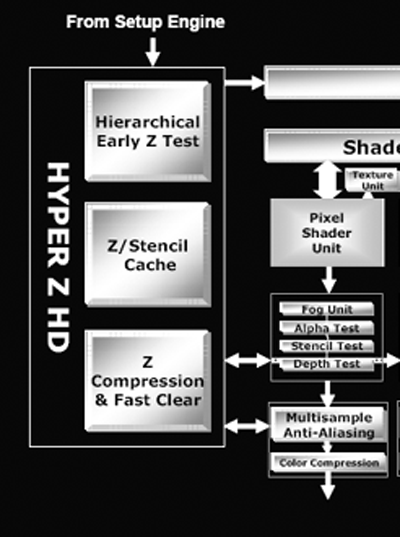

Depth and Stencil with Hyper Z HD

In keeping with its "High Definition Gaming" theme, ATI calls the R420's method of handling depth and stencil processing Hyper Z HD. Depth and stencil processing happens at multiple points throughout the pipeline, but grouping all of this hardware into one block makes sense because each step along the way touches the z-buffer (an on-die cache of z and stencil data). We have previously covered other incarnations of Hyper Z, which have done basically the same job. Here we can see where the Hyper Z HD functionality interfaces with the rendering pipeline:

The R420 architecture implements both hierarchical z and early z occlusion culling in the rendering pipeline.

With early z, as data emerges from the geometry processing portion of the GPU, it is possible to skip further rendering of large portions of the scene that are occluded (or covered) by other geometry. In this way, pixels that won't be seen don't need to run through the pixel shader pipelines and waste precious resources.

Hierarchical z indicates that large blocks of pixels are checked and thrown out if the entire tile is occluded. In R420, these tiles are the very same ones output by the geometry and setup engine. If only part of a tile is occluded, smaller subsections are checked and thrown out if possible. This processing doesn't eliminate all the occluded pixels, so pixels coming out of the pixel pipelines also need to be tested for visibility before they are drawn to the framebuffer. The real difference between R3xx and R420 is in the number of pixels that can be gracefully handled.
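To make the idea concrete, here is a minimal Python sketch of coarse-to-fine tile rejection followed by the final per-pixel test. It is an illustration under stated assumptions, not ATI's hardware algorithm: the function names, the 2x2 minimum tile size, the "smaller z is closer" convention, and the constant-depth incoming block are all hypothetical simplifications.

```python
def region_max_depth(depth, x0, y0, size):
    """Farthest stored depth in a square region.

    Real hardware would keep this value cached per tile; the sketch
    recomputes it on the fly to stay short.
    """
    return max(depth[y][x]
               for y in range(y0, y0 + size)
               for x in range(x0, x0 + size))


def hier_z_survivors(depth, x0, y0, size, frag_z, min_size=2):
    """Return the pixels of a constant-depth block that survive coarse culling.

    Assumes smaller z means closer to the viewer (a "less than" depth test).
    """
    # If the incoming block is no closer than the farthest stored depth,
    # every pixel in the region is hidden, so reject the whole region at once.
    if frag_z >= region_max_depth(depth, x0, y0, size):
        return []
    # At the smallest tile size, stop subdividing and pass the pixels on.
    if size <= min_size:
        return [(x, y)
                for y in range(y0, y0 + size)
                for x in range(x0, x0 + size)]
    # Partially occluded: check the four smaller subsections.
    half = size // 2
    survivors = []
    for dy in (0, half):
        for dx in (0, half):
            survivors += hier_z_survivors(depth, x0 + dx, y0 + dy,
                                          half, frag_z, min_size)
    return survivors


def late_z_test(depth, pixels, frag_z):
    """Exact per-pixel test applied to whatever the coarse pass let through."""
    return [(x, y) for (x, y) in pixels if frag_z < depth[y][x]]


# 4x4 depth buffer: a near occluder (0.2) on the left, far background (1.0)
# on the right, plus one stray near pixel in the top-right corner.
depth = [[0.2, 0.2, 1.0, 1.0] for _ in range(4)]
depth[0][3] = 0.2

coarse = hier_z_survivors(depth, 0, 0, 4, frag_z=0.5)
visible = late_z_test(depth, coarse, frag_z=0.5)
```

In this example the coarse pass rejects the two left-hand 2x2 tiles outright, but the stray near pixel at (3, 0) sits inside a tile that is only partly occluded, so it slips through and is caught by the per-pixel test. That is the reason a visibility test is still needed after the pixel pipelines.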

As rasterization draws nearer, the ATI and NVIDIA architectures begin to differentiate themselves more. Both claim that they are able to calculate up to 32 z or stencil operations per clock, but the conditions under which this is true are different. NV40 is able to push two z/stencil operations per pixel pipeline during a z or stencil only pass or in other cases when no color data is being dealt with (the color unit in NV40 can work with z/stencil data when no color computation is needed). By contrast, R420 pushes 32 z/stencil operations per clock cycle when antialiasing is enabled (one z/stencil operation can be completed per clock at the end of each pixel pipeline, and one z/stencil operation can be completed inside the multisample AA unit).

The different approaches these architectures take mean that each will excel in different ways when dealing with z or stencil data. Under R420, z/stencil speed will be maximized when antialiasing is enabled and will only see 16 z/stencil operations per clock under non-antialiased rendering. NV40 will achieve maximum z/stencil performance when a z/stencil only pass is performed regardless of the state of antialiasing.
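The peak figures quoted above reduce to simple arithmetic, sketched below in Python. The pipeline count of 16 is an assumption inferred from the 16 and 32 figures in the text, the function names are hypothetical, and treating NV40 as exactly one operation per pipeline whenever color is written is a simplification of its real behavior.

```python
PIPELINES = 16  # assumed pipeline count, implied by the 16 and 32 figures


def r420_z_ops_per_clock(antialiasing, pipelines=PIPELINES):
    """Peak z/stencil ops per clock for R420 under this simplified model."""
    # One op at the end of each pixel pipeline, plus one more inside the
    # multisample AA unit when antialiasing is enabled.
    per_pipeline = 2 if antialiasing else 1
    return per_pipeline * pipelines


def nv40_z_ops_per_clock(color_writes, pipelines=PIPELINES):
    """Peak z/stencil ops per clock for NV40 under this simplified model."""
    # The color unit can take a second z/stencil op only when no color
    # computation is needed (for example, a z or stencil only pass).
    per_pipeline = 1 if color_writes else 2
    return per_pipeline * pipelines


for aa in (False, True):
    print("R420, antialiasing =", aa, "->", r420_z_ops_per_clock(aa))
for color in (True, False):
    print("NV40, color writes =", color, "->", nv40_z_ops_per_clock(color))
```

The point of the model is that each chip reaches its doubled rate under a different condition: R420's depends on a user setting (antialiasing), while NV40's depends on how the developer structures rendering passes.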

The average case for NV40 will be closer to 16 z/stencil operations per clock, and users who don't run antialiasing on R420 won't see more than 16 z/stencil operations per clock. If everyone begins to enable antialiasing, R420 will begin to shine in real world situations, and if developers embrace z or stencil only passes (such as in Doom III), NV40 will do very well. Which approach is better will be decided by the direction users and developers take in the future. Will enabling antialiasing win out over running at ultra-high resolutions? Will developers mimic John Carmack and the intensive shadowing capabilities of Doom III? Both scenarios could play out simultaneously, but only time will tell.

95 Comments

NullSubroutine - Thursday, May 6, 2004 - link

Trog, I agree with you for the most part, but there are some people who can use upgrades. I myself have bought expensive video cards in the past. I got the GeForce3 right when it came out (in a top-of-the-line Alienware system for 1400 bucks), and it lasted me for 2-3 years. Now if someone spends 400-500 bucks on a video card that lasts them that long (2-3 years), it's no different than if someone buys a 200 buck video card every year. I am one of those people who likes to buy new components when computing speed doubles, and if I have the money I'll get what I can that will last me the longest. If I can't afford top of the line I'll get something that will get me by (9500 Pro, the last card I bought, for 170 over a year ago).

However, I do agree with you that people who upgrade to the best every generation are silly.

TrogdorJW - Thursday, May 6, 2004 - link

I'm sorry, but I simply have to laugh at anyone going on and on about how they're going to run out and buy the latest graphics cards from ATI or Nvidia right now. $400 to $500 for a graphics card is simply too much (and it's too much for a CPU as well). Besides, unless you have some dementia that requires you to run all games at 1600x1200 with 4xAA and 8xAF, there's very little need for either the 6800 Ultra or the X800 XT right now. Relax, take a deep breath, save some money, and forget about the pissing contest.

So, is it just me, or is there an inverse relationship between a person's cost of computer hardware and their actual knowledge of computers? I have a coworker that is always spending money on upgrading his PC, and he really has no idea what he's doing. He went from an Athlon XP 2800+ (OC'ed to 2.4 GHz) to a P4 2.8 OC'ed to 3.7 GHz. He also went from a 9800 Pro 256 to a 9800 XT. In the past, he also had a GeForce FX 5900 Ultra. He tries to overclock all of his systems, they sound like a jet engine, and none of them are actually fully stable. In the last year, he has spent roughly $5000 on computer parts (although he has sold off some of the "old" parts like the 5900 Ultra). Performance of his system has probably improved by about 25% over the course of the year.

Sorry for the rant, but behavior like that from *anybody* is just plain stupid. He's gone from 120 FPS in some games up to 150 FPS. Anyone here actually think he can tell the difference? I suppose it goes without saying that he's constantly crowing about his 3DMark scores. Now he's all hot to go out and buy the X800 XT cards, and he's been asking me when they'll be in stores. Like I care. They're nice cards, I'm sure, but why buy them before you actually have a game that needs the added performance?

His current games du jour? Battlefield 1942 and Battlefield Vietnam. Yeah... those really need a high performance DX9 card. The 80+ FPS of the 9800 XT he has just isn't cutting it.

So, if you read my description of this guy and think I'm way off base, go get your head examined. Save your money, because some day down the road you will be glad that you didn't spend everything you earned on computer parts. Enjoy life, sure, but having a faster car, faster computer, bigger house, etc. than someone else is worth pretty much jack and shit when it all comes down to it.

/Rant. :D

a2y - Thursday, May 6, 2004 - link

If a card is going to come up every few weeks then how do you guys choose which to buy?

ATI have the trade-up section for old cards, is that any good?

gxshockwav - Thursday, May 6, 2004 - link

Um...what happened to the posting of new Ge6 6850 benchmark numbers?

NullSubroutine - Thursday, May 6, 2004 - link

Trog, it's good to hear you were being nice, but I wasn't bashing THG. I love that site (besides this one) and I get a lot of my tech info from there.

What I normally do, though, is take benchmarks from different sites, put them in Excel, make a little graph, and see the % point differences between the tests. If you plan on buying a new vid card it's important to find out if the Nvidia or ATi card is faster on your type of system.

And what I found is that the AMD system from Atech performed better with Nvidia, and the Intel system from THG performed better with ATi (Farcry and Unreal2004 being the only somewhat similar tests).

#61 How much money did ATi spend when developing the R3xx line? I would venture to say a decent amount...sometimes companies invest more money in a design, then refine it several times (at less cost) before starting from scratch again. ATi and Nvidia have done this for quite a while. Also, from what I've heard the R3xx had the possibility of 16 pipes to begin with..is this true, anyone?

Texture memory above 256 doesn't really matter now b/c of the insane bandwidth 8x AGP has to offer; however, one might see that 512 may come in handy after Doom3 comes out since they use shitloads of high res textures instead of high polygons for a lot of detail. I don't see 512 coming out for a little while, especially with RAM prices.

deathwalker - Thursday, May 6, 2004 - link

Well...once again..someone is lying through their teeth. What happened to the $399 entry price of the Pro model? Cheapest price on Pricewatch is $478. Someone trying to cash in on the new buyer hysteria? I am impressed though with ATI's ability to step up to the plate and steal Nvidia's thunder.

a2y - Thursday, May 6, 2004 - link

OMG OMG!! I almost went to buy and build a new system with the latest specs and graphics card! And I was going for the nVidia 6800 Ultra! Until just now, when I decided to see any news from ATI and discovered their new card!

Man, if ATI and nVidia are going to bring up a card every 2/3 weeks then I'll never be able to build this system!!!

Being a (pre)fan of Half-Life 2, I guess I'm going to wait until it's released to buy a graphics card (meaning when we all die and go to hell).

remy - Wednesday, May 5, 2004 - link

For the OpenGL vs D3D performance argument don't forget to take a look at Homeworld2 as it is an OpenGL game. ATI's hardware certainly seems to have come a long way since the 9700 Pro in that game!

TrogdorJW - Wednesday, May 5, 2004 - link

NullSubroutine - It was meant as nice sarcasm, more or less. No offense intended. (I was also trying to head off this thread becoming a "THG sucks blah blah blah" tangent, as many in the past have done when someone mentions their reviews.)

My basic point (without doing a ton of research) is that pretty much every hardware site has their own demos that they use for benchmarking. Given that the performance difference between the ATI and Nvidia cards was relatively constant (I think), it's generally safe to assume that the levels, setup, bots, etc. are not the same when you see differing scores. Now if you see two places using the same demo and the same system setup, and there's a big difference, then you can worry. I usually don't bother comparing benchmark numbers from two different sites since they are almost never the same configuration.