ATI Radeon X800 Pro and XT Platinum Edition: R420 Arrives

by Derek Wilson on May 4, 2004 10:28 AM EST - Posted in GPUs

The Pixel Shader Engine

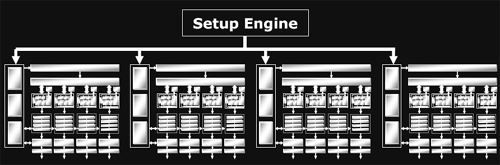

On par with what we have seen from NVIDIA, ATI's top-of-the-line card is offering a GPU with a 16x1 pixel pipeline architecture. This means that it is able to render up to 16 single-textured pixels in parallel per clock. As previously alluded to, R420 divides its pixel pipes into groups of four called quads. The new line of ATI GPUs will offer anywhere from one to four quad pipelines. The R3xx architecture offers an 8x1 pixel pipeline layout (grouped into two quad pipelines), delivering half of R420's pixel processing power per clock. For both R420 and R3xx, certain resources are shared between individual pixel pipelines in each quad. It makes a lot of sense to share local memory among quad members, as pixels near each other on the screen should have data (especially texture data) with a high locality of reference. At this level of abstraction, things are essentially the same as NV40's architecture.
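The quad arithmetic above can be sketched in a few lines of Python. This is only an illustration of the peak numbers quoted in the text; the function name `pixels_per_clock` is ours, not anything from ATI's documentation.

```python
PIPES_PER_QUAD = 4  # from the text: pixel pipes are grouped into quads of four


def pixels_per_clock(quads: int) -> int:
    """Peak single-textured pixels per clock for a given number of quad pipelines."""
    if quads < 1:
        raise ValueError("a GPU needs at least one quad pipeline")
    return quads * PIPES_PER_QUAD


r420_top = pixels_per_clock(4)  # the 16x1 layout of the top R420 part
r3xx = pixels_per_clock(2)      # the 8x1 layout of R3xx

# The R420 line is described as offering one to four quads.
for quads in range(1, 5):
    print(f"{quads} quad(s): {pixels_per_clock(quads)} pixels per clock")

print(f"R3xx relative to top R420: {r3xx / r420_top:.0%}")
```

These are per-clock peaks only; actual throughput also depends on clock speed and on how much work each pipeline gets done, which is what the rest of this page looks at.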

Of course, it isn't enough to just look at how many pixel pipelines are available: we must also discover how much work each pipeline is able to get done. As we saw in our final analysis of what went wrong with NV3x, the internals of a shader unit can have a very large impact on the ability of the GPU to schedule and execute shader code quickly and efficiently.

At our first introduction, the inside of R420's pixel pipeline was presented as a collection of 2 vector units, 2 scalar units, and one texture unit that can all work in parallel. We've seen the two math and one texture layout of NV40's pixel pipeline, but does this mean that R420 will be able to completely blow NV40 out of the water? In short, no: it's all about what kind of work these different units can do.

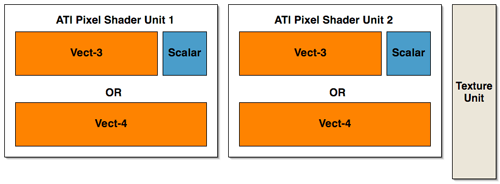

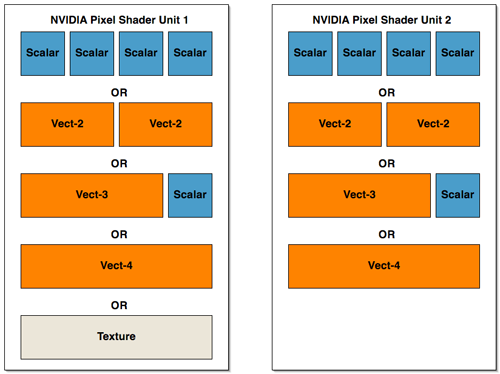

Lifting up the hood, we see that ATI has taken a different approach to presenting their architecture than NVIDIA. ATI's presentation of 2 vector units (which are 3 wide at 72 bits), 2 scalar units (24 bits), and a texture unit may be more reflective of their implementation than what NVIDIA has shown (but we really can't know this without many more low level details). NVIDIA's hardware isn't quite as straightforward as it may end up looking to software. The fact is that we could look at the shader units in NV40's pixel pipeline in the same way as ATI's hardware (with the exception of the fact that the texture unit shares some functionality with one of the math units). We could also look at the NV40 architecture as being 4 2-wide vector units or 2 4-wide vector units (though this is still an oversimplification, as there are special cases NVIDIA's compiler can exploit that allow more work to be done in parallel). If ATI had decided to present its architecture in the same way as NVIDIA, we would have seen 2 shader math units and one completely independent texture unit.

In order to gain better understanding, here is a diagram of the parallelism and functionality of the shader units within the pixel pipelines of R420 and NV40:

ATI has essentially three large blocks that can push up to 5 operations per clock cycle.

NV40 can be seen as two blocks of a more amorphous configuration (but there are special cases that allow some of these parts to work at the same time within each block).

Interestingly enough, there haven't been any changes to the block diagram of a pixel pipeline at this level of detail from R3xx to R420.
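As a rough check on the "up to 5 operations per clock" figure, here is a minimal Python sketch that simply counts the units ATI presented for one R420 pixel pipeline. The class and function names are our own, and the chip-wide number is plain multiplication of peak figures, not a measured result.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class R420Pipe:
    """Unit counts for one pixel pipeline, as presented by ATI."""
    vector_units: int = 2   # each 3 components wide
    scalar_units: int = 2
    texture_units: int = 1

    def peak_ops_per_clock(self) -> int:
        # Every unit can issue one operation in the same clock.
        return self.vector_units + self.scalar_units + self.texture_units

    @staticmethod
    def vector_unit_bits(components: int = 3, bits_per_component: int = 24) -> int:
        # 3 components at 24 bits each gives the 72-bit width quoted above.
        return components * bits_per_component


def chip_peak_ops(pipe: R420Pipe, pipelines: int) -> int:
    """Peak operations per clock across all pipelines (an upper bound only)."""
    return pipe.peak_ops_per_clock() * pipelines


pipe = R420Pipe()
print(pipe.peak_ops_per_clock())   # operations per clock, per pipeline
print(pipe.vector_unit_bits())     # width of one vector unit in bits
print(chip_peak_ops(pipe, 16))     # 16-pipeline part, theoretical peak
```

Real shader code will rarely fill every slot on every clock, so these numbers are ceilings rather than expectations.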

The big difference in the pixel pipe architectures that gives the R420 GPU a possible upper hand in performance over NV40 is that texture operations can be done entirely in parallel with the other math units. When NV40 needs to execute a texture operation, it loses much of its math processing power (the texturing unit cannot operate totally independently of the first shader unit in the NV40 pixel pipeline). This is also a feature of R3xx that carried over to R420.
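To make the difference concrete, here is a deliberately simplified toy model in Python. It is not a description of either chip's real scheduler. We assume each pipeline has two math issue slots per clock, that one texture operation issues per clock, that an independent texture unit costs no math slots, and that a shared texture unit takes one math slot away for that clock. The function names and the slot counts are our assumptions, and the model ignores the special cases NVIDIA's compiler can exploit.

```python
from math import ceil


def cycles_independent_texture(math_ops: int, tex_ops: int, math_slots: int = 2) -> int:
    """Texture unit runs fully in parallel with math (R420-style in this toy model)."""
    return max(ceil(math_ops / math_slots), tex_ops)


def cycles_shared_texture(math_ops: int, tex_ops: int, math_slots: int = 2) -> int:
    """A texture op occupies one math slot for its clock (simplified NV40-style)."""
    # Math that can be squeezed in alongside texturing, one slot fewer per clock.
    math_during_tex = min(math_ops, tex_ops * (math_slots - 1))
    remaining_math = math_ops - math_during_tex
    return tex_ops + ceil(remaining_math / math_slots)


# A shader with a little more math than texturing (hypothetical instruction mix).
print(cycles_independent_texture(6, 2))
print(cycles_shared_texture(6, 2))

# A texture-bound shader: both toy models take the same number of clocks.
print(cycles_independent_texture(2, 4))
print(cycles_shared_texture(2, 4))
```

In this toy model the independent texture unit only pulls ahead when there is more math than texturing to do, which lines up with the kind of code where the gap would be expected to show.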

Understanding what this all means in terms of shader performance depends on the kind of code developers end up writing. We wanted to dedicate some time to hand mapping some shader code to both architectures' pixel pipelines in order to explain how each GPU handled different situations. Trial and error have led us to the conclusion that video card drivers have their work cut out for them when trying to optimize code, especially for NV40. There are multiple special cases that allow NVIDIA's architecture to schedule instructions during texturing operations on the shared math/texture unit, and some of the "OR" cases from our previous diagram of parallelism can be massaged into "AND" cases when the right instructions are involved. This also indicates that performance gains due to compiler optimizations could be in NV40's future.

Generally, when running code with mixed math and texturing (with a little more math than texturing), ATI will lead in performance. This case is probably the most indicative of real code.

The real enhancements to the R420 pixel pipeline are deep within the engine. ATI hasn't disclosed to us the number of internal registers their architectures have, or how many pixels each GPU can maintain in flight at any given time, or even cache hit/miss latencies. We do know that, in addition to the extra registers (32 constant and 32 temp registers, up from 12) and longer shaders (somewhere between 512 and 1536 instructions depending on what's being done) available to developers on R420, the number of internal registers has increased and the maximum number of pixels in flight has increased. These facts are really important in understanding performance. The fundamental layout of the pixel pipelines in R420 and NV40 is not that different, but the underlying hardware is where the power comes from. In this case, the number of internal pipeline stages in each pixel pipeline and the ability of the hardware to hide the latency of a texture fetch are of the utmost importance.
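Why pixels in flight matter for latency hiding can be shown with a standard back-of-the-envelope model, sketched here in Python. ATI has not disclosed real latencies, so every number below is hypothetical, and the function names are ours. We assume each pixel alternates between a burst of math and one texture fetch, and that the pipeline can switch to another pixel while a fetch is outstanding.

```python
from math import ceil


def alu_utilization(pixels_in_flight: int, math_cycles: int, fetch_latency: int) -> float:
    """Fraction of clocks the math units stay busy in this simple model."""
    if math_cycles <= 0:
        raise ValueError("math_cycles must be positive")
    busy = pixels_in_flight * math_cycles   # math available to cover one period
    period = math_cycles + fetch_latency    # one pixel's math burst plus its fetch
    return min(1.0, busy / period)


def pixels_needed_to_hide(math_cycles: int, fetch_latency: int) -> int:
    """Smallest number of pixels in flight that keeps the math units fully busy."""
    if math_cycles <= 0:
        raise ValueError("math_cycles must be positive")
    return ceil((math_cycles + fetch_latency) / math_cycles)


# Hypothetical figures: 4 clocks of math per fetch, 100 clocks of fetch latency.
print(pixels_needed_to_hide(4, 100))
print(alu_utilization(13, 4, 100))
print(alu_utilization(26, 4, 100))
```

The point of the sketch is only the shape of the relationship: the longer the fetch latency relative to the math between fetches, the more pixels (and the more registers to hold their state) the hardware needs to keep in flight.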

The bottom line is that R420 has the potential to execute more PS 2.0 instructions per clock than NVIDIA in the pixel pipeline because of the way it handles texturing. Even though NVIDIA's scheduler can help to allow more math to be done in parallel with texturing, NV40's texture and math parallelism only approaches that of ATI. Combine that with the fact that R420 runs at a higher clock speed than NV40, and even more pixel shader work can get done in the same amount of time on R420 (which translates into the possibility for frames being rendered faster under the right conditions).

Of course, when working with fp32 data, NV40 is doing about a third more "work" per operation than R420 does at fp24 (32 bits per component versus 24), and it's likely that the support for fp32 from the front of the shader pipeline to the back contributes greatly to the gap in the transistor count (as well as performance numbers). When fp16 is enabled in NV40, internal register pressure is decreased, and less work is being done than in fp32 mode. This results in improved performance for NV40, but questions abound as to real world image quality from NVIDIA's compiler and precision optimized shaders (we are currently exploring this issue and will be following up with a full image quality analysis of now-current-generation hardware).
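The bit-width ratios behind the fp32, fp24 and fp16 comparison can be checked directly. The short Python sketch below assumes, as a simplification, that "work" scales with the number of bits per component; the helper name is ours.

```python
from fractions import Fraction


def relative_width(bits: int, baseline_bits: int) -> Fraction:
    """Width of one format relative to another, as an exact fraction."""
    return Fraction(bits, baseline_bits)


fp32_vs_fp24 = relative_width(32, 24)  # fp32 relative to fp24
fp24_vs_fp32 = relative_width(24, 32)  # fp24 relative to fp32
fp16_vs_fp32 = relative_width(16, 32)  # fp16 relative to fp32

print(f"fp32 carries {float(fp32_vs_fp24 - 1):.1%} more bits than fp24")
print(f"fp24 carries {float(1 - fp24_vs_fp32):.1%} fewer bits than fp32")
print(f"fp16 carries {float(1 - fp16_vs_fp32):.1%} fewer bits than fp32")
```

Note that the direction of the comparison matters: 24 bits is 25% less than 32, while 32 bits is one third more than 24.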

As an extension of the fp32 vs. fp24 vs. fp16 debate, NV40's support of Shader Model 3.0 puts it at a slight performance disadvantage. By supporting fp32 all the way through the shader pipeline, flow control, fp16 to the framebuffer and all the other bells and whistles that have come along for the ride, NV40 adds complexity to the hardware, and size to the die. The downside for R420 is that it now lags behind on the feature set front. As we pointed out earlier, the only really new features of the R420 pixel shaders are: higher instruction count shader programs, 32 temporary registers, and a polygon facing register (which can help enable two-sided lighting).

To round out the enhancements to the R420's pixel pipeline, ATI's F-Buffer has been tweaked. The F-Buffer is what ATI calls the memory that stores pixels that have come out of the pixel shader but still require another pass (or more) through the pixel shader pipeline in order to finish being processed. Since the F-Buffer can require anywhere from no memory to enough memory to handle every pixel coming down the pipeline, ATI has built "improved" memory management hardware into the GPU rather than relegating this task to the driver.
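The range of memory the F-Buffer might need can be illustrated with a small Python simulation. This is only a conceptual sketch of a FIFO that holds pixels awaiting another shader pass; it says nothing about how ATI's hardware actually manages the memory. The function name, the pixel identifiers and the pass counts are all hypothetical, and we assume every pixel needs at least one pass.

```python
from collections import deque


def run_multipass(pixels, passes_needed):
    """Shade pixels, queueing any that need further passes.

    Returns the pixels in completion order and the peak queue occupancy.
    """
    fbuffer = deque()   # pixels that left the shader but are not finished
    peak = 0
    finished = []

    # First pass: every pixel goes through the shader once.
    for pixel in pixels:
        remaining = passes_needed[pixel] - 1
        if remaining > 0:
            fbuffer.append((pixel, remaining))
            peak = max(peak, len(fbuffer))
        else:
            finished.append(pixel)

    # Later passes: drain the buffer, re-queueing pixels that still need work.
    while fbuffer:
        pixel, remaining = fbuffer.popleft()
        remaining -= 1
        if remaining > 0:
            fbuffer.append((pixel, remaining))
        else:
            finished.append(pixel)

    return finished, peak


pixels = [0, 1, 2, 3]
print(run_multipass(pixels, {0: 1, 1: 1, 2: 1, 3: 1}))  # single-pass shader
print(run_multipass(pixels, {0: 2, 1: 2, 2: 2, 3: 2}))  # every pixel needs a second pass
print(run_multipass(pixels, {0: 1, 1: 3, 2: 2, 3: 1}))  # mixed workload
```

The single-pass case never touches the buffer, while the all-multipass case needs room for every pixel, which is the spread that makes dedicated memory management attractive.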

95 Comments

413xram - Wednesday, May 5, 2004 - link

They announced they were going to in their release anyway. Later on this summer. Why not now?

jensend - Wednesday, May 5, 2004 - link

#61- nuts. 512 mb ram will pull loads more power, put out a lot more heat, cost a great deal more (especially now, since ram prices are sky-high), and give negligible if any performance gains. Heck, even 256 mb is still primarily a marketing gimmick.

413xram - Wednesday, May 5, 2004 - link

They (ATI) are using the same technology that their previous cards are using. They pretty much just added more transistors to perform more functions at a higher speed. I am willing to bet my paycheck that they spent nowhere close to 400 million dollars to run neck and neck with nvidia in performance. I guess "virtually nothing" is an overstatement. My apologies.

Phiro - Wednesday, May 5, 2004 - link

Where do you get your info that ATI spent "virtually nothing"?

413xram - Wednesday, May 5, 2004 - link

Both cards perform brilliantly. They are truly a huge step in graphics processing. One problem I foresee, though, is that Nvidia spent 400 million dollars on development of their new nv40 technology, while ATI spent virtually nothing to have the same performance gains. Economically that is a hard pill for Nvidia to swallow.

It is true that Nvidia's card has the 3.0 pixel shading; unfortunately, though, they are banking on hardware that is not supported upon release of the card. In dealing with video cards from a consumer's standpoint that is a hard sell. I have learned from the past that future possibilities of technology in hardware do nothing for me today. Not to mention the power supply issue, which does not help either.

Nvidia must find a way to get better performance out of their new card (I can't believe I'm saying that after seeing the specs that it already performs at), or it may be a long, HOT, and expensive summer for them.

P.S. Nvidia. A little advice. Speed up the release on your 512 mb card. That would definitely sell me. Overclocking your 6800 is something that 90% of us in this forum would do anyway.

theIrish1 - Wednesday, May 5, 2004 - link

heh, whatever.. whatever, and whatever. I love the fanboyisms....

I admit I am a fan of ATI cards. I bought a 9700pro and a 9500pro (in my secondary gaming rig) when they first came out, and an 8500 "pro" before that...but now I want to upgrade again. I am keeping an open mind. After looking at benchmarks, it is clear that both cards have their wins and losses depending on the test. I don't think there is a clear cut winner. nVidia got there by new innovation/technology. ATI got there by optimizing "older" technology.

At this point, with pricing being the same.. I think I still have to lean to the ATI cards. Main reasons being heat & power consumption. If the 6800U was $75 or $100 cheaper, I would probably go with that. It will be interesting to see where the 6850 falls benchmark wise, and also in pricing. If the 6850 takes the $500 pricepoint, where will that leave the 6800U? $450? Or will the 6850 be $550?

Something else about the x800Pro (which, by the way, a lot of the readers/posters seem to be getting confused about, as to whether they are talking about the Pro or the XT model). Anyway, there are a few online stores out there still taking pre-orders for the x800PRO.... for $500+. I thought the Pro was going to go at $400 and the XT at $500...?!?

413xram - Wednesday, May 5, 2004 - link

Pumpkinierre - Wednesday, May 5, 2004 - link

On the fabrication of the two GPUs, the Tech Report: "Regardless, transistor counts are less important, in reality, than die size, and we can measure that. ATI's chips are manufactured by TSMC on a 0.13-micron, low-k "Black Diamond" process. The use of a low-capacitance dielectric can reduce crosstalk and allow a chip to run at higher speeds with less power consumption. NVIDIA's NV40, meanwhile, is manufactured by IBM on its 0.13-micron fab process, though without the benefit of a low-k dielectric."

The extra transistors of the 6800U might be taken up with the cinematic encoding/rendering embedded chip. Although ATI claim encoding in their X800p/XT blurb, I haven't seen much yet to distinguish it from the 9800p in this field. The Tech Report checked power consumption at the wall for their test systems, and the 6800s ramp up the power a lot quicker with gpu speed, so I'm not too hopeful about the overclock to 520MHz and 6800u extreme gpu yields. Still, maybe a new stepping or 90nm SOI shrink might help (I noticed both manufacturers shied away from 90nm).

Anyway brilliant video cards from North America. Congratulations ATI and Nvidia!

NullSubroutine - Wednesday, May 5, 2004 - link

If it was nice sarcasm I can laugh, if it was nasty sarcasm you can back off. I can see it would be simple for me to overlook the map used, however there was no indication of what Atech used. One could assume, or someone could ask for the real answer, and if they are really lucky they will get a smart ass remark.

After checking through 10 different reviews I found similar results to Atech when they had 25 bots; THG had none.

Next time save us both the hassle and just say THG didnt use bots, and Atech probably did.

TrogdorJW - Tuesday, May 4, 2004 - link

#54 - Think about things for a minute. Gee... I wonder why THG and AT got such different scores on UT2K4.... Might it be something like the selection of map and the demo used? Nah, that would be too simple. /sarcasm

From THG: "For our tests in UT2004 we used our own timedemo on the map Assault-Torlan (no bots). All quality options are set to maximum."

No clear indication of what was used for the map or demo on AT, but I'm pretty sure that it was also a home-brewed demo, and likely on a different map and perhaps with a different number of players. Clearly, though, it was not the same demo as THG used... unless THG is in the habit of giving their benchmarking demos out? Didn't think so.

I see questions like this all the time. Unless two sites use the exact same settings, it's almost impossible to directly compare their scores. There is no conspiracy, though. Both sites pretty much say the same thing: close match, with the edge going to ATI right now, especially in DX9, while NV still reigns supreme in OGL.