Western Digital's Raptors in RAID-0: Are two drives better than one?

by Anand Lal Shimpi on July 1, 2004 12:00 PM EST, posted in Storage

Game Loading Performance

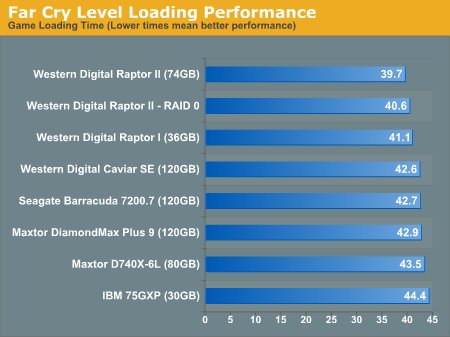

For our game loading tests, we used two games: Far Cry and Unreal Tournament 2004. Both games were installed in full to a clean drive with nothing else on it (the OS is located on a separate drive), and we used no-CD patches so that accesses to the CD/DVD drive would not skew the loading process.

Our Far Cry test consists of starting a campaign at the default difficulty level, hitting Escape to skip the introductory movie, and starting the stopwatch at the first sight of the loading screen. The stopwatch is stopped as soon as the loading screen disappears. The test is repeated three times, and the final score is the average of the three. To avoid the effects of caching, we reboot between runs. All times are reported in seconds; lower scores are, obviously, better.

We were hoping to see some performance increase in the game loading tests, but the RAID array didn't deliver one. The RAID-0 array actually scores slightly slower than the single Raptor II, but remember that these scores are timed by hand, so the difference falls within the normal variation of this "benchmark".
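The "normal variation" argument can be made concrete. The sketch below is illustrative only: the stopwatch readings are hypothetical values, not the article's measurements, and the rule it applies (treat a gap as noise when it is no larger than the combined run-to-run ranges) is a crude heuristic chosen for this example, not the method used in the article. It averages three runs per configuration, as the methodology above describes.

```python
from statistics import mean


def summarize(runs):
    """Return (mean, spread) for hand-timed runs in seconds.

    The spread is the max-min range, a crude measure of run-to-run noise.
    """
    return mean(runs), max(runs) - min(runs)


def gap_is_noise(runs_a, runs_b):
    """Heuristic (an assumption for this sketch): the difference between two
    configurations counts as noise when the gap between their means is no
    larger than the sum of their run-to-run ranges."""
    mean_a, spread_a = summarize(runs_a)
    mean_b, spread_b = summarize(runs_b)
    return abs(mean_a - mean_b) <= spread_a + spread_b


# Hypothetical stopwatch readings in seconds, one reboot before each run.
single_drive = [10.2, 10.5, 10.3]
raid0 = [10.4, 10.6, 10.3]
print(summarize(single_drive))
print(gap_is_noise(single_drive, raid0))
```

With three hand-timed runs per setup, a gap of a tenth of a second is well inside the spread, which is the situation the paragraph above describes.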

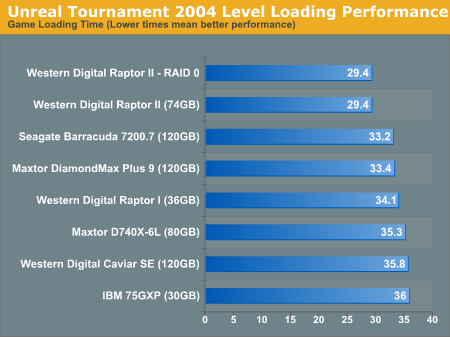

Our Unreal Tournament 2004 test uses the full version of the game with all settings left at their defaults. After launching the game, we select Instant Action from the menu, choose Assault mode, and select the Robot Factory level. The stopwatch is started right after the Play button is clicked and stopped when the loading screen disappears. The test is repeated three times, and the final score is the average of the three. To avoid the effects of caching, we reboot between runs. All times are reported in seconds; lower scores are, obviously, better.

In Unreal Tournament, RAID-0 brings no performance improvement at all.

127 Comments


Arth1 - Thursday, July 1, 2004

The article contains several factual errors. RAID 1, for example, does have *read* speed benefits over a single drive, as you can read one block from one drive and the next block from the other drive at the same time.
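The RAID 1 read argument can be illustrated with a minimal sketch. It assumes a hypothetical two-way mirror using a simple round-robin read policy; real controllers may instead pick the idle drive or the one whose heads are closest. Every write must update both copies, while successive reads alternate between drives, so two reads can be serviced at once.

```python
class Mirror:
    """Hypothetical RAID 1 mirror with a round-robin read policy."""

    def __init__(self, drives=2):
        self.drives = drives
        self.next_read = 0
        self.reads = [0] * drives   # reads served by each drive
        self.writes = [0] * drives  # writes performed by each drive

    def write(self, block):
        # Every write must update all copies, so each drive does the work.
        # (The block number is not used by this simple model.)
        for d in range(self.drives):
            self.writes[d] += 1

    def read(self, block):
        # Any copy can serve a read; rotate through drives to spread the load.
        d = self.next_read
        self.reads[d] += 1
        self.next_read = (d + 1) % self.drives
        return d


m = Mirror()
served_by = [m.read(b) for b in range(6)]  # six consecutive block reads
for b in range(3):
    m.write(b)
print(served_by, m.reads, m.writes)
```

Reads split evenly across the two drives, while each write costs the same on both, which is why the benefit applies to reads and not to writes.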

Also, what block size was used, and what was the stripe size?

Was the block size doubled when striping (as is normally recommended to keep the read size identical)?

Since non-Serial ATA drives were part of the test, why weren't THEY tried in a RAID? That way we could have seen how much of the result came from striping and how much from using two Serial ATA ports.

All in all, a very useless article, I'm afraid.

qquizz - Thursday, July 1, 2004

Hear, hear, what about more ordinary drives?

Kishkumen - Thursday, July 1, 2004

Regarding Intel Application Accelerator, I would also like to know whether it was installed. It seems to me that it could affect performance quite a bit. But perhaps it doesn't make a difference? Either way, I would like to know.

pieta - Thursday, July 1, 2004

It's funny to see ATA and performance mentioned together. If you really want disk performance, get some real SCSI drives. Without tagged command queuing, RAID configurations aren't able to reach their full potential. It would be interesting to see hardware sites measure SCSI performance. Sure, ATA wins on price, but with 15K SCSI spinners so cheap these days, the major cost is the investment in the HBA. With people dropping 500 bucks on a video card, why is it so inconceivable that power users would want to run with the best I/O available?

I was surprised not to see any Iometer benchmarks. IOPS and response times are king in determining disk performance. Iometer is still the best tool, as you can configure workers to match typical workloads.
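Iometer reports IOPS and average response time for a chosen number of outstanding I/Os, and the three are tied together by Little's law: outstanding I/Os = IOPS × average response time. The minimal sketch below uses hypothetical numbers. It shows why queue depth matters: with one request outstanding, IOPS can never exceed 1 / response time, while a drive that reorders a deeper queue (as command queuing aims to) can raise IOPS if response time grows more slowly than the queue.

```python
def iops(outstanding_ios, avg_response_ms):
    """Little's law solved for throughput: IOPS = outstanding I/Os / response time."""
    return outstanding_ios / (avg_response_ms / 1000.0)


def avg_response_ms(outstanding_ios, iops_value):
    """Little's law solved for latency, returned in milliseconds."""
    return outstanding_ios / iops_value * 1000.0


# Hypothetical: one outstanding request, completing in 8 ms on average.
print(iops(1, 8.0))
# Hypothetical: four outstanding requests; if queuing keeps response time
# at 16 ms rather than 32 ms, throughput doubles.
print(iops(4, 16.0))
```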

Show me a review of the latest dual-ported Ultra320 hardware RAID HBA striped across four 15K spinners. Compare that with a two-drive configuration and the SATA stuff. Show me IOPS, response times, and CPU utilization. That would be meaningful, as people could better justify the extra $200-300 cost of going with a real I/O performer.

meccaboy858 - Thursday, July 1, 2004

Nighteye2 - Thursday, July 1, 2004

Of course, RAID 0 makes little sense for Raptors, which are already so fast that they hardly form a bottleneck. RAID 0 makes more sense for slower, cheaper hard disks; try two WD 80GB drives with 8MB cache, for example. Together they are cheaper than a Raptor, but I expect performance will be very similar, if not better.
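This comparison can be framed with a simple first-order model, a sketch under idealized assumptions rather than a prediction. At best, striping multiplies sequential transfer rate by the number of drives. It does not shorten each drive's seek time or rotational latency, and average rotational latency is half a revolution, 0.5 × 60 / RPM seconds. The Raptor spins at 10,000 rpm. The 7,200 rpm figure for the cheaper drives and the 50 MB/s per-drive transfer rate are assumed placeholders, not measured specs.

```python
def avg_rotational_latency_ms(rpm):
    """Average rotational latency: half a revolution, in milliseconds."""
    return 0.5 * 60_000.0 / rpm


def ideal_raid0_sequential_mb_s(per_drive_mb_s, drives):
    """Upper bound for striped sequential transfer: perfect scaling with drive count."""
    return per_drive_mb_s * drives


print(avg_rotational_latency_ms(10_000))     # Raptor: 10,000 rpm
print(avg_rotational_latency_ms(7_200))      # assumed 7,200 rpm desktop drive
print(ideal_raid0_sequential_mb_s(50.0, 2))  # hypothetical 50 MB/s per drive
```

Under this model, a pair of 7,200 rpm disks can outrun one Raptor on long sequential transfers. Each random access, however, still waits longer on average. Which effect dominates depends on the workload.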

Taracta - Thursday, July 1, 2004

I am tired of seeing RAID 0 articles that just throw two disks together, get results contrary to what is expected, and don't dig deeper into what the problem is. I am only posting my comment here because of my respect for this site. Drive technology and methodology have to play a part in any discussion of RAID technology. The principle behind RAID 0 is sound: throughput is a multiple of the number of drives in the array (you will not get 100% scaling, but close to it). When that doesn't happen, we should examine WHY.

One of my suspicions is that incorrect setup of the array is the primary culprit. How is information written to and from the array, and to the individual drives in it? What are the cluster and sector sizes? How does the controller break up the information to be written to the array? Take, for example, an array where each drive has a minimum data size of 64 bits, giving array sizes of 128 bits for 2 drives, 192 bits for 3 drives, and 256 bits for 4 drives. When initializing your array, do you initialize for 64, 128, 192, or 256 bits? Does it matter? Say you initialize for 64 bits: does the array controller write 64 bits to each drive? Does it write 64 bits to the first drive and 0 bits to the other drives (null space that wastes the extra drives and defeats their purpose) because it is expecting the full array size (e.g. 128 bits for 2 drives)? Or does it split the 64 bits between the drives, wasting space and killing performance because each drive allocates a minimum of 64 bits?

I was waiting for someone to examine in detail what's happening. Xbitlabs came so close (judging from their charts) that they could almost taste it, I am sure, but they still jumped to incorrect reasoning. I know I am rambling, but in short, the premise of RAID arrays is sound, so why isn't it showing up in the test results?
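How a controller divides a request can be explored with a sketch of conventional RAID-0 striping. It assumes a hypothetical array in which consecutive chunks of `stripe_unit` bytes rotate across the drives, with sizes in bytes and a hypothetical 64 KB stripe unit. Real controllers and their on-disk layouts may differ. Under this layout, an aligned request no larger than one chunk lands on a single drive with nothing padded onto the others. A larger or misaligned request is split across drives.

```python
def split_request(offset, length, stripe_unit, drives):
    """Split a logical request into (drive, drive_offset, length) pieces.

    Assumes plain RAID-0: logical chunk k lives on drive k % drives,
    at chunk slot k // drives on that drive.
    """
    pieces = []
    end = offset + length
    while offset < end:
        chunk = offset // stripe_unit       # which logical chunk
        within = offset % stripe_unit       # position inside that chunk
        size = min(stripe_unit - within, end - offset)
        drive = chunk % drives
        drive_offset = (chunk // drives) * stripe_unit + within
        pieces.append((drive, drive_offset, size))
        offset += size
    return pieces


KB = 1024
# 64 KB request, 2 drives, 64 KB stripe unit: served by one drive only.
print(split_request(0, 64 * KB, 64 * KB, 2))
# 128 KB request: 64 KB from each drive, read in parallel.
print(split_request(0, 128 * KB, 64 * KB, 2))
# Misaligned 64 KB request: half from each drive.
print(split_request(32 * KB, 64 * KB, 64 * KB, 2))
```

Under these assumptions, two drives only work in parallel when a request spans more than one chunk. This is one reason the stripe size relative to typical request sizes matters.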