NVIDIA's GeForce 6200 & 6600 non-GT: Affordable Gaming

by Anand Lal Shimpi on October 11, 2004 9:00 AM EST - Posted in GPUs

Halo Performance

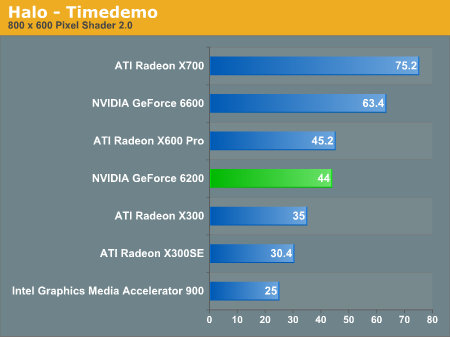

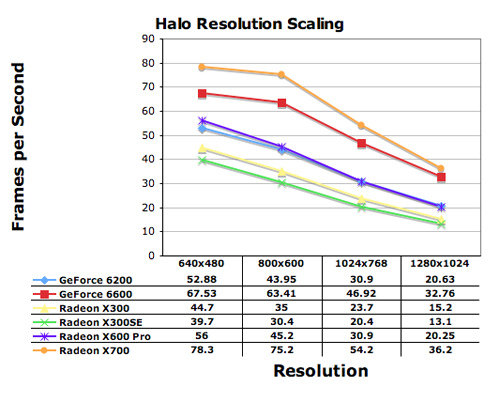

Although Halo 2 is due out next month on the Xbox, the only Halo action PC gamers will be getting is the currently available Halo PC title. It is still fun and widely played thanks to its multiplayer modes, so we still use it as a benchmark. We ran the built-in timedemo with the -use20 switch to force Pixel Shader 2.0 shaders where possible.

The standings in Halo are similar to what we've already seen, with the X700 in the lead and the 6200 performing basically as well as the X600 Pro.

At 640x480, and to a lesser extent at 800x600, there is some CPU limitation, but by 1024x768 most of it has faded away. Once again, regardless of resolution, the GeForce 6200 and Radeon X600 Pro perform identically to one another.

Notes from the Lab

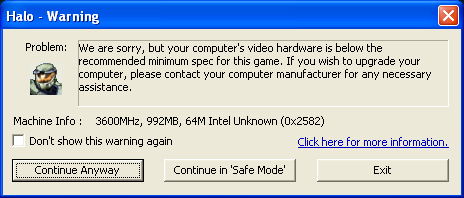

Intel Integrated Graphics: We were greeted with another error when attempting to run the Intel benchmarks:

Luckily, Halo let us continue, and the card actually worked reasonably well. Image quality was clearly inferior to everything else, especially texture filtering; the amount of shimmering in the game was incredible. But if you ask, "Does it work?" and "Can I play the game?", the answer is "Yes." It makes Halo look like a game from 1999, but it works. The warning appears because the integrated graphics core has no built-in vertex processing, which Halo expects, so the vertex work has to be done on the CPU instead. With a fast enough CPU you should be fine, which is what Intel was going for with its integrated graphics architecture to begin with.

44 Comments


PrinceGaz - Tuesday, October 12, 2004

I'm assuming the 6200 you tested was a 128-bit version? You don't seem to mention it at all in the review, but I doubt nVidia would send you a 64-bit model unless they wanted to do badly in the benchmarks :)

I don't think the X700 has appeared in an AT review before, only the X700 XT. Did you underclock your XT, or have you got hold of a standard X700? I trust those X700 results aren't from the X700 XT at full speed! :)

As #11 and #12 mentioned, with the exception of Doom 3, the X600 Pro is faster than the 6200:

| Benchmark | X600 Pro (fps) | 6200 (fps) | X600 Pro vs. 6200 |
|---|---|---|---|
| Doom 3 | 39.3 | 60.1 | -35% |
| HL2 Stress Test | 91 | 76 | +20% |
| SW Battlefront | 45 | 33 | +36% |
| Sims 2 | 33.9 | 32.2 | +5% |
| UT2004 (1024x768)* | 46.3 | 37 | +25% |
| BF Vietnam | 81 | 77 | +5% |
| Halo | 45.2 | 44 | +3% |
| Far Cry | 74.7 | 60.6 | +23% |

*They were CPU limited at lower resolutions.

So the X600 Pro is slower than the 6200 (128-bit) in Doom 3 by a significant amount, but it's marginally faster in three games, and significantly faster in the other three games as well as the HL2 Stress Test. That makes the X600 Pro the better card.
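The percentages in the table come from dividing the X600 Pro's frame rate by the 6200's. Below is a minimal Python sketch of that arithmetic, using the frame rates listed above. The 5% cutoff separating "marginally" from "significantly" faster is an assumption chosen to match the wording of the comment, not a standard threshold.

```python
# Frame rates from the comparison above: (X600 Pro fps, 6200 128-bit fps).
results = {
    "Doom 3": (39.3, 60.1),
    "HL2 Stress Test": (91, 76),
    "SW Battlefront": (45, 33),
    "Sims 2": (33.9, 32.2),
    "UT2004 (1024x768)": (46.3, 37),
    "BF Vietnam": (81, 77),
    "Halo": (45.2, 44),
    "Far Cry": (74.7, 60.6),
}

# Assumption: a lead of 5% or less (after rounding) counts as "marginal".
MARGINAL_PCT = 5.0


def relative_diff_pct(x600: float, gf6200: float) -> float:
    """Percent by which the X600 Pro leads (positive) or trails (negative) the 6200."""
    return (x600 / gf6200 - 1.0) * 100.0


def classify(pct: float) -> str:
    """Bucket a percentage difference using the assumed cutoff."""
    if pct < 0:
        return "6200 faster"
    if round(pct) <= MARGINAL_PCT:
        return "X600 Pro marginally faster"
    return "X600 Pro significantly faster"


for name, (x600, gf6200) in results.items():
    pct = relative_diff_pct(x600, gf6200)
    print(f"{name:18s} {pct:+5.0f}%  {classify(pct)}")
```

Rounded to whole percent, the output reproduces the figures in the table, and the buckets give one win for the 6200, three marginal X600 Pro leads and four significant ones.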

The X700 absolutely thrashed even the 6600, let alone the 6200, in every game except, of course, Doom 3, where the 6600 was faster, and Halo, where the X700 was a bit faster than the 6600 but not by such a large margin.

Given the prices of the ATI cards, X300SE ($75), X300 ($100), X600 Pro ($130) and X700 (MSRP $149), the 6600 is going to have to be priced under its MSRP of $149 because of the far superior X700 at the same price point. Let's say a maximum of $130 for the 6600.

If that's the case, I can't see how the 6200 could have a street price of $149 (128-bit) and $129 (64-bit). How can the 6200 (128-bit) even have the same price as the faster 6600 anyway? It's also outperformed by the $130 X600 Pro, which makes a $149 price ridiculous. I think the 6200 will have to be priced more like the X300 and X300SE, at $100 and $75 for the 128-bit and 64-bit versions respectively, if it is to be successful.
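One crude way to test the pricing argument is frames per second per dollar. The sketch below assumes that metric (it ignores features, noise, availability and everything else besides speed and price) and uses only figures from the comment: $130 for the X600 Pro, the quoted $149 street price for the 128-bit 6200, and the suggested $100 alternative.

```python
# Frame rates from the comparison above: (X600 Pro fps, 6200 128-bit fps).
results = {
    "Doom 3": (39.3, 60.1),
    "HL2 Stress Test": (91, 76),
    "SW Battlefront": (45, 33),
    "Sims 2": (33.9, 32.2),
    "UT2004 (1024x768)": (46.3, 37),
    "BF Vietnam": (81, 77),
    "Halo": (45.2, 44),
    "Far Cry": (74.7, 60.6),
}

X600_PRO_PRICE = 130  # USD, as quoted in the comment


def fps_per_dollar(fps: float, price: float) -> float:
    """Assumed value metric: average frame rate divided by card price."""
    return fps / price


def wins_for_6200(price_6200: float) -> int:
    """Count benchmarks where the 6200 delivers more fps per dollar than the X600 Pro."""
    wins = 0
    for x600_fps, gf6200_fps in results.values():
        if fps_per_dollar(gf6200_fps, price_6200) > fps_per_dollar(x600_fps, X600_PRO_PRICE):
            wins += 1
    return wins


for price in (149, 100):
    print(f"6200 at ${price}: better value in {wins_for_6200(price)} of {len(results)} tests")
```

With these inputs, the 128-bit 6200 wins on frames per dollar only in Doom 3 at $149, but in seven of the eight tests at $100, which is in line with the comment's conclusion.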

Maybe most 6200's will end up being cheap 64-bit cards that are sold to people who aren't really bothered about gaming, or who mistakenly believe the amount of memory is the most important factor. You just have to look at how many 64-bit FX5200's are sold.

Shinei - Tuesday, October 12, 2004

The PT Barnum theory, wilburpan. There's a sucker born every minute, and if they're willing to drop $60 for a 64-bit version of a card when they could have had a 128-bit version, so much the better for profits. The FX5200 continues to be one of the best-selling AGP cards on the market, despite the fact that it's worse than a Ti4200 at playing games, let alone DX9 games.

wilburpan - Tuesday, October 12, 2004

"The first thing to notice here is that the 6200 supports either a 64-bit or 128-bit memory bus, and as far as NVIDIA is concerned, they are not going to be distinguishing cards equipped with either a 64-bit or 128-bit memory configuration."

This really bothers me a lot. If I knew there were two versions of this card, I definitely would want to know which version I was buying.

What would be the rationale for such a policy?
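Why the bus width matters comes down to simple arithmetic: at the same memory clock, theoretical peak bandwidth scales with the width of the bus. A minimal sketch, assuming a hypothetical effective data rate of 500 MT/s (the quoted review text does not give the 6200's memory clock); the 2x ratio holds for any rate.

```python
def peak_bandwidth_gbs(bus_width_bits: int, effective_rate_mts: float) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10**9 bytes).

    Bytes moved per transfer = bus width / 8; multiply by transfers per second.
    """
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_rate_mts * 1e6 / 1e9


# Hypothetical effective memory data rate, for illustration only.
EFFECTIVE_RATE_MTS = 500.0

wide = peak_bandwidth_gbs(128, EFFECTIVE_RATE_MTS)
narrow = peak_bandwidth_gbs(64, EFFECTIVE_RATE_MTS)
print(f"128-bit: {wide:.1f} GB/s, 64-bit: {narrow:.1f} GB/s, ratio {wide / narrow:.1f}x")
```

Whatever the real clock turns out to be, a 64-bit card at the same memory speed has half the peak bandwidth of a 128-bit one, which is why the two versions are worth telling apart.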

nserra - Tuesday, October 12, 2004

Why do you all keep talking about (buying) the GeForce 6600 cards when the X700 was the clear winner? You all want to buy the worse-performing card? I don't understand.

Why doesn't AnandTech use 3DMark05?

No doubt my 9700 was a magnificent buy almost two years ago. What a cheat the GeForce FX line of cards is...

Why didn't they use one (a 5600/5700) just to see...

Even 4-pipeline ATI cards can keep up with 8-pipeline NVIDIA cards; gee, what a mess... old tech, yeah right.

coldpower27 - Tuesday, October 12, 2004

I am very happy you included Sims 2 in your benchmark suite :) I think this game likes the number of vertex processors on the X700, plus its advantage in fillrate and memory bandwidth. Could you please test The Sims 2 on the high-end cards from both vendors when you can? :P

jediknight - Tuesday, October 12, 2004

What I'm wondering is... how do previous-generation top-of-the-line cards stack up against current-generation mainstream cards?

AnonymouseUser - Tuesday, October 12, 2004

Saist, you are an idiot.

"OpenGl was never really big on ATi's list of supported API's... However, adding in Doom3, and the requirement of OGL on non-Windows-based systems, and OGL is at least as important to ATi now as DirectX."

Quake 3, RtCW, HL, CS, CoD, SW:KotOR, Serious Sam (1&2), Painkiller, etc, etc, etc, etc, are OpenGL games. Why would they ONLY NOW want to optimize for OpenGL?

Avalon - Monday, October 11, 2004

Nice review of the budget sector. It's good to see a review from you again, Anand :)

Bonesdad - Monday, October 11, 2004

Affordable gaming??? Not until the AGP 6600 GTs come out... affordable is not replacing your mobo, CPU and video card...