Half Life 2 GPU Roundup Part 1 - DirectX 9 Shootout

by Anand Lal Shimpi on November 17, 2004 11:22 AM EST. Posted in GPUs

Benchmarking Half Life 2

Unlike Doom 3, Half Life 2 has no built-in benchmark demo, but it does have full benchmark functionality. To run a Half Life 2 timedemo you must first modify your Half Life 2 shortcut to include the -console switch, then launch the game.

Once Half Life 2 loads, simply type timedemo followed by the name of the demo file you would like to run. All Half Life 2 demos must reside in the C:\Program Files\Valve\Steam\SteamApps\username\half-life 2\hl2\ directory.

Immediately upon its launch, we spent several hours playing through the various levels of Half Life 2, studying them for performance limitations and for how representative they were of the rest of the game. After our first pass we narrowed the game down to 11 levels that we felt would make good, representative benchmarks of gameplay throughout Half Life 2. We then trimmed the list to just five levels: d1_canals_08, d2_coast_05, d2_coast_12, d2_prison_05 and d3_c17_12, and put together a suite of five demos based on them. You can download a zip of our demos here. As we mentioned earlier, ATI is distributing some of its own demos, but we elected not to use them in order to remain as fair as possible.
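For readers who want to script the steps above, here is a minimal Python sketch that builds the demo directory path and the console commands to type. The directory layout and the timedemo command come from the text; the function names are our own, and reusing the five level names as demo file names is an assumption, since the actual file names in the downloadable zip may differ.

```python
from pathlib import PureWindowsPath

# Steam install root as given in the article.
STEAMAPPS = PureWindowsPath(r"C:\Program Files\Valve\Steam\SteamApps")


def demo_directory(username: str) -> PureWindowsPath:
    """Directory where the article says Half Life 2 demo files must reside."""
    return STEAMAPPS / username / "half-life 2" / "hl2"


def timedemo_commands(demo_names):
    """Build one console command per demo, in the order given."""
    return [f"timedemo {name}" for name in demo_names]


# Assumption: the five level names are reused here as hypothetical demo names.
LEVELS = ["d1_canals_08", "d2_coast_05", "d2_coast_12", "d2_prison_05", "d3_c17_12"]

if __name__ == "__main__":
    # "username" stands in for your own Steam account name.
    print(demo_directory("username"))
    for command in timedemo_commands(LEVELS):
        print(command)
```

Each printed command is meant to be typed into the in-game console once the game has been launched with the -console switch.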

When benchmarking Half Life 2 we discovered a few interesting things:

Half Life 2's performance is generally shader (GPU) limited when outdoors and CPU limited when indoors. This rule of thumb will change if you run at unreasonably high resolutions (resolutions too high for your GPU) or if you have a particularly slow CPU or GPU, but for the most part it holds for any of the present-day GPUs we are comparing here today.
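One rough way to check which case a given demo falls into is to run it at a low and a high resolution and see how much the frame rate drops. The sketch below is a common heuristic, not the method used for this article. The function names and the 10% threshold are assumptions for illustration, and the sample numbers in the comments are made up rather than taken from our results.

```python
def relative_drop(fps_low_res: float, fps_high_res: float) -> float:
    """Fraction of frame rate lost when moving from the low to the high resolution."""
    if fps_low_res <= 0:
        raise ValueError("fps_low_res must be positive")
    return (fps_low_res - fps_high_res) / fps_low_res


def likely_bottleneck(fps_low_res: float, fps_high_res: float,
                      threshold: float = 0.10) -> str:
    """Guess the limiting component from how frame rate scales with resolution.

    If raising the resolution barely changes the frame rate, the GPU has
    headroom and the CPU is probably the limit. The threshold is an
    arbitrary assumption, not a measured value.
    """
    if relative_drop(fps_low_res, fps_high_res) > threshold:
        return "GPU"
    return "CPU"


if __name__ == "__main__":
    # Made-up illustrative frame rates, not measurements from this article.
    print(likely_bottleneck(100.0, 98.0))
    print(likely_bottleneck(100.0, 60.0))
```

A result near the threshold is inconclusive, so treat the label as a hint rather than a diagnosis.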

Using the flashlight can result in a decent performance hit if you are already running close to the maximum load of your GPU. The reason is that the flashlight adds another set of per-pixel lighting calculations to anything you point the light at, thus increasing the length of any shaders running at that time.

The flashlight at work

Levels with water or any other types of reflective surfaces generally end up being quite GPU intensive as you would guess, so we made it a point to include some water/reflective shaders in our Half Life 2 benchmarks.

But the most important thing to keep in mind with Half Life 2 performance is that, interestingly enough, we didn't test a single card today that we felt was slow. Some cards were able to run at higher resolutions, but at a minimum, 1024 x 768 was extremely playable on every single card we compared here today - which is good news for those of you who just upgraded your GPUs or who have made extremely wise purchases in the past.

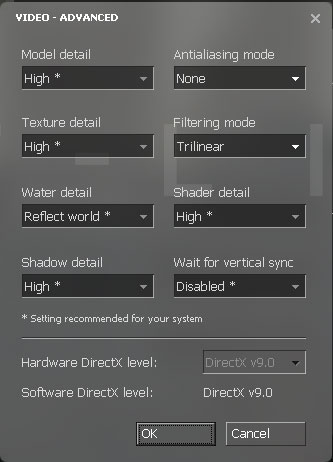

For our benchmarks we used the same settings on all GPUs:

Our test platforms were MSI's K8N Neo2 (nForce3) for AGP cards and ASUS' nForce4 motherboard for PCI Express graphics cards. The two platforms are comparable in performance so you can compare AGP numbers to PCI Express numbers, which was our goal. We used an Athlon 64 4000+ for all of our tests, as well as 1GB of OCZ DDR400 memory running at 2-2-2-10.

79 Comments

Anand Lal Shimpi - Wednesday, November 17, 2004

Thanks for all of the comments guys. Just so you know, I started on Part 2 the minute the first article was done. I'm hoping to be done with testing by sometime tomorrow and then I've just got to write the article. Here's a list of the new cards being tested: 9600XT, 9550, 9700, X300, GF 6200, GF 5900XT, GF4 Ti 4600, GF4 MX440

I'm doing both DX9 and DX8 comparisons, including image quality.

After Part 2 I think I'll go ahead and do the CPU comparison, although I've been thinking about doing a more investigative type of article into Half Life 2 performance in trying to figure out where its performance limitations exist, so things may get shuffled around a bit.

We used the PCI Express 6600GT for our tests, but the AGP version should perform quite similarly.

The one issue I'm struggling with right now is the fact that the X700 XT is still not available in retail, while the X700 Pro (256MB) is. If I have the time I may go back and run some X700 Pro numbers to make this a more realistic present-day comparison.

Any other requests?

Take care,

Anand

Cybercat - Wednesday, November 17, 2004

You guys made my day comparing the X700XT, 6800, and 6600GT together. One question though (and I apologize if this was mentioned in the article and I missed it), did you guys use the PCIe or AGP version of the 6600GT?

Houdani - Wednesday, November 17, 2004

18: Many users rely on hardware review sites to get a feel for what technology is worth upgrading and when. Most of us have financial constraints which preclude us from upgrading to the best hardware, therefore we are more interested in knowing how the mainstream hardware performs.

You are correct that it would not be an efficient use of resources to have AT repeat the tests on hardware that is two or three generations old ... but sampling the previous generation seems appropriate. Fortunately, that's where part 2 will come in handy.

I expect that part 2 will be sufficient in showing whether or not the previous generation's hardware will be a bottleneck. The results will be invaluable for helping me establish my minimum level of satisfaction for today's applications.

stelleg151 - Wednesday, November 17, 2004

forget what i said in 34.....

pio!pio! - Wednesday, November 17, 2004

So how do you softmod a 6800NU to a 6800GT??? or unlock the extra stuff....

stelleg151 - Wednesday, November 17, 2004

What drivers were being used here, 4.12 + 67.02??

Akira1224 - Wednesday, November 17, 2004

Jedi, lol I should have seen that one coming!

nastyemu25 - Wednesday, November 17, 2004

i bought a 9600XT because it came boxed with a free coupon for HL2. and now i can't even see how it matches up :(

coldpower27 - Wednesday, November 17, 2004

These benchmarks are more in line with what I was predicting: the X800 Pro should be equal to the 6800 GT due to similar Pixel Shader fillrate, while the X800 XT should have an advantage at higher resolutions due to its higher fillrate from being clocked higher. Unlike DriverATIheaven :P.

This is great I am happy knowing Nvidia's current generation of hardware is very competitive in performance in all aspects when at equal amounts of fillrate.

Da3dalus - Wednesday, November 17, 2004

In the 67.02 Forceware driver there's a new option called "Negative LOD bias", if I understand what I've read correctly it's supposed to reduce shimmering. What was that option set to in the tests? And how did it affect performance, image quality and shimmering?