NVIDIA nForce Professional Brings Huge I/O to Opteron

by Derek Wilson on January 24, 2005 9:00 AM EST. Posted in CPUs

The New nForce Professional

The nForce Professional marks the fifth core logic offering from NVIDIA, who dubs their motherboard chipsets MCPs (for Media and Communications Processors). Never has the MCP moniker been truer than this time around.

Like the Quadro and GeForce lines, the nForce line is supported by NVIDIA's Unified Driver Architecture (UDA). This means that no matter what hardware you are running, any driver will work, whether past, present, or future. Since NVIDIA brings its UDA to both Windows and Linux, broad corporate support will be available for nForce Pro upon launch.

NVIDIA has also informed us that they have been validating AMD's dual core solutions on nForce Professional before launch as well. NVIDIA wants its customers to know that it's looking to the future, but the statement of dual core validation just serves to create more anticipation for dual core to course through our veins in the meantime. Of course, with dual core coming down the pipe later this year, the rest of the system can't lag behind.

We've seen several good steps in the connectivity department. At the same time, performance and scalability have become more dependent on core logic as functionality moves over to the PCI Express bus, more storage needs SATA connections, and more devices are plugged into USB ports. NVIDIA's unique solution is the combination of single chip core logic with the ability to drop multiple MCPs (of lesser function) on a motherboard for expanded I/O capabilities.

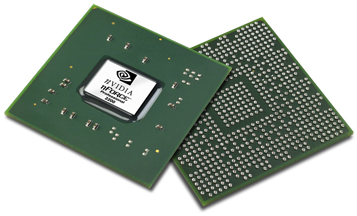

The nForce Professional 2200 MCP

One of the big questions that we first wanted answered was whether or not nForce Pro and nForce 4 Ultra/SLI were the same silicon with different parts turned on/off. NVIDIA maintains that they are different silicon, and it is entirely possible that they are. They did, in fact, give us transistor counts for nForce 4 and nForce Pro:

nForce 4: 22 Million Transistors

nForce Pro: 24 Million Transistors

Economically, it still doesn't make sense to run two different batches of silicon when functionality is so nearly identical, especially when features could just be turned off after the fact. Pro chips don't have the same volume as desktop chips, and desktop chips don't have the same margins as pro silicon. Combining the two allows a company to produce more volume for a single IC (which lowers cost per part) that feeds both high volume and high margin SKUs. Of course, as we saw in our recent article on modding nForce Ultra to SLI, there are some issues with running all your chips from the same silicon. The fact that potential Quadro users have been buying and modding GeForce cards for years speaks to the issue as well. Of course, there's more in a Quadro than just professional performance (build quality and support/service come to mind).

But just because something doesn't make economic sense doesn't mean that we don't want to see it happen. There's just something that doesn't sit right about charging a thousand dollars more for a card that has a few features enabled. We would rather see professional parts be worth their price. Part of that equation is running separate silicon for parts with pro features. We're glad to hear that this is what NVIDIA has said they are doing here.

For now, let's get on to what we do know about the nForce Pro.

55 Comments

ProviaFan - Monday, January 24, 2005 - link

For a long time, making a quad CPU workstation was pretty much not an option, because there was no way to connect an AGP graphics card for good 3D performance (yes, there is a PCI-X Parhelia, and no, that doesn't count). The only one I can remember was an SGI desktop system with 4 PIII's, though maybe the graphics on that were integrated (though of course they weren't bad, unlike Intel's).

Now, with a quad CPU system and PCI-E, it will be possible to do whatever you want with those x16 slots, including using a high-performance graphics card (or two, which is something that used to be reserved for Sun systems with their proprietary graphics connectors). Or, with dual core, you could have a virtually 8-way workstation, though I'm not sure what the benefit of that would be outside of complex scientific calculations or 3D rendering.

The sad part is there's no freakin way that I'll be able to afford that... :(

jmautz - Monday, January 24, 2005 - link

I may have missed it, but I didn't see anything about support for dual-core processors. Was this mentioned? I would love to get a dual-core dual Opt board with all PCIe slots (2x16, 1x4, 4x1 would be nice).

R3MF - Monday, January 24, 2005 - link

update on the Abit DualCPU board:

Chipset

* nVidia CrushK8-04 Pro Chipset

> it does appear to use the nForce4 chipset, so one immediate question springs to mind: why if they can get numa memory on dual CPU boards with the nForce4, can they not do the same with the nForce Pro?

R3MF - Monday, January 24, 2005 - link

what does this mean for the new Abit DualCPU board: http://forums.2cpu.com/showthread.php?s=ef43ac4b9b...

one core-logic chip, yet with NUMA memory. presumably this means it is not an nForce Pro board, if i understand AnandTech's diagrams correctly?

i like the sound of the Abit board:

2x CPU

2x NUMA memory per CPU

2x SLI

4x SATA2 slots

1x GigE with Active Armour (my guess)

best of all i am not paying for stuff i will never use like:

second GigE socket

PCI-X

registered memory

the only thing it lacks is a decent sound solution, but then every nForce4 suffers the same lack. hopefully someone will come out with a decent PCI-E dolby-digital soundcard...............

R3MF - Monday, January 24, 2005 - link

@ 10 - i don't think so. the active armour is a DSP that does the necessary computations to run the firewall, as opposed to letting the CPU do the grunt work.

Illissius - Monday, January 24, 2005 - link

Isn't the TCP offload engine thingy just ActiveArmor with a different name?

R3MF - Monday, January 24, 2005 - link

two comments:

the age of cheap dual opteron speed demons is not yet upon us, because although you only need one 2200 chip to have a dual CPU rig, the second CPU connects via the first, thus you only get 6.4GB bandwidth as opposed to 12.8GB. yes you can pair a 2200 and a 2050 together but i bet they will be very pricey!

the article makes mention of SLI for quadro cards, presumably driver specific to accommodate data sharing over two different PCI-E bridges as opposed to one PCI-E bridge as is the case with nForce4 SLI. this would seem to indicate that regular PCI-E 6xxx series cards will not be able to be used in an SLI configuration on nForce Pro boards, as the ability will not be enabled in the driver. am i right?

DerekWilson - Monday, January 24, 2005 - link

The Intel way of doing PCI Express devices is MUCH simpler. 3 dedicated x8 PCI Express ports on their MCH. These can be split in half and treated as two logical x4 connections or combined into a x16 PEG port. This is easy to approach from a design and implementation perspective.

Aside from NVIDIA having a more complex crossbar that needs to be set up at boot by the BIOS, allowing 20 devices would mean NVIDIA would potentially have to set up and stream data to 20 different PCI Express devices rather than 4 ... I'm not well versed in the issues of PCI Express, or NVIDIA's internal architecture, but this could pose a problem.

There is also absolutely no reason (or physical spec) to have 20 x1 PCI Express lanes on a motherboard ;-)

I could see an argument to have 5 physical connections in case there was a client that simply needed 5 x4 connections. But that's as far as I would go with it.

The only big limitation is that the controllers can't span MCPs :-)

meaning it is NOT possible to have 5 x16 PCI Express connectors on a quad Opteron mobo with the 2200 and 3 2050s. Nor is it possible to have 10 x8 slots. Max bandwidth config would be 8 x8 slots and 4 x4 slots ... or maybe throw in 2 x16, 4 x8, 4 x4 ... That's still too many connectors for conventional boards ... I think I'll add this note to the article.

#7 ... There shouldn't be much reason for MCPs to communicate explicitly with each other. It was probably necessary for NVIDIA to extend the RAID logic to allow it to span across multiple MCPs, and it is possible that some glue logic may have been necessary to allow a boot device to be located on a 2050, for instance. I can't see it being 2M transistors in MCP-to-MCP kind of stuff though.
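The slot limits described in the comment above can be checked mechanically. What follows is only a minimal sketch in Python, not anything from NVIDIA. It assumes the figures mentioned in this thread (20 lanes and at most 4 PCI Express controllers per MCP) apply equally to the 2200 and the 2050, and that a slot must sit entirely on one MCP. The function name `assign_slots` and both constants are hypothetical names chosen for illustration.

```python
from typing import List, Optional

# Assumptions taken from the comment thread, not from a datasheet.
LANES_PER_MCP = 20        # PCI Express lanes available on each MCP
CONTROLLERS_PER_MCP = 4   # maximum number of separate links per MCP


def assign_slots(slot_widths: List[int], mcp_count: int) -> Optional[List[List[int]]]:
    """Place every slot on exactly one MCP, or return None if impossible.

    A slot cannot span MCPs, so each width must fit within the lanes
    and controllers left on a single MCP.
    """
    slots = sorted(slot_widths, reverse=True)  # widest slots first
    mcps: List[List[int]] = [[] for _ in range(mcp_count)]

    def place(i: int) -> bool:
        if i == len(slots):
            return True
        tried = set()
        for mcp in mcps:
            state = tuple(mcp)
            if state in tried:
                continue  # an identically loaded MCP was already tried
            tried.add(state)
            fits_lanes = sum(mcp) + slots[i] <= LANES_PER_MCP
            fits_links = len(mcp) < CONTROLLERS_PER_MCP
            if fits_lanes and fits_links:
                mcp.append(slots[i])
                if place(i + 1):
                    return True
                mcp.pop()  # backtrack and try the next MCP
        return False

    return [list(m) for m in mcps] if place(0) else None


if __name__ == "__main__":
    # One 2200 plus three 2050s, treated here as four identical MCPs.
    print(assign_slots([16] * 5, 4))
    print(assign_slots([8] * 10, 4))
    print(assign_slots([8] * 8 + [4] * 4, 4))
    print(assign_slots([16, 16, 8, 8, 8, 8, 4, 4, 4, 4], 4))
```

Under these assumptions, five x16 slots or ten x8 slots find no placement on four MCPs, while eight x8 plus four x4 slots, or 2 x16 + 4 x8 + 4 x4, do fit. Twenty x1 slots on a single MCP also fail, because of the controller limit rather than the lane count.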

ksherman - Monday, January 24, 2005 - link

I agree with #6... I think the extra transistors would be used to allow all the chips to communicate.

mickyb - Monday, January 24, 2005 - link

One has to wonder what the 2 million extra transistors are for. I would be surprised if it was "just" to allow multiple MCPs. Sounds like a lot of logic. I am also surprised about the 4 physical connector limit. I didn't realize the PCI-e lanes had to be partitioned off like so. I assumed that if there were 20 lanes, they could create up to 20 connectors.