Understanding the Cell Microprocessor

by Anand Lal Shimpi on March 17, 2005 12:05 AM EST- Posted in

- CPUs

High Level Overview of Cell

Cell is just as much of a multi-core processor as the upcoming multi-core CPUs from AMD and Intel, the only difference being that Cell's architecture doesn't have an entirely homogeneous set of cores.

Cell's Execution Cores

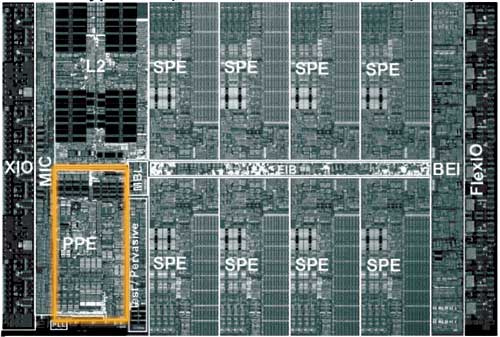

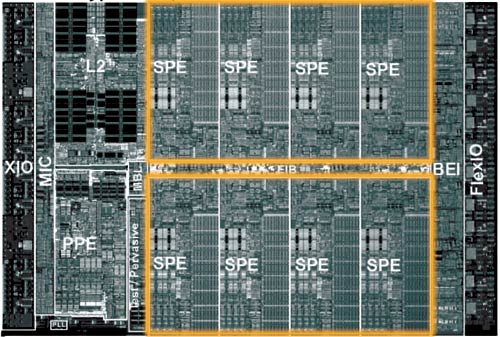

The Cell architecture debuted in a configuration of 9 independent cores: one PowerPC Processing Element (PPE) and eight Synergistic Processing Elements (SPEs). The PPE and SPEs are obviously different, but all eight SPEs are identical to one another.

The PPE is IBM's major contribution to the Cell project; it also appears to be very similar to the core being used in the next Xbox console.

The PPE has a 64KB L1 cache and a 512KB L2 cache, and it supports SMT, similar to Intel's Hyper-Threading. The PPE is a strictly in-order core, something that the desktop x86 market hasn't seen since the death of the original Pentium (the Pentium Pro brought out-of-order execution to the x86 market), so the move to an in-order core is an interesting one. The PPE is also only a 2-issue core, meaning that, at best, it can execute two instructions simultaneously. For comparison, the Athlon 64 is a 3-issue core, so immediately, you get the sense that the PPE is a much simpler core than anything that we have on the desktop. IBM's VMX instruction set (aka Altivec) is also supported by the PPE. Much like the rest of the Cell processor, the PPE is designed to run at very high clock speeds.

There's not much that's impressive about the PPE, other than that it's a small, fast, efficient core. Put up against a Pentium 4 or an Athlon 64, the PPE would undoubtedly lose, but the PPE's architecture is one answer to a shift in the performance paradigm. Performance in business/office applications requires a very powerful, very fast general purpose microprocessor, but performance in a game console, for example, does not. The original Xbox used a modified Intel Celeron processor running at 733MHz, while the fastest desktops had 2.0GHz Pentium 4s and 1.60GHz Athlon XPs. Given that the first implementation of Cell is supposed to be Sony's Playstation 3, the simplicity of the PPE is not surprising. Should Cell ever make its way into a PC, the PPE would definitely have to be beefed up, or at least paired with multiple other PPEs.

The majority of the Cell's die is composed of the eight Synergistic Processing Elements (SPEs). If you consider the PPE to be a general purpose microprocessor, think of the SPEs as general purpose processors with a slightly more specific focus.

The SPEs have no branch predictor, meaning that they rely solely on software branch prediction. There are ways that the compiler can avoid branches, and the SPE architecture lends itself very well to things like loop unrolling. Any elementary programmer is familiar with a loop, where one or more lines of code are repeated until a certain condition is met. The checking of that condition (e.g. i < 100) often results in a branch, so one way of removing that branch is simply to unroll the loop. If you have a statement in a loop that is supposed to execute 100 times, you could either keep it in the loop and execute it that way, or you could remove the loop and simply copy the statement 100 times. The end result is the same - the only difference is that in one case, you have a branch condition while the other case results in more lines of code to execute.
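To make the idea concrete, here is a minimal sketch of loop unrolling in plain C. It is not SPE code, and the function names `sum_rolled` and `sum_unrolled4` are made up for illustration. In practice, a compiler would usually perform this transformation for you, and it typically unrolls by a small factor rather than copying the statement 100 times.

```c
#include <stddef.h>

/* Rolled version: the loop condition (i < n) is checked once per element,
   so there is one conditional branch per element. */
long sum_rolled(const int *data, size_t n) {
    long total = 0;
    for (size_t i = 0; i < n; i++) {
        total += data[i];
    }
    return total;
}

/* Unrolled by four (hypothetical factor chosen for illustration):
   the loop condition is checked once per four elements. */
long sum_unrolled4(const int *data, size_t n) {
    long total = 0;
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        total += data[i];
        total += data[i + 1];
        total += data[i + 2];
        total += data[i + 3];
    }
    /* Cleanup loop for the leftover elements when n is not a multiple of 4. */
    for (; i < n; i++) {
        total += data[i];
    }
    return total;
}
```

Both functions return the same result; the unrolled one simply trades a larger code footprint for fewer branch checks, which is the trade-off described above.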

The problem with loop unrolling is that you need a large number of registers to unroll some loops, which is one reason that each SPE has 128 registers. Originally, the SPEs were supposed to use the VMX (Altivec) ISA, but because of a need for more than 32 architectural registers, the SPEs implemented a new ISA with support for 128 registers.
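As a rough illustration of why unrolling eats registers, consider the sketch below, again in plain C rather than actual SPE code. The function name `sum_four_accumulators` and the unroll factor of four are assumptions made for the example. Each independent accumulator is a value that the compiler would like to keep in its own register for the life of the loop, so unrolling further means more values are live at once.

```c
#include <stddef.h>

/* Unrolled by four with four independent running sums. Because the sums do
   not depend on one another, the four additions in each pass can be
   scheduled freely, but each accumulator needs somewhere to live,
   ideally a register. */
long sum_four_accumulators(const int *data, size_t n) {
    long acc0 = 0, acc1 = 0, acc2 = 0, acc3 = 0;
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        acc0 += data[i];
        acc1 += data[i + 1];
        acc2 += data[i + 2];
        acc3 += data[i + 3];
    }
    /* Combine the partial sums, then handle any leftover elements. */
    long total = acc0 + acc1 + acc2 + acc3;
    for (; i < n; i++) {
        total += data[i];
    }
    return total;
}
```

Scale the same pattern up to a much larger unroll factor, or to a loop body with several temporaries per iteration, and it becomes easier to see how a 32-register file could run out.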

Each SPE is only capable of issuing two instructions per clock, meaning that at best, each SPE can execute two instructions at the same time. The issue width of a microprocessor can determine a big part of how large the microprocessor will be; for example, the Itanium 2 is a 6-issue core, so being a 2-issue core makes each SPE significantly smaller than most general purpose microprocessors.

In the end, what we see with the SPEs is that they sacrifice some of the normal tricks to improve ILP in favor of being able to cram more SPEs onto a single die, effectively sacrificing some ILP for greater TLP. Given the direction that the industry is headed, a move to a very TLP centric design makes a lot of sense, but at the same time, it will be quite dependent on developers adhering to very specific development models.

Clearly, the architects of Cell saw the SPEs as being used to run a highly parallelizable workload, and as Derek Wilson mentioned in his article about AGEIA's PhysX PPU:

"One of the properties of graphics that made the feature a good fit for a specialized processor inside a PC is the fact that the task is infinitely parallelizable. Hundreds of thousands, and even millions of pixels, need to be processed every frame. The more detailed a rendering needs to be, the more parallel the task becomes. The same is true with physics. As with the visual world, the physical world is continuous rather than discrete. The more processing power we have, the more things we can simulate at once, and the more realistically we can approximate the real world."

With NVIDIA supplying some form of a GPU for Playstation 3, Cell's array of SPEs has one definite purpose in a gaming console - physics and AI processing. Many have argued that the array of SPEs seems capable of taking over the pixel processing workload of a GPU, but for a high performance console, that's not much of an option. The SPE array could offer better CPU-based 3D rendering, but it would be a tough sell (no pun intended) for this array of SPEs to be the end of dedicated GPU hardware.

70 Comments


scrotemaninov - Thursday, March 17, 2005 - link

#23: True, but I believe that when the SPEs access the outside memory they go through the cache. Sure it's a lower coherency than we're used to but it's not much worse.

Houdani - Thursday, March 17, 2005 - link

18: Top Drawer Post.

20: Thanks for the links!

fitten - Thursday, March 17, 2005 - link

"Given the speed of the interconnect and the fact that it is cache-coherent,"

Only the PPC core has cache. The individual SPEs don't have cache - they have scratchpad RAM.

#22: I believe the PPC core is a dual issue core that just happens to be 2xSMT.

AndyKH - Thursday, March 17, 2005 - link

Great article.

Anand, could you please clarify something:

I had the impression that the PPE was a SMT processor in the sense that it had to be executing 2 threads in order to issue 2 instructions per clock. In other words: I didn't think the PPE control logic could decide to issue 2 instructions from the same thread at any given clock tick, but rather that it absolutely needed an instruction from each thread to issue two instructions.

After reading the article, I don't assume my impression is right, but a comment from you would be nice.

As I come to think about it, my impression is rather identical to 2 separate single thread in-order cores. :-)

Koing - Thursday, March 17, 2005 - link

Cell looks VERY interesting.

Any of you guys seen Devil May Cry 3 on the PS2? Looks great imo same with T5 and GT4.

Cell at first will be tough like most consoles. BUT eventually THE developers will get around it and make some very solidly good looking games.

Let's hope they are innovative and not just rehashed graphics and nothing else.

Thanks for the great article.

Koing

scrotemaninov - Thursday, March 17, 2005 - link

I really hate just dumping loads of links, but this basically is the available content on the CELL.

http://arstechnica.com/articles/paedia/cpu/cell-1....

http://arstechnica.com/articles/paedia/cpu/cell-2....

http://realworldtech.com/page.cfm?ArticleID=RWT021...

http://www.blachford.info/computer/Cells/Cell0.htm...

http://www.realworldtech.com/page.cfm?ArticleID=RW...

http://www.hpcaconf.org/hpca11/papers/25_hofstee-c...

http://www.hpcaconf.org/hpca11/slides/Cell_Public_... (slides)

mrmorris - Thursday, March 17, 2005 - link

Brilliant article, there are few places for in-depth hardcore technology presentations but Anandtech never fails.

scrotemaninov - Thursday, March 17, 2005 - link

Real concurrency is hard to do for the programmers. It's a real pain to get it right and it's hard to debug. Systematic analysis just gets too complex as there are just too many states, you end up with a huge graph/Markov-model and it's just impossible to solve it tractably.

Superscalar and SMT just try to increase ILP at the CPU level without burdening the programmer or compiler-writer. However, we've pretty much come to the end of getting a CPU to go faster - at 5GHz, LIGHT travels 6cm between clocks, and an electric PD will travel slower. As it is, in the P4 pipeline, there are at least 2 stages which are simply there to allow signals to propagate across the chip. Clearly, going faster in Hz isn't going to make the pipeline go faster.

So the ONLY thing that they can do now is to put lots of cores on the same chip and then we're going to have to deal with real concurrency. IBM/Sony are doing it now with CELL and Intel will do it in a few years. It's going to happen regardless. What we need is languages which can support real concurrency. The Java Memory Model is an almost ideal fit for the CELL, but other aspects don't work out so well, maybe. We need Pi-calculus/Join-calculus constructs in languages to be able to really deal with these cpus efficiently.

Your comments about CELL not being general purpose enough are a little wrong. IBM /already/ has the CELL in workstations and are evaluating applications that will work well. Given the speed of the interconnect and the fact that it is cache-coherent, I think we'll be seeing super-computers based on many CELLs, it's an almost ideal fit (as it is, you've almost got ccNUMA on a single chip). Also, bear in mind that this is IBM's 5th (or 6th?) generation of SMT in the PPE - they've been at it MUCH longer than Intel - IBM started it in the mid-90s around the same time that the Alpha crew were working on the EV8 which was going to have 8-way thread-level parallelism (got canned sadly).

Also, if you look at IBMs heavy CPUs - the POWER5, that has SMT and dispatches in groups of 8 instructions, not the 3/4 that AMD/Intel manage.

What I'm saying here, is that sure, the SPEs don't have BPTs or BTBs, they're all 2-way dispatch and not greater, but, they all run REALLY fast, they have short pipelines (so the pain of the branch misprediction won't be so bad), and, IBM have had software branch prediction available since the POWER4, so they've been at it a few years and must have decided that compilers really can successfully predict branch directions.

Backwards compatibility doesn't matter. Sure, Microsoft took several years to support AMD64 but that didn't stop take up of the platform - everyone just ran Linux on it (well, everyone who wanted to use the 64bit CPU they'd bought). It'll only be a few months after the CELL is out that we'll have to wait until Linux can be built on it. 100quid says Microsoft will never support it.

Frankly, considering that it's far more likely to go into super-computer or workstation environments, no one there gives a damn about backwards compatibility or Windows support. No one in those environments /wants/ a damn paper clip.

Reflex - Thursday, March 17, 2005 - link

#14: Replace 'lazy developers' with 'developers on a budget' and you will have a true statement. It's not an issue of laziness, it's an issue of having the budget to optimize fully for a platform.

GhandiInstinct - Thursday, March 17, 2005 - link

Wow Super CPU and SUPER RAMBUS? AHHHH!

This will replace my computer. PS3 that is.