Ghost Recon Advanced Warfighter Tests

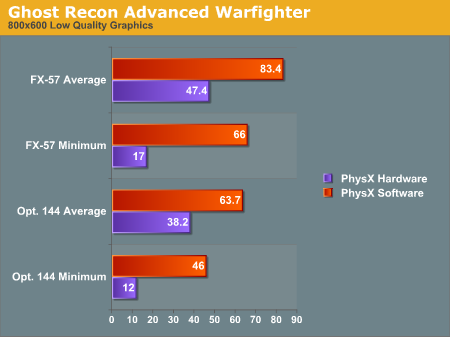

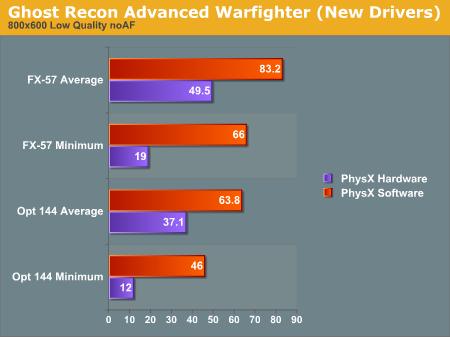

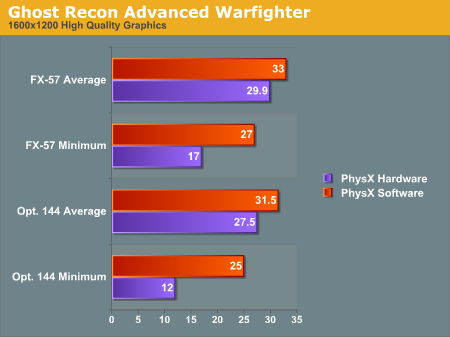

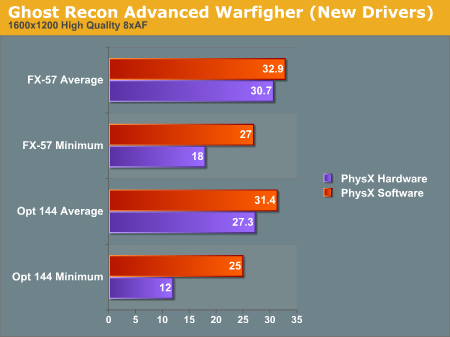

And the short story is that the patch released by AGEIA when we published our previous story didn't really do much to fix the performance issues. We did see an increase in framerate from our previous tests, but the results are less impressive than we were hoping to see (especially with regard to the extremely low minimum framerate). Here are the results from our initial test, as well as the updated results we collected:

There is a difference, but it isn't huge. We are quite impressed with the fact that AGEIA was able to release a driver so quickly after performance issues were made known, but we would like to see better results than this. Perhaps AGEIA will have another trick up their sleeves in the future as well.

Whatever the case, after further testing, it appears our initial assumptions are proving more and more correct, at least with the current generation of PhysX games. There is a bottleneck in the system somewhere near and dear to the PPU. Whether this bottleneck is in the game code, the AGEIA driver, the PCI bus, or on the PhysX card itself, we just can't say at this point. The fact that a driver release did improve the framerates a little implies that at least some of the bottleneck is in the driver. The implementation in GRAW is quite questionable, and a game update could help to improve performance if this is the case.

Our working theory is that there is a good amount of overhead associated with initiating activity on the PhysX hardware. This idea is backed up by a few observations we have made. First, the slowdown occurs right as particle systems or objects are created in the game. After the creation of the PhysX-accelerated objects, framerates seem to smooth out. Second, the demos we have which use the PhysX hardware for everything physics related don't seem to suffer the same problem when blowing things up (as we will demonstrate shortly).
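To illustrate why a one-time creation cost would hurt the minimum framerate far more than the average, here is a toy model in Python. It is only a sketch of the theory, not a measurement: the function names, the 20 ms base frame time, the 0.5 ms per-object setup cost, and the 200-object explosion are all made-up assumptions, not figures from AGEIA or from our benchmarks.

```python
def frame_times_ms(spawns_per_frame, base_ms=20.0, setup_ms_per_object=0.5):
    """Per-frame render times for a toy model with a one-time creation cost.

    base_ms and setup_ms_per_object are hypothetical values chosen only
    to illustrate the shape of the effect.
    """
    return [base_ms + setup_ms_per_object * n for n in spawns_per_frame]


def fps_summary(times_ms):
    """Return (average FPS over the whole run, minimum instantaneous FPS)."""
    average_fps = 1000.0 * len(times_ms) / sum(times_ms)
    minimum_fps = 1000.0 / max(times_ms)
    return average_fps, minimum_fps


# 100 frames; a single explosion spawns 200 objects in frame 50 only.
spawns = [0] * 100
spawns[50] = 200

average_fps, minimum_fps = fps_summary(frame_times_ms(spawns))
print(f"average: {average_fps:.1f} fps, minimum: {minimum_fps:.1f} fps")
```

With these assumed numbers, the average stays close to the 50 fps baseline while the single spawn frame drops into single digits, which is the same pattern as a brief stutter followed by smooth play.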

We don't know enough at this point about either the implementation of the PhysX hardware or the games that use it to be able to say what would help speed things up. It is quite clear that there is a whole lot of breathing room for developers to use. Both the CellFactor demo (now downloadable) and the UnrealEngine 3 demo Hangar of Doom show this fact quite clearly.

67 Comments


hatsurfer - Thursday, May 18, 2006 - link

I just got my card today from Newegg. I only had about an hour to play before I left for work. I wanted to see the effects when I destroyed a building. I played through the first mission on 1600x1200 and my frames stayed a solid 30 with v-sync enabled due to playing on a large LCD. It was pretty nice to see the MANY building parts flying in every direction with the smoke effect. All in all it looked pretty cool and realistic. I am currently gaming on an EVGA 7800 GTX awaiting my 7900 GTX from EVGA's step-up program. I would like to take full advantage of my optimal resolution 1920x1200 but my frames dropped to the 20's. I can only imagine my 7900 GTX will get me my full HD resolution, which I can't sustain on a single 7800 GTX anyway.

I think anyone with a high-end system isn't going to have any hangups when it comes to frame rates. Is this for low end budget gaming systems? Probably not just yet, but neither is the price tag of $300+. So, right now it's a nice little extra piece of eye candy for me to enjoy and in the end that's what is important anyway.

I hope this technology takes off and drives the number of supported titles up and if it gets incorporated into other components so be it (I really hope so too!). We'll just do another upgrade that we're all used to doing every few months anyway. Such it has always been at the cutting edge of PC'ing and such it will always be.

Mabus - Wednesday, May 17, 2006 - link

Well I wonder how long before ATI or Nvidia buys this company and integrates the logic into its GPU. Not only will this allow better performance but it will allow optimisations with drivers and communications and instruction exclusivity. This will be a very nice smaller card, one less space used on the mobo, cooler running, etc etc. Remember the separate math co-pro? Well, this tech will definitely go the same way, it is only a matter of time. And face it, the current benchies show that time is what we need to get that performance where us gamers want it.

Mabus signing off.....

Trisped - Wednesday, May 17, 2006 - link

Why didn’t you compare the stats of the Asus card with those of the BFG card? Sound, power: if they are the same then say so, otherwise what is the point of reviewing two different cards for the same thing?

CoV is an unfair test: Max Physics Debris Count should be the same so you can see if there is a performance boost at the same level. I know that if I give my GPU 1000 more objects it is going to go much slower, with or without the PhysX card. What I want to see is running with 1500 and 422 “Debris” both with and without the card.

I would like to see the tests run with not only 2 different processors, but what about an Intel dual core and what about with different video card configurations? If you have a lower end video card should you expect to have less of an FPS impact or more?

How long before there is a PCIe card? Would there be a performance boost using PCIe? I would think that if the physics and GPU were on the same bus then there would be less latency as well as faster communication. I think the fact that the PhysX card wouldn’t have to wait for the processor to stop sending it info before it started transmitting would also be important to speed.

Spelling/Grammar:

“If spawning lots of effects on the PhysX card makes the system stutter, then it is defeating its won purpose.” Should be own, not won.

VooDooAddict - Wednesday, May 17, 2006 - link

I'm waiting to pass judgment on this tech till after I see reviews with high end Dual core CPUs and a PhysX board connected via PCI-Express.

I just can't see how connecting something like this via PCI was ever going to work. This isn't mainly a one way communication like an audio board during gaming. Send the information needing to be output, the sound board outputs it. My understanding is that the PhysX board also needs to communicate the calculated information back to the CPU and GPU.

Now would be a great time for Nvidia to step up with some new demos for their physics hardware acceleration via SLI. For an extra couple bills ... SLI right now is much more justifiable than this physics hardware. AND SLI is really one of those things that isn't a necessity. With SLI based physics you could run at low physics and max frames for Multiplayer twitch games... just enable hardware physics for Single player and MMOs. I'm hoping that will give you the best of both worlds. I'll be waiting for reviews like that.

DerekWilson - Wednesday, May 17, 2006 - link

You are correct in that PhysX requires two way communication during gameplay in many cases. This hits on why I think the demos run smoother than the games out right now. In GRAW and CoV, data needs to be sent to the PhysX card, the PPU needs to do some work, and data needs to be sent back to the CPU.

In the demos that use the PhysX hardware for everything, as the scene is being set up, data is loaded into the PhysX on-board memory. Interacting with the world requires much less data to be sent to the PPU per frame, as all the objects have already been created, set up and stored locally. This should significantly cut down on traffic and provide less of a performance impact while also enabling more complicated effects and physics.

DerekWilson - Wednesday, May 17, 2006 - link

just going to say again that the above is just a theory

poohbear - Wednesday, May 17, 2006 - link

yea yea yea, so how do we overclock these things? :)

Tephlon - Wednesday, May 17, 2006 - link

I know this doesn't have EXACT bearing on the article, but I thought some readers would be interested.

I now own one of these cards (BFG Physx PPU), and it doesn't seem as bad to me as the benchmarks really make it out to be. I installed the card and played the game with the exact settings I had previously, and didn't FEEL a difference. I haven't straight benchmarked it, though, so the numbers might very well end up similar to AnandTech's findings.

Although it has no real bearing on gameplay, I have noticed more with the addition of the PPU than anyone ever credits. Surrounding cars rock more accurately when nearby explosions erupt, and their suspension and doors move more accurately due to bullets and explosions. Even lightpoles bend and react better than without the card. I even had one explosion that caused the 'cross bars' (not sure what to call them) on top of the lightpole to break loose and swing back and forth for a good 20 seconds until they broke free and hit the ground. It was really neat. I know it's not important stuff, but I can SEE a positive difference with the card, and don't really FEEL the lost performance it's claimed to have. Often I do see the fraps meter on my G15 lcd drop dramatically for a VERY quick instant, but I don't feel this in actual gameplay. I also believe this happened on my rig before I had the PPU. Maybe it's just that I'm not a 'BEST PERFORMANCE EVER' kinda guy. I've heard guys on forums complain about their FPS dropping from 56fps to 51fps. So what. This isn't a run&gun game. My roommate plays co-op with me and fraps tells him he's running 20fps average. It doesn't look it to me, and he doesn't feel it either. An issue with fraps? Maybe. But again my point is at this point the loss of fps (for me) doesn't equal loss of performance, so I'm pleased with the card. I'm also curious for others that own a card to throw up their comments as well.

Again, my findings aren't that SCIENTIFIC, but they're real life. I enjoy it, and REALLY hope they see enough support to get better implementation in future games. I think improved support and tweaked drivers will convince the masses. I hope it happens soon, or it might be a lost cause.

For those interested. My rig.

Asus A8N32-SLI

Corsair XMS 2GB (2 x 1GB) TWINX2048-3500LLPRO

BFG Geforce 7900GTOC x2

BFG Ageia Physx PPU

BFG 650watt PSU

Creative Audigy 2 ZS

NEC Black 16X DVD+R

WD 36gb Raptor

WD 320gb SATA

Tephlon - Wednesday, May 17, 2006 - link

ooops. I guess I probably have a cpu to go with that rig. tehe.

AMD Athlon X2 4400+ Toledo

Toodles.

poohbear - Wednesday, May 17, 2006 - link

are u sure those physics effects aren't already there without the PhysX card? Havok states GRAW already uses the Havok engine to begin w/, PhysX only adds extra particles for grenades and stuff.