BFG PhysX and the AGEIA Driver

Let us begin with the BFG PhysX card itself. The specs are exactly the same as those of the ASUS card we previewed. Specifically, we have:

130nm PhysX PPU with 125 million transistors

128MB GDDR3 @ 733MHz Data Rate

32-bit PCI interface

4-pin Molex power connector

The BFG card has a bonus: a blue LED behind the fan. Our BFG card came in a retail box, pictured here:

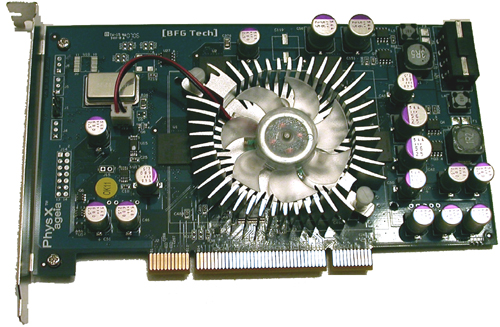

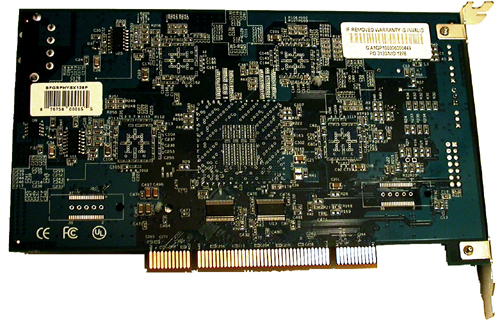

Inside the box, we find CDs, power cables, and the card itself:

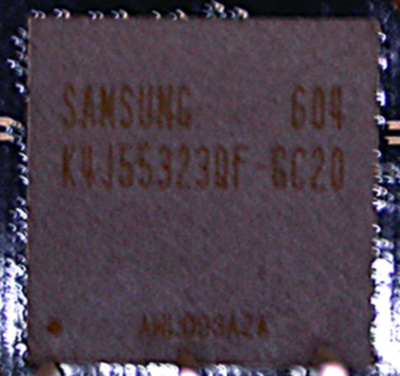

As we can see here, BFG opted to go with Samsung's K4J55323QF-GC20 GDDR3 chips. There are 4 chips on the board, each organized as 4 banks of 2M x 32 bits (256Mbit, or 32MB, per chip). The chips are rated at 2ns, giving a maximum clock speed of 500MHz (1GHz data rate), but the memory clock speed used on current PhysX hardware is only 366MHz (733MHz data rate). The lower-than-rated clock speed may have been chosen to save power and fit a lower thermal envelope. A lower clock speed might also allow board makers to be more aggressive with chip timings, if latency is a larger concern than bandwidth for the PhysX hardware. This is just speculation at this point, but such an approach is certainly not beyond the realm of possibility.
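The memory figures above can be checked with a little arithmetic. The sketch below is only illustrative: the chip organization, the 2ns rating, and the 366MHz clock come from the text, while the 128-bit bus width is our assumption (four 32-bit chips side by side) and is not a number confirmed by AGEIA or BFG. The function names are hypothetical.

```python
# Figures taken from the paragraph above.
CHIPS = 4
BANKS_PER_CHIP = 4
WORDS_PER_BANK = 2 * 2**20      # "2M" addressable words per bank
BITS_PER_WORD = 32
CYCLE_TIME_NS = 2.0             # the -GC20 speed grade
ACTUAL_CLOCK_MHZ = 366

# ASSUMPTION (not from the article): the four 32-bit chips form one 128-bit bus.
ASSUMED_BUS_BITS = CHIPS * BITS_PER_WORD


def chip_capacity_mb():
    """Capacity of one chip in megabytes (2**20 bytes)."""
    bits = BANKS_PER_CHIP * WORDS_PER_BANK * BITS_PER_WORD
    return bits / 8 / 2**20


def rated_clock_mhz(cycle_ns):
    """Maximum clock implied by a cycle-time rating given in nanoseconds."""
    return 1000.0 / cycle_ns


def peak_bandwidth_gb_s(clock_mhz, bus_bits):
    """Theoretical peak bandwidth in GB/s (10**9 bytes) for DDR signalling."""
    transfers_per_second = clock_mhz * 1e6 * 2   # two transfers per clock
    return transfers_per_second * (bus_bits / 8) / 1e9


if __name__ == "__main__":
    print("Per chip (MB):", chip_capacity_mb())
    print("Total (MB):", CHIPS * chip_capacity_mb())
    print("Rated clock (MHz):", rated_clock_mhz(CYCLE_TIME_NS))
    print("Peak GB/s at shipped clock:",
          peak_bandwidth_gb_s(ACTUAL_CLOCK_MHZ, ASSUMED_BUS_BITS))
    print("Peak GB/s at rated clock:",
          peak_bandwidth_gb_s(rated_clock_mhz(CYCLE_TIME_NS), ASSUMED_BUS_BITS))
```

Under the assumed bus width, the gap between the two bandwidth figures gives a rough sense of how much headroom the shipped clock leaves unused.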

The BFG card costs about $300 at major online retailers, but can be found for as low as $280. The ASUS PhysX P1 Ghost Recon Edition is bundled with GRAW for about $340, while the BFG part does not come with any PhysX-accelerated games. It is possible to download a demo of CellFactor now, which does add some value to the product, but until we see more (and much better) software support, we have to recommend that interested buyers take a wait-and-see attitude towards this part.

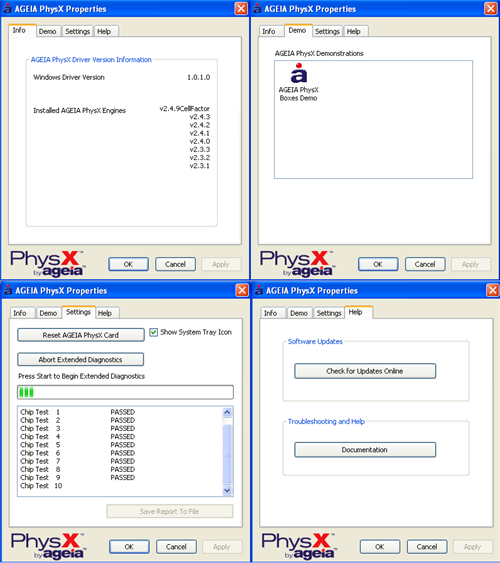

As for software support, AGEIA is constantly working on their driver and pumping out newer versions. The driver interface is shown here:


There isn't much to the user side of the PhysX driver. We see an informational window, a test application, a diagnostic tool to check or reset hardware, and a help page. There are no real "options" to speak of in the traditional sense. The card itself really is designed to be plugged in and forgotten about. This does make it much easier on the end user under normal conditions.

We also tested the power draw and noise of the BFG PhysX card. Here are our results:

Noise (in dB)

Ambient (PC off): 43.4

No BFG PhysX: 50.5

BFG PhysX: 54.0

The BFG PhysX Accelerator does audibly add to the noise. Of course, the noise increase is nowhere near as bad as listening to an ATI X1900 XTX fan spin up to full speed.
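To put the 3.5 dB increase in perspective, here is a minimal sketch of the usual decibel arithmetic. It assumes the readings are sound pressure levels taken at the same microphone position and that the card's fan noise adds incoherently to the rest of the system; neither assumption is stated in our measurements, and the function names are hypothetical.

```python
import math

# Readings in dB, copied from the list above.
AMBIENT_DB = 43.4
WITHOUT_CARD_DB = 50.5
WITH_CARD_DB = 54.0


def power_ratio(delta_db):
    """Ratio of sound power corresponding to a level difference in dB."""
    return 10 ** (delta_db / 10)


def subtract_levels(total_db, background_db):
    """Level of the extra source that, added incoherently to the
    background, would produce the measured total (ASSUMES uncorrelated
    sources and identical measurement conditions)."""
    total_power = 10 ** (total_db / 10)
    background_power = 10 ** (background_db / 10)
    return 10 * math.log10(total_power - background_power)


if __name__ == "__main__":
    increase = WITH_CARD_DB - WITHOUT_CARD_DB
    print("Increase (dB):", round(increase, 1))
    print("Sound power ratio:", round(power_ratio(increase), 2))
    print("Estimated card-only level (dB):",
          round(subtract_levels(WITH_CARD_DB, WITHOUT_CARD_DB), 1))
```

Under these assumptions the card on its own would measure roughly as loud as the rest of the system combined, which is consistent with the increase being clearly audible.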

Idle Power (in Watts)

No Hardware: 170

BFG PhysX: 190

Load Power without Physics Load

No Hardware: 324

BFG PhysX: 352

Load Power with Physics Load

No Hardware: 335

BFG PhysX: 300

At first glance these results can be a bit tricky to understand. The load tests were performed with our low quality Ghost Recon Advanced Warfighter physics benchmark. Our test "without Physics Load" is taken before we throw the grenade and blow up everything, while the "with Physics Load" reading is made during the explosion.

Yes, system power draw (measured at the wall with a Kill-A-Watt) decreases under physics load when the PhysX card is being used. This is all the more odd given that the power draw of the system without a physics card increases during the explosion. Our explanation is quite simple: the GPU is the leading power hog when running GRAW, and it becomes starved for input while the PPU generates its data. This explanation fits well with our observations on framerate in the games we tested: namely, triggering events which use PhysX hardware in current games results in a very brief (yet sharp) drop in framerate. With the system sending the GPU less work per second, less power is required to run the game as well. While we don't know the exact power draw of the PhysX card itself, it is clear from our data that it pulls nowhere near the power that current graphics cards require.
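The differences discussed above are easier to see when computed directly. This is a small sketch using only the wall-socket readings reported earlier on this page; the dictionary layout and function names are ours and purely illustrative.

```python
# Wall-socket readings in watts, copied from the figures above.
READINGS = {
    "idle": {"no_hardware": 170, "physx": 190},
    "load_no_physics": {"no_hardware": 324, "physx": 352},
    "load_physics": {"no_hardware": 335, "physx": 300},
}


def card_delta(scenario):
    """Change in system draw from adding the PhysX card in one scenario."""
    reading = READINGS[scenario]
    return reading["physx"] - reading["no_hardware"]


def explosion_delta(config):
    """Change in system draw when the explosion starts, for one config."""
    return (READINGS["load_physics"][config]
            - READINGS["load_no_physics"][config])


if __name__ == "__main__":
    for scenario in READINGS:
        print(f"{scenario}: card adds {card_delta(scenario):+d} W")
    for config in ("no_hardware", "physx"):
        print(f"{config}: explosion changes draw by "
              f"{explosion_delta(config):+d} W")
```

The card adds 20 W at idle and 28 W under load before the explosion, yet the whole system draws 35 W less than the card-free system during the explosion, which is the reversal the GPU-starvation explanation is meant to account for.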

67 Comments


AndreasM - Wednesday, May 17, 2006

In [some cases](http://www.xtremesystems.org/forums/showthread.php...) the PPU does increase performance. The next version of Ageia's SDK (ETA July) is supposed to support all physics effects in software; ATM liquid and cloth effects are hardware only, which is why some games like Cellfactor can't really run in software mode properly (yet). Hopefully Immersion releases a new version of their demo with official software support after Ageia releases their 2.4 SDK.

UberL33tJarad - Wednesday, May 17, 2006

How come there's never a direct comparison between CPU and PPU using the same physics? Making the PPU do 3x the work and not losing 3x performance doesn't seem so bad. It puts the card in a bad light because 90% of the people who will read this article will skip the text and go straight for the graphs. I know it can't be done in GRAW without different sets of physics (Havok for everything, then Ageia for explosions), but why not use the same Max Physics Debris Count?

Genx87 - Wednesday, May 17, 2006

I still contend it is a GPU limitation from having to render the higher number of objects.

One way to test this is to set up identical systems, one with SLI and the other with a single GPU.

1. Test the difference between the two systems without physics applied so we get an idea of how much the game scales.

2. Then test using identical setups using hardware physics and note if we see any difference. My theory is the number of objects that need to be rendered is killing the GPUs.

There is definitely a bottleneck and it would be great if an article really tried to get to the bottom of it. Is it CPU, PPU or GPU? It doesn't appear that CPU is "that" big an issue, as the difference between the FX57 and Opty 144 isn't that big.

UberL33tJarad - Wednesday, May 17, 2006

Well, that's why I would be very interested if some benchmarks could come out of [this demo](http://pp.kpnet.fi/andreasm/physx/). The low res and lack of effects and textures make it a great example to test CPU vs. PPU strain. One guy said he went from [<5fps to 20fps](http://www.xtremesystems.org/forums/showpost.php?p...), which is phenomenal.

You can run the test in software or hardware mode, and it has 3k objects interacting with each other.

Also, if you want to REALLY strain a system, try [this demo](http://www.novodex.com/rocket/NovodexRocket_V1_1.e...). Some guy on XS tried a 3GHz Conroe and got <3fps.

DigitalFreak - Wednesday, May 17, 2006

Good idea.

maevinj - Wednesday, May 17, 2006

"then it is defeating its won purpose"

should be one

JarredWalton - Wednesday, May 17, 2006

Actually, I think it was supposed to be "own", but I reworded it anyway. Thanks.

Nighteye2 - Wednesday, May 17, 2006

2 things:

I'd like to see a comparison done with an equal level of physics, even if it's the low level of physics. Such a comparison could be informative about the bottlenecks. In CoV you can set the number of particles - do tests at 200 and 400 without the physx card, and tests at 400, 800 and 1500 with the physx card. Show how the physics scale with and without the physx card.

Secondly, do those slowdowns also occur in Cellfactor and UT2007 when objects are created? It seems to me like the slowdown is caused by suddenly having to route part of the data over the PPU, instead of using the PPU for object locations all the time.

DerekWilson - Wednesday, May 17, 2006

The real issue here is that the type of debris is different. Lowering the number on the physx cards still gives me things like packing peanuts, while software never does.

It is still an apples to oranges comparison. But I will play around with this.

darkdemyze - Wednesday, May 17, 2006

Seems there is a lot of "theory" and "ideal advantages" surrounding this card.

Just as the chicken-egg comparison states, it's going to be a tough battle for AGEIA to get this new product going with the lack of support from developers. I seriously doubt many people, even the ones who have the money, will want a product they don't get anything out of besides a few extra boxes flying through the air or a couple of extra grenade shards coming out of the explosion when there is such a decrement in performance.

At any rate, seems like just one more hardware component to buy for gamers. Meh.