BFG PhysX and the AGEIA Driver

Let us begin with the BFG PhysX card itself. The specs are exactly the same as those of the ASUS card we previewed. Specifically, we have:

130nm PhysX PPU with 125 million transistors

128MB GDDR3 @ 733MHz Data Rate

32-bit PCI interface

4-pin Molex power connector

The BFG card has a bonus: a blue LED behind the fan. Our BFG card came in a retail box, pictured here:

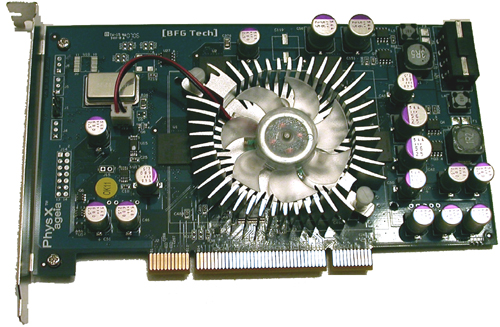

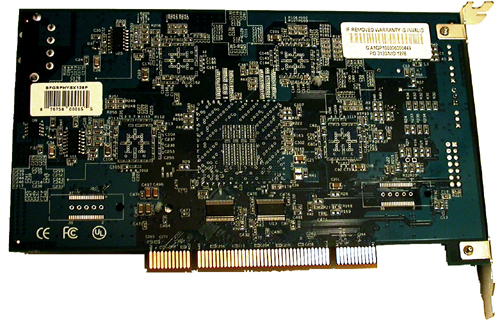

Inside the box, we find CDs, power cables, and the card itself:

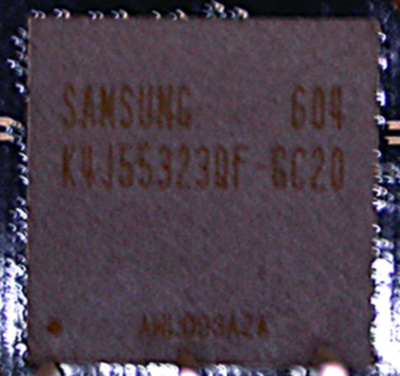

As we can see here, BFG opted to go with Samsung's K4J55323QF-GC20 GDDR3 chips. There are 4 chips on the board, each of which is organized as 4 banks of 2M x 32-bit RAM (32MB per chip, 128MB total). The chips are rated at 2ns, giving a maximum clock speed of 500MHz (1GHz data rate), but the memory clock speed used on current PhysX hardware is only 366MHz (733MHz data rate). The lower-than-rated clock speed may have been chosen to save power and hit a lower thermal envelope. A lower clock speed might also allow board makers to be more aggressive with chip timings if latency is a larger concern than bandwidth for the PhysX hardware. This is just speculation at this point, but such an approach is certainly not beyond the realm of possibility.
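The memory figures above can be checked with a little arithmetic. The following is a minimal sketch, not anything taken from AGEIA or Samsung documentation: the function names are made up for illustration, and the 128-bit bus width is an assumption (four x32 chips all wired in parallel) that the article does not confirm, so the bandwidth numbers are theoretical peaks under that assumption only.

```python
# Chip organization as quoted in the text: 4 banks of 2M words x 32 bits.
BANKS_PER_CHIP = 4
WORDS_PER_BANK = 2 * 2**20
BITS_PER_WORD = 32
CHIPS_ON_BOARD = 4

# ASSUMPTION: all four x32 chips sit side by side on one 128-bit bus.
ASSUMED_BUS_WIDTH_BITS = CHIPS_ON_BOARD * BITS_PER_WORD


def chip_capacity_mb():
    """Capacity of one chip in megabytes (1 MB = 2**20 bytes)."""
    bits = BANKS_PER_CHIP * WORDS_PER_BANK * BITS_PER_WORD
    return bits / 8 / 2**20


def rated_clock_mhz(cycle_time_ns):
    """Maximum clock implied by a cycle-time rating (2 ns -> 500 MHz)."""
    return 1000.0 / cycle_time_ns


def peak_bandwidth_gb_s(data_rate_mhz, bus_width_bits):
    """Theoretical peak bandwidth in GB/s (1 GB = 1e9 bytes)."""
    return data_rate_mhz * 1e6 * bus_width_bits / 8 / 1e9


if __name__ == "__main__":
    print("Per chip (MB):", chip_capacity_mb())
    print("Board total (MB):", chip_capacity_mb() * CHIPS_ON_BOARD)
    print("Rated clock (MHz):", rated_clock_mhz(2.0))
    print("Peak GB/s at 733MHz data rate:",
          peak_bandwidth_gb_s(733, ASSUMED_BUS_WIDTH_BITS))
    print("Peak GB/s at rated 1GHz data rate:",
          peak_bandwidth_gb_s(1000, ASSUMED_BUS_WIDTH_BITS))
```

If the bus-width assumption holds, running the memory at 733MHz instead of the rated 1GHz data rate gives up roughly a quarter of the theoretical peak bandwidth, which is consistent with the idea that bandwidth is not the limiting factor for this part.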

The BFG card costs about $300 at major online retailers, but can be found for as low as $280. The ASUS PhysX P1 Ghost Recon Edition is bundled with GRAW for about $340, while the BFG part does not come with any PhysX accelerated games. It is possible to download a demo of CellFactor now, which does add some value to the product, but until we see more (and much better) software support, we will have to recommend that interested buyers take a wait-and-see attitude towards this part.

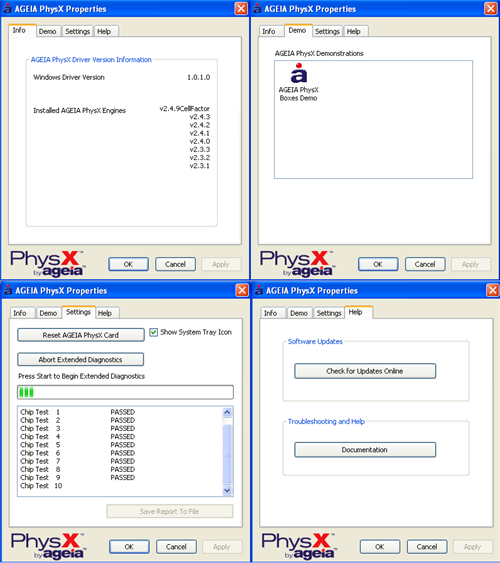

As for software support, AGEIA is constantly working on their driver and pumping out newer versions. The driver interface is shown here:


There isn't much to the user side of the PhysX driver. We see an informational window, a test application, a diagnostic tool to check or reset hardware, and a help page. There are no real "options" to speak of in the traditional sense. The card itself really is designed to be plugged in and forgotten about. This does make it much easier on the end user under normal conditions.

We also tested the power draw and noise of the BFG PhysX card. Here are our results:

| Configuration | Noise (dB) |
|---|---|
| Ambient (PC off) | 43.4 |
| No BFG PhysX | 50.5 |
| BFG PhysX | 54.0 |

The BFG PhysX Accelerator does audibly add to the noise. Of course, the noise increase is nowhere near as bad as listening to an ATI X1900 XTX fan spin up to full speed.
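To put the noise readings in perspective, here is a minimal sketch that converts the measured difference into a sound power ratio. It assumes the readings are ordinary sound level measurements on the standard decibel scale (where +10 dB means ten times the sound power); the article does not state the meter weighting or measurement distance, and the function names are purely illustrative.

```python
# Readings from the article, in dB.
AMBIENT_DB = 43.4
NO_PHYSX_DB = 50.5
WITH_PHYSX_DB = 54.0


def db_increase(before_db, after_db):
    """Difference between two level readings, in dB."""
    return after_db - before_db


def sound_power_ratio(delta_db):
    """Sound power ratio for a level difference (standard dB definition)."""
    return 10 ** (delta_db / 10)


if __name__ == "__main__":
    delta = db_increase(NO_PHYSX_DB, WITH_PHYSX_DB)
    print("Increase with card installed (dB):", delta)
    print("Approximate sound power ratio:", round(sound_power_ratio(delta), 2))
```

Under that assumption, the card adds 3.5 dB, or a bit more than double the radiated sound power of the system. Perceived loudness does not scale linearly with sound power, so this should not be read as "twice as loud".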

| Configuration | Idle Power (W) | Load Power without Physics Load (W) | Load Power with Physics Load (W) |
|---|---|---|---|
| No Hardware | 170 | 324 | 335 |
| BFG PhysX | 190 | 352 | 300 |

At first glance, these results can be a bit tricky to understand. The load tests were performed with our low-quality Ghost Recon Advanced Warfighter physics benchmark. Our test "without Physics Load" is taken before we throw the grenade and blow up everything, while the "with Physics Load" reading is made during the explosion.

Yes, system power draw (measured at the wall with a Kill-A-Watt) decreases under load when the PhysX card is being used. This is made odder by the fact that the power draw of the system without a physics card increases during the explosion. Our explanation is quite simple: the GPU is the leading power hog when running GRAW, and it becomes starved for input while the PPU generates its data. This explanation fits well with our framerate observations in the games we tested: namely, triggering events which use PhysX hardware in current games results in a very brief (yet sharp) drop in framerate. With the system sending the GPU less work per second, less power is required to run the game as well. While we don't know the exact power draw of the PhysX card itself, it is clear from our data that it doesn't pull nearly as much power as current graphics cards require.
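The power readings are easier to reason about as differences. The sketch below simply subtracts the wall readings reported above; the dictionary keys and function names are illustrative. Because these are whole-system measurements taken at the wall, the differences include power supply losses and any change in CPU or GPU activity, so they should not be read as the card's own power draw.

```python
# Wall-socket readings from the article, in watts.
READINGS_W = {
    "idle": {"no_hardware": 170, "bfg_physx": 190},
    "load_no_physics": {"no_hardware": 324, "bfg_physx": 352},
    "load_physics": {"no_hardware": 335, "bfg_physx": 300},
}


def card_delta(state):
    """System power with the card minus system power without it."""
    row = READINGS_W[state]
    return row["bfg_physx"] - row["no_hardware"]


def explosion_delta(config):
    """Change in system power when the physics load kicks in."""
    return (READINGS_W["load_physics"][config]
            - READINGS_W["load_no_physics"][config])


if __name__ == "__main__":
    for state in READINGS_W:
        print(f"{state}: card adds {card_delta(state):+d} W")
    for config in ("no_hardware", "bfg_physx"):
        print(f"{config}: explosion changes draw by {explosion_delta(config):+d} W")
```

The card adds 20W at idle and 28W under load before the explosion. During the explosion, the system without the card climbs by 11W while the system with the card falls by 52W, which is the drop that the GPU starvation explanation is meant to account for.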

67 Comments


phusg - Wednesday, May 17, 2006 - link

> Performance issues must not exist, as stuttering framerates have nothing to do with why people spend thousands of dollars on a gaming rig.

What does this sentence mean? No, really. It seems to try to say more than just, "stuttering framerates on a multi-thousand dollar rig is ridiculous", or is that it?

nullpointerus - Wednesday, May 17, 2006 - link

I believe he means that the card can't survive in the market if it dramatically lowers framerates on even high end rigs.

DerekWilson - Wednesday, May 17, 2006 - link

check plus ... sorry if my wording was a little cumbersome.

QChronoD - Wednesday, May 17, 2006 - link

It seems to me like you guys forgot to set a baseline for the system with the PPU card installed. From the picture that you posted in the CoV test, the number of physics objects looks like it can be adjusted when the AGEIA support is enabled. You should have run a benchmark with the card installed but keeping the level of physics the same. That would eliminate the loading on the GPU as a variable. Doing so would cause the GPU load to remain nearly the same, with the only difference being due to the CPU and PPU taking time sending info back and forth.

Brunnis - Wednesday, May 17, 2006 - link

I bet a game like GRAW actually would run faster if the same physics effects were run directly on the CPU instead of this "decelerator". You could add a lot of physics before the game would start running nearly as bad as with the PhysX card. What a great product...

DigitalFreak - Wednesday, May 17, 2006 - link

I'm wondering the same thing.

"We still need hard and fast ways to properly compare the same physics algorithm running on a CPU, a GPU, and a PPU -- or at the very least, on a (dual/multi-core) CPU and PPU."

Maybe it's a requirement that the developers have to intentionally limit (via the sliders, etc.) how many "objects" can be generated without the PPU in order to keep people from finding out that a dual core CPU could provide the same effects more efficiently than their PPU.

nullpointerus - Wednesday, May 17, 2006 - link

Why would ASUS or BFG want to get mixed up in a performance scam?

DerekWilson - Wednesday, May 17, 2006 - link

Or EPIC with UnrealEngine 3?

Makes you wonder what we aren't seeing here, doesn't it?

Visual - Wednesday, May 17, 2006 - link

so what you're showing in all the graphs is lower performance with the hardware than without it. WTF?

yes i understand that testing without the hardware is only faster because it's running lower detail, but that's not clearly visible from a few glances over the article... and you do know how important the first impression really is.

now i just gotta ask, why can't you test both software and hardware with the same level of detail? that's what a real benchmark should show at least. Can't you request some complete software emulation from AGEIA that can fool the game into thinking the card is present, and turn on all the extra effects? If not from AGEIA, maybe from ATI or nVidia, who seem to have worked on such emulations that even use their GFX cards. In the worst case, if you can't get the software mode to have all the same effects, why not then at least turn off those effects when testing the hardware implementation? In City of Villains for example, why is the software test run with lower "Max Physics Debris Count"? (though I assume there are other effects that get automatically enabled with the hardware present and aren't configurable)

I just don't get the point of this article... if you're not able to compare apples to apples yet, then don't even bother with an article.

Griswold - Wednesday, May 17, 2006 - link

I think they clearly stated in the first article that GRAW, for example, doesn't allow higher debris settings in software mode.

But even if it did, a $300 part that is supposed to be lightning fast and what not, should be at least as fast as ordinary software calculations - at higher debris count.

I really don't care much about apples and oranges here. The message seems to be clear: right now it isn't performing up to snuff, for whatever reason.