The Elder Scrolls IV: Oblivion Performance

While it is disappointing that Oblivion doesn't have a built-in benchmark, our FRAPS tests have proven to be fairly repeatable and very demanding on every part of a system. While these numbers reflect real-world playability of the game, please remember that our test system uses the fastest processor we could get our hands on. If a purchasing decision is to be made using Oblivion performance alone, please check out our two articles on the CPU and GPU performance of Oblivion. We have used the most graphically intensive benchmark in our suite, but the rest of the platform will make a difference. We can still easily demonstrate which graphics card is best for Oblivion even if our numbers don't translate to what our readers will see on their systems.

Running through the forest towards an Oblivion gate while fireballs fly by our head is a very graphically taxing benchmark. In order to run this benchmark, we have a saved game that we load and run through with FRAPS. To start the benchmark, we hit "q", which simply makes the character run forward, and we start and stop FRAPS at predetermined points in the run. While not 100% identical each run, our benchmark scores are usually fairly close. We run the benchmark a couple of times just to be sure there wasn't a one-time hiccup.
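The repeat-run check described above can be sketched in a few lines of Python. This is only an illustration, not the procedure actually used for the article: the function name `summarize_runs`, the 5% tolerance, and the sample frame rates are all assumptions made for the example.

```python
from statistics import median

def summarize_runs(fps_runs, tolerance=0.05):
    """Pick a representative score from repeated runs and flag hiccups.

    fps_runs  -- average frame rate recorded for each run (hypothetical values)
    tolerance -- allowed relative deviation from the median (assumed 5%)
    """
    if not fps_runs:
        raise ValueError("need at least one run")
    # The median is used as the reference so a single bad run cannot skew it.
    reference = median(fps_runs)
    # A run is suspect if it strays from the reference by more than the tolerance.
    suspects = [fps for fps in fps_runs if abs(fps - reference) > tolerance * reference]
    return reference, suspects

# Example with made-up numbers: the third run looks like a one-time hiccup.
reference, suspects = summarize_runs([30.0, 31.0, 20.0])
print(reference, suspects)
```

Using the median rather than the mean is a design choice for this sketch: with only two or three runs, one bad result would otherwise drag the reference value toward itself and make every run look suspect.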

As for settings, we tested a few different configurations and decided on this group of options:

| Oblivion Performance Settings | |
| --- | --- |
| Texture Size | Large |
| Tree Fade | 60% |
| Actor Fade | 20% |
| Item Fade | 10% |
| Object Fade | 25% |
| Grass Distance | 30% |
| View Distance | 100% |
| Distant Land | On |
| Distant Buildings | On |
| Distant Trees | On |
| Interior Shadows | 45% |
| Exterior Shadows | 20% |
| Self Shadows | Off |
| Shadows on Grass | Off |
| Tree Canopy Shadows | Off |
| Shadow Filtering | High |
| Specular Distance | 80% |
| HDR Lighting | On |
| Bloom Lighting | Off |
| Water Detail | Normal |
| Water Reflections | On |
| Water Ripples | On |
| Window Reflections | On |
| Blood Decals | High |
| Anti-aliasing | Off |

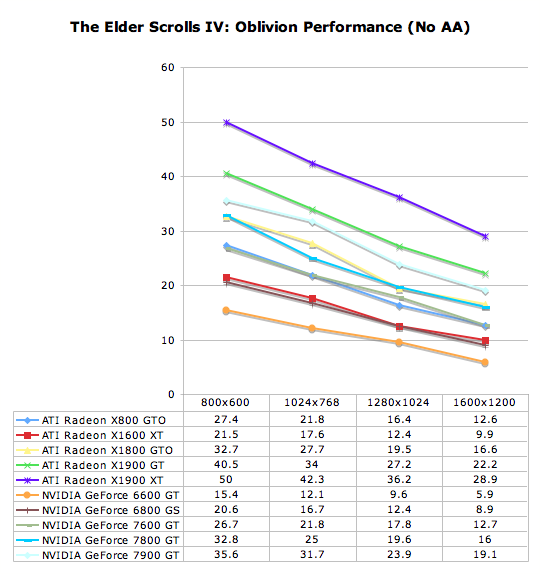

Our goal was to get acceptable performance levels under the current generation of cards at 1280x1024. For the most part we succeeded, but the X1600 XT just wasn't up to the task. Reducing settings for one consistently underperforming card wasn't worth it to us. These settings are also very enjoyable and playable. While more is better in this game, no GPU or CPU is going to give you everything.

While very graphically intensive, and first person, this isn't a twitch shooter. Our experience leads us to conclude that 20fps gives a good experience. It's playable a little lower, but watch out for some jerkiness that may pop up. Getting down to 16fps and below is a little too low to be acceptable. The main point to bring home is that you really want as much eye candy as possible. While Oblivion is an immersive and awesome game from a gameplay standpoint, the graphics certainly help draw the gamer in.
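As a rough illustration of those thresholds, here is a small Python sketch. The function name `classify_playability` and the three labels are hypothetical and exist only for this example; the 20fps and 16fps cutoffs come from the paragraph above and are subjective judgments, not hard limits.

```python
def classify_playability(avg_fps):
    """Map an average frame rate to the rough Oblivion comfort bands above.

    The cutoffs mirror the text: 20fps and up feels good, 16fps and below
    is too low, and anything in between is playable but may be jerky.
    """
    if avg_fps >= 20:
        return "good"
    if avg_fps > 16:
        return "marginal"  # playable, but watch for jerkiness
    return "too low"

# Example with made-up frame rates.
for fps in (25, 18, 16):
    print(fps, classify_playability(fps))
```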

At our target resolution, the 6600 GT is utterly unplayable, but the 7600 GT falls into our passable performance range when running on a Core 2 Extreme X6800. Other than the X1600 XT, ATI cards sweep this test in terms of performance and value. In fact, at 1280x1024, we would recommend turning up a few more settings under the X1900 GT and X1900 XT.

For those of you who want higher settings than what we picked, a lower resolution is likely in order. At 1024x768, the 7900 GT and X1800 GTO gain enough headroom to increase their load and remain playable, while an overclocked 7900 GT may be able to handle a little more at a higher resolution. Generally, resolution matters less than effects in this game, so we would certainly suggest trading resolution for effects. The exception to the rule is that 800x600 starts to look a little too grainy for our tastes. It really isn't necessary to push up to 1600x1200 or beyond, but the X1900 XT does make it possible for those interested.

74 Comments


Sharky974 - Friday, August 11, 2006 - link

I tried comparing numbers for SCCT, FEAR and X3, the problem is Anand didn't bench any of these with AA in this mid-range test, and other sites all use 4XAA as default. So in other words no direct numbers comparison on those three games at least with those two Xbit/FS articles is possible.

Although the settings are different, both FS and Anand showed FEAR as a tossup, though.

It does appear other sites are confirming Anand's results more than I thought though.

And the X1900GT for $230 is a kickass card.

JarredWalton - Friday, August 11, 2006 - link

The real problem is that virtually every level of a game can offer higher/lower performance relative to the average, and you also get levels that use effects that work better on ATI or NV hardware. Some people like to make a point about providing "real world" gaming benchmarks, but the simple fact of the matter is that any benchmark is inherently different from actually sitting down and playing a game - unless you happen to be playing the exact segment benchmarked, or perhaps the extremely rare game where performance is nearly identical throughout the entire game. (I'm not even sure what an example of that would be - Pacman?)

Stock clockspeed 7900GT cards are almost uncommon these days, since the cards are so easy to overclock. Standard clocks are actually supposed to be 450/1360 IIRC, and most cards are at least slightly overclocked in one or both areas. Throw in all the variables, plus things like whether or not antialiasing is enabled, and it becomes difficult to compare articles between any two sources. I tend to think of it as providing various snapshots of performance, as no one site can provide everything. So if we determine X1900 GT is a bit faster overall than 7900 GT and another site determines the reverse, the truth is that the cards are very similar, with some games doing better on one architecture and other games on the other arch.

My last thought is that it's important to look at where each GPU manages to excel. If for example (and I'm just pulling numbers out of the hat rather than referring to any particular benchmarks) the 7900 GT is 20% faster in Half-Life 2 but the X1900 GT still manages frame rates of over 100 FPS, but then the X1900 GT is faster in Oblivion by 20% and frame rates are closer to 40 FPS, I would definitely wait to Oblivion figures as being more important. Especially if you run on LCDs, super high frame rates become virtually meaningless. If you can average well over 60 frames per second, I would strongly recommend enabling VSYNC on any LCD. Of course, down the road we are guaranteed to encounter games that require more GPU power, but predicting what game engine is most representative of the future requires a far better crystal ball than what we have available.

For what it's worth, I would still personally purchase an overclocked 7900 GT over an X1900 GT for a few reasons, provided the price difference isn't more than ~$20. First, SLI is a real possibility, whereas CrossFire with an X1900 GT is not (as far as I know). Second, I simply prefer NVIDIA's drivers -- the old-style, not the new "Vista compatible" design. Third, I find that NVIDIA always seems to do a bit better on brand new games, while ATI seems to need a patch or a new driver release to address performance issues -- not always, but at least that's my general impression; I'm sure there are exceptions to this statement. ATI cards are still good, and at the current price points it's definitely hard to pick a clear winner. Plus you have stuff like the reduced prices on X1800 cards, and in another month or so we will likely have new hardware in all of the price points. It's a never ending rat race, and as always people should upgrade only when they find that the current level of performance they had is unacceptable from their perspective.

arturnowp - Friday, August 11, 2006 - link

I think another advantage of the 7900GT over the X1900GT is power consumption. I haven't checked the numbers on this, so I am not 100% sure.

coldpower27 - Saturday, August 12, 2006 - link

Yes, this is completely true, going by Xbitlab's numbers.

Stock 7900 GT: 48W

eVGA SC 7900 GT: 54W

Stock X1900 GT: 75W

JarredWalton - Friday, August 11, 2006 - link

Speech-recognition + lack of proofing = lots of typos

"... out of a hat..."

"I would definitely weight..."

"... level of performance they have is..."

Okay, so there were only three typos that I saw, but I was feeling anal retentive.

Sharky974 - Friday, August 11, 2006 - link

Not to beat this to death, but here are the X1900GT vs 7900GT benchmarks at FS.

X1900GT:

Wins-BF2, Call of Duty 2 (barely)

Loses-Quake 4, Lock On Modern Air Combat, FEAR (barely),

Toss ups- Oblivion (FS runs two benches, foliage/mountains, the cards split them) Far Cry w/HDR (X1900 takes two lower res benches, 7900 GT takes two higher res benches)

At Xbit's X1900 gt vs 7900 gt conclusion

"The Radeon X1900 GT generally provides a high enough performance in today’s games. However, it is only in 4 tests out of 19 that it enjoyed a confident victory over its market opponent and in 4 tests more equals the performance of the GeForce 7900 GT. These 8 tests are Battlefield 2, Far Cry (except in the HDR mode), Half-Life 2, TES IV: Oblivion, Splinter Cell: Chaos Theory, X3: Reunion and both 3DMarks. As you see, Half-Life 2 is the only game in the list that doesn’t use mathematics-heavy shaders. In other cases the new solution from ATI was hamstringed by its having too few texture-mapping units as we’ve repeatedly said throughout this review."

Xbit review: http://www.xbitlabs.com/articles/video/display/pow...

Geraldo8022 - Thursday, August 10, 2006 - link

I wish you would do a similar article concerning the video cards for HDTV and HDCP. It is very confusing. Even though certain cards might state they are HDCP, it is not enabled.

tjpark1111 - Thursday, August 10, 2006 - link

the X1800XT is only $200 shipped, why not include that card? if the X1900GT outperforms it, then ignore my comment (been out of the game for a while)

LumbergTech - Thursday, August 10, 2006 - link

so you want to test the cheaper gpu's for those who dont want to spend quite as much..ok..well why are you using the cpu you chose then? that isnt exactly in the affordable segment for the average pc user at this point

PrinceGaz - Thursday, August 10, 2006 - link

Did you even bother reading the article, or did you just skim through it and look at the graphs and conclusion? May I suggest you read page 3 of the review, or in case that is too much trouble, read the relevant excerpt-