HD Video Playback: H.264 Blu-ray on the PC

by Derek Wilson on December 11, 2006 9:50 AM EST. Posted in GPUs

X-Men: The Last Stand CPU Overhead

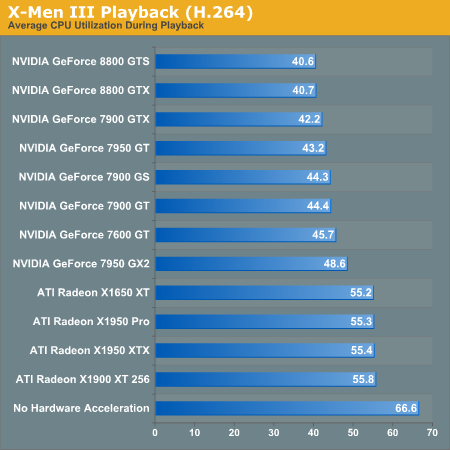

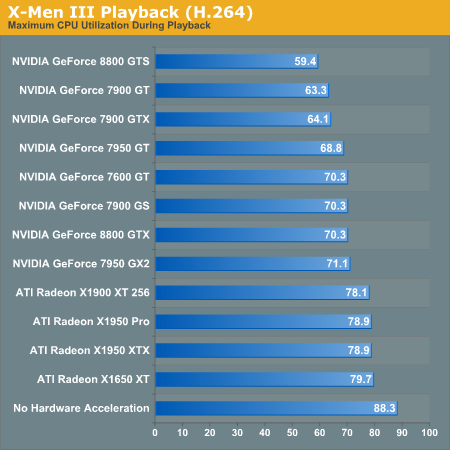

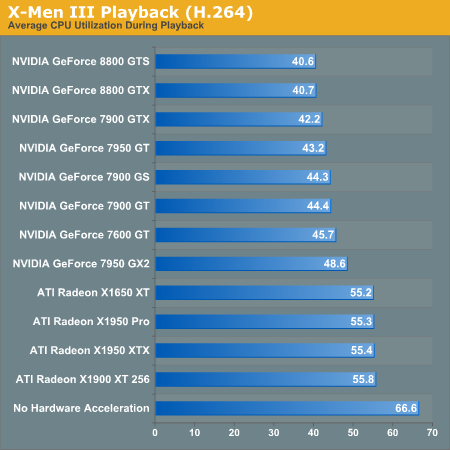

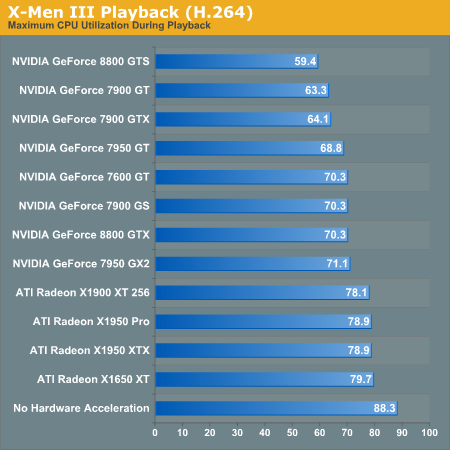

The first benchmark compares the CPU utilization of our X6800 when paired with each of our graphics cards. While we didn't test multiple variations of each card this time, we did test each type at its reference clock speeds. Based on our initial HDCP roundup, we can say that overclocked versions of these NVIDIA cards will show lower CPU utilization. ATI hardware doesn't seem to benefit from higher clock speeds. For reference, we have also included CPU utilization for the X6800 without any help from the GPU.

The leaders of the pack are the NVIDIA GeForce 8800 series cards. While the 7 Series hardware doesn't do as well, we can see that clock speed does affect video decode acceleration with these cards. It is unclear whether this will continue to be a factor with the 8 Series, as the results for the 8800 GTX and GTS don't show a difference.

ATI hardware is very consistent, but it just doesn't reduce CPU utilization as much as NVIDIA hardware does. This is different from what our MPEG-2 tests indicated. We do still see a marked improvement over our unassisted decode performance test, which is good news for ATI hardware owners.

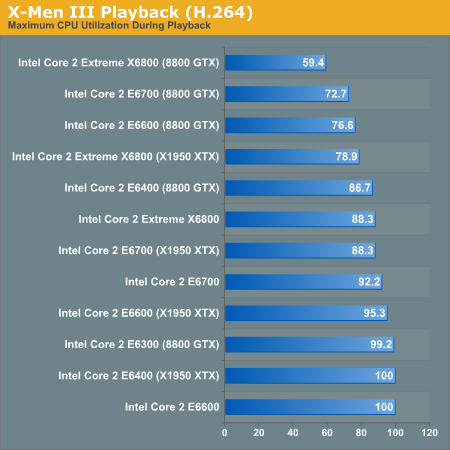

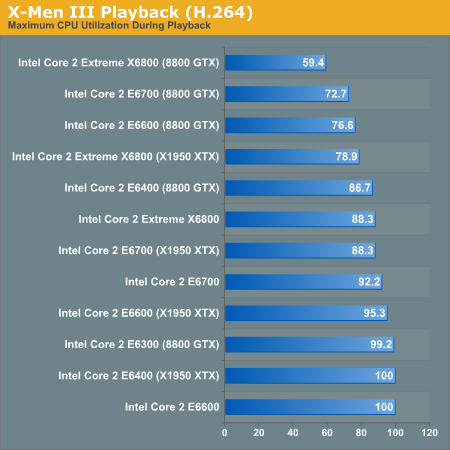

The second test we ran explores how different CPUs perform when decoding X-Men 3. We used NVIDIA's 8800 GTX and ATI's X1950 XTX in order to determine a best and worst case scenario for each processor. The following data is based not on average CPU utilization, but on maximum CPU utilization. This gives us an indication of whether or not any frames have been dropped. If CPU utilization never hits 100%, we should always have smooth video. The analog to max CPU utilization in game testing is minimum framerate: both tell us the worst case scenario.
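To make the difference between the two metrics concrete, here is a minimal Python sketch. It is not the tooling used for these tests: the function name `summarize_utilization` and the sample values are hypothetical, and it assumes utilization was logged as a list of overall CPU percentages at regular intervals.

```python
from statistics import mean


def summarize_utilization(samples, saturation=100.0):
    """Summarize a list of overall CPU utilization samples (percent).

    Hypothetical helper: a peak at or above `saturation` is treated as a
    sign that frames may have been dropped, following the reasoning above.
    """
    if not samples:
        raise ValueError("need at least one utilization sample")
    peak = max(samples)
    return {
        "average": mean(samples),
        "maximum": peak,
        "may_drop_frames": peak >= saturation,
    }


# Made-up sample logs, for illustration only (not measured data).
smooth_run = [62.0, 71.5, 80.0, 68.5]
pegged_run = [62.0, 71.5, 100.0, 68.5]

for name, run in (("smooth", smooth_run), ("pegged", pegged_run)):
    print(name, summarize_utilization(run))
```

The two made-up runs have similar averages, but only the second one touches 100%. That single spike is what the maximum captures and the average hides.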

While only the E6700 and X6800 are capable of decoding our H.264 movie without help, we can confirm that GPU decode acceleration will allow us to use a slower CPU in order to watch HD content on our PC. The X1950 XTX clearly doesn't help as much as the 8800 GTX, but both make a big difference.

86 Comments

DerekWilson - Tuesday, December 12, 2006 - link

CPU utilization would be 50% if a single core was maxed on perfmon --PowerDVD is multithreaded and 100% utilization represents both cores being pegged.

Renoir - Tuesday, December 12, 2006 - link

Any chance of doing a quick test on quad-core to see how many threads powerdvd can generate unless you know already? At the very least it can from what you've said evenly distribute the load across 2 threads which is good.

DerekWilson - Thursday, December 14, 2006 - link

We are looking into this as well, thanks for the feedback.

mi1stormilst - Monday, December 11, 2006 - link

Who the crap cares, stupid movies are freaking dumb gosh! I mean who the crap watches movies on their computers anyway...freaking dorks. LOL!

Sunrise089 - Monday, December 11, 2006 - link

This isn't a GPU review that tests a new game that we know will be GPU limited. This is a review of a technology that relies on the CPU. Furthermore, this is a tech that obviously pushes CPUs to their limit, so the legions of people without Core2Duo based CPUs would probably love to know whether or not their hardware is up to the task of decoding these files. I know any AMD product is slower than the top Conroes, but since the hardware GPU acceleration obviously doesn't directly correspond to GPU performance, is it possible that AMD chips may decode Blu-ray at acceptable speeds? I don't know, but it would have been nice to learn that from this review.

abhaxus - Monday, December 11, 2006 - link

I agree completely... I have an X2 3800+ clocked at 2500mhz that is not about to get retired for a Core 2 Duo.

Why are there no AMD numbers? Considering the chip was far and away the fastest available for several years, you would think that they would include the CPU numbers for AMD considering most of us with fast AMD chips only require a new GPU for current games/video. I've been waiting AGES for this type of review to decide what video card to upgrade to, and anandtech finally runs it, and I still can't be sure. I'm left to assume that my X2 @ 2.5ghz is approximately equivalent to an E6400.

DerekWilson - Tuesday, December 12, 2006 - link

Our purpose with this article was to focus on graphics hardware performance specifically.

Sunrise089 - Tuesday, December 12, 2006 - link

Frankly Derek, that's absurd.

If you only wanted to focus on the GPUs, then why test different CPUs? If you wanted to find out info about GPUs, why not look into the incredibly inconsistent performance, centered around the low correlation between GPU performance in games versus movie acceleration? Finally, why not CHANGE the focus of the review when it became apparent that which GPU one owned was far less important than what CPU you were using?

Was it that hard to throw in a single X2 product rather than leave the article incomplete?

smitty3268 - Tuesday, December 12, 2006 - link

With all due respect, if that is the case then why did you even use different cpus? You should have kept that variable the same throughout the article by sticking with the 6800. Instead, what I read seemed to be 50% about GPU's and 50% about Core 2 Duo's.

I'd really appreciate an update with AMD numbers, even if you only give 1 that would at least give me a reference point. Thanks.

DerekWilson - Thursday, December 14, 2006 - link

We will be looking at CPU performance in other articles.

The information on CPU used was to justify our choice of CPU in order to best demonstrate the impact of GPU acceleration.