ATI Radeon HD 2900 XT: Calling a Spade a Spade

by Derek Wilson on May 14, 2007 12:04 PM EST - Posted in GPUs

Finally: A Design House Talks Cache Size

We're quite used to talking about cache sizes on Intel and AMD CPUs, but graphics hardware has been another story. We've been asking for this information for quite some time, while other sites have taken to writing shader code to come up with educated guesses about how much data fits on die. Today we are very happy to bring you everything you could ever want to know about R600 caches.
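
To give a feel for how such educated guesses work, here is a minimal sketch of the idea in Python. It is a software simulation rather than shader code: it assumes a plain LRU cache and a hypothetical 64-byte line size (neither comes from AMD), sweeps the working set size, and looks for the point where repeated passes stop hitting. On real hardware the same cliff would show up as a jump in fetch latency.

```python
from collections import OrderedDict

def hit_rate(cache_lines, working_set_lines, passes=4):
    """Hit rate of an LRU cache while cycling through a working set.

    The first pass only warms the cache and is not counted.
    """
    cache = OrderedDict()
    hits = accesses = 0
    for p in range(passes + 1):
        for line in range(working_set_lines):
            hit = line in cache
            if hit:
                cache.move_to_end(line)        # mark as most recently used
            else:
                if len(cache) >= cache_lines:
                    cache.popitem(last=False)  # evict least recently used
                cache[line] = True
            if p > 0:
                accesses += 1
                hits += hit
    return hits / accesses

def estimate_cache_lines(hidden_cache_lines, candidates):
    """Largest candidate working set that still hits on every access."""
    fitting = [n for n in candidates if hit_rate(hidden_cache_lines, n) == 1.0]
    return max(fitting)

# Hypothetical setup: a 32 kB cache with 64-byte lines has 512 lines.
LINE_BYTES = 64
hidden_lines = 32 * 1024 // LINE_BYTES
estimate = estimate_cache_lines(hidden_lines, [128, 256, 512, 1024, 2048])
print(f"Estimated cache size: {estimate * LINE_BYTES // 1024} kB")
```

The cliff is sharp here because a cyclic sweep over a working set larger than an LRU cache evicts every line just before it is needed again. Real caches with other replacement policies or associativity limits give a softer transition, which is part of why such measurements remain guesses.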

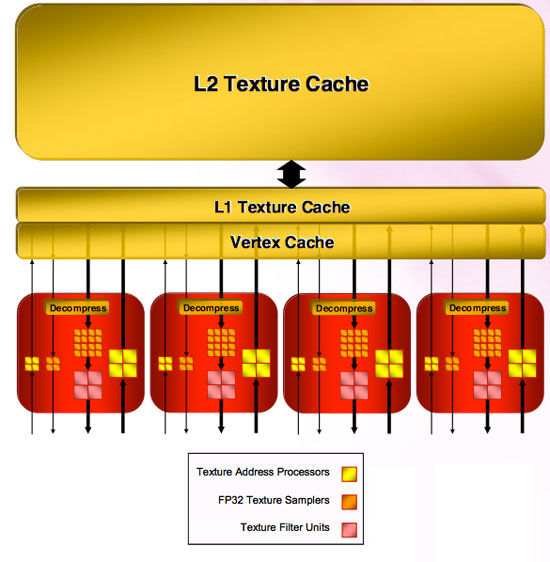

The four texture units are connected to memory through two levels of cache. Unfiltered texture requests go through the Vertex Cache, and filtered requests make use of the L1 Texture Cache. Each of these is a 32 kB read-only cache, and all texture units share them.

Both the L1 Texture Cache and the Vertex Cache are connected to a 256 kB L2 cache. This is the largest cache on the chip, and it will certainly handle quite a bit of data movement given the possibility of 8k x 8k textures going forward.
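
To put those numbers in perspective, the quick calculation below compares the L2 cache with a single 8k x 8k texture. The texel format is our assumption, not something AMD specified: uncompressed 4-byte RGBA8 texels, no mipmaps, and kB read as 1024 bytes.

```python
import math

# Assumptions (not from AMD): uncompressed RGBA8 texels, no mipmaps,
# and "kB" read as 1024 bytes.
BYTES_PER_TEXEL = 4
L2_CACHE_BYTES = 256 * 1024

def texture_bytes(width, height, bytes_per_texel=BYTES_PER_TEXEL):
    """Footprint in bytes of one uncompressed mip level."""
    return width * height * bytes_per_texel

full_texture = texture_bytes(8192, 8192)
l2_texels = L2_CACHE_BYTES // BYTES_PER_TEXEL
l2_tile_side = math.isqrt(l2_texels)

print(f"8k x 8k texture: {full_texture // (1024 * 1024)} MiB")
print(f"L2 holds {l2_texels} texels, e.g. a {l2_tile_side} x {l2_tile_side} tile")
print(f"Texture is {full_texture // L2_CACHE_BYTES}x larger than L2")
```

Under these assumptions the L2 covers roughly a 256 x 256 texel window of such a texture at any one time, so it acts as a staging area for locally reused texels rather than as storage for whole textures. Compressed formats would stretch that window further.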

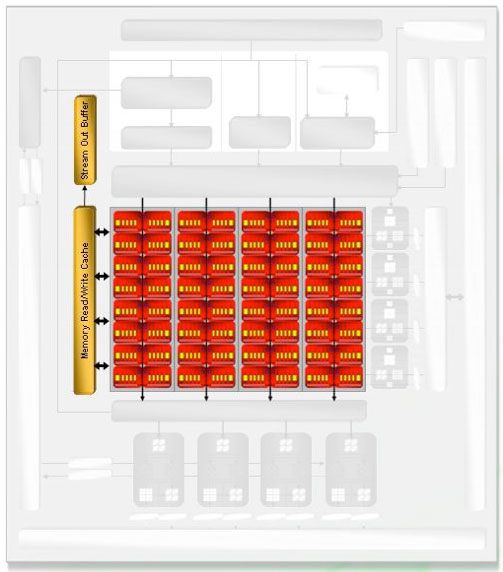

As for the shader hardware, the cache connected to the SIMD units is an 8 kB read / write cache. This cache is used to virtualize register space if necessary and to export data to the stream out buffer (which can be done from any type of thread and can bypass the need to send data to the render back ends). It is also used to accelerate things like render to vertex buffer.

Most of R600's write caches are write-back caches, although we weren't given any specifics on which write caches are not write-back. The impression is that any unit that needs to write out over the memory bus is connected through a write cache that enables write combining to maximize bus utilization, write latency hiding, and short term reuse. We assume that the shader cache (what AMD calls the Memory Read/Write Cache) is also write-back.
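
As a rough illustration of why write combining matters for bus utilization, here is a small Python model. It is a sketch under our own assumptions and not a description of R600's actual design: the 64-byte line size, the four-line buffer, and the least-recently-written eviction policy are all hypothetical, and each write is assumed to fit within a single line.

```python
LINE_BYTES = 64  # hypothetical burst size; AMD did not give one

def bus_transactions_uncombined(writes):
    """Every write goes out over the memory bus as its own transaction."""
    return len(writes)

def bus_transactions_combined(writes, line_bytes=LINE_BYTES, max_lines=4):
    """Count bus transactions with a small write-back, write-combining cache.

    writes: sequence of byte addresses. A write that lands in a line already
    held in the cache is merged; a line costs one transaction when it is
    evicted or flushed at the end.
    """
    buffered = []      # line indices, least recently written first
    transactions = 0
    for addr in writes:
        line = addr // line_bytes
        if line in buffered:
            buffered.remove(line)   # merge the write and refresh recency
        elif len(buffered) == max_lines:
            buffered.pop(0)         # evict the least recently written line
            transactions += 1
        buffered.append(line)
    return transactions + len(buffered)  # final flush of remaining lines

# 256 sequential 4-byte writes covering 1024 bytes (16 lines of 64 bytes).
writes = [i * 4 for i in range(256)]
uncombined = bus_transactions_uncombined(writes)
combined = bus_transactions_combined(writes)
print(f"uncombined: {uncombined} transactions, combined: {combined}")
```

In this toy case the 256 small writes collapse into 16 full-line bursts. The real benefit depends on access patterns and on details of the hardware that we were not given.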

The only thing we are really missing regarding caches is information on the Z/stencil and color caches connected to the render back ends.

86 Comments

johnsonx - Monday, May 14, 2007 - link

and to which are you going to admit to?

What was that old saying about glass houses and throwing stones? Shouldn't throw them in one? Definitely shouldn't throw them if you ARE one!

Puddleglum - Monday, May 14, 2007 - link

You mean, while it does compete performance-wise?

johnsonx - Monday, May 14, 2007 - link

No, I'm pretty sure they mean DOESN'T. That is, the card can't compete with a GTX, yet still uses more power.

INTC - Monday, May 14, 2007 - link

Chadder007 - Monday, May 14, 2007 - link

When will we have the 2600's out in review?? That's the card I'm waiting for.

TA152H - Monday, May 14, 2007 - link

Derek,

I like the fact you weren't mincing your words, except for a little on the last page, but I'll give you a perspective of why it might be a little better than some people will think.

There are some of us, and I am one, that will never buy NVIDIA. I bought one, had nothing but trouble with it, and have been buying ATI for 20 years. ATI has been around for so long, there is brand loyalty, and as long as they come out with something that is competent, we'll consider it against their other products without respect to NVIDIA. I'd rather give up the performance to work with something I'm a lot more comfortable with.

The power though is damning, I agree with you 100% on this. Any idea if these beasts are being made by AMD now, or still by whoever ATI contracted with? AMD is typically really poor in their first iteration of a product on a process technology, but tends to improve quite a bit in succeeding ones. I wonder how much they'll push this product initially. It might be they just get it out to have it out, and the next one will be what is really a worthwhile product. That only makes sense, of course, if AMD is now manufacturing this product. I hope they are, they surely don't need to make any more of their processors that aren't selling well.

One last thing I noticed is the 2400 Pro had no fan! It had a heatsink from Hell, but that will still make this a really attractive product for a growing market segment. Any chance of you guys doing a review on the best fanless cards?

DerekWilson - Wednesday, May 16, 2007 - link

TSMC is manufacturing the R600 GPUs, not AMD.

AnnonymousCoward - Tuesday, May 15, 2007 - link

"I bought one, had nothing but trouble with it, and have been buying ATI for 20 years."

That made me laugh. If one bad experience was all it took to stop you from using a computer component, you'd be left with a PS/2 keyboard at best.

"...to work with something I'm a lot more comfortable with."

Are you more comfortable having 4:3 resolutions stretched on a widescreen? Maybe you're also more comfortable with having crappier performance than nvidia has offered for the last 6 months and counting? This kind of brand loyalty is silly.

MadBoris - Monday, May 14, 2007 - link

As far as your brand loyalty, ATI doesn't exist anymore. Furthermore AMD executives will got the staff so you can't call it the same.

Secondly, Nvidia has been a stellar company providing stellar products. Everyone has some ups and downs. Unfortunately with the hardware and drivers this is ATI's (er AMD's) downs.

This card should do OK in comparison to the GTS, especially as drivers mature. Some reviews show it doing better than the GTS 640 in most tests, so I am not sure where or how the discrepancies are coming about. Maybe hardware compatibility, maybe settings.

rADo2 - Monday, May 14, 2007 - link

Many NVIDIA 8600GT/GTS cards do not have a fan, are available on the market now, and are (probably; different league) much more powerful than 2400 ;) But as you are a fanboy, you are not interested, right?