ATI Radeon HD 2900 XT: Calling a Spade a Spade

by Derek Wilson on May 14, 2007 12:04 PM EST. Posted in GPUs

Finally: A Design House Talks Cache Size

We're quite used to talking about cache sizes on Intel and AMD CPUs, but graphics hardware has been another story. We've been asking GPU makers for these numbers for quite some time, while other sites have taken to writing shader code to come up with educated guesses about how much data fits on die. Today we are very happy to bring you everything you could ever want to know about R600 caches.

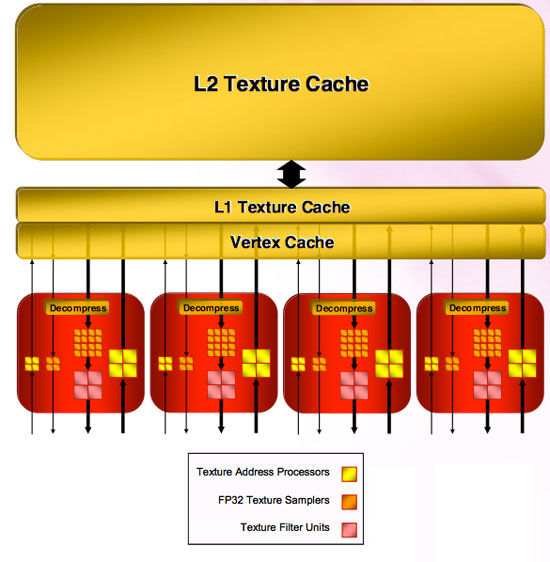

The four texture units are connected to memory through two levels of cache. Unfiltered texture requests go through the Vertex Cache, and filtered requests make use of the L1 Texture Cache. Each of these is a 32kB read-only cache, and both are shared by all texture units.

Both the L1 Texture Cache and the Vertex Cache are connected to a 256kB L2 cache. This is the largest cache on the chip, and it will certainly handle quite a bit of data movement given the possibility of 8k x 8k texture sizes moving forward.
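To put the 8k x 8k figure in perspective, here is a rough back-of-the-envelope sketch in Python. The cache sizes come from the numbers above; the 4 bytes per texel (uncompressed 32-bit RGBA, single mip level) is our own assumption for illustration, and the function names are hypothetical. Compressed formats would shrink the texture considerably.

```python
# Back-of-the-envelope: how an 8k x 8k texture compares with R600's caches.
# Cache sizes are from the article; BYTES_PER_TEXEL is an assumption.

KIB = 1024

L1_TEXTURE_CACHE = 32 * KIB   # read only, per the article
VERTEX_CACHE = 32 * KIB       # read only, per the article
L2_CACHE = 256 * KIB          # largest cache on the chip, per the article

BYTES_PER_TEXEL = 4           # assumption: uncompressed 32-bit RGBA


def texture_bytes(width, height, bytes_per_texel=BYTES_PER_TEXEL):
    """Size of a single mip level, ignoring compression and mipmaps."""
    return width * height * bytes_per_texel


def texels_that_fit(cache_bytes, bytes_per_texel=BYTES_PER_TEXEL):
    """Number of texels a cache of the given size can hold at once."""
    return cache_bytes // bytes_per_texel


if __name__ == "__main__":
    size = texture_bytes(8192, 8192)
    print(f"8k x 8k texture: {size // (KIB * KIB)} MiB")
    print(f"The L2 holds 1/{size // L2_CACHE} of it at a time")
    print(f"The L2 holds {texels_that_fit(L2_CACHE)} texels")
```

Under these assumptions the texture is 256 MiB, so the L2 can hold only a small tile of it at any moment (65,536 texels, or a 256 x 256 region). The cache is there to capture locality between neighboring fetches, not to hold whole textures.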

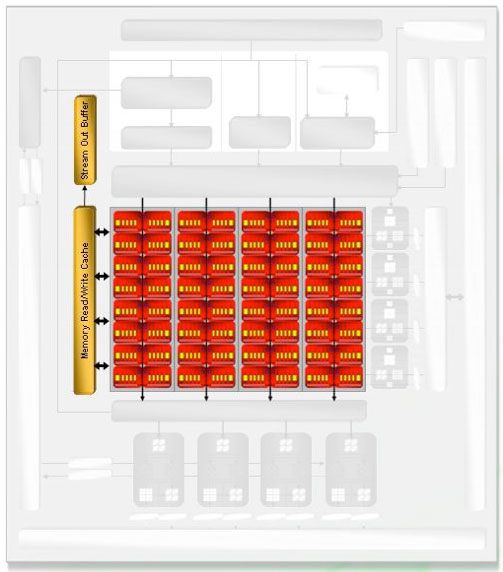

As for the shader hardware, the cache connected to the SIMD units is an 8kB read/write cache. This cache is used to virtualize register space if necessary and to export data to the stream out buffer (which can be done from any type of thread and can bypass the need to send data to the render back ends). It is also used to accelerate things like render to vertex buffer.

Most of R600's write caches are write-back caches, although we weren't given any specifics on which ones are not. The impression is that any unit that needs to write out over the memory bus is connected through a write cache, which enables write combining (to maximize bus utilization), write latency hiding, and short-term reuse. We assume that the shader cache (what AMD calls the Memory Read/Write Cache) is also write-back.
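For readers unfamiliar with write combining, the idea can be sketched with a toy model in Python. This is only an illustration of the general technique, not a description of R600's hardware: the 64-byte line size, the `WriteCombiner` class, and the flush policy are all our own assumptions.

```python
# Toy write-combining buffer. LINE_BYTES and the flush policy are
# illustrative assumptions, not R600 specifics.

LINE_BYTES = 64  # hypothetical burst size on the memory bus


class WriteCombiner:
    def __init__(self):
        self.pending = {}          # line base address -> {offset: byte value}
        self.bus_transactions = 0  # how many times we touched the memory bus

    def write(self, address, value):
        """Buffer a one-byte write instead of sending it straight to memory."""
        line = address - (address % LINE_BYTES)
        self.pending.setdefault(line, {})[address - line] = value

    def flush(self):
        """Send each dirty line to memory as a single bus transaction."""
        self.bus_transactions += len(self.pending)
        self.pending.clear()


if __name__ == "__main__":
    wc = WriteCombiner()
    for addr in range(128):        # 128 one-byte writes covering two lines
        wc.write(addr, addr & 0xFF)
    wc.flush()
    print(wc.bus_transactions)     # 2 transactions instead of 128
```

The same buffering is what allows short-term reuse: a second write to an address that is still pending simply overwrites the buffered value and never reaches the bus on its own.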

The only cache details we are still missing are those for the Z/stencil cache and the color cache attached to the render back ends.

86 Comments


imaheadcase - Tuesday, May 15, 2007

Says who? Most people I know don't care to turn on AA since they visually can't see a difference. Normally only people who are picky about everything they see do; the majority of people don't notice "jaggies" since the brain fixes it for you when you play.

Roy2001 - Tuesday, May 15, 2007

"Says who? Most people I know don't care to turn on AA since they visually can't see a difference."

Wow, I never turn it off now that I am used to having AA. I cannot play games anymore without AA.

Amuro - Tuesday, May 15, 2007

Says who? No one who spent $400 on a video card would turn off AA.

SiliconDoc - Wednesday, July 8, 2009

Boy, we'd sure love to hear those red fans claiming they turn off AA nowadays and it doesn't matter. LOL

It's just amazing how thick it gets.

imaheadcase - Tuesday, May 15, 2007

Sure they do, because it's a small "tweak" with a performance hit. I say, who spends $400 on a video card to remove "jaggies" when they are not noticeable in the first place to most people? It's the same reason most people don't go for SLI or Crossfire: in the end it really offers nothing substantial for most people who play games.

Some might like it, but they would not miss it if they stopped using it for some time. It's not like it's a make-or-break feature of a video card.

motiv8 - Tuesday, May 15, 2007

Depends on the game or player tbh. I play within ladders without AA turned on, but for games like Oblivion I would use AA. Depends on your needs at the time.