More Mainstream DX10: AMD's 2400 and 2600 Series

by Derek Wilson on June 28, 2007 8:35 AM EST - Posted in GPUs

The Test and Power

We will only be looking at DX9 performance under Windows XP today. This is still the platform of choice for gamers, and thus very important to examine. This doesn't mean we are ignoring DX10. We have a follow-up article on DX10 performance coming down the pipe next week. There, we'll take a look at how these cards stack up in the currently available DX10 games and demos.

We are also planning to look at UVD vs. PureVideo in a follow-up article. Video decode is an important feature of these cards, and we are interested in seeing how NVIDIA and AMD hardware stack up against each other. Please stay tuned for this article as well.

For this series of tests, we used the following setup:

Performance Test Configuration:

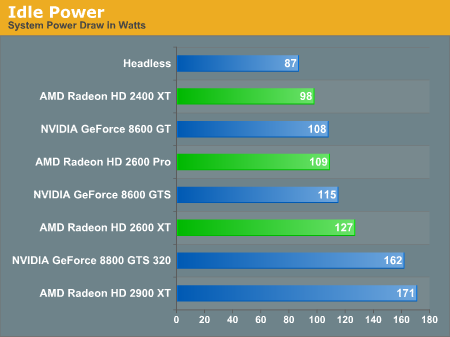

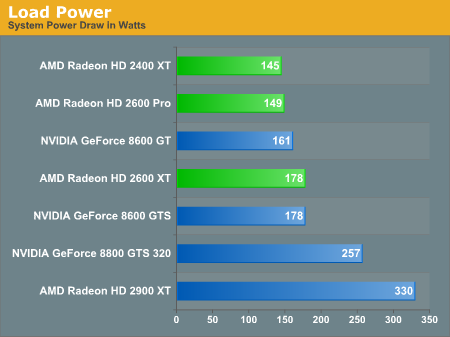

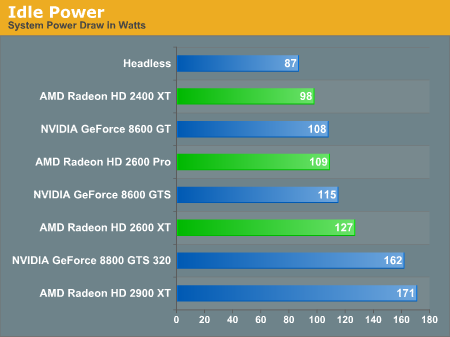

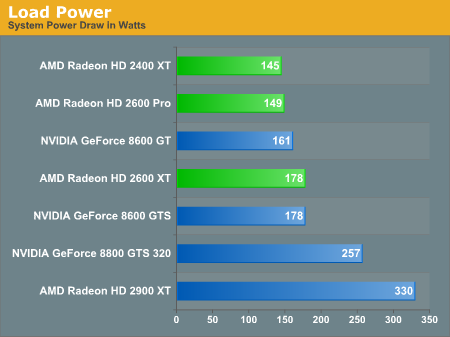

As for power, the 65nm AMD hardware shows rather unimpressive results. At idle, both the 8600 GTS and 8600 GT draw less power than the 2600 XT and 2600 Pro respectively. Under load we see the AMD parts become more competitive in terms of low power. Not even 65nm can help push the 2600 XT past the 8600 GTS in terms of power draw though.

As for our game tests, first we'll take a look at how only the new AMD HD series parts stack up against NVIDIA's 8 series competitors. Following that we'll break down test by game and show performance verses previous and current generation hardware.

We will only be looking at DX9 performance under Windows XP today. This is still the platform of choice for gamers, and thus very important to examine. This doesn't mean we are ignoring DX10. We have a follow-up article on DX10 performance coming down the pipe next week. Here we'll take a look at how these cards stack up against the currently available DX10 games and demos.

We are also planning to look at UVD vs. PureVideo in a follow up article. Video decode is an important feature of these cards and we are interested in seeing how NVIDIA and AMD hardware stacks up against each other. Please stay tuned for this article as well.

For this series of tests, we used the following setup:

Performance Test Configuration:

| CPU: | Intel Core 2 Extreme X6800 (2.93GHz/4MB) |

| Motherboard: | ASUS P5W-DH |

| Chipset: | Intel 975X |

| Chipset Drivers: | Intel 8.2.0.1014 |

| Hard Disk: | Seagate 7200.7 160GB SATA |

| Memory: | Corsair XMS2 DDR2-800 4-4-4-12 (1GB x 2) |

| Video Card: | Various |

| Video Drivers: | ATI Catalyst 8.38.9.1-rc2 NVIDIA ForceWare 158.22 |

| Desktop Resolution: | 1280 x 800 - 32-bit @ 60Hz |

| OS: | Windows XP Professional SP2 |

As for power, the 65nm AMD hardware shows rather unimpressive results. At idle, both the 8600 GTS and 8600 GT draw less power than the 2600 XT and 2600 Pro respectively. Under load we see the AMD parts become more competitive in terms of low power. Not even 65nm can help push the 2600 XT past the 8600 GTS in terms of power draw though.

As for our game tests, first we'll take a look at how only the new AMD HD series parts stack up against NVIDIA's 8 series competitors. Following that we'll break down test by game and show performance verses previous and current generation hardware.

96 Comments


DerekWilson - Thursday, June 28, 2007

I agree that we need to know dx10 performance, which is why we're doing a followup.

I would think it would be clear that, if I were buying a card now, I'd buy a card that performed well under dx9.

All the games I play are dx9, all the games I'll play over the next 6 months will have a dx9 codepath, and we don't have dx10 tests that really help indicate what performance will be like on games designed strictly around dx10.

We always recommend people buy hardware to suit their current needs, because these are the needs we can talk about through our testing better.

TA152H - Thursday, June 28, 2007

OK, that recommendation part is a little scary. You should be balancing the two, because as you know, the future does come. DX9 will exist for the next six months, but there are already games using DX10 that look better than DX9. Plus, Vista surely loves DX10.

But, we can agree to disagree on what's more important. I think this site's backward looking style is obvious, and while I fundamentally disagree with it, at least you guys are consistent in your love for dying technology. Then again, I still prefer Win 2K over XP, so I guess I'm guilty of it too, but in this case my primary concern would be DX10. It's better, noticeably so. But, the main thing is, you're judging something for what it's not made for. AMD's announcement made it very clear that DX10 was the main point, and HD visual effects. Yet you chose to test neither and condemn the hardware for legacy code. Read the announcement, and judge it on what it's supposed to be for. Would you condemn a Toyota Celica because it's not as fast as a Porsche? Or a Corvette because it's got bad fuel economy? I doubt it, because that's not why they were made. Why condemn this part without testing it for what it was for? I didn't see DX9 mentioned anywhere in their announcement. Maybe that was a hint?

Chaotic42 - Thursday, June 28, 2007

Yes, but how many people are going to purchase low-to-mid range cards to play games that aren't coming out for several months?

poohbear - Thursday, June 28, 2007

celica compared to a porsche?!?! dude that analogy is waayyyyyy off. How the hell is a toyota celica supposed to represent DX9 & a porsche DX10?!?! considering a porsche u can see instant results and enjoy it instantly, there's nothing out right now on a DX10 and i dont think even in 3 years the DX10 AP would ever encompass the differences between a celica and a porsche. get over yourself.

KhoiFather - Thursday, June 28, 2007

Wow, what worthless cards! Like does ATI really think people are going to buy this crap? Maybe for a media box and that's about it but for us mid-range gamers, it's worthless! All this hype and wait for nothing I tell ya!

Chadder007 - Thursday, June 28, 2007

Yeah, WTF?? They are all sometimes WORSE than the X1650XT!!! What is going on? According to the specs it should be better, could it be driver issues still??

tungtung - Thursday, June 28, 2007

I don't think drivers alone will help much ... besides, ATI has never really been known to be able to magically put strong numbers out through driver updates.

Personally I'd say the 2xxx line from AMD/ATI has just sunk to the deep abyss. First it was months late, and the performance was light years behind ... all the while the price is just well not right.

As much as I hate saying this ... it seems that we'll have to wait till Intel dips their giant feet into the graphics industry before nVidia and (especially) AMD/ATI wake up and think carefully about their next products (that is if they can bring a competitive product) ... especially in the mainstream and value market.

OrSin - Thursday, June 28, 2007

Very easy to guess what is happening here. Both camps are targeting the high end gamers that switch to Vista and can afford the high end cards. And the OEM cards for deal so they push Vista again on people. Neither company wants to lower their high end sales by releasing a mid-level part. I just wonder if the cards are just more expensive to make for DX10. I don't see a reason they would be, maybe I'm wrong.

Until Vista is used by more gamers my guess is they will not release a mid range card.

Early adopters of software are getting screwed.

DigitalFreak - Thursday, June 28, 2007

Agreed. I couldn't help laughing when I read the Final Words section. Kinda like "The Nvidia 86xx/85xx cards suck, and the ATI 26xx/24xx suck worse!"

WTF happened this generation? The only cards worth their salt are the 88xx series. Nvidia dropped the ball with their low end stuff, and AMD.... well, AMD never really showed up for the game.

smitty3268 - Thursday, June 28, 2007

I think it's clear that with these low end cards, ATI and NVIDIA both came to the conclusion that they could either spend their transistor budget implementing the DX10 spec or adding performance, and they both went with DX10. Probably so they could be marketed as Vista compatible, or whatever. It's still a mystery why they didn't choose to make any midrange cards, as they tend to sell fairly well AFAIK. Perhaps these were meant to be midrange cards and ATI/NVIDIA were just shocked by how badly performance scaled downwards in their current designs, and were forced to reposition them as cheaper cards.