HD Video Decode Quality and Performance Summer '07

by Derek Wilson on July 23, 2007 5:30 AM EST. Posted in GPUs

Serenity (VC-1) Performance

We haven't yet found a VC-1 title to match either of the H.264 titles we tested in complexity or bitrate, so we decided to stick with our tried and true test of Serenity. The main question here is how much real advantage there is to including VC-1 bitstream decoding on the GPU. NVIDIA's claim is that VC-1 bitstream decoding is not as complex as it is under H.264, so offloading it isn't necessary. AMD is pushing their solution as more complete, but does it really matter? Let's take a look.

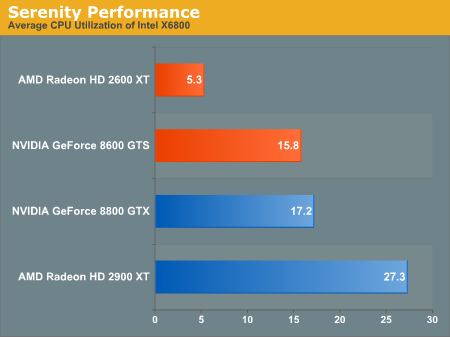

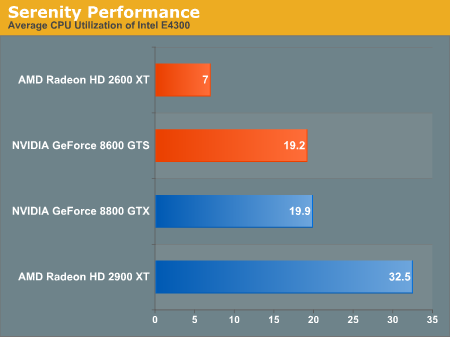

Our HD 2900 XT has the highest CPU utilization, while the 8600 GTS and 8800 GTS share roughly the same performance. The HD 2600 XT leads the pack with an incredibly low CPU overhead of just 5 percent. This is probably approaching the minimum overhead of AACS handling and disk accesses through PowerDVD, which is very impressive. At the same time, the savings with GPU bitstream decode are not as impressive under VC-1 as on H.264 on the high end.

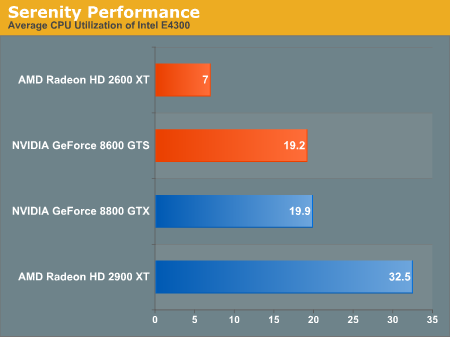

Dropping down in processor power doesn't heavily impact CPU overhead in the case of VC-1.

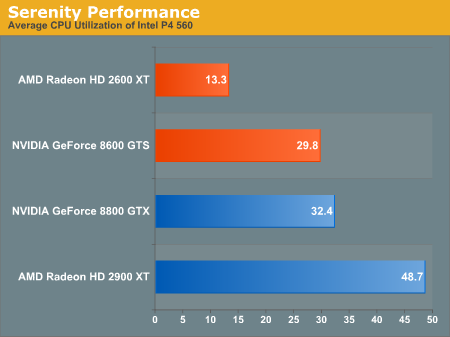

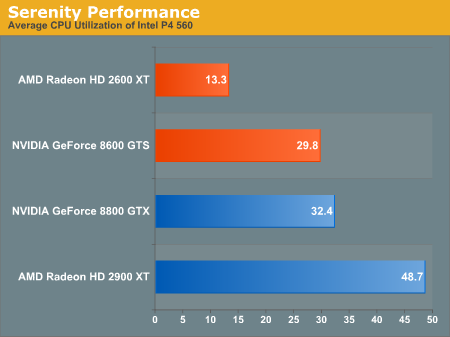

Moving all the way down to a Pentium 4 based processor, we do see higher CPU utilization across the board. The difference isn't as great as under H.264, and VC-1 movies appear to remain very playable on this hardware even without bitstream decoding on the GPU. This is not the case for our H.264 movies. While we wouldn't recommend it with the HD 2900 XT, we could even consider looking at a (fairly fast) single core CPU with the other hardware, with or without full decode acceleration.
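For readers who want to run the same kind of comparison on their own hardware, here is a minimal sketch of the arithmetic involved: average and peak CPU utilization for a playback run, and the percentage-point saving between two configurations. The function names and the sample readings are placeholders for illustration only; they are not measurements from this article, and this is not necessarily how our numbers were collected.

```python
from statistics import mean


def summarize(samples):
    """Return (average, peak) CPU utilization in percent for one playback run."""
    if not samples:
        raise ValueError("need at least one utilization sample")
    return mean(samples), max(samples)


def savings(baseline, accelerated):
    """Percentage-point drop in average utilization from baseline to accelerated."""
    return summarize(baseline)[0] - summarize(accelerated)[0]


# Placeholder readings (percent), NOT data from this article.
without_bitstream_decode = [22.0, 25.0, 31.0, 24.0]
with_bitstream_decode = [5.0, 6.0, 8.0, 5.0]

avg, peak = summarize(with_bitstream_decode)
print(f"average {avg:.1f}%, peak {peak:.1f}%")
print(f"saved {savings(without_bitstream_decode, with_bitstream_decode):.1f} points")
```

Looking at the peak alongside the average matters for playability: a low average can still hide moments where a slower CPU runs out of headroom.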

63 Comments

bpt8056 - Monday, July 23, 2007 - link

Does it have HDMI 1.3??

phusg - Monday, July 23, 2007 - link

Indeed, which makes it strange that he gave the nvidia cards 100% scores! Sure manual control on the noise filter is nice, but 100% is 100% Derek. It working badly when set above 75% makes for a less than perfect HQV score IMHO. Personally I would have gone with knocking off 5 points from the nvidia card's noise scores for this.

Scrogneugneu - Monday, July 23, 2007 - link

I would have cut points back too, but not because at 100% the image quality goes down. There's no sense in providing a slider if every position on the slider gives the same perfect image, doesn't it?

Giving a slider, however, isn't very user-friendly, from an average Joe's perspective. I want to dump my movie in the player and listen to it, and I want it to look great. I do not want to move a slider around for every movie to get a good picture quality. Makes me think about the Tracking on old VHS. Quite annoying.

From a technological POV, yes, NVidia's implementation enables players to be great. From a consumer's POV, it doesn't. I wanna listen to a movie not fine tune my player.

Chunga29 - Monday, July 23, 2007 - link

It's all about the drivers, people! TechReport did their review with older drivers (at least on the NVIDIA side). So in the past two weeks, NVIDIA apparently addressed some problems and AT took a look at the current results. Probably delayed the article a couple times to rerun tests as well, I bet!

As for the above comment about the slider, what you're failing to realize is that noise reduction impacts the final output. I believe Sin City used a lot of noise intentionally, so if you watch that on ATI hardware the result will NOT be what the director wanted. A slider is a bit of a pain, but then being a videophile is also a pain at times. With an imperfect format and imperfect content, we will always have to deal with imperfect solutions. I'd take NVIDIA here as well, unless/until ATI offers the ability to shut off NR.

phusg - Monday, July 23, 2007 - link

Hi Derek,

Nice article, although I've just noticed a major omission: you didn't bench any AGP cards! There are AGP versions of the 2600 and 2400 cards and I think these are very attractive upgrades for AGP HTPC owners who are probably lacking the CPU power for full HD. The big question is whether the unidirectional AGP bus is up to the HD decode task. The previous generation ATi X1900 AGP cards reportedly had problems with HD playback.

Hopefully you'll be able to look into this, as AFAIK no-one else has yet.

Regards, Pete

ericeash - Monday, July 23, 2007 - link

i would really like to see these tests done on an AMD x2 proc. the core 2 duo's don't need as much offloading as we do.

Orville - Monday, July 23, 2007 - link

Derek,

Thanks so much for the insightful article. I've been waiting on it for about a month now, I guess. You or some reader could help me out with a couple of embellishments, if you would.

1. How much power do the ATI Radeon HD 2600 XT, Radeon HD 2600 Pro, Nvidia GeForce 6800 GTS and GeForce 6800 GT graphics cards burn?

2. Do all four of the above mentioned graphics cards provide HDCP for their DVI output? Do they provide simultaneous HDCP for dual DVI outputs?

3. Do you recommend CyberLink's Power DVD video playing software, only?

Regards,

Orville

DerekWilson - Monday, July 23, 2007 - link

we'll add power numbers tonight ... sorry for the omission

all had hdcp support, not all had hdcp over dual-link dvi support

powerdvd and windvd are good solutions, but powerdvd is currently further along. we don't recommend it exclusively, but it is a good solution.

phusg - Wednesday, July 25, 2007 - link

I still can't see them, have they been added? Thanks.

GlassHouse69 - Monday, July 23, 2007 - link

I agree here, good points.

15% cpu utilization looks great until.... you find that a e4300 takes so little power that to use 50% of it to decode is only 25 watts of power. It is nice seeing things offloaded from the cpu.... IF the video card isnt cranking up alot of heat and power.