Intel's 45nm Dual-Core E8500: The Best Just Got Better

by Kris Boughton on March 5, 2008 3:00 AM EST - Posted in CPUs

Determining a Processor Warranty Period

Like most electrical parts, a CPU's design lifetime is often measured in hours, or more specifically as the product's mean time to failure (MTTF), which is simply the reciprocal of the failure rate. Failure rate can be defined as the frequency at which a system or component fails over time. A lower failure rate, or equivalently a higher MTTF, suggests the product will on average continue to function for a longer time before experiencing a problem that either limits its useful application or altogether prevents further use. In the semiconductor industry, MTTF is often used in place of mean time between failures (MTBF), a common method of conveying product reliability for hard drives. MTBF suggests the item can be repaired following failure, which is often not the case for discrete electrical components such as CPUs.
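As a rough illustration of the reciprocal relationship described above, here is a minimal Python sketch. It assumes a constant failure rate (the simple exponential reliability model), and the rate of 100 failures per billion device-hours is a made-up number chosen only for the example, not an Intel figure.

```python
import math

HOURS_PER_YEAR = 24 * 365  # 8760 hours, ignoring leap years


def mttf_hours(failure_rate_per_hour: float) -> float:
    """MTTF is the reciprocal of a (constant) failure rate."""
    return 1.0 / failure_rate_per_hour


def survival_fraction(failure_rate_per_hour: float, hours: float) -> float:
    """Fraction of units still working after `hours`,
    assuming the failure rate stays constant over that time."""
    return math.exp(-failure_rate_per_hour * hours)


# Hypothetical rate: 100 failures per billion device-hours.
rate = 100 / 1e9

print(f"MTTF: {mttf_hours(rate):,.0f} hours")
print(f"Surviving after 3 years of 24/7 use: "
      f"{survival_fraction(rate, 3 * HOURS_PER_YEAR):.4%}")
```

Note that a very large MTTF does not mean any single part is expected to last that long; it only describes the average failure rate across the population while that rate holds.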

A particular processor product line's continuous failure rate, as modeled over time, is largely a function of operating temperature - that is, elevated operating temperatures lead to decreased product lifetimes. This means that for a given target product lifetime, it is possible to derive with a certain degree of confidence - after accounting for all the worst-case end-of-life minimum reliability margins - a maximum rated operating temperature that produces no more than the acceptable number of product failures over a period of time. What's more, although none of Intel's processor lifetimes are expressly published, we can only assume the goal is somewhere near the three-year mark, which just so happens to correspond well with the three-year limited warranty provided to the original purchaser of any Intel boxed processor. (That last part was a joke; this is no accident.)
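To give a feel for how strongly temperature can affect lifetime, the sketch below uses the Arrhenius acceleration model, a common choice in semiconductor reliability work. This is an assumption for illustration: the article does not say which model Intel uses, and the 0.7 eV activation energy and the two temperatures are hypothetical values.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_acceleration(t_ref_c: float, t_hot_c: float,
                           activation_energy_ev: float = 0.7) -> float:
    """Factor by which the failure rate grows when running at t_hot_c
    instead of t_ref_c (both in degrees Celsius), per the Arrhenius model.
    The default activation energy is a hypothetical placeholder."""
    t_ref_k = t_ref_c + 273.15
    t_hot_k = t_hot_c + 273.15
    exponent = (activation_energy_ev / BOLTZMANN_EV_PER_K) * (
        1.0 / t_ref_k - 1.0 / t_hot_k
    )
    return math.exp(exponent)


# Hypothetical comparison: a part run at 80°C versus 60°C.
factor = arrhenius_acceleration(60.0, 80.0)
print(f"Failure rate multiplier at 80°C vs 60°C: {factor:.2f}x")
print(f"Expected lifetime scales by roughly 1/{factor:.2f}")
```

Under this model the relationship is exponential rather than linear, which is why a rated maximum temperature can be derived from a target lifetime and an acceptable failure count.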

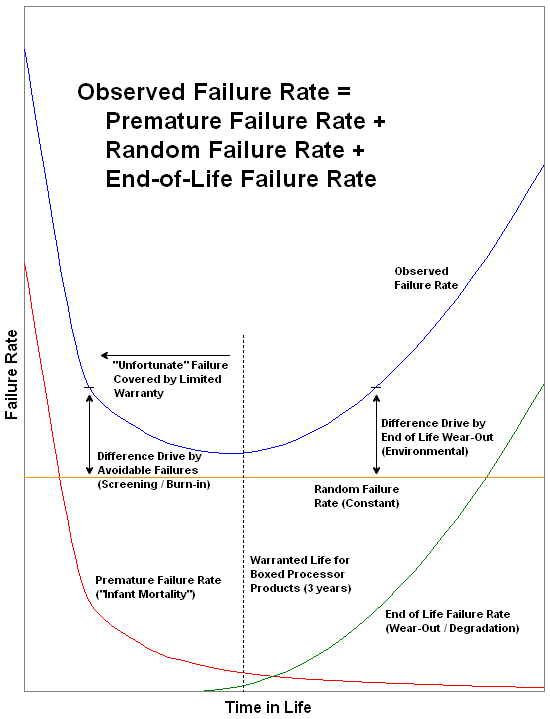

When it comes to semiconductors, there are three primary types of failures that can put an end to your CPU's life. The first, and probably the best known of the three, is called a hard failure, which can be said to have occurred whenever a single overstress incident can be identified as the primary root cause of the product's end of life. Examples of this type of failure would be the processor's exposure to an especially high core voltage, a recent period of operation at exceedingly elevated temperatures, or perhaps even a tragic ESD event. In any case, blame for the failure can be (and usually should be) traced back and attributed to a known cause.

This is different from the second failure mode, known as a latent failure, in which a hidden defect or previously unnoted spot of damage from an earlier overstress event eventually brings about the component's untimely demise. These types of failure can lie dormant for many years, sometimes even for the life of the product, unless they are "coaxed" into existence. Several severity factors determine whether a latent failure will ultimately result in a complete system failure, one of those being the product's operating environment following the "injury." It is accepted that components subjected to a harsher operating environment will on average reach their end of life sooner than those not stressed nearly as hard. This particular failure mode is sometimes difficult to identify, because without proper post-mortem analysis it can be next to impossible to determine whether the product reached end-of-life due to a random failure or something more.

The third and final failure type, an early failure, is commonly referred to as "infant mortality". These failures occur soon after initial use, usually without any warning. What can be done about these seemingly unavoidable early failures? They are certainly not attributable to random failures, so by definition it should be possible to identify them and remove them via screening. One way to detect and remove these failures from the unit population is with a period of product testing known as "burn-in." Burn-in is the process by which the product is subjected to a battery of tests and periods of operation that sufficiently exercise the product to the point where these early failures can be caught prior to packaging for sale.

In the case of Intel CPUs, this process may even be conducted at elevated temperatures and voltages, known as "heat soaking." Once the product passes these initial inspections, it is trustworthy enough to enter the market for continuous duty within rated specifications. Although this process can remove some of the weaker, more failure-prone products from the retail pool - some of which might very well have gone on to lead a normal existence - the idea is that by identifying them earlier, fewer will come back as costly RMA requests. There's also the fact that large numbers of in-use failures can have a significant negative impact on the public perception of a company's ability to supply reliable products. Case in point: the mere mention of errata usually has most consumers up in arms before they are even aware of whether the errata apply to them.

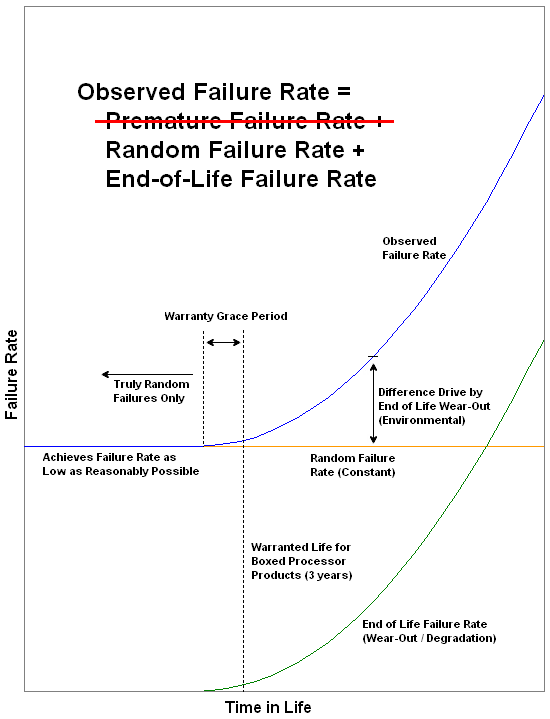

The graphic above illustrates how the observed failure rate is influenced by the removal of early failures. Because of this, nearly every in-use failure can be credited as unavoidable and random in nature. By establishing environmental specifications and usage requirements that ensure near worry-free operation for the product's first three years of use, a "warranty grace period" can be offered. This removes all doubt as to whether a failure occurred because of a random event or the start of the product's eventual wear-out, where degradation starts to play a role in the observed failures.
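The shape described above (high early failure rate, a flat random-failure floor, then rising wear-out) is often called a bathtub curve. The following Python sketch models it as a sum of three hazard terms to show how burn-in lowers the failure rate customers observe. The Weibull form and every parameter value here are assumptions picked to produce a plausible shape; they are not measured data.

```python
def weibull_hazard(age_hours: float, shape: float, scale_hours: float) -> float:
    """Instantaneous failure rate of a Weibull distribution.
    shape < 1 falls over time (early failures), shape > 1 rises (wear-out).
    age_hours must be greater than zero."""
    return (shape / scale_hours) * (age_hours / scale_hours) ** (shape - 1)


def bathtub_hazard(field_hours: float, burn_in_hours: float = 0.0) -> float:
    """Failure rate seen in the field after `field_hours` of customer use.
    Burn-in simply means the part is already `burn_in_hours` old when sold.
    All parameters below are hypothetical."""
    age = field_hours + burn_in_hours
    early = weibull_hazard(age, shape=0.5, scale_hours=5e7)     # infant mortality
    random_floor = 1e-7                                         # constant random failures
    wear_out = weibull_hazard(age, shape=5.0, scale_hours=1e5)  # end-of-life degradation
    return early + random_floor + wear_out


for hours in (1, 100, 10_000, 50_000, 100_000):
    raw = bathtub_hazard(hours)
    screened = bathtub_hazard(hours, burn_in_hours=168)  # one week of burn-in
    print(f"{hours:>7} h  no burn-in: {raw:.2e}/h   with burn-in: {screened:.2e}/h")
```

In this toy model the benefit of burn-in is concentrated in the first hours of use, which matches the idea that screening removes early failures while leaving the random floor and wear-out region essentially unchanged.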

Implementing advancements in process manufacturing, assembly, and testing that lower the probability of random failures is a big part of improving any product's overall reliability. By carefully analyzing the operating characteristics of each new batch of processors, Intel is able to determine what works in achieving this goal and what doesn't. Changes that bring about significant improvements in product reliability - enough to offset the cost of a change to the manufacturing process - are sometimes implemented as a stepping change. The additional margin created by the change can often be exploited for greater overclocking potential. Let's discuss this further and see exactly what it means.

MrModulator - Wednesday, March 5, 2008 - link

Made a typo in the post. The last sentence should have said "Very interesting indeed, as DAW is an area in much more NEED for cpu-POWER than gaming."

Casper42 - Wednesday, March 5, 2008 - link

Q9450 and his friends? For that matter, when am I going to be able to BUY an E8xxx part? Newegg has the E8400 listed but always out of stock.

I tend to be the guy who people come to when they want to build a new rig, and right now I am telling them all to hold off and get an E8000 series or a Q9000 series CPU and a GF9000 series GPU. But right now all these parts arent REALLY available.

So whats the REAL release date Kris?

Margalus - Wednesday, March 5, 2008 - link

I bought an e8400 about 3 weeks ago from http://www.zipzoomfly.com

I love the thing. With the thermalright ultra-120 extreme it runs at 25°C idle and with both cores at 100% load it hits 34°C! Room temp around 21°C.

MaulSidious - Wednesday, March 5, 2008 - link

http://www.overclockers.co.uk/showproduct.php?prod... these have been in stock and well stocked for a few weeks now.

Kromis - Wednesday, March 5, 2008 - link

Most impressive