NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST - Posted in GPUs

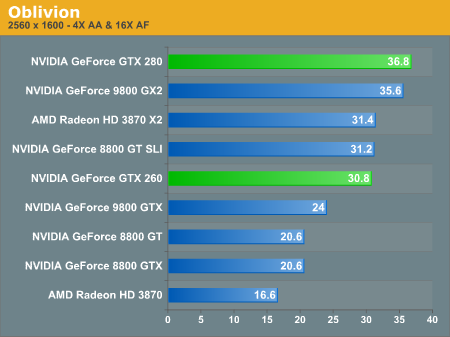

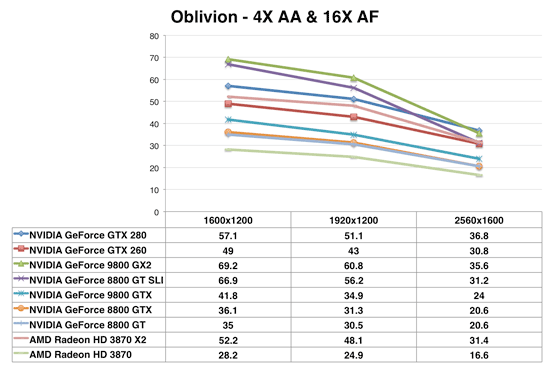

Oblivion

The race is pretty close between the GTX 280 and 9800 GX2 in Oblivion with AA enabled. It's worth noting that at all other resolutions the GX2 is faster than the GTX 280, but at 2560 x 1600 the memory bandwidth requirements are simply too much for the GX2. Remember that despite having two GPUs, the GX2 doesn't have a real-world doubling of memory bandwidth.
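To put rough numbers on the bandwidth point, here is a minimal sketch of the usual peak-bandwidth arithmetic (bus width in bytes times effective data rate). The bus widths and memory data rates below are assumptions taken from commonly cited launch specifications, not figures given on this page. The sketch also assumes the usual multi-GPU arrangement in which each GPU works out of its own local memory, so the summed figure is a paper number and not what either GPU can actually draw on for a given frame.

```python
def bandwidth_gb_s(bus_width_bits: int, effective_mt_s: float) -> float:
    """Peak memory bandwidth in GB/s (1 GB = 1e9 bytes)."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * effective_mt_s * 1e6 / 1e9


# Assumed specs (not stated in the article text above):
#   GTX 280:  512-bit bus, 2214 MT/s effective GDDR3
#   9800 GX2: 256-bit bus per GPU, 2000 MT/s effective GDDR3
gtx_280 = bandwidth_gb_s(512, 2214)
gx2_per_gpu = bandwidth_gb_s(256, 2000)
gx2_paper_total = 2 * gx2_per_gpu  # simple sum across both GPUs

print(f"GTX 280:           {gtx_280:.1f} GB/s")
print(f"9800 GX2, per GPU: {gx2_per_gpu:.1f} GB/s")
print(f"9800 GX2, summed:  {gx2_paper_total:.1f} GB/s")
```

Under these assumptions, each GX2 GPU has less than half the GTX 280's peak bandwidth available to it, which is consistent with the GX2 falling behind only at the most bandwidth-hungry resolution.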

The GTX 260 performs like a Radeon HD 3870 X2 and 8800 GT SLI here. Once again it's one card performing like two; it's just that the two-card solution is actually cheaper. Hmm...a great way to sell more SLI motherboards.

108 Comments


tkrushing - Wednesday, June 18, 2008 - link

Say what you want about this guy but this is partially true which is why AMD/ATI is in the position they have been. They are slowly climbing out of that hole they've been in though. Would have been nice to see 4870x2 hit the market first. As we know competition = less prices for everyone!

hk690 - Tuesday, June 17, 2008 - link

I would love to kick you hard in the face, breaking it. Then I'd cut your stomach open with a chainsaw, exposing your intestines. Then I'd cut your windpipe in two with a boxcutter. Then I'd tie you to the back of a pickup truck, and drag you, until your useless fucking corpse was torn to a million fucking useless, bloody, and gory pieces.

Hopefully you'll get what's coming to you. Fucking bitch

http://www.youtube.com/watch?v=XNAFUpDTy3M

I wish you a truly painful, bloody, gory and agonizing death, cunt

7Enigma - Wednesday, June 18, 2008 - link

Anand, I'm all for free speech and such, but this guy is going a bit far. I read these articles at work frequently and once the dreaded C-word is used I'm paranoid I'm being watched.

Mr Roboto - Thursday, June 19, 2008 - link

I thought those comments would be deleted already. I'm sure no one cares if they are. I don't know what that person is so mad about.

hk690 - Tuesday, June 17, 2008 - link

Die painfully okay? Prefearbly by getting crushed to death in a garbage compactor, by getting your face cut to ribbons with a pocketknife, your head cracked open with a baseball bat, your stomach sliced open and your entrails spilled out, and your eyeballs ripped out of their sockets. Fucking bitch

Mr Roboto - Wednesday, June 18, 2008 - link

Ouch.. Looks like you hit a nerve with AMD\ATI's marketing team!

bobsmith1492 - Monday, June 16, 2008 - link

The main benefit from the 280 is the reduced power at idle! If I read the graph right, the 9800 takes ~150W more than the 280 at idle. Since that's where computers spend the majority of their time, depending on how much you game, that can be a significant cost.

kilkennycat - Monday, June 16, 2008 - link

Maybe you should look at the GT200 series from the point of view of nvidia's GPGPU customers - the academic researchers, technology companies requiring fast number-crunching available on the desktop, the professionals in graphics-effects and computer animation - not necessarily real-time, but as quick as possible... The CUDA-using crew. The Tesla initiative. This is an explosively-expanding and highly profitable business for nVidia - far more profitable per unit than any home desktop graphics application. An in-depth analysis by Anandtech of what the GT200 architecture brings to these markets over and above the current G8xx/G9xx architecture would be highly appreciated. I have a very strong suspicion that sales of the GT2xx series to the (ultra-rich) home user who has to have the latest and greatest graphics card is just another way of paying the development bills and not the true focus for this particular architecture or product line.

nVidia is strongly rumored to be working on the true 2nd-gen Dx10.x product family, to be introduced early next year. Considering the size of the GTX280 silicon, I would expect them to transition the 65nm GTX280 GPU to either TSMC's 45nm or 55nm process before the end of 2008 to prove out the process with this size of device, then in 2009 introduce their true 2nd-gen GPU/GPGPU family on this latter process. A variant on the Intel "tick-tock" process strategy.

strikeback03 - Tuesday, June 17, 2008 - link

But look at the primary audience of this site. Whatever nvidia's intentions are for the GT280, I'm guessing more people here are interested in gaming than in subsidizing research.

Wirmish - Tuesday, June 17, 2008 - link

"...requiring fast number-crunching available on the desktop..."

GTX 260 = 715 GFLOPS

GTX 280 = 933 GFLOPS

HD 4850 = 1000 GFLOPS

HD 4870 = 1200 GFLOPS

4870 X2 = 2400 GFLOPS

Take a look here: http://tinyurl.com/5jwym5
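For readers wondering where GFLOPS figures like these come from, the sketch below reproduces them as theoretical single-precision peaks: shader units times shader clock times floating-point operations per unit per clock. The unit counts, clocks, and per-clock factors are assumptions drawn from commonly cited specifications for these cards, not values given in this thread. They are paper peaks only and say nothing about sustained throughput in real workloads.

```python
def peak_gflops(shader_units: int, shader_clock_mhz: float, flops_per_clock: int) -> float:
    """Theoretical peak single-precision GFLOPS for one GPU."""
    return shader_units * shader_clock_mhz * flops_per_clock / 1000.0


# Assumed specs: (shader units, shader clock in MHz, FLOPs per unit per clock).
# The NVIDIA parts are counted at 3 FLOPs/clock (multiply-add plus multiply),
# the AMD parts at 2 FLOPs/clock (multiply-add).
cards = {
    "GTX 260": (192, 1242, 3),
    "GTX 280": (240, 1296, 3),
    "HD 4850": (800, 625, 2),
    "HD 4870": (800, 750, 2),
}

results = {name: peak_gflops(*spec) for name, spec in cards.items()}
# A dual-GPU board is counted here as a simple doubling of the single-GPU peak.
results["4870 X2"] = 2 * results["HD 4870"]

for name, gflops in results.items():
    print(f"{name} = {gflops:.0f} GFLOPS")
```

With these inputs the results round to the same numbers listed in the comment, which suggests the list is a set of paper peaks computed this way.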