NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST - Posted in GPUs

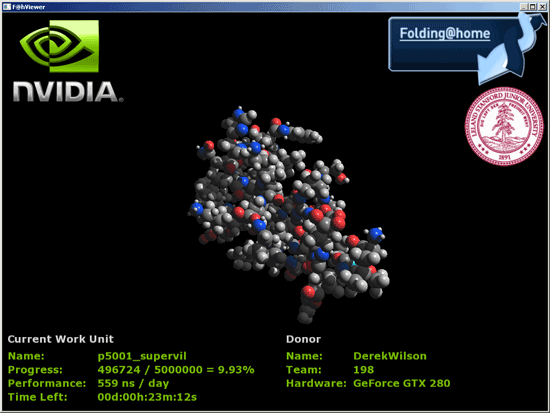

Folding@home Now on NVIDIA

Folding@home, for those who don't know, is a distributed computing app designed to help researchers better understand the process of protein folding. Knowing more about how proteins assemble themselves can help us better understand many diseases such as Alzheimer's, but protein folding is very complex and takes a long time to simulate. The problem is made much more tractable by breaking it up into smaller parts and allowing many people to work on it.

Most of this work has been done on the CPU, but the PS3 and AMD R5xx GPUs have been able to fold for a while now. Recently support for AMD's R6xx lineup was added as well. NVIDIA GPUs haven't been enabled to run Folding@home until now (or very soon anyway). Stanford has finally implemented a version of Folding@home with CUDA support that will allow all G80 and higher hardware to run the client.

We've had the chance for the past couple of days to play around with a pre-beta version of the folding client, and running folding on NVIDIA hardware is definitely very fast. Work units and proteins are different on CPUs and GPUs because the hardware is suited to different tasks, but to give some perspective, a quad-core CPU could simulate tens of nanoseconds of a protein fold, while GPUs can simulate hundreds.

While we don't have the ability to bring you any useful comparative benchmarks right now, Stanford is working on implementing some standard test cases that can be run on different hardware. This will help us actually compare the performance of different hardware in a meaningful way. Right now, giving you numbers to compare CPUs, PS3s, AMD and NVIDIA GPUs would be like directly comparing framerates from different games on different hardware as if they were related.

What we will say is that NVIDIA predicts that the GTX 280 will be capable of simulating somewhere between 500 and 600 nanoseconds of folding per day, while CPUs are going to be two orders of magnitude slower. They also show the GTX 280 ahead of any current AMD solution by high margins, but until we can test it ourselves we really don't want to put a finer point on it.

In our tests, we've actually seen the GT200 folding client perform at between 600 and 850ns per day (using the timestamps in the log file to determine performance), so we are quite impressed. Work units complete about every 20 to 25 minutes depending on the protein and whether or not the viewer is running (which does have a significant impact since the calculations and the display are both running on the GPU).
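As a rough illustration of how a per-day figure can be derived from log timestamps, the sketch below scales the simulated time in one work unit up to a full day. The article does not state how many nanoseconds a single work unit simulates or what the log format looks like, so `simulated_ns=10.0` and the two timestamps are placeholder assumptions, and the function name is ours, not part of the folding client.

```python
from datetime import datetime

SECONDS_PER_DAY = 86400.0


def ns_per_day(start, end, simulated_ns):
    """Scale the nanoseconds simulated in one work unit to a 24-hour rate."""
    elapsed_s = (end - start).total_seconds()
    if elapsed_s <= 0:
        raise ValueError("end must be later than start")
    return simulated_ns * SECONDS_PER_DAY / elapsed_s


# Hypothetical start/finish timestamps for one work unit (20 minutes apart).
start = datetime(2008, 6, 14, 10, 0, 0)
end = datetime(2008, 6, 14, 10, 20, 0)

# Assumed value: 10 ns of simulated folding per work unit (not from the article).
rate = ns_per_day(start, end, simulated_ns=10.0)
print(f"{rate:.0f} ns/day")
```

Under these assumed inputs, a 20 minute work unit works out to 720 ns per day, which sits inside the 600 to 850ns range quoted above; a slower 25 minute unit with the same assumed payload would land just under 600.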

Hardware H.264 Encoding

For years now both ATI and NVIDIA have been boasting about how much better their GPUs were for video encoding than Intel's CPUs. They promised multi-fold speedups in performance but never delivered, so we've been stuck encoding and transcoding videos on CPUs.

With the GT200, NVIDIA has taken one step closer to actually delivering on these promises. We got a copy of a severely limited beta of Elemental Technologies' BadaBOOM Media Converter:

The media converter currently only works on the GeForce GTX 280 and GTX 260, but when it ships there will be support for G80/G92 based GPUs as well. The arguably more frustrating issue with it today is its lack of support for CPU-based encoding, so we can't actually make an apples-to-apples comparison to CPUs or other GPUs. The demo will also only encode up to 2 minutes of video.

With that out of the way however, BadaBOOM will perform H.264 encoding on your GPU. There is still a significant amount of work being done on the CPU during the encode: our Core 2 Extreme QX9770 was at 20 - 30% CPU utilization during the entire encode process, but that's better than the 50 - 100% it would normally be at if we were encoding on the CPU alone.

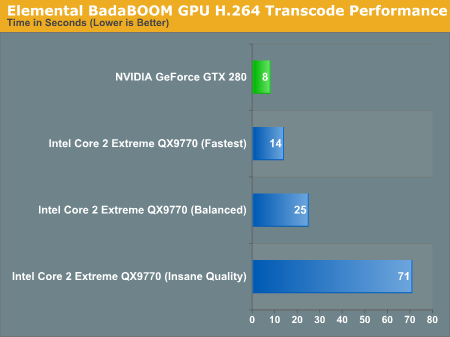

Then there's the speedup. We can't perform a true apples-to-apples comparison since we can't use BadaBOOM's H.264 encoder on anything else, but compared to using the open source x264 encoder the performance speedup is pretty good. We used AutoMKV and played with its presets to vary quality:

In the worst case scenario, the GTX 280 is around 40% faster than encoding on Intel's fastest CPU alone. In the best case scenario however, the GTX 280 can complete the encoding task in 1/10th the time.
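The two extremes are quoted in different units (one as "percent faster", the other as a fraction of the encode time), so it can help to put them on the same scale. The sketch below does that conversion, assuming "40% faster" means 1.4x the encoding throughput; that reading, and the function names, are our assumptions rather than anything stated in the benchmark.

```python
def time_fraction_from_percent_faster(percent_faster):
    """Fraction of the baseline time needed when throughput is N% higher."""
    return 1.0 / (1.0 + percent_faster / 100.0)


def speedup_from_time_fraction(fraction):
    """Throughput multiple implied by finishing in a fraction of the time."""
    if fraction <= 0:
        raise ValueError("fraction must be positive")
    return 1.0 / fraction


# Worst case: 40% faster, read here as 1.4x throughput (an assumption).
worst_time = time_fraction_from_percent_faster(40.0)

# Best case: the encode finishes in 1/10th the time.
best_speedup = speedup_from_time_fraction(0.1)

print(f"worst case: {worst_time:.2f} of the CPU-only time (1.4x)")
print(f"best case: {best_speedup:.0f}x the CPU-only speed")
```

On that reading, the worst case takes roughly 71% of the CPU-only time, while the best case is a 10x speedup.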

We're not sure where a true apples-to-apples comparison would end up, but somewhere between those two extremes is probably a good guesstimate. Hopefully we'll see more examples of GPU based video encoder applications in the future as there seems to be a lot of potential here. Given how long it takes to encode a Blu-ray movie, we needn't even explain why it's necessary.

108 Comments


strikeback03 - Tuesday, June 17, 2008 - link

So are you blaming nvidia for games that require powerful hardware, or just for enabling developers to write those games by making powerful hardware?

InquiryZ - Monday, June 16, 2008 - link

Was AC tested with or without the patch? (the patch removes a lot of performance on the ATi cards..)

DerekWilson - Monday, June 16, 2008 - link

the patch only affects performance with aa enabled.

since the game only allows aa at up to 1680x1050, we tested without aa.

we also tested with the patch installed.

PrinceGaz - Monday, June 16, 2008 - link

nVidia say they're not saying exactly what GT200 can and cannot do to prevent AMD bribing game developers to use DX10.1 features GT200 does not support, but you mention that "It's useful to point out that, in spite of the fact that NVIDIA doesn't support DX10.1 and DX10 offers no caps bits, NVIDIA does enable developers to query their driver on support for a feature. This is how they can support multisample readback and any other DX10.1 feature that they chose to expose in this manner."

Now whilst it is driver dependent and additional features could be enabled (or disabled) in later drivers, it seems to me that all AMD or anyone else would have to do is go through the whole list of DX10.1 features and query the driver about each one. Voila- an accurate list of what is and isn't supported, at least with that driver.

DerekWilson - Monday, June 16, 2008 - link

the problem is that they don't expose all the features they are capable of supporting. they won't mind if AMD gets some devs on board with something that they don't currently support but that they can enable support for if they need to.

what they don't want is for AMD to find out what they are incapable of supporting in any reasonable way. they don't want AMD to know what they won't be able to expose via the driver to developers.

knowing what they already expose to devs is one thing, but knowing what the hardware can actually do is not something nvidia is interested in sharing.

emboss - Monday, June 16, 2008 - link

Well, yes and no. The G80 is capable of more than what is implemented in the driver, and also some of the implemented driver features are actually not natively implemented in the hardware. I assume the GT200 is the same. They only implement the bits that are actually being used, and emulate the operations that are not natively supported. If a game comes along that needs a particular feature, and the game is high-profile enough for NV to care, NV will implement it in the driver (either in hardware if it is capable of it, or emulated if it's not).

What they don't want to say is what the hardware is actually capable of. Of course, ATI can still get a reasonably good idea by looking at the pattern of performance anomalies and deducing which operations are emulated, so it's still just stupid paranoia that hurts developers.

B3an - Monday, June 16, 2008 - link

@ Derek - I'd really appreciate this if you could reply...

Games are tested at 2560x1600 in these benchmarks with the 9800GX2, and some games are even playable.

Now when i do this with my GX2 at this res, a lot of the time even the menu screen is a slide show (often under 10FPS). Especially if any AA is enabled. Some games that do this are Crysis, GRID, UT3, Mass Effect, ET:QW... with older games it does not happen, only newer stuff with higher res textures.

This never happened on my 8800GTX to the same extent. So i put it down to the GX2 not having enough memory bandwidth and enough usable VRAM for such high resolution.

So could you explain how the GX2 is getting 64FPS @ 2560x1600 with 4x AA with ET:Quake Wars? As well as other games at that res + AA.

DerekWilson - Monday, June 16, 2008 - link

i really haven't noticed the same issue with menu screens ... except in black and white 2 ... that one sucked and i remember complaining about it.

to be fair i haven't tested this with mass effect, grid, or ut3.

as for menu screens, they tend to be less memory intensive than the game itself. i'm really not sure why it happens when it does, but it does suck.

i'll ask around and see if i can get an explanation of this problem and if i can i'll write about why and when it will happen.

thanks,

Derek

larson0699 - Monday, June 16, 2008 - link

"Massiveness" and "aggressiveness"?

I know the article is aimed to hit as hard as the product it's introducing us to, but put a little English into your English.

"Mass" and "aggression".

FWIW, the GTX's numbers are unreal. I can appreciate the power-saving capabilities during lesser load, but I agree, GT200 should've been 55nm. (6pin+8pin? There's a motherboard under that SLI setup??)

jobrien2001 - Monday, June 16, 2008 - link

Seems Nvidia finally dropped the ball.

-Power consumption and the price tag are really bad.

-Performance isnt as expected.

-Huge Die

Im gonna wait for a die shrink or buy an ATI. The 4870 with ddr5 seems promising from the early benchmarks... and for $350? who in their right mind wouldnt buy one.