NVIDIA's 1.4 Billion Transistor GPU: GT200 Arrives as the GeForce GTX 280 & 260

by Anand Lal Shimpi & Derek Wilson on June 16, 2008 9:00 AM EST. Posted in GPUs

Power and Power Management

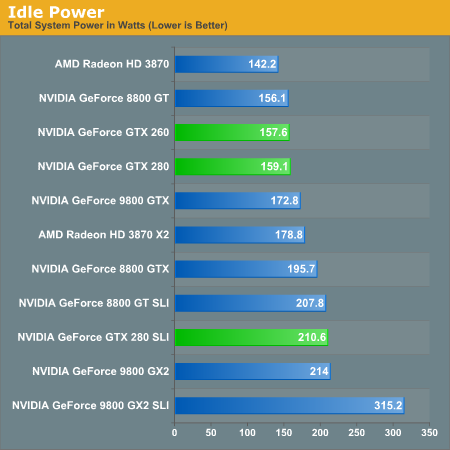

Power is a major concern for many tech companies going forward, and just adding features "because we can" isn't the modus operandi anymore. Now it's cool (pardon the pun) to focus on power management, performance per watt, and similar metrics. To that end, NVIDIA has beaten its GT200 into such submission that its 2D power consumption can reach as low as 25W. As we will show below, this can have a very positive impact on idle power for a very powerful bit of hardware.

These enhancements aren't breakthrough technologies: NVIDIA is just using clock gating and dynamic voltage and clock speed adjustment to achieve these savings. There is hardware on the GPU to monitor utilization and automatically set the clock speeds to one of four performance modes (off for hybrid power, 2D/idle, HD video, or 3D/performance). Mode changes happen on the order of milliseconds. This is very similar to what AMD has already implemented.
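To make the idea concrete, here is a minimal sketch of what utilization-driven mode selection could look like. NVIDIA hasn't published the actual decision logic, so the function name, the inputs, the priority order and the 10% threshold are all our own assumptions; only the four mode names come from the description above.

```python
from enum import Enum


class PowerMode(Enum):
    """The four performance modes named in the text."""
    HYBRID_OFF = "off (hybrid power)"
    IDLE_2D = "2D/idle"
    HD_VIDEO = "HD video"
    PERFORMANCE_3D = "3D/performance"


def select_mode(utilization, video_decode_active=False,
                hybrid_power_off=False, busy_threshold=0.10):
    """Pick a mode from one utilization sample in the range 0.0 to 1.0.

    Hypothetical logic: busy_threshold is a made-up cutoff, not an
    NVIDIA figure, and the priority order is an assumption.
    """
    if hybrid_power_off:
        # Hybrid power has switched the discrete GPU off entirely.
        return PowerMode.HYBRID_OFF
    if utilization > busy_threshold:
        # Real 3D work is running: raise clocks and voltage.
        return PowerMode.PERFORMANCE_3D
    if video_decode_active:
        # Light load plus video decode: the in-between mode.
        return PowerMode.HD_VIDEO
    return PowerMode.IDLE_2D
```

Under these assumptions, `select_mode(0.02)` lands in the 2D/idle mode, while the same sample with `video_decode_active=True` lands in the HD video mode. Real hardware would sample continuously and likely add hysteresis so it doesn't flip modes on every spike.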

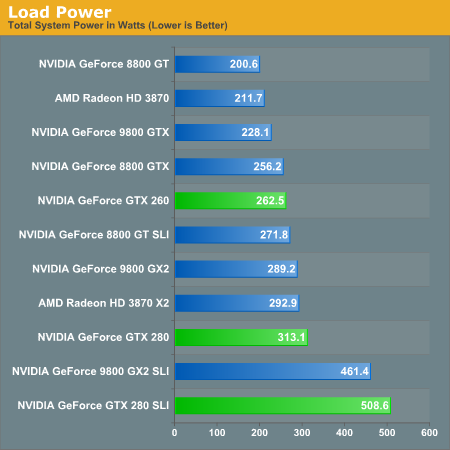

With increasing transistor count and huge GPU sizes with lots of memory, power isn't something that can stay low all the time. Eventually the hardware will actually have to do something, and then voltages will rise, clock speed will increase, and power will be converted into dissipated heat and frames per second. It is hard to say which is more impressive: the power-saving features at idle or the power draw at load.
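Why do voltage and clock speed matter so much? The standard first-order model for CMOS dynamic power is P ≈ C · V² · f, so power scales linearly with clock speed but with the square of voltage. The sketch below just evaluates that relation against a baseline; it ignores leakage, and the ratios in the usage note are illustrative values of ours, not GT200 measurements.

```python
def relative_dynamic_power(voltage_ratio, clock_ratio):
    """Dynamic power relative to a baseline, from P ~ C * V^2 * f.

    voltage_ratio and clock_ratio are new/baseline values. Switched
    capacitance C is assumed unchanged, and static (leakage) power is
    ignored, so treat the result as a rough estimate only.
    """
    return voltage_ratio ** 2 * clock_ratio
```

As a hypothetical example, halving the clock while dropping voltage to 80% of baseline gives `relative_dynamic_power(0.8, 0.5)`, or roughly a third of the baseline dynamic power. That is why lowering voltage along with frequency pays off far more than lowering frequency alone.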

There is an in-between stage for HD video playback that runs at about 32W, and it is good to see some attention paid to this issue specifically. This bodes well for mobile chips based on the GT200 design, but on the desktop this isn't as mission critical. Yes, reducing power (and thus what I have to pay my power company) is a good thing, but plugging a card like this into your computer is like driving an exotic car: if you want the experience, you've got to pay for the gas.
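For a rough sense of what "paying for the gas" means, here is a back-of-the-envelope cost calculation. The 25W and 32W figures are the ones quoted above; the electricity price and the hours per day are placeholders of ours, so plug in your own.

```python
def annual_energy_cost(watts, hours_per_day, price_per_kwh):
    """Yearly cost of a constant electrical load.

    watts: average draw while in the given mode
    hours_per_day: hours spent in that mode each day (assumed input)
    price_per_kwh: electricity price (assumed input, varies by region)
    """
    kwh_per_year = watts * hours_per_day * 365 / 1000
    return kwh_per_year * price_per_kwh
```

At a hypothetical $0.10 per kWh, a card idling at 25W around the clock uses 219 kWh a year, or about $21.90. Two hours a day of HD video at 32W adds only a couple of dollars on top of that.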

Idle power so low is definitely nice to see. Having high end cards idle near midrange solutions from previous generations is a step in the right direction.

But as soon as we open up the throttle, that power miser is out the door and joules start flooding in by the bucket.

Cooling NVIDIA's hottest card isn't easy, and you can definitely hear the beast moving air. At idle, the GPU is as quiet as any other high-end NVIDIA GPU. Under load, as the GTX 280 heats up, the fan spins faster and moves much more air, which quickly becomes audible. It's not GeForce FX annoying, but it's not as quiet as other high-end NVIDIA GPUs; then again, there are 1.4 billion transistors switching in there. If you have a silent PC, the GTX 280 will definitely un-silence it and put out enough heat to make the rest of your fans work harder. If you're used to a GeForce 8800 GTX, GTS or GT, the noise will bother you. Another problem is that after a couple of hours of gaming, returning to idle leaves the fan unwilling to spin down as low as when you first turned your machine on.

While it's impressive that NVIDIA built this chip on a 65nm process, it desperately needs to move to 55nm.

108 Comments


strikeback03 - Tuesday, June 17, 2008 - link

So are you blaming nvidia for games that require powerful hardware, or just for enabling developers to write those games by making powerful hardware?

InquiryZ - Monday, June 16, 2008 - link

Was AC tested with or without the patch? (the patch removes a lot of performance on the ATi cards..)

DerekWilson - Monday, June 16, 2008 - link

the patch only affects performance with aa enabled.

since the game only allows aa at up to 1680x1050, we tested without aa.

we also tested with the patch installed.

PrinceGaz - Monday, June 16, 2008 - link

nVidia say they're not saying exactly what GT200 can and cannot do to prevent AMD bribing game developers to use DX10.1 features GT200 does not support, but you mention that

"It's useful to point out that, in spite of the fact that NVIDIA doesn't support DX10.1 and DX10 offers no caps bits, NVIDIA does enable developers to query their driver on support for a feature. This is how they can support multisample readback and any other DX10.1 feature that they chose to expose in this manner."

Now whilst it is driver dependent and additional features could be enabled (or disabled) in later drivers, it seems to me that all AMD or anyone else would have to do is go through the whole list of DX10.1 features and query the driver about each one. Voila- an accurate list of what is and isn't supported, at least with that driver.

DerekWilson - Monday, June 16, 2008 - link

the problem is that they don't expose all the features they are capable of supporting. they won't mind if AMD gets some devs on board with something that they don't currently support but that they can enable support for if they need to.

what they don't want is for AMD to find out what they are incapable of supporting in any reasonable way. they don't want AMD to know what they won't be able to expose via the driver to developers.

knowing what they already expose to devs is one thing, but knowing what the hardware can actually do is not something nvidia is interested in sharing.

emboss - Monday, June 16, 2008 - link

Well, yes and no. The G80 is capable of more than what is implemented in the driver, and also some of the implemented driver features are actually not natively implemented in the hardware. I assume the GT200 is the same. They only implement the bits that are actually being used, and emulate the operations that are not natively supported. If a game comes along that needs a particular feature, and the game is high-profile enough for NV to care, NV will implement it in the driver (either in hardware if it is capable of it, or emulated if it's not).

What they don't want to say is what the hardware is actually capable of. Of course, ATI can still get a reasonably good idea by looking at the pattern of performance anomalies and deducing which operations are emulated, so it's still just stupid paranoia that hurts developers.

B3an - Monday, June 16, 2008 - link

@ Derek - I'd really appreciate this if you could reply...

Games are tested at 2560x1600 in these benchmarks with the 9800GX2, and some games are even playable.

Now when i do this with my GX2 at this res, a lot of the time even the menu screen is a slide show (often under 10FPS). Especially if any AA is enabled. Some games that do this are Crysis, GRID, UT3, Mass Effect, ET:QW... with older games it does not happen, only newer stuff with higher res textures.

This never happened on my 8800GTX to the same extent. So i put it down to the GX2 not having enough memory bandwidth and enough usable VRAM for such high resolution.

So could you explain how the GX2 is getting 64FPS @ 2560x1600 with 4x AA with ET:Quake Wars? As well as other games at that res + AA.

DerekWilson - Monday, June 16, 2008 - link

i really haven't noticed the same issue with menu screens ... except in black and white 2 ... that one sucked and i remember complaining about it.

to be fair i haven't tested this with mass effect, grid, or ut3.

as for menu screens, they tend to be less memory intensive than the game itself. i'm really not sure why it happens when it does, but it does suck.

i'll ask around and see if i can get an explanation of this problem and if i can i'll write about why and when it will happen.

thanks,

Derek

larson0699 - Monday, June 16, 2008 - link

"Massiveness" and "aggressiveness"?

I know the article is aimed to hit as hard as the product it's introducing us to, but put a little English into your English.

"Mass" and "aggression".

FWIW, the GTX's numbers are unreal. I can appreciate the power-saving capabilities during lesser load, but I agree, GT200 should've been 55nm. (6pin+8pin? There's a motherboard under that SLI setup??)

jobrien2001 - Monday, June 16, 2008 - link

Seems Nvidia finally dropped the ball.

- Power consumption and the price tag are really bad.
- Performance isnt as expected.
- Huge Die

Im gonna wait for a die shrink or buy an ATI. The 4870 with ddr5 seems promising from the early benchmarks... and for $350? who in their right mind wouldnt buy one.