Intel's Larrabee Architecture Disclosure: A Calculated First Move

by Anand Lal Shimpi & Derek Wilson on August 4, 2008 12:00 AM EST - Posted in GPUs

Putting it all Together - Return of the Ring Bus

Intel is keeping two important details of Larrabee very quiet: the instruction set and the configuration of the finished product. Remember that Larrabee won't ship until sometime in 2009 or 2010, and the first chips aren't even back from the fab yet, so Intel's reluctance to discuss how many cores it will be able to fit on a single Larrabee GPU makes sense.

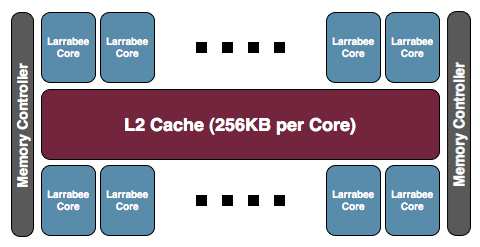

The final product will be some assembly of a multiple of 8 Larrabee cores. We originally expected to see something in the 24-to-32 core range, but that largely depends on the targeted die size.

Intel's own block diagrams indicated two memory controller partitions, but it's unclear whether or not we should read into this. AMD and NVIDIA both use 64-bit memory controllers and simply group multiples of them on a single chip. Given that Intel's Larrabee will be more memory-bandwidth efficient than what AMD and NVIDIA have put out, it's quite possible that Larrabee could have a 128-bit memory interface, although we do believe that'd be a very conservative move (we'd expect a 256-bit interface). Coupled with GDDR5 (which should be both cheaper and highly available by the Larrabee timeframe), however, anything is possible.

All of the cores are connected via a bi-directional ring bus (512 bits in each direction), presumably running at core speed. Given that Larrabee is expected to run at 2GHz+, this is going to be one very high-bandwidth bus. This is half the bit-width of AMD's R600/RV670 ring bus, but the higher clock speed should more than make up the difference.
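To put a rough number on "very high-bandwidth," here is a back-of-the-envelope sketch in Python. It assumes the ring moves its full 512-bit width once per clock in each direction and that the bus runs at exactly 2GHz; Intel has confirmed neither, and the function and constant names are ours, purely for illustration.

```python
def ring_bandwidth_gb_per_s(width_bits: int, clock_ghz: float) -> float:
    """Peak bandwidth of one ring direction in GB/s.

    Assumes one full-width transfer per clock cycle (an assumption,
    not a disclosed Larrabee specification).
    """
    bytes_per_clock = width_bits / 8
    return bytes_per_clock * clock_ghz


RING_WIDTH_BITS = 512     # per direction, as stated in the article
ASSUMED_CLOCK_GHZ = 2.0   # assumption: "2GHz+" taken as exactly 2GHz

per_direction = ring_bandwidth_gb_per_s(RING_WIDTH_BITS, ASSUMED_CLOCK_GHZ)
both_directions = 2 * per_direction

print(f"Per direction:   {per_direction:.0f} GB/s")
print(f"Both directions: {both_directions:.0f} GB/s")
```

Under those assumptions the ring peaks at 128 GB/s in each direction. Real throughput would depend on contention, hop count and protocol overhead, none of which Intel has detailed.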

AMD recently abandoned its ring bus memory architecture, citing savings in die area and the lack of need for such a robust solution. A ring bus, as memory buses go, is fairly straightforward and less complex than other options. The disadvantage is that it requires a lot of wires and delivers high bandwidth to all the clients on the bus whether they need it or not. Of course, if all your memory clients need or can easily use high bandwidth, then that's a win for the ring bus.

Intel may have a better reason for going with the ring bus than AMD did: cache coherency and inter-core communication. Partitioning the L2 and using the ring bus to maintain coherency and facilitate communication could make good use of this massive amount of data-moving power. While Cell also allows for internal communication, Intel's solution of providing direct access to low-latency, coherent L1 and L2 partitions while enabling massive bandwidth behind the L2 cache could result in a much faster and easier-to-program architecture when data sharing is required.

101 Comments

Shinei - Monday, August 4, 2008

Some competition might do nVidia good--if Larrabee manages to outperform nvidia, you know nvidia will go berserk and release another hammer like the NV40 after R3x0 spanked them for a year. Maybe we'll start seeing those price/performance gains we've been spoiled with until ATI/AMD decided to stop being competitive.

Overall, this can only mean good things, even if Larrabee itself ultimately fails.

Griswold - Monday, August 4, 2008

Wake-up call, dumbo. AMD just started to mop the floor with nvidia's products as far as price/performance goes.

watersb - Monday, August 4, 2008

Great article! You compare the Larrabee to a Core 2 Duo: for SIMD instructions, you multiplied by a (hypothetical) 10 cores to show Larrabee at 160 SIMD instructions per clock (IPC). But you show non-vector IPC as 2.

For a 10-core Larrabee, shouldn't that be x10 as well, for 20 scalar IPC?

Adamv1 - Monday, August 4, 2008

I know Intel has been working on ray tracing and I'm really curious how this is going to fit into the picture. From what I remember, ray tracing is highly parallel and scales quite well with more cores, and they were talking about introducing it on 8-core processors, so it seems to me this would be a great platform to try it on.

SuperGee - Thursday, August 7, 2008

How it fits: GPUs from ATI and nV are hardware renderers. They still have a lot of fixed-function units: ROPs, TMUs, blenders, rasterizers, etc. Unified shaders are evolving to become more general purpose, but they aren't fully general purpose.

Larrabee is an exotic, massively multi-core x86 design. It will act just like a multi-core CPU, but optimized for GPU tasks and deployed as a GPU.

So Intel uses a software renderer and will first emulate DirectX/OpenGL on it with its drivers.

nV and ATI are more HAL, with HEL as a backup,

whereas Larrabee is pure HEL. But its parallel power will boost the software method, as it is just like a large bunch of x86 cores.

HEL will run fast on Larrabee, as if it were 'HAL', because the software computing power for such tasks is available with it.

What this means is that, as a graphics engine developer, you get full freedom if you are going to use Larrabee directly.

As they say, first comes a DirectX/OpenGL driver. Later there will also be a CPU driver, so it can easily be targeted directly, like a GPGPU task. Larrabee could even pop up as extra cores in Windows.

This means that whatever you do is like a software solution.

You can make a software renderer based on ray tracing, but a voxel engine could be done too. That software renderer will be accelerated by Larrabee's massively multi-core CPU, which can do GPU work very well but will also boost any software renderer. Of course, it must be fully optimized for Larrabee to get the most out of it, using those vector units and the x86 Larrabee extensions.

NovaLogic could have used this too, for their voxel game engine back in the days of the PIII.

It could accelerate any software renderer which depends heavily on parallel computing.

icrf - Monday, August 4, 2008

Since I don't play many games anymore, that aspect of Larrabee doesn't interest me beyond creating economies of scale so I can buy one cheap. I'm very interested in seeing how well something like POV-Ray or an H.264 encoder can be implemented, and what kind of speed increase it'd see. Sure, these things could be implemented on current GPUs through CUDA/CTM, but that's such a different kind of task that it's not at all quick or easy. If it's significantly simpler on Larrabee, we'd actually see software that supports it sooner.

cyberserf - Monday, August 4, 2008

one word: MATROX

Guuts - Monday, August 4, 2008

You're going to have to use more than one word, sorry... I have no idea what in this article has anything to do with Matrox.

phaxmohdem - Monday, August 4, 2008

What, you mean you DON'T have a Parhelia card in your PC? WTF is wrong with you?

TonyB - Monday, August 4, 2008

but can it play crysis?!