AMD's Radeon HD 4870 X2 - Testing the Multi-GPU Waters

by Anand Lal Shimpi & Derek Wilson on August 12, 2008 12:00 AM EST - Posted in GPUs

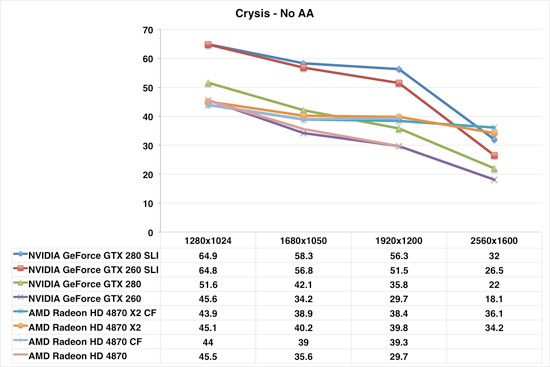

Crysis Performance Scaling

Ahh Crysis. Our familiar friend. Not the greatest game in the world, but it looks really good and still absolutely kills graphics hardware.

In this case things are a little strange. The 4870 X2 and the 2x 4870 X2 CrossFire solutions are heavily system limited below 2560x1600. The NVIDIA SLI options provide a marked performance advantage at 1920x1200 and lower, but drop off very steeply at 2560x1600.

The major factor in a purchasing decision here should be monitor size. If you have a 30" monitor, you might want to consider the 4870 X2 solution, but otherwise it is a much better bet to stay with a single card (and lower settings) or to go with an NVIDIA SLI system.

Not that anyone would want to build a system just to play Crysis. Right?

93 Comments


helldrell666 - Wednesday, August 13, 2008 - link

Anandtech hates DAAMIT. Have you checked the review of the 4870/X2 cards at techreport.com? The cards scored much better than here.

I mean, in Assassin's Creed it's well known that ATI cards do much better than NVIDIA's.

It seems that some sites like anandtech, tweaktown ("nvidiatown"), guru3d, hexus... do have some good relations with NVIDIA.

It seems that marketing these days is turning into fraud.

Odeen - Wednesday, August 13, 2008 - link

With the majority of the gaming population still running 32-bit operating systems and bound by the 4GB RAM limitation, it seems that a 2GB video card (that leaves AT MOST 2GB of system RAM addressable, and, in some cases, only 1.25-1.5GB of RAM) causes more problems than it solves.

Are there tangible benefits to having 1GB of RAM per GPU in modern gaming, or does the GPU bog down before textures require such a gargantuan amount of memory? Wouldn't it really be more sensible to make the 4870x2 a 2x512MB card, which is more compatible with 32-bit OSes?

BikeDude - Wednesday, August 13, 2008 - link

Because you can't be bothered upgrading to a 64-bit OS, the rest of the world should stop evolving?

A 64-bit setup used to be a challenge. Most hw comes with 64-bit drivers now. The question now is: Why bother installing a 32-bit OS on new hardware? You have lots of Win16 apps around that you run on a daily basis?

Odeen - Thursday, August 14, 2008 - link

Actually, no. However, a significant percentage of "enthusiast" gamers at whom this card is aimed run Windows XP (with higher performance and less memory usage than Vista), for which 64-bit support is lackluster.

Vista 64-bit does not allow unsigned non-WHQL drivers to be installed. That means that you cannot use beta drivers, or patched drivers released to deal with the bug-of-the-week.

Since a lot of "enthusiast" gamers update their video (and possibly sound) card drivers on a regular basis, and cannot wait until the latest drivers get Microsoft's blessing, 64-bit OS'es are not an option for them.

I'm not saying that the world should stop evolving, but I am looking forward to a single 64-bit codebase for Windows, where the driver signing restriction can be lifted, since ALL drivers will be designed for 64-bit.

rhog - Wednesday, August 13, 2008 - link

Poor Nvidia, DT and Anandtech have their heads in the sand if they don't see the writing on the wall for Nvidia. The 4870X2 is the fastest video card out there, the 4870 is excellent in its price range and the 4850 is the same in its price range. The AMD chipsets are excellent (now that the SB750 SB is out) and Intel chipsets have always been a cut above; also, they really only support CrossFire, not SLI. Why would anyone buy Nvidia? (This is why they lost a bunch of money last quarter, no surprise.) For example, to get a 280 SLI setup you have to buy an Nvidia chipset for either the AMD or Intel processors (the exception may be Skulltrail for Intel?). Neither Nvidia chipset platform is really better than the equivalents from Intel or AMD, so why would you buy them? Along with this, Nvidia is currently having issues with their chips dying. Again, why would you buy Nvidia? I feel that the writing is on the wall: Nvidia needs to do something quick to survive. What I also find funny is that many people on this site and on others said AMD was stupid for buying ATI, but in the end it seems that Nvidia is the one who will suffer the most. Give Nvidia a biased review, they need all the help they can get!

helldrell666 - Wednesday, August 13, 2008 - link

AMD didn't get over 40% of the x86 market share when they had the best CPUs (Athlon 64/X2). AMD knew back then that beating INTEL ("to get over 50% of the x86 market share") won't happen by just having the best product.

Now, INTEL has the better CPUs and 86% of the CPU market.

So, to fight such a powerful beast you have to change the battleground.

AMD bought ATI to get the parallel processing technology. Why?

To get a new market where there's no INTEL.

Actually, that's not the exact reason.

Lately NVIDIA introduced CUDA, "parallel processing for general processing". And as we saw, parallel processing is much faster than x86 processing in some tasks.

Like in transcoding, the GTX 280 with 933 GFLOPS of processing power (processing power is the number of floating-point operations a GPU can perform per second) was 14 times faster than a QX9770 clocked at 4GHz.

NVIDIA claims that there are many more areas where parallel processing can take over easily.

So, we have two types of processing and each one has its advantages over the other.

What I meant by changing the battleground wasn't the GPU market.

AMD is working right now on the first parallel+x86 processor.

A processor that will include x86 and parallel cores working together to handle everything much faster than an x86 processor, at least in some tasks. So the x86 cores will handle the tasks that they are faster at, and the parallel cores will handle the tasks that they're faster at.

Now, Intel claims that geometry can be handled better via x86 processing.

You can see it as a battleground between INTEL and NVIDIA, but it's actually where AMD can win.

I think that we're going to see not only x86+parallel CPUs but also x86+parallel GPUs. Just put in as much processing power of each type as is needed to make a GPU or a CPU.

I think that AMD is going to change the microprocessor industry to where it can win.

lee1210mk2 - Wednesday, August 13, 2008 - link

Fastest card out - all that matters! - #1

Ezareth - Wednesday, August 13, 2008 - link

I wouldn't be surprised to see the test setup done on a P45 much like Tweaktown did for their 4870X2 CF setup. Doesn't anyone realize that 2 x PCIe x8 is not the same as 2 x PCIe x16? That is the only thing that really explains the low scoring of the CF setups here.

Crassus - Wednesday, August 13, 2008 - link

I think this is actually a positive sign when viewed from a little further away. Remember all the hoopla about "native quad core" with AMD's Phenom? They stuck with it, and they're barely catching up with Intel (and probably lose out big in yield).

Here Sideport apparently doesn't bring the expected benefits - so they cut it out and moved on. No complaints from me here - at the end of the day the performance counts, not how you get there. And if disabling it lowers the power requirements a bit, with the power draw Anand measured I don't think it's an unreasonable choice to disable it. And if it makes the board cheaper, again, I don't mind paying less. :D

And if AMD/ATI chooses to enable it one or two years down the road - by then we've probably moved on by one or two generations, and the gain is negligible compared to just replacing them.

[rant]

At any rate, I'm happy with my 7900 GT SLI - and I can run the whole setup with a 939 4200+ on a 350 W PSU. If power requirements continue to go up like that, I see the power grid going down if someone hosts a LAN party in my block. We already had brownouts this summer with multiple ACs kicking in at the same time, and it looks like PC PSUs are moving into the same power draw ballpark. R&D seriously needs to look into GPU power efficiency.

[/rant]

My $.02

drank12quartsstrohsbeer - Wednesday, August 13, 2008 - link

My guess (before the reviews came out) was that the sideport would be used with the unified framebuffer memory. When the unified memory feature didn't work out, there was no need for it.

I wonder if the non-functioning unified memory was due to technical problems, or if it was disabled for strategic reasons... i.e. since this card already beats Nvidia's, why use it. This way they can make it a feature of the FireGL and GPGPU cards only.