ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

by Anand Lal Shimpi & Derek Wilson on April 2, 2009 12:00 AM EST - Posted in GPUs

New Drivers From NVIDIA Change The Landscape

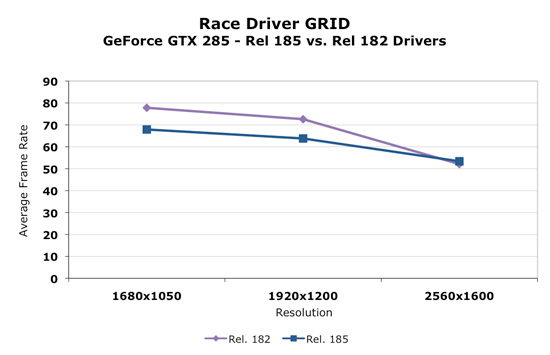

Today, NVIDIA will release its new 185 series driver. This driver not only enables support for the GTX 275, but also affects performance across NVIDIA's lineup in a good number of games. We retested our NVIDIA cards with the 185 driver and saw some very interesting results. For example, take a look at before and after performance with Race Driver: GRID.

As we can clearly see, in the cards we tested, performance decreased at lower resolutions and increased at 2560x1600. This seemed to be the biggest example, but we saw flattened resolution scaling in most of the games we tested. This definitely could affect the competitiveness of the part depending on whether we are looking at low or high resolutions.

Some trade-off was made to improve performance at ultra high resolutions at the expense of performance at lower resolutions. The cause could be anything from something simple, like added driver overhead (and thus more CPU limitation), to something much more complex. We haven't been told exactly what creates this situation, though. With higher end hardware, this decision makes sense, as resolutions lower than 2560x1600 tend to perform fine. 2560x1600 is more GPU limited and could benefit from a boost in most games.

Significantly different resolution scaling characteristics can be appealing to different users. An AMD card might look better at one resolution, while the NVIDIA card could come out on top with another. In general, we think these changes make sense, but it might be nicer if the driver automatically figured out what approach was best based on the hardware and resolution running (and thus didn't degrade performance at lower resolutions).

In addition to the performance changes, we see the addition of a new feature. In the past we've seen the addition of filtering techniques, optimizations, and even dynamic manipulation of geometry to the driver. Some features have stuck and some just faded away. One of the most popular additions to the driver was the ability to force Full Screen Antialiasing (FSAA), enabling smoother edges in games. This feature was more important at a time when most games didn't have an in-game way to enable AA. The driver took over and implemented AA even in games that didn't offer an option to adjust it. Today the opposite is true and most games allow us to enable and adjust AA.

Now we have the ability to enable a feature, which isn't available natively in many games, that could either be loved or hated. You tell us which.

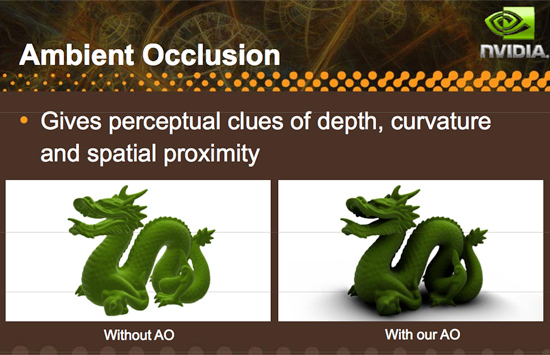

Introducing driver enabled Ambient Occlusion.

What is Ambient Occlusion, you ask? Well, look into a corner or around trim or anywhere that looks concave in general. These areas will be a bit darker than the surrounding areas (depending on the depth and other factors), and NVIDIA has included a way to simulate this effect in its 185 series driver. Here is an example of what AO can do:

Here's an example of what AO generally looks like in games:

This, as with other driver enabled features, significantly impacts performance and might not be able to run on all games or at all resolutions. Ambient Occlusion may be something some gamers like and some do not depending on the visual impact it has on a specific game or if performance remains acceptable. There are already games that make use of ambient occlusion, and some games that NVIDIA hasn't been able to implement AO on.

There are different methods to enable the rendering of an ambient occlusion effect, and NVIDIA implements a technique called Horizon Based Ambient Occlusion (HBAO for short). The advantage is that this method is likely very highly optimized to run well on NVIDIA hardware, but on the downside, developers limit the ultimate quality and technique used for AO if they leave it to NVIDIA to handle. On top of that, if a developer wants to guarantee that the feature works for everyone, they would need to implement it themselves, as AMD doesn't offer a parallel solution in their drivers (in spite of the fact that their hardware is easily capable of running AO shaders).
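To make the basic idea concrete, here is a minimal sketch of a depth-based occlusion pass. This is not HBAO and not NVIDIA's implementation; it is a much cruder neighbor-comparison approach, and the function name `ambient_occlusion` and its `radius` and `strength` parameters are our own hypothetical choices for illustration. It only shows the principle described above: a pixel that sits deeper than its surroundings gets darkened.

```python
def ambient_occlusion(depth, radius=1, strength=1.0):
    """Return a per-pixel brightness factor in [0, 1] for a 2D depth map.

    depth[y][x] is the distance from the camera (larger = farther away).
    A pixel whose neighbours are closer to the camera sits in a concave
    region (a corner, a crease) and is darkened accordingly.
    Illustrative only: this is NOT Horizon Based Ambient Occlusion.
    """
    h, w = len(depth), len(depth[0])
    out = [[1.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            occlusion = 0.0
            count = 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    if dx == 0 and dy == 0:
                        continue  # skip the pixel itself
                    ny, nx = y + dy, x + dx
                    if 0 <= ny < h and 0 <= nx < w:
                        # Positive only when the neighbour is closer to
                        # the camera than the current pixel.
                        occlusion += max(0.0, depth[y][x] - depth[ny][nx])
                        count += 1
            average = occlusion / count if count else 0.0
            out[y][x] = max(0.0, 1.0 - strength * average)
    return out


# A flat surface with a single recessed pixel in the middle.
pit = [
    [1.0, 1.0, 1.0],
    [1.0, 2.0, 1.0],
    [1.0, 1.0, 1.0],
]
shading = ambient_occlusion(pit, strength=0.5)
```

In this toy case only the recessed center pixel is darkened, while the flat pixels around it keep full brightness. A real implementation runs as a shader over the depth buffer and samples far more carefully, which is where the performance cost comes from.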

We haven't done extensive testing with this feature yet, either looking for quality or performance. Only time will tell if this addition ends up being gimmicky or really hits home with gamers. And if more developers create games that natively support the feature we wouldn't even need the option. But it is always nice to have something new and unique to play around with, and we are happy to see NVIDIA pushing effects in games forward by all means possible even to the point of including effects like this in their driver.

In our opinion, lighting effects like this belong in engine and game code rather than the driver, but until that happens it's always great to have an alternative. We wouldn't think it a bad idea if AMD picked up on this and did it too, but whether it is more worthwhile to do this or to spend that energy encouraging developers to adopt this and comparable techniques for more complex lighting is totally up to AMD. And we wouldn't fault them either way.

294 Comments


SiliconDoc - Monday, April 6, 2009 - link

Oh great, a whole other sku to lose another billion a year with. Wonderful. Any word on the new costs of the bigger cpu and expensive capacitors and vrm upgrades?

Ahh, nevermind, heck, this ain't a green greedy monster card, screw it if they lose their shirts making it - I mean there's no fantasy satisfaction there.

Get back to me on the nvidia costs - so I can really dream about them losing money.

itbj2 - Thursday, April 2, 2009 - link

I am not sure about you guys but NVIDIA has problems with their drivers as well. I have a 9400GT and a 8800 GTS in my machine and the new drivers can't make the two work well enough for my computer to come out of hibernation without Windows XP crashing every so often. This used to work just fine before I upgraded the drivers to the latest version.

FishTankX - Thursday, April 2, 2009 - link

For anyone who REALLY wants temp data..

Firingsquad 4890/GTX275 review

http://www.firingsquad.com/hardware/ati_radeon_489...

Idle

GTX 260 216 (45C)

GTX 285 (46C)

GTX 275 (47C)

4890 1GB (51C)

4870 (60C)

Load

4890 1GB (64C)

GTX 260 216 (64C)

GTX 275 (68C)

GTX 285 (70C)

4870 1GB (80C)

Power consumption

(Total system power)

Idle

GTX 275 (143W)

4890 (172W)

Load

4890 (276W)

GTX 275 (279W)

There, now you can can it! :D

SiliconDoc - Monday, April 6, 2009 - link

There it is again, 30 watts less idle for nvidia, and only 3 watts more in 3d. NVIDIA WINS - that's why they left it out - they just couldn't HANDLE it....

So, if you're 3d gaming 91% of the time, and only 2d surfing 9% of the time, the ati card comes in at equal power usage...

Otherwise, it LOSES - again.

I doubt the red raging reviewers can even say it. Oh well, thanks for posting numbers.
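For anyone who wants to check the 91% break-even figure, here is a minimal sketch using the FiringSquad total-system numbers quoted above. It assumes average draw is a simple time-weighted mix of the idle and load figures, which is a simplification; the function names are hypothetical.

```python
# Total system power in watts, as quoted from the FiringSquad review above.
idle = {"GTX 275": 143, "4890": 172}
load = {"GTX 275": 279, "4890": 276}


def average_power(card, load_fraction):
    """Time-weighted average draw, assuming only two states: idle and load."""
    return load_fraction * load[card] + (1 - load_fraction) * idle[card]


def break_even_load_fraction():
    """Fraction of time under load at which both systems draw the same average."""
    idle_gap = idle["4890"] - idle["GTX 275"]  # 29 W in favour of the GTX 275
    load_gap = load["GTX 275"] - load["4890"]  # 3 W in favour of the 4890
    return idle_gap / (idle_gap + load_gap)


fraction = break_even_load_fraction()
print(f"Break-even at {fraction:.1%} of time under load")
```

With these figures the idle gap works out to 29 W rather than a round 30 W, and the two systems average the same draw when roughly 91% of the time is spent under load.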

7Enigma - Thursday, April 2, 2009 - link

Can anyone confirm whether or not the heatsink/fan has been altered between the 4870 and the 4890? I'm interested to know if the decreased temps of the higher clocked 4890 are due in part to a better cooling mechanism, or strictly from a respin/binning.

Warren21 - Thursday, April 2, 2009 - link

Yes, the cooler has been slightly revised. I believe it's a combination of both. I'll admit I'm a bit disappointed AT didn't explore the differences between the HD 4870 and the 4890 more in-depth.

Comparison:

http://www.hardwarecanucks.com/forum/hardware-canu...

bill3 - Thursday, April 2, 2009 - link

"It looks like NVIDIA might be the marginal leader at this new price point of $250." you wrote

But looking at your own benches..

Since you run 3 resolutions of your benches, lets reasonably declare that the card that can win 2 or more of them "wins" that game. In that case 4890 wins over 275 in: COD WaW, Warhead, Fallout 3, Far Cry 2, GRID, and Left 4 Dead. 275 wins over 4890 in Age of Conan. Either with AA or without the results stay the same.

The only way I think you can contend 275 has an edge is if you place a premium on the 2560X1600 results, where it seems to edge out the 4890 more often. However, it's often at unplayable framerates. Further I don't see a reason to place undue importance on the 2560X benches, the majority of people still game on 1680X1050 monitors, and as you yourself noted, Nvidia released a new driver that trades off performance at low res for high res, which I think is arguably neither here nor there, not a clear advantage at all.

Even at 2560 (using the AA bar graphs because it's often difficult to spot the winner at 2560 on the line graphs), where the 275 wins 5 and loses 2, the margins are often so ridiculously close it's essentially a tie. 275 takes AOC, COD WaW, and L4D by a reasonable margin at the highest res, while the 4890 wins Fallout3 and GRID comfortably. Warhead and Far Cry 2 are within .7 FPS although nominally wins for 275. That's a difference of all of 3-2 in materially relevant wins, or exactly 1 game. But keep in mind again that 4890 is fairly clearly winning the lower reses more often, and to me it's wrong to state 275 has the edge.

SiliconDoc - Monday, April 6, 2009 - link

The funny thing is, if you're in those games and constantly looking at your 5-10 fps difference at 50-60-100-200 fps - there's definitely something wrong with you.

I find reviews that show LOWEST framerate during game when it's a very high resolution and a demanding game useful - usually more useful when the playable rate is hovering around 30 or below 50 (and dips a ways below 30).

Otherwise, you'd have to be an IDIOT to base your decision on the very often, way over playable framerates in the near equally matched cards. WE HAVE A LOT OF IDIOTS HERE.

Then comes the next conclusion, or the follow on. Since framerates are at playable, and are within 10% at the top end, the things that really matter are : game drivers / stability , profiles , clarity, added features, added box contents (a free game one wants perhaps).

Almost ALWAYS, Nvidia wins that - with the very things this site continues to claim simply do not matter, and should not matter - to ANYONE they claim - in effect.

I think it's one big fat lie, and they most certainly SHOULD know it.

Note now, that NVidia - having released their, according to this site, high resolution driver tweak for 2560xX , wins at that resolution, the review calmly states it doesn't matter much, most people don't play at that resolution - and recommend ati now instead.

Whereas just prior, for MONTHS on end, when ati won only the top resolution, and NVidia took the others, this same site could not stop ranting and raving that ATI won it all and was the only buy that made sense.

It's REALLY SICK.

I pointed out their 30" monitor for ATI bias months ago, and they continued with it - but now they agree with me - when ATI loses at that rezz... LOL

Yeah, they're schesiters. Ain't no doubt about it.

Others notice as well - and are saying things now.

I see Jarred is their damage control agent.

JonnyDough - Thursday, April 2, 2009 - link

Why not just use RivaTuner or ATI Tool to underclock OC'd cards?

Jamahl - Thursday, April 2, 2009 - link

How can the conclusion be that the 275 is the leader at the price point? The benchmarks are clearly in favour of the 4890 apart from the extreme end 2560x1600.