Digging Deeper: Galloping Horses Example

Rather than pull out a bunch of math and traditional timing diagrams, we've decided to put together a more straightforward presentation. The diagrams we will use show the frames of an actual animation that would be generated over time, as well as what would be seen on the monitor for each method. Hopefully this will help illustrate the quantitative and qualitative differences between the approaches.

Our example is a fabricated one (based on an animation courtesy of Wikipedia) of a "game" rendering a horse galloping across the screen. The basics of this timeline are that our game is capable of rendering at 5 times our refresh rate (it can render 5 different frames before a new one gets swapped to the front buffer). The perfectly consistent frame rate is not realistic either, as in a real game some frames will take longer than others. We cut down on these and other variables for simplicity's sake. We'll talk about timing and lag in more detail based on a 60Hz refresh rate and 300 FPS performance, but we didn't want to clutter the diagram too much with times and labels. Obviously this is a theoretical example, but it does a good job of showing the idea of what is happening.

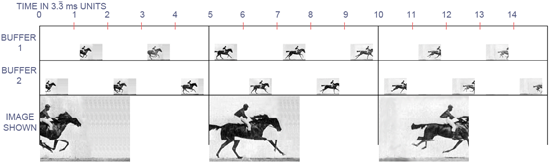

First up, we'll look at double buffering without vsync. In this case, the buffers are swapped as soon as the game is done drawing a frame. This immediately preempts what is being sent to the display at the time. Here's what it looks like in this case:

Good performance but with quality issues.

The timeline is labeled 0 to 15, and for those keeping count, each step is 3 and 1/3 milliseconds. The timeline for each buffer has a picture on it in the 3.3ms interval during which a frame is completed, corresponding to the position of the horse and rider at that moment in real time. The large pictures at the bottom of the image represent the image displayed at each vertical refresh on the monitor. The only images we actually see are the frames that get sent to the display. The benefit of all the other frames is to minimize input lag in this case.
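For readers who do want the arithmetic behind the timeline, here is a minimal sketch using the 60Hz and 300 FPS figures from our example. The function and variable names are ours and purely illustrative.

```python
from fractions import Fraction

REFRESH_HZ = 60   # monitor refresh rate used in the article's example
RENDER_FPS = 300  # rate at which the example "game" finishes frames

def timeline(refresh_hz, render_fps, steps):
    """Return (step_ms, refresh_ms, frames_per_refresh, refreshes_covered)."""
    step_ms = Fraction(1000, render_fps)      # one timeline step = one rendered frame
    refresh_ms = Fraction(1000, refresh_hz)   # time between vertical refreshes
    frames_per_refresh = refresh_ms / step_ms
    refreshes_covered = steps * step_ms / refresh_ms
    return step_ms, refresh_ms, frames_per_refresh, refreshes_covered

step_ms, refresh_ms, per_refresh, covered = timeline(REFRESH_HZ, RENDER_FPS, steps=15)
print(f"step: {float(step_ms):.2f} ms, refresh: {float(refresh_ms):.2f} ms")
print(f"frames per refresh: {per_refresh}, refreshes in 15 steps: {covered}")
```

The 15 steps on the timeline therefore span 50ms, or three vertical refreshes, with five rendered frames per refresh.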

We can certainly see, in this extreme case, what bad tearing could look like. For this quick and dirty example, I chose only to composite three frames of animation, but there could be more or fewer tears in reality. The number of different frames drawn to the screen corresponds to the length of time it takes for the graphics hardware to send the frame to the monitor. This will happen in less time than the entire interval between refreshes, but I'm not well versed enough in monitor technology to know how long that is. I sort of threw my dart at about half the interval being spent sending the frame for the purposes of this illustration (and thus parts of three completed frames are displayed). If I had to guess, I think I overestimated the time it takes to send a frame to the display.
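The relationship between send time and tearing can be sketched in a few lines. This assumes, as in our illustration, that a buffer swap happens at every multiple of the frame time and that sending the image starts right at a swap; the half-interval send time is the same guess used for the diagram, not a measured figure.

```python
from fractions import Fraction
from math import ceil, floor

def frames_in_scanout(frame_ms, scanout_ms, start_ms=Fraction(0)):
    """Count distinct frames that are in the front buffer at some point while
    one refresh is being sent to the monitor (no vsync, swap on completion).

    Assumes a swap happens at every multiple of frame_ms.
    """
    end_ms = start_ms + scanout_ms
    first = floor(start_ms / frame_ms)    # frame in front when scanout begins
    last = ceil(end_ms / frame_ms) - 1    # frame in front just before it ends
    return last - first + 1

frame_ms = Fraction(1000, 300)
refresh_ms = Fraction(1000, 60)
count = frames_in_scanout(frame_ms, scanout_ms=refresh_ms / 2)
print(count, "frames on screen,", count - 1, "tear lines")
```

Under these assumptions, a shorter send time (say a quarter of the interval) leaves fewer frames on screen and fewer tears, which is why overestimating the send time overstates the tearing.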

For the above, the framerate reported by FRAPS would be 300 FPS, but the number of full images that get flashed up on the screen can never exceed the refresh rate (in this example, 60 frames every second). The latency between when a frame is finished rendering and when it starts to appear on screen (this is input latency) is less than 3.3ms.

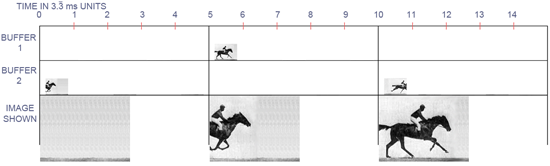

When we turn on vsync, the tearing goes away, but our real performance goes down and input latency goes up. Here's what we see.

Good quality, but bad performance and input lag.

If we consider each of these diagrams to be systems rendering the exact same thing starting at the exact same time, we can see how far "behind" this rendering is. There is none of the tearing that was evident in our first example, but we pay for that with outdated information. In addition, both the actual framerate and the reported framerate are 60 FPS. The computer ends up doing a lot less work, of course, but it is at the expense of realized performance despite the fact that we cannot actually see more than the 60 images the monitor displays every second.

Here, the price we pay for eliminating tearing is an increase in latency from a maximum of 3.3ms to a maximum of 13.3ms in our example. In general, with vsync on a 60Hz monitor, the maximum latency between when a rendering is finished and when it is displayed is a full 1/60 of a second (16.67ms), but the effective latency that can be incurred will be higher. Since no more drawing can happen after the next frame to be displayed is finished until it is swapped to the front buffer, the real effect of latency when using vsync will be more than a full vertical refresh when rendering takes longer than one refresh to complete.
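The 13.3ms figure falls out of simple arithmetic. The sketch below is a simplified model, assuming rendering starts right at a refresh and the game then stalls until the swap at the next refresh; the 25ms frame is a hypothetical slower case we added to show what happens when rendering takes longer than one refresh.

```python
from fractions import Fraction
from math import ceil

def vsync_double_buffer(render_ms, refresh_hz=60):
    """Return (wait_ms, displayed_fps) for double buffering with vsync.

    Assumes rendering starts right at a refresh and that the game stalls
    once the back buffer is finished until the swap at the next refresh.
    """
    refresh_ms = Fraction(1000, refresh_hz)
    refreshes_needed = ceil(Fraction(render_ms) / refresh_ms)
    wait_ms = refreshes_needed * refresh_ms - Fraction(render_ms)
    displayed_fps = Fraction(refresh_hz, refreshes_needed)
    return wait_ms, displayed_fps

fast_wait, fast_fps = vsync_double_buffer(Fraction(1000, 300))  # the article's 300 FPS game
slow_wait, slow_fps = vsync_double_buffer(25)                   # hypothetical 25 ms frame
print(f"300 FPS game: waits {float(fast_wait):.1f} ms, shows {fast_fps} FPS")
print(f"25 ms frames: waits {float(slow_wait):.1f} ms, shows {slow_fps} FPS")
```

In this model the slower game misses a refresh on every frame, so it is displayed at half the refresh rate even though it could render 40 frames per second on its own.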

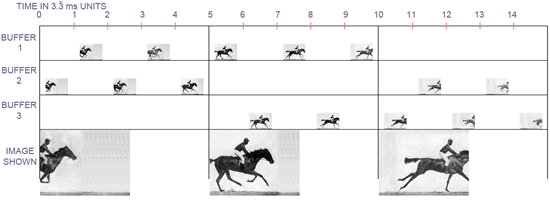

Moving on to triple buffering, we can see how it combines the best advantages of the two double buffering approaches.

The best of both worlds.

And here we are. We are back down to a maximum of 3.3ms of input latency, but with no tearing. Our actual performance is back up to 300 FPS, but this may not be reported correctly by a frame counter that only monitors front buffer flips. Again, only 60 frames actually get pasted up to the monitor every second, but in this case, those 60 frames are the most recent frames fully rendered before the next refresh.
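To make "the most recent frame fully rendered before the next refresh" concrete, here is a rough sketch of which frame gets flipped to the front at each refresh. It assumes the renderer never stalls and that frames finish at a fixed cadence; the 1ms phase offset is an arbitrary value we picked so that frames do not finish exactly on a refresh.

```python
from fractions import Fraction
from math import floor

def newest_frame_at_refresh(refresh_index, frame_ms, refresh_ms, phase_ms=Fraction(0)):
    """Return (frame_number, age_ms) of the frame triple buffering would flip to.

    Assumes frame k finishes at phase_ms + k * frame_ms and the renderer
    never stalls, alternating between the two back buffers.
    """
    t = refresh_index * refresh_ms - phase_ms
    frame_number = floor(t / frame_ms)      # last frame completed by this refresh
    age_ms = t - frame_number * frame_ms    # how long it has been waiting
    return frame_number, age_ms

frame_ms = Fraction(1000, 300)
refresh_ms = Fraction(1000, 60)
shown = [newest_frame_at_refresh(n, frame_ms, refresh_ms, phase_ms=Fraction(1))
         for n in range(1, 4)]
for frame_number, age_ms in shown:
    print(f"frame {frame_number} shown, {float(age_ms):.2f} ms old")
```

Whatever the phase, the age of the displayed frame in this model stays below one frame time (3.3ms here), which is where the latency bound comes from.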

While there may be parts of the frames in double buffering without vsync that are "newer" than corresponding parts of the triple buffered frame, the price that is paid for that is potential visual corruption. The real kicker is that, if you don't actually see tearing in the double buffered case, then those partial updates are not different enough from the previous frame(s) to have really mattered visually anyway. In other words, only when you see the tear are you really getting any useful new information. But how useful is that new information if it only comes with tearing?

184 Comments

greylica - Friday, June 26, 2009 - link

I always use triple buffering in OpenGL apps, and the performance is superb, until Vista/7 cames and crippled my hardware with Vsync enabled by default. This sh*t of hell Microsoft invention crippled my flawless GTX 285 to a mere 1/3 of the performance in OpenGL in the two betas I have tested.

Thanks to GNU/Linux I have at least one chance to be free of the issue and use my 3D apps with full speed.

The0ne - Friday, June 26, 2009 - link

Love your comment lol

JonP382 - Friday, June 26, 2009 - link

I always avoided triple buffering because it introduced input lag for me. I guess the implementation that ATI and Nvidia have for OpenGL is not the same as this one. Too bad. :(

I'm going to try triple buffering in L4D and TF2 later today, but I'm just curious if their implementation is the same as the one promoted in this article?

DerekWilson - Friday, June 26, 2009 - link

I haven't spoken with Valve, but I suspect their implementation is good and should perform as expected.

JonP382 - Friday, June 26, 2009 - link

Same old story - I get even more input lag on triple buffering than on double buffering. :(

JonP382 - Friday, June 26, 2009 - link

I should say that triple buffering introduced additional lag. Vsync itself introduces an enormous amount of input lag and drives me insane. But I do hate tearing...

prophet001 - Friday, June 26, 2009 - link

one of the best articles i've read on here in a long time. i knew what vsync did as far as degrading performance (only in that it waited for the frame to be complete before displaying) but i never knew how double and triple buffering actually worked. triple buff from here on out

4.9 out of 5.0 :-D

(but only b/c nobody gets a 5.0 lol)

thank you

Preston

danielk - Friday, June 26, 2009 - link

This was an excellent article!

While im a gamer, i dont know much about the settings i "should" be running for optimal FPS vs. quality. I've run with vsync on as thats been the only remedy ive found for tearing, but had it set to "always on" in the gfx driver, as i didnt know better.

Naturally, triple buffering will be on from here on.

I would love to see more info about the different settings(anti aliasing etc) and their impact on FPS and image quality in future articles.

Actually, if anyone has a good guide to link, i would appreciate it!

Regards,

Daniel

DerekWilson - Friday, June 26, 2009 - link

keep in mind that you can't force triple buffering on in DirectX games from the control panel (yet - hopefully). It works for OpenGL though.

For DX games, there are utilities out there that can force the option on for most games, but I haven't done indepth testing with these utilities, so I'm not sure on the specifics of how they work/what they do and if it is a good implementation.

The very best option (as with all other situations) is to find an in-game setting for triple buffering, which many developers do not include (but hopefully that trend is changing).

psychobriggsy - Friday, June 26, 2009 - link

I can see the arguments for triple buffering when the rendered frame rate is above the display frame rate. Of course a lot of work is wasted with this method, especially with your 300fps example.

However I've been drawing out sub-display-rate examples on paper here to match your examples, and it's really not better than VsyncDB apart from the odd frame here and there.

What appears to be the best solution is for a game to time each frame's rendering (on an ongoing basis) and adjust when it starts rendering the frame so that it finishes rendering just before the Vsync. I will call this "Adaptive Vsync Double Buffering", which uses the previous frame rendering time to work out when to render the next frame so that what is displayed is up to date, but work is reduced.

In the meantime, lets work on getting 120fps monitors, in terms of the input signal. That would be the best way to reduce input lag in my opinion.