Of the GPU and Shading

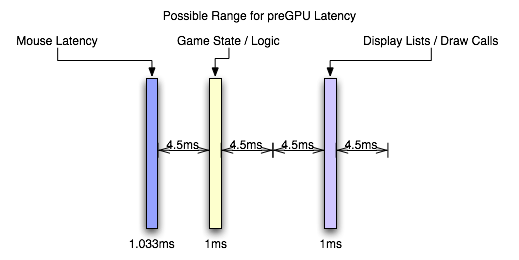

This is my favorite part, really. After the CPU has started sending draw calls to the graphics card, the GPU can begin work on actually rendering the frame containing the input that was generated somewhere in the vicinity of 3ms to 21ms ago, depending on the software (add another 1ms to 7ms for a slower mouse). Modern, complex games will tend to push toward the long end of that spectrum, while older games (or games that aren't designed to do a lot of realistic simulation, like twitch shooters) will have lower latency.

Again, the actual latency during this stage depends greatly on the complexity of the scene and the techniques used in the game.

These days, geometry processing and vertex shading tend to be pretty fast (geometry shading is slower but less frequently used), thanks to features like instancing and the fact that the majority of detail is introduced via the pixel shader (which is really a fragment shader, but we'll dispense with the nitpicking for now). If the use of tessellation catches on after the introduction of DX11, we could see even less actual time spent on geometry, as the current level of detail could be achieved with fewer triangles (or we could improve quality with the same load). This step could still take a millisecond or two with modern techniques.

When it comes to actual fragment generation from the geometry data (called rasterization), the fixed function hardware and early z / z culling techniques used make this step pretty fast (yet this can be the limiting factor in how much geometry a GPU can realistically handle per frame).

Most of our time will likely be spent processing pixel shader programs. This is the step where every pinpoint spot on every triangle that falls behind the area of a screen space pixel (these pinpoint spots are called fragments) is processed and its color determined. During this step, texture maps are filtered and applied, and work is done on those textures based on things like the fragment's location, the angle of the underlying triangle to the screen, and constants set for the fragment. Lighting is also part of the pixel shading process.

Lighting tends to be one of the heaviest loads in a heavily loaded portion of the pipeline. Realistic lighting can be very GPU intensive. Getting into the specifics is beyond the scope of this article, but this lighting alone can take a good handful of milliseconds for an entire frame. The rest of the pixel shading process will likely also take multiple milliseconds.

After it's all said and done, with the pixel shader as the bottleneck in modern games, we're looking at something like 6ms to 25ms. In fact, the latency of the pixel shaders can hide a lot of the processing time of other parts of the GPU. For instance, pixel shaders can start executing before all the geometry is processed (pixel shaders are kicked off as fragments start coming out of the rasterizer). The color/z hardware (render outputs, render backends or ROPs, depending on what you want to call them) can start processing final pixels in the framebuffer while the pixel shader hardware is still working on the majority of the scene. The only real latency that is added by the geometry/vertex processing portion of the pipeline is the latency that happens before the first pixels begin processing (which isn't huge). The only real latency added by the ROPs is the processing time for the last batch of pixels coming out of the pixel shaders (which is usually not huge unless really complicated blending and stencil techniques are used).

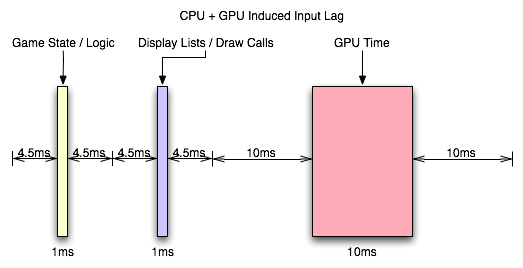

With the pixel shader as the bottleneck, we can expect that the entire GPU pipeline will add somewhere between 10ms and 30ms. This is if we consider that most modern games, at the resolutions people run them, produce something between 33 FPS and 100 FPS.
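The 10ms to 30ms range follows directly from the framerate range: frame time is just the reciprocal of framerate. A minimal sketch of that conversion (the function name `frame_time_ms` is ours, purely for illustration):

```python
def frame_time_ms(fps: float) -> float:
    """Return the time spent on each frame, in milliseconds, at a given framerate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return 1000.0 / fps


# The two ends of the framerate range quoted above, plus a common middle value.
for fps in (33, 60, 100):
    print(f"{fps} FPS -> {frame_time_ms(fps):.1f} ms per frame")
```

At 100 FPS the GPU spends 10ms per frame, and at 33 FPS it spends roughly 30ms.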

But wait, you might say, how can our framerate be 33 to 100 FPS if our graphics card latency is between 10ms and 30ms: don't the input and CPU time latencies add to the GPU time to lower framerate?

The answer is no. When we are talking about the total input lag, then yes we do have to add these latencies together to find out how long it has been since our input was gathered. After the GPU, we are up to something between 13ms and 58ms of input lag. But the cool thing is that human response happens in parallel to input gathering which happens in parallel to CPU time spent processing game logic and draw calls (which can happen in parallel to each other on multicore CPUs) which happens in parallel to the GPU rendering frames. There is a sequential path from input to the screen, but we can almost look at this like a heavily pipelined path where each stage operates in parallel on a different upcoming frame.

So we have the GPU rendering the previous frame while simulation and game logic are executing and input is being gathered for the next frame. In this way, the CPU can be ready to send more draw calls to the GPU as soon as the GPU is ready (provided only that we are not CPU limited).
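To make the distinction concrete, here is a rough sketch that treats the path from input to finished frame as an idealized pipeline. The per-stage times are hypothetical values picked from within the ranges discussed above, and the model assumes the stages overlap perfectly, which real games only approximate. Input lag is the sum of the stage times, while the interval between finished frames is set by the slowest stage alone.

```python
def pipeline_summary(stage_ms):
    """Summarize an idealized, fully overlapped pipeline.

    stage_ms maps a stage name to the time (in ms) that stage spends on one frame.
    Returns (input lag in ms, time between finished frames in ms, resulting FPS).
    """
    latency_ms = sum(stage_ms.values())         # one frame passes through every stage in turn
    frame_interval_ms = max(stage_ms.values())  # the slowest stage paces the whole pipeline
    return latency_ms, frame_interval_ms, 1000.0 / frame_interval_ms


# Hypothetical stage times, not measurements.
stages = {"input": 1.0, "cpu": 20.0, "gpu": 30.0}
latency, interval, fps = pipeline_summary(stages)
print(f"input lag so far: {latency:.0f} ms")
print(f"frame interval:   {interval:.0f} ms ({fps:.1f} FPS)")
```

With these numbers the frame on the GPU carries input that is about 51ms old, yet a new frame still finishes every 30ms, so framerate stays at roughly 33 FPS.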

So what happens after the frame is finished? The easy answer is a buffer swap and scanout. The subtle answer is mounds of potential input lag.

85 Comments

DerekWilson - Monday, July 20, 2009 - link

This is how we disable vsync.

We got the same results in lag with present interval set to either 1 or 0 ... it really didn't make a measurable difference in our testing.

DerekWilson - Monday, July 20, 2009 - link

to clarify a little, this is why i think that Gamebryo (or Bethesda) must do some sort of internal timing that strictly enforces framerate, CPU time, or something based on some other factor than present interval.

NetSoerfer - Monday, July 20, 2009 - link

On page 5, the fifth paragraph begins with "If our frametime is just longer than 16.67ms...". The next paragraph is meant to describe the opposite but begins with "When framerate is lower than refresh rate...".

Longer frametime equals lower refresh rate. The second paragraph should read "When framerate is higher than refresh rate..." or "When frametime is shorter than refresh rate...".

DerekWilson - Monday, July 20, 2009 - link

No, the next paragraph is not meant to describe the opposite case ...

The first paragraph you cite describes the effects of double-buffered vsync on framerates both lower than refresh (first half of the paragraph) and higher than refresh (second half of the paragraph).

The second paragraph you cite describes the effects of a 1 frame flip-queue with vsync or triple buffering on framerates that are lower than refresh.

Sorry if that wasn't clear.

Per Hansson - Sunday, July 19, 2009 - link

Hi, I tried your recommendation with "overclocking" the mouse (erm, we are really just changing the speed of the USB port, not the mouse right?)

Anyway, I've got a MS IntelliMouse Explorer v3.0

When I run "Direct Input Mouse Rate" it shows my lag as 8ms at 125hz...

So I used the driver hidusbf and changed the frequency to 1000hz, this resulted in 1.4ms and 700hz with my mouse...

But now to begin with I had the mouse speed set to max in the Intellipoint mouse setup, and also "enhance pointer precision" enabled...

And at 125hz / 8ms lag that gave me a good speed, a bit slower than I had in Win2K but still acceptable (current os is XP x64)

But now with my "overclocked" mouse the movement is waaay to slow, I need a bigger mousepad to move the mousepointer all across my monitor

Is this intended or just due to MS drivers or whatever?

I was planning on getting the Microsoft Habu gaming mouse developed by Razer because the current iteration of the Explorer 3.0 is a POS with crap microbuttons that keep failing, think I've been through 3 of these in the last 2 years, even replaced them with ones bought at Elfa but they also failed after a couple months

Anyway, will all mouse have this speed issue at high mouse rates? (above 125hz)

MarktheC - Monday, July 27, 2009 - link

Re: "But now with my "overclocked" mouse the movement is waaay to slow, I need a bigger mousepad to move the mousepointer all across my monitor. Is this intended or just due to MS drivers or whatever?"

Yes, this is "how it works" (but it can be fixed).

What's happening is this: At 125 Hz and a given on-the-pad mouse speed, each mouse report might be returning (say) 16 counts/report.

The XP/Vista/7 "Enhance pointer precision" code uses the "16" value to lookup an acceleration curve (SmoothMouseXCurve/SmoothMouseYCurve) and apply a scaling factor to the mouse input (approx x 1.4 when the mouse count is 16). The pointer moves ~1.4 * 16 = ~22 pixels.

If the report rate is changed to 1000 Hz, each mouse report returns 2, 2, 2, 2, 2, 2, 2, 2 instead (same gross movement of 16, but spread over 8 times as many reports). Now the XP/Vista/7 "Enhance pointer precision" code uses "2" to lookup the acceleration curve and returns a scaling factor (~0.6 when the mouse count is 2). The pointer moves ~0.6 * 2 * 8 = ~9 pixels and you perceive the mouse as slow.
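The arithmetic in the two cases above can be checked with a small sketch. The scaling factors 1.4 and 0.6 are the approximate values quoted in this comment, not values read from a real acceleration curve, and the function name `pointer_pixels` is made up for illustration.

```python
def pointer_pixels(counts_per_report, reports, scale):
    """Pixels moved when `reports` reports each carry `counts_per_report` counts,
    and the acceleration lookup applies `scale` to every report."""
    return counts_per_report * reports * scale


# Same 16 counts of hand movement, delivered at two report rates.
at_125hz = pointer_pixels(16, 1, 1.4)   # one report of 16 counts
at_1000hz = pointer_pixels(2, 8, 0.6)   # eight reports of 2 counts each

print(f"125 Hz:  ~{at_125hz:.1f} pixels")
print(f"1000 Hz: ~{at_1000hz:.1f} pixels")
```

The same physical movement ends up covering well under half the distance on screen, because each small report is looked up at the low end of the curve.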

This is (somewhat) described here:

http://www.codinghorror.com/blog/archives/000977.h...

http://www.microsoft.com/whdc/archive/pointer-bal....

BUT Microsoft made a silly design mistake!:

http://donewmouseaccel.blogspot.com/2009/06/out-of...

A solution is to tweak the Registry: the SmoothMouseXCurve and SmoothMouseYCurve values under HKEY_CURRENT_USER\Control Panel\Mouse.

Treat each group of 4 bytes as a 32-bit integer, and divide by 8 (for 1000 Hz). AFAIK, doing this for both SmoothMouseYCurve & SmoothMouseXCurve should return the acceleration back to normal.
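As a rough illustration of that byte arithmetic only, the sketch below rescales a binary blob in memory and does not touch the Registry. It assumes the bytes are stored little-endian, the helper name `scale_curve` is hypothetical, and the sample bytes are made up rather than taken from a real SmoothMouseXCurve value.

```python
import struct


def scale_curve(raw: bytes, divisor: int) -> bytes:
    """Treat `raw` as little-endian unsigned 32-bit integers and divide each by `divisor`."""
    if len(raw) % 4 != 0:
        raise ValueError("expected a whole number of 4-byte groups")
    count = len(raw) // 4
    values = struct.unpack(f"<{count}I", raw)
    # Integer division, so any remainder is dropped.
    return struct.pack(f"<{count}I", *(v // divisor for v in values))


# Made-up sample data: two 32-bit values, 80 and 0x00010000.
sample = struct.pack("<2I", 80, 0x00010000)
scaled = scale_curve(sample, 8)   # divide by 8 for 1000 Hz, as suggested above
print(sample.hex(), "->", scaled.hex())
```

Anyone trying this for real should export the existing values first so they can be restored.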

A BETTER solution may be to stick with "Enhance pointer precision" and 125 Hz for normal Windows work, and use 1000 Hz only for gaming AND TURN OFF "Enhance pointer precision" when gaming (if required by the game: most modern games use DirectX to read the mouse, which ignores the "Enhance pointer precision" checkbox anyway).

Re: "I was planning on getting the Microsoft Habu ... will all mouse have this speed issue at high mouse rates? (above 125hz)"

I don't know: I expect the Habu driver will do the right thing and not need any fix as above, but I don't know...

DerekWilson - Monday, July 20, 2009 - link

Actually ... the report / second rate should have zero impact on the speed of the pointer. I do say should -- something odd could be happening like it could be dropping counts in order to assemble reports that fast (i.e. your mouse could be too overclocked and might be doing things wrong). But I am not a hardcore mouse overclocker myself so I'd do a little research on it.

I would recommend, if your mouse can't actually hit 1000Hz, to drop it down to 500 reports/second instead of 1000 ... it should be more consistent that way, and maybe it will fix your pointer speed issue.

The CPI (reported as DPI) will have an impact on pointer speed. But so will things like setting mouse speed to maximum and using "enhance pointer precision" ... though these latter two don't really have desirable results.

I strongly recommend leaving mouse speed at the middle notch ... setting it higher actually skips pixels (though "enhance pointer precision" makes your mouse able to move one pixel at a time if you move it really slowly). And I also recommend not using "enhance pointer precision" as well ...

These MS pointer ballistics can cause problems in older games, but if the developer did the "right" thing and used either DirectInput or raw input devices then the pointer speed settings shouldn't affect games (only the sensitivity slider in the game should affect pointer speed if it's done right). In most cases going forward you should be able to use the OS to manipulate your pointer speed without negatively impacting your game ... but there is a chance that these settings could negatively impact your gaming experience if the developer used a less desirable way to access the mouse data.

Per Hansson - Monday, July 20, 2009 - link

Thanks, the behaviour is the same at 250hz and 500hz

Those rates just slow down the mouse more...

There would be no way at all that I could set the mouse speed slider to the middle and get used to that, same for not having enhance pointer precision on

Guess sometimes you just can't win eh? ;)

In fact I was quite annoyed by the change in ballistics going from Win2K which supported acceleration which I used and really liked to WinXP which only has this "enhance pointer precision" option

Xcrypt - Thursday, November 20, 2014 - link

You shouldn't enable enhance pointer precision, nor should you have your mouse speed set to maximum. Both will adversely affect your ability to aim, especially the acceleration will.

valnar - Sunday, July 19, 2009 - link

"It is possible to overclock your mouse."

Now I've seen everything. :)