NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST. Posted in GPUs

Architecting Fermi: More Than 2x GT200

NVIDIA keeps referring to Fermi as a brand new architecture, while calling GT200 (and RV870) bigger versions of their predecessors with a few added features. Marginalizing the efforts required to build any multi-billion transistor chip is just silly; to an extent, all of these GPUs have been significantly redesigned.

At a high level, Fermi doesn't look much different than a bigger GT200. NVIDIA is committed to its scalar architecture for the foreseeable future. In fact, its one-op-per-clock-per-core philosophy comes from a basic desire to execute single-threaded programs as quickly as possible. Remember, these are compute and graphics chips. NVIDIA sees no benefit in building a 16-wide or 5-wide core as the basis of its architectures, although we may see a bit more flexibility at the core level in the future.

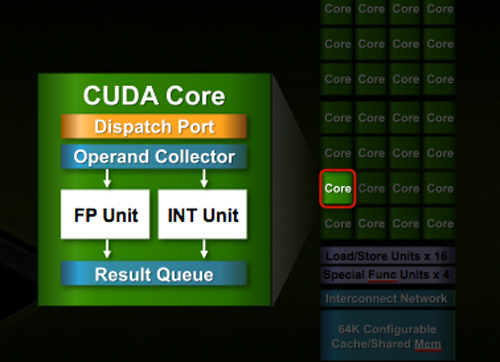

Despite the similarities, large parts of the architecture have evolved. The redesign happened as low as the core level. NVIDIA used to call these SPs (Streaming Processors); now it calls them CUDA Cores. I’m going to call them cores.

All of the processing done at the core level is now to IEEE spec. That’s IEEE 754-2008 for floating point math (same as RV870/5870) and full 32-bit for integers. In the past, 32-bit integer multiplies had to be emulated; the hardware could only do 24-bit integer muls. That silliness is now gone. Fused Multiply Add is also included. The goal was to avoid doing any cheesy tricks to implement math. Everything should be industry standards compliant and give you the results that you’d expect.
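To make those two points concrete, here is a minimal Python sketch. The first half shows one way a 32-bit multiply can be pieced together from a narrower multiplier; `mul24` is a hypothetical, unsigned model of a 24-bit hardware multiply, and the decomposition is an illustration, not NVIDIA's actual instruction sequence. The second half shows why a fused multiply-add can give a different answer than a separate multiply and add, using host double precision and exact rational arithmetic purely as a stand-in for the hardware.

```python
from fractions import Fraction

MASK24 = (1 << 24) - 1
MASK32 = (1 << 32) - 1


def mul24(a, b):
    """Hypothetical model of a 24-bit multiplier: multiply the low 24 bits
    of each operand and keep the low 32 bits of the product."""
    return ((a & MASK24) * (b & MASK24)) & MASK32


def mul32_emulated(a, b):
    """Low 32 bits of a * b, built only from mul24, shifts, and adds.

    Each operand is split into 16-bit halves so every partial product
    fits the 24-bit multiplier. The high*high term is shifted out of the
    low 32 bits entirely, so it is never computed.
    """
    a_lo, a_hi = a & 0xFFFF, (a >> 16) & 0xFFFF
    b_lo, b_hi = b & 0xFFFF, (b >> 16) & 0xFFFF
    low = mul24(a_lo, b_lo)
    cross = (mul24(a_hi, b_lo) + mul24(a_lo, b_hi)) & MASK32
    return (low + (cross << 16)) & MASK32


def fma_reference(a, b, c):
    """a * b + c computed exactly, then rounded once (what an FMA does)."""
    return float(Fraction(a) * Fraction(b) + Fraction(c))


# Three multiplies plus shifts and adds, versus a single native 32-bit multiply.
product = mul32_emulated(123456789, 987654321)

# The exact product a * b is 1 - 2**-60, which rounds to 1.0 in a double.
a, b, c = 1.0 + 2.0 ** -30, 1.0 - 2.0 ** -30, -1.0
unfused = a * b + c            # rounds after the multiply, then after the add
fused = fma_reference(a, b, c)  # rounds only once, at the end
print(product, unfused, fused)
```

The unfused path loses the small residual entirely, while the single-rounding path keeps it, which is the practical argument for having FMA in hardware.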

Double precision floating point (FP64) performance is improved tremendously. Peak 64-bit FP execution rate is now 1/2 of 32-bit FP; it used to be 1/8 (AMD's is 1/5). Wow.

NVIDIA isn’t disclosing clock speeds yet, so we don’t know exactly what that rate is.
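Since the clock is the missing variable, the peak rates can only be written as a function of it. The sketch below does that arithmetic in Python. It assumes the usual convention of counting a multiply-add as two floating point operations per core per clock, and the 1.5 GHz figure is a made-up placeholder, not a leaked or announced clock.

```python
# FP64 peak as a fraction of FP32 peak, as quoted in the text above.
FP64_RATIO = {"Fermi": 1 / 2, "GT200": 1 / 8, "AMD": 1 / 5}

FERMI_CORES = 512


def peak_fp32_gflops(cores, clock_ghz, flops_per_core_per_clock=2):
    """Peak single precision GFLOPS: cores x clock x flops per clock.

    flops_per_core_per_clock=2 assumes one multiply-add per core per clock.
    """
    return cores * clock_ghz * flops_per_core_per_clock


def peak_fp64_gflops(fp32_gflops, ratio):
    """Peak double precision GFLOPS from the FP32 peak and the FP64 ratio."""
    return fp32_gflops * ratio


hypothetical_clock_ghz = 1.5  # placeholder only; the real clock is undisclosed
fp32 = peak_fp32_gflops(FERMI_CORES, hypothetical_clock_ghz)
fp64 = peak_fp64_gflops(fp32, FP64_RATIO["Fermi"])
print(f"FP32: {fp32:.0f} GFLOPS, FP64: {fp64:.0f} GFLOPS at {hypothetical_clock_ghz} GHz")
```

Swap in the real shader clock once NVIDIA announces it and the same two functions give the actual peak numbers.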

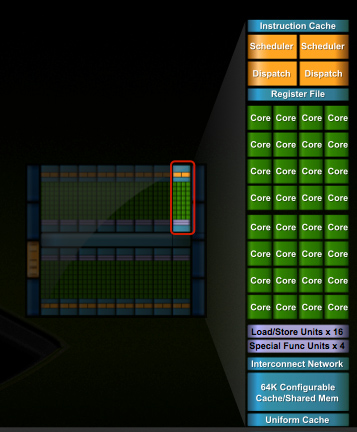

In G80 and GT200 NVIDIA grouped eight cores into what it called an SM. With Fermi, you get 32 cores per SM.

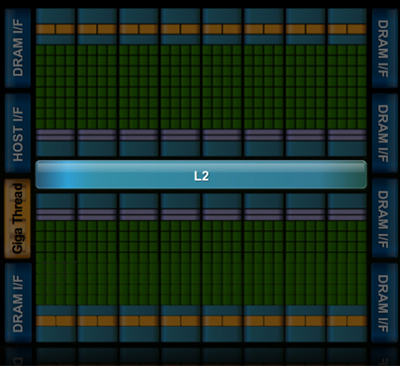

The high end single-GPU Fermi configuration will have 16 SMs. That’s fewer SMs than GT200, but more cores. 512 to be exact. Fermi has more than twice the core count of the GeForce GTX 285.

| | Fermi | GT200 | G80 |
|---|---|---|---|
| Cores | 512 | 240 | 128 |
| Memory Interface | 384-bit GDDR5 | 512-bit GDDR3 | 384-bit GDDR3 |

In addition to the cores, each SM has a Special Function Unit (SFU) used for transcendental math and interpolation. In GT200 this SFU had two pipelines; in Fermi it has four. While NVIDIA increased general math horsepower by 4x per SM, SFU resources only doubled.
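The per-SM figures above are easy to cross-check with a few lines of Python. The sketch uses only numbers quoted in this article (cores per SM, SFU pipelines per SM, total core counts); the `SM` class and helper names are made up for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SM:
    cores: int
    sfu_pipelines: int


GT200_SM = SM(cores=8, sfu_pipelines=2)
FERMI_SM = SM(cores=32, sfu_pipelines=4)


def total_cores(sm, sm_count):
    """Total cores on a chip built from sm_count identical SMs."""
    return sm.cores * sm_count


def sm_count_for(sm, cores):
    """Number of SMs implied by a total core count."""
    count, remainder = divmod(cores, sm.cores)
    if remainder:
        raise ValueError("core count is not a multiple of cores per SM")
    return count


def cores_per_sfu_pipeline(sm):
    """How many cores share each SFU pipeline within one SM."""
    return sm.cores / sm.sfu_pipelines


print(total_cores(FERMI_SM, 16))          # Fermi: 16 SMs of 32 cores
print(sm_count_for(GT200_SM, 240))        # SM count implied by GT200's 240 cores
print(cores_per_sfu_pipeline(GT200_SM))   # cores per SFU pipeline, GT200
print(cores_per_sfu_pipeline(FERMI_SM))   # cores per SFU pipeline, Fermi
```

The last two lines are the interesting ones: each SFU pipeline now serves twice as many cores as before, which is what "4x the math, 2x the SFU" works out to.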

The infamous missing MUL has been pulled out of the SFU, so we shouldn’t have to quote peak single and dual-issue arithmetic rates any longer for NVIDIA GPUs.

NVIDIA organizes these SMs into TPCs, but the exact hierarchy isn’t being disclosed today. With the launch's Tesla focus we also don't know specifics on ROPs, texture filtering or anything else related to 3D graphics. Boo.

A Real Cache Hierarchy

Each SM in GT200 had 16KB of shared memory that could be used by all of the cores. This wasn’t a cache, but rather software managed memory. The application would have to knowingly move data in and out of it. The benefit here is predictability: you always know if something is in shared memory because you put it there. The downside is it doesn’t work so well if the application isn’t very predictable.

Branch heavy applications and many of the general purpose compute applications that NVIDIA is going after need a real cache. So with Fermi at 40nm, NVIDIA gave them a real cache.

Attached to each SM is 64KB of configurable memory. It can be partitioned as 16KB/48KB or 48KB/16KB; one partition is shared memory, the other partition is an L1 cache. The 16KB minimum partition means that applications written for GT200 that require 16KB of shared memory will still work just fine on Fermi. If your app prefers shared memory, it gets 3x the space in Fermi. If your application could really benefit from a cache, Fermi now delivers that as well. GT200 did have an L1 texture cache (one per TPC), but the cache was mostly useless when the GPU ran in compute mode.
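As a rough illustration of that trade-off, the Python sketch below picks one of the two splits based on how much shared memory a kernel needs and which side it would rather have more of. The function and its `prefer` parameter are hypothetical; this is not how the driver or CUDA actually exposes the choice, only the decision logic implied by the two configurations.

```python
# The two partitions described above, as (shared memory KB, L1 cache KB).
FERMI_SPLITS_KB = ((16, 48), (48, 16))


def pick_split(shared_needed_kb, prefer="l1"):
    """Choose a (shared_kb, l1_kb) split for one SM.

    shared_needed_kb: shared memory the kernel requires.
    prefer: "l1" to maximize cache, "shared" to maximize shared memory.
    """
    if prefer not in ("l1", "shared"):
        raise ValueError("prefer must be 'l1' or 'shared'")
    candidates = [s for s in FERMI_SPLITS_KB if s[0] >= shared_needed_kb]
    if not candidates:
        raise ValueError("kernel needs more shared memory than any split offers")
    index = 1 if prefer == "l1" else 0
    return max(candidates, key=lambda split: split[index])


# A GT200-era kernel written for 16KB of shared memory still fits,
# and can take the larger L1 on top of that.
print(pick_split(16))
# The same kernel could instead ask for the bigger shared memory partition.
print(pick_split(16, prefer="shared"))
# Anything above 16KB of shared memory forces the 48KB/16KB split.
print(pick_split(32))
```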

The entire chip shares a 768KB L2 cache. The result is a reduced penalty for doing an atomic memory op; Fermi is 5 to 20x faster here than GT200.

415 Comments


Moricon - Thursday, October 1, 2009

Silicondoc you are a big GREEN C*OK lol it even rhymes!

Alberto - Thursday, October 1, 2009

The GPGPU market is very little...doesn't make good revenue for a company. Moreover the sw development is HARD and expensive; this piece of silicon seems like a NVidia mistake: too big to manufacture for a graphics card, nice (on paper) for a niche market.

In comparison AMD is working far better and Intel too with Larrabee.

Hard times on the horizon for Nvidia, it has a monster with very low manufacturing yields, but nothing feasible for the consumer arena.

A prediction ? AMD will have the lead of graphic cards in the next years.....

SiliconDoc - Thursday, October 1, 2009

The yields are fine, apparently you caught wind of the ati pr crew who was caught out lying.

If not, you just made your standard idiot assumption, because the actual FACTS concerning these two latest 40nm chips is that ati yields have been very poor, and nvidias have been good.

---

Nice try, but you're wrong.

" Scalable Informatics has been selling NVidia Tesla (C1060) units as part of our Pegasus-GPU and Pegasus-GPU+Cell units. Several issues have arisen with Tesla availability and pricing.

First issue: Tesla units are currently on a 4-8 week back order. We have no control over this, all other vendors have the exact same issues. NVidia is not shipping Tesla in any appreciable volumes.

Our workaround: Until NVidia is able to ramp its volume to appropriate levels, Scalable Informatics will provide loaner GTX260 cards in place of the Tesla units. Once the Tesla units ship, we will exchange the GTX260 units for the Tesla units.

Update: 1-September-2009

Tesla C1060 units are now readily available for Pegasus and JackRabbit systems.

---

NOW THE PRICING

" Scalable Informatics JackRabbit systems are available in deskside and rackmount configurations, starting at 8 TB (TeraByte) in size, with individual systems ranging from 8TB to 96TB, and storage clusters up to 62 PB (PetaByte), with most systems starting price under $1USD/GB."

So, an 8TB system is 8 grand, 96TB 96 grand, and a 62 petabyte cluster in the approaching 62 MILLION range.

http://www.scalableinformatics.com/catalog

Yes, not much there. LOLOLOL

--

POWER SAVINGS replacing massive cpu computers

--

The BNP Paribas (finance) study showed a $250,000 500 core cluster (37.5 kW) replaced with a 2 S1070 Tesla cluster at a cost of $24,000 and using only 2.8 kW. A study with oil and gas company Hess showed an $8M 2000-socket system (1.2 MW) being replaced by a 32 S1070 cluster for $400,000 and using only 45 kW in 31x less space. If you are running a CUDA-enabled application, or have access to the source code (you’ll need that to take advantage of the GPUs), you can clearly get significant performance gains for certain applications.

-

about 4 TFLOPS of peak from four C1060 cards (or 3 C1060 and a Quadro) and plugs into a standard wall outlet. Word from some of those selling this system is that sales have been mostly in the academic space and a little slower than expected, possibly due to the initially high ($10k+) price point. Prices have started to come down, however, and that might help sales. You can buy these today from vendors like Dell, Colfax, AMAX, Microway, and Penguin (for a partial list see NVIDIA’s PS product page).

-

---

And, of course you predict amd will have the lead in videocards the next few years. LOL

bhwahahahahaaaaaaaaaaaaaaaaaa

thebeastie - Thursday, October 1, 2009

Personally I think NVidia has made the best bet it can make with supporting more Tesla style stuff, and in general just building a bigger madder GPU.

The fact is that there aren't many good PC games around, I would say NVidia made some good sales out of Crysis by itself, people building a new PC with that game in mind having a very large weight on GPU choice.

But it is just not enough. L4D 2 is the next big title but being on the Valve engine everyone knows you will get 100fps on a GTX 275.

The other twist is that Steam has probably been one of the best things for gaming on the PC it just makes things 10 times easier.

Manually patching games etc is a killer for all but those who are gaming enthusiasts.

Dante80 - Thursday, October 1, 2009

GT300 looks like a revolutionary product as far as HPC and GPU Computing are concerned. Happy times ahead, for professionals and scientists at least...

Regarding the 3d gaming market though, things are not as optimistic. GT300 performance is rather irrelevant, due to the fact that nvidia currently does not have a speedy answer for the discrete, budget, mainstream and lower performance segments. Price projections aside, the GT300 will get the performance crown, and act as a marketing boost for the rest of the product line. Customers in the higher performance and enthusiast markets that have brand loyalty towards the greens are locked anyway. And yes, thats still irrelevant.

Remember ppl, the profit and bulk in the market is in a price segment nvidia does not even try to address currently. We can only hope that the greens can get sth more than GT200 rebranding/respins out for the lower market segments. Fast. Ideally, the new architecture should be able to be downscaled easily. Lets hope for that, or its definitely rough times ahead for nvidia. Especially if you look closely at the 5850 performance per $ ratio, as well as the juniper projections. And add in the economy crisis, shifting consumer focus, the difference of performance needed by software and performance given by the hw, the locking of TFT resolutions and heat/power consumption concerns.

With AMD getting out of the warehouses the whole 5XXX family in under 6months (I think thats a first for the GPU industry, I might be wrong though), the greens are in a rather tight spot atm. GT200 respins wont save the round, GT300 @500$++ wont save the round, and tesla wont certainly save the round (just look at sales and profit in the last years concerning the HPC-GPUCU segments).

Lets hope for the best, its in our interest as consumers anyway..

blindbox - Thursday, October 1, 2009

I'm sorry, but I couldn't resist.

The Adventures of SiliconDoc.

NVIDIA GeForce GTS 250: A Rebadged 9800 GTX+

http://www.anandtech.com/showdoc.aspx?i=3523

ATI Radeon HD 4890 vs. NVIDIA GeForce GTX 275

http://www.anandtech.com/video/showdoc.aspx?i=3539...

AMD's Radeon HD 5870: Bringing About the Next Generation Of GPUs

http://www.anandtech.com/video/showdoc.aspx?i=3643...

The Radeon HD 4870 1GB: The Card to Get

http://www.anandtech.com/showdoc.aspx?i=3415

Overclocking Extravaganza: GTX 275's Complex Characteristics

http://www.anandtech.com/video/showdoc.aspx?i=3575

NVIDIA GeForce GTX 295: Leading the Pack

http://www.anandtech.com/showdoc.aspx?i=3498&p...

Faster Graphics For Lower Prices: ATI Radeon HD 4770

http://www.anandtech.com/video/showdoc.aspx?i=3553...

Of course, check the comments.

I couldn't find his comments in the 4870x2 review, nor the pre-DX10 days.

tamalero - Friday, October 2, 2009

this guy is such an epic trainwreck....

I actually wonder if this guy is the ANGRY GERMAN KID in disguise ( check the video on youtube lol )

Docket - Thursday, October 1, 2009

Yep SiliconDoc has been making the same nonsense noise elsewhere as well and been banned at least from one other site (google silicondocs):

http://forums.bit-tech.net/showthread.php?p=203896...

Here is an extract from bit-tech staff:

-----------

OK time for you to go, you contribute nothing to the community other than trolling, bye bye.

I'm leaving all your posts here for evidence that you're a complete lunatic, but I'm glad you realise that you do need help. It's the first step.

I recommend checking out Nvidia forums and posting there - you'll feel more at home.

-----------

S/He is obviously retarded person. I mean initially it was "fun" to read but now I'm just so bored with this s*it and it is actually interfering while trying to read comments from other readers. Maybe that is the whole point of the noise; to side track any meaningful conversation.

I vote silicondoc to be banned from this site (or give me an ability to filter all the posts from and related to this user)... anyone else?

SiliconDoc - Thursday, October 1, 2009

It seems to me, you wish to remain absolutely blind with your fuming hatred and emotional issues, let's see WHAT was supposedly said at your link:

" Originally Posted by wuyanxu

nVidia is trying very hard to NOT loose this round, they've priced this too aggressively, surely there's some cooperate law on this? "

---

Here we see the brain deranged red rooster, who has been deceived by the likes of you know who, for so long, that a low priced Nvidia card that beats the ati card, must be "illegally priced", according to the little red communist overseas.

I suppose pointing that out in a fashion you and your glorious roosters don't like, is plenty reason for you to shriek "contributes nothing" and "let's ban him!"

Well, fire up your glowing red torches, and I will gladly continue to show what fools red roosters can be, and often are.

I'm so happy you linked some silicondoc post on some other forum, and we had the chance to see the deranged red rooster screech that a low priced Nvidia GTX275 is illegal.

--

Good for you, you're such a big help here.

strikeback03 - Thursday, October 1, 2009

would be nice, I was wondering when I saw this article how it could have 140 comments already, forgetting he was sure to come trolling. I've stopped reading each comment thread after he got involved, since any chance of reliable information coming out has ceased.