NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

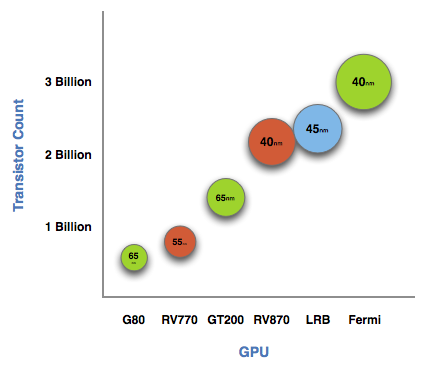

The graph below is one of transistor count, not die size. Inevitably, on the same manufacturing process, a significantly higher transistor count translates into a larger die size. But for the purposes of this article, all I need to show you is a representation of transistor count.

See that big circle on the right? That's Fermi. NVIDIA's next-generation architecture.

NVIDIA astonished us with GT200 tipping the scales at 1.4 billion transistors. Fermi is more than twice that at 3 billion. And literally, that's what Fermi is - more than twice a GT200.

At the high level the specs are simple. Fermi has a 384-bit GDDR5 memory interface and 512 cores. That's more than twice the processing power of GT200 but, just like RV870 (Cypress), it's not twice the memory bandwidth.
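To make the "not twice the memory bandwidth" point concrete, here is a minimal Python sketch of the usual peak-bandwidth arithmetic (bus width in bytes times per-pin data rate). The GT200 figures are those of the shipping GeForce GTX 280 (512-bit GDDR3, 240 cores). Fermi's memory clock has not been announced, so the 4.8 Gbps GDDR5 rate below is purely an assumption borrowed from the Radeon HD 5870, not an NVIDIA number.

```python
def peak_bandwidth_gb_s(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Peak memory bandwidth in GB/s: bus width in bytes times per-pin data rate."""
    return bus_width_bits / 8 * data_rate_gbps


# GT200 as shipped on the GeForce GTX 280: 512-bit GDDR3 at about 2.214 Gbps per pin.
gt200 = peak_bandwidth_gb_s(512, 2.214)

# Fermi: 384-bit GDDR5. The data rate is NOT final; 4.8 Gbps is an assumed,
# illustrative value taken from the Radeon HD 5870's memory.
fermi_assumed = peak_bandwidth_gb_s(384, 4.8)

bandwidth_ratio = fermi_assumed / gt200  # how much more bandwidth under this assumption
core_ratio = 512 / 240                   # Fermi cores vs. GT200 cores

print(f"GT200: {gt200:.1f} GB/s, Fermi (assumed): {fermi_assumed:.1f} GB/s")
print(f"bandwidth ratio: {bandwidth_ratio:.2f}x, core ratio: {core_ratio:.2f}x")
```

Under that assumption the core count more than doubles while peak bandwidth grows by well under 2x. A different final memory clock would shift the exact ratio.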

The architecture goes much further than that, but NVIDIA believes that AMD has shown its cards (literally) and is very confident that Fermi will be faster. The questions are at what price and when.

The price is a valid concern. Fermi is a 40nm GPU just like RV870 but it has a 40% higher transistor count. Both are built at TSMC, so you can expect that Fermi will cost NVIDIA more to make than ATI's Radeon HD 5870.
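The transistor ratios quoted so far can be checked with a few lines of Python. The GT200 and Fermi counts come from the text above. The 2.15 billion figure for RV870 is not stated in this article; it is AMD's published number for Cypress and is treated here as an assumption.

```python
# Transistor counts, in transistors.
GT200_TRANSISTORS = 1.4e9   # from the article
FERMI_TRANSISTORS = 3.0e9   # from the article
RV870_TRANSISTORS = 2.15e9  # assumption: AMD's published Cypress figure, not in the article

# "More than twice a GT200"
fermi_vs_gt200 = FERMI_TRANSISTORS / GT200_TRANSISTORS

# "A 40% higher transistor count" than RV870
fermi_over_rv870 = FERMI_TRANSISTORS / RV870_TRANSISTORS - 1

print(f"Fermi vs GT200: {fermi_vs_gt200:.2f}x")
print(f"Fermi vs RV870: {fermi_over_rv870:.0%} more transistors")
```

On the same 40nm process, transistor count is only a rough proxy for die area and cost, so this supports the direction of the cost argument rather than a specific price.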

The timing is just as valid a concern, because while Fermi currently exists on paper, it's not a product yet. Fermi is late. Clock speeds, configurations and price points have yet to be finalized. NVIDIA just recently got working chips back and it's going to be at least two months before I see the first samples. Widespread availability won't be until at least Q1 2010.

I asked two people at NVIDIA why Fermi is late: NVIDIA's VP of Product Marketing, Ujesh Desai, and NVIDIA's VP of GPU Engineering, Jonah Alben. Ujesh responded: because designing GPUs this big is "fucking hard".

Jonah elaborated, as I will attempt to do here today.

415 Comments

SiliconDoc - Thursday, October 1, 2009 - link

Jeezus, you're just that bright, aren't you.

The article is dated September 19th, and "they scored a picture" from another website, that "scored a picture".

Our friendly reviewer here at AT had the cards in his hands, on the bench, IRL.

--

I mean you have like no clue at all, don't you.

palladium - Thursday, October 1, 2009 - link

I agree. GPGPU has come a long way, but it's still in its infancy, at least in the consumer space (Badaboom and AVIVO both had bugs).

I just want a card that can play Crysis all very high 19x12 4xAA @60fps. Maybe a dual-GPU GT300 can deliver that.

wumpus - Wednesday, September 30, 2009 - link

My first reaction after reading that the cost of a double multiply would be twice that of a single was "great. Half the transistors will be sitting there idle during games." Sure, this isn't meant to be a toy, but it looks like they have given up the desktop graphics to AMD (and whenever Intel gets something working). Maybe they will get volume up enough to lower the price, but there are only so many chips TSMC can make that size.

On second thought, those little green squares can't take up half the chip. Any guess what part of the squares are multiplies? Is the cost of fast double precision something like 10% of the transistors idle during single (games)?

On the gripping hand, the article makes the claim that "All of the processing done at the core level is now to IEEE spec. That’s IEEE-754 2008 for floating point math (same as RV870/5870)". If they seriously mean that they are prepared to include all rounding, all exceptions, and all the ugly, hairy corner cases that inhabit IEEE-754, wait for Juniper. I really mean it. If you are doing real numerical computing you need IEEE-754. If you don't (like you just want a real framerate from Crysis for once) avoid it like the plague.

Sorry about the rant. Came for the beef on doubles, but noticed that quote when checking the article. Looks like we'll need some real information about what "core level at IEEE-754" means on different processors. Who provides all the rounding modes, and what parts get emulated slowly? [side note. Is anybody with a 5870 able to test underflow in OpenCL? You might find out a huge amount about your chip with a single test].

SiliconDoc - Wednesday, September 30, 2009 - link

I think I'll stick with the giant profitables greens proven track record, not your e-weened redspliferous dissing.

Did you watch the NV live webcast @ 1pm EST ?

---

Nvidia is the only gpu company with ONE BILLION DOLLARS PER YEAR IN R&D.

---

That's correct, nvidia put into research on the Geforce, the whole BILLION ati loses selling their crappy cheap hot cores on weaker thinner pcb with near zero extra features only good for very high rez, which DOESN'T MATCH the cheapo budget pinching purchasers who buy red to save 5-10 bang for bucks...

--

Now about that marketing scheme ?

LOL

Ati plays to high rez wins, but has the cheapo card, expecting $2,000 monitor owners to pinch pennies.

"great marketing" ati...

LOL

PorscheRacer - Wednesday, September 30, 2009 - link

Just so you know, ATI is a separate division in AMD (the graphics side obviously) and did post earnings this year. ATI is keeping the CPU side of AMD afloat to all intents and purposes. Is there a way to ban or block you? I was excited to read about the GF300 and expecting some good comments and discussion about this, and then you wrecked the experience. Now I just don't care.

Adul - Thursday, October 1, 2009 - link

silicon idiot is doing more harm than good. please ban him

SiliconDoc - Thursday, October 1, 2009 - link

The truth is a good thing, even if you're so used to lies that you don't like it.

I guess it's good too, that so many people have tried so hard to think of a rebuttal to any or all of my points, and they don't have one, yet.

Isn't that wonderful ! You fit that category, too.

SiliconDoc - Wednesday, September 30, 2009 - link

Do you think your LIES will pass with no backup ?

" A.M.D. has struggled for two years to return to profitability, losing billions of dollars in the process.

A.M.D., the No. 2 maker of computer microprocessors after Intel, lost $330 million, or 49 cents a share, in the second quarter. In the same period last year, it lost $1.2 billion, or $1.97 a share.

Excluding one-time gains, A.M.D. says its loss was 62 cents a share. On that basis, analysts had predicted a loss of 47 cents a share, according to Thomson Reuters. Sales fell to $1.18 billion, down 13 percent. Analysts were expecting $1.13 billion."

---

http://www.nytimes.com/2009/07/22/technology/compa...

ATI card sales did increase a bit, but LOST MONEY anyway. More than expected.

--

PS I'm not sorry I've ruined your fantasy and exposed your lie. If you keep lying, should you be banned for it ?

PorscheRacer - Thursday, October 1, 2009 - link

http://arstechnica.com/hardware/news/2009/07/intel...

Again, the graphics group of AMD turned a profit (albeit a small one after R&D and costs) while the other divisions lost money.

SiliconDoc - Thursday, October 1, 2009 - link

LOL- YOU'VE SIMPLY LIED AGAIN, AND PROVIDED A LINK, THAT CONFIRMS YOU LIED.

It must be tough being such a slumbag.

--

" After the channel stopped ordering GPUs and depleted inventory in anticipation of a long drawn out worldwide recession in Q3 and Q4 of 2008, expectations were hopeful, if not high that Q1’09 would change for the better. In fact, Q1 showed improvement but it was less than expected, or hoped. Instead, Q2 was a very good quarter for vendors – counter to normal seasonality – but then these are hardly normal times.

Things probably aren't going to get back to the normal seasonality till Q3 or Q4 this year, and we won't hit the levels of 2008 until 2010."

As you should have a clue, noting, 2008 was bad, and they can't even reach that pathetic crash until 2010.

An increase in sales from a recent prior full on disaster decrease, is still less than the past, is low in the present, and is " A LOSS " PERIOD.

You don't provide text because NOTHING at your link claims what you've said, you are simply a big fat LIAR.

Thanks for the link anyway, that links my link:

http://jonpeddie.com/press-releases/details/amd-so...

This is a great quote: " We still believe there will be an impact from the stimulus programs worldwide "

LOL

hahahhha - just as I kept supposing.

" -Jon Peddie Research (JPR), the industry's research and consulting firm for graphics and multimedia"

---

NOTHING, AT either link, describes a profit for ati graphics, PERIOD.

Try again mr liar.