NVIDIA's Fermi: Architected for Tesla, 3 Billion Transistors in 2010

by Anand Lal Shimpi on September 30, 2009 12:00 AM EST - Posted in GPUs

Architecting Fermi: More Than 2x GT200

NVIDIA keeps referring to Fermi as a brand new architecture, while calling GT200 (and RV870) bigger versions of their predecessors with a few added features. Marginalizing the effort required to build any multi-billion-transistor chip is just silly; to an extent, all of these GPUs have been significantly redesigned.

At a high level, Fermi doesn't look much different from a bigger GT200. NVIDIA is committed to its scalar architecture for the foreseeable future. In fact, its one-op-per-clock-per-core philosophy comes from a basic desire to execute single-threaded programs as quickly as possible. Remember, these are compute and graphics chips. NVIDIA sees no benefit in building a 16-wide or 5-wide core as the basis of its architectures, although we may see a bit more flexibility at the core level in the future.

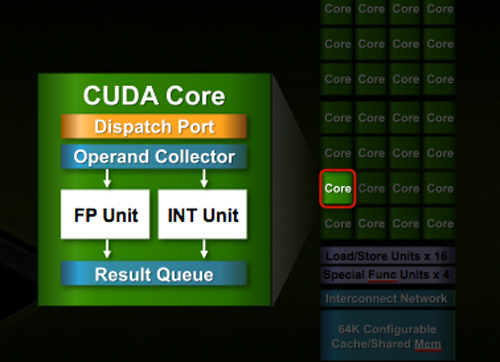

Despite the similarities, large parts of the architecture have evolved. The redesign went as deep as the core level. NVIDIA used to call these SPs (Streaming Processors); now it calls them CUDA Cores. I’m going to call them cores.

All of the processing done at the core level is now to IEEE spec. That’s IEEE 754-2008 for floating-point math (same as RV870/5870) and full 32-bit for integers. In the past, 32-bit integer multiplies had to be emulated because the hardware could only do 24-bit integer multiplies. That silliness is now gone. Fused multiply-add (FMA) is also included. The goal was to avoid doing any cheesy tricks to implement math. Everything should be industry-standards compliant and give you the results that you’d expect.
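To make both points concrete, here is a minimal Python sketch. The first half shows one way a full 32-bit multiply (the low 32 bits of the product) can be assembled from 24-bit multiplies. `mul24` is a hypothetical model of a 24-bit multiply that only looks at the low 24 bits of each operand. The three-product decomposition is a standard trick, not necessarily the exact instruction sequence NVIDIA's compiler emitted on GT200. The second half contrasts an FMA, which rounds once, with a separate multiply and add, which round twice. `fma_exact` is an illustrative helper that gets its single rounding from exact rational arithmetic.

```python
from fractions import Fraction

MASK24 = (1 << 24) - 1
MASK32 = (1 << 32) - 1


def mul24(a: int, b: int) -> int:
    """Hypothetical model of a 24-bit integer multiply: only the low 24 bits
    of each operand are used, and the low 32 bits of the product are kept."""
    return ((a & MASK24) * (b & MASK24)) & MASK32


def mul32_via_mul24(a: int, b: int) -> int:
    """Low 32 bits of a * b for unsigned 32-bit a and b, using only mul24."""
    a_lo, a_hi = a & 0xFFFF, (a >> 16) & 0xFFFF
    b_lo, b_hi = b & 0xFFFF, (b >> 16) & 0xFFFF
    # a*b = a_hi*b_hi*2^32 + (a_hi*b_lo + a_lo*b_hi)*2^16 + a_lo*b_lo.
    # The 2^32 term vanishes modulo 2^32, so three 16x16 products are enough,
    # and every 16-bit half fits in mul24's 24-bit inputs.
    low = mul24(a_lo, b_lo)
    cross = (mul24(a_hi, b_lo) + mul24(a_lo, b_hi)) & MASK32
    return (low + (cross << 16)) & MASK32


def fma_exact(a: float, b: float, c: float) -> float:
    """a * b + c with a single rounding: computed exactly as a rational,
    then converted (correctly rounded) to the nearest double."""
    return float(Fraction(a) * Fraction(b) + Fraction(c))


if __name__ == "__main__":
    a, b = 0x01000001, 3
    print(hex(mul24(a, b)))            # bit 24 of a is silently dropped
    print(hex(mul32_via_mul24(a, b)))  # matches (a * b) mod 2^32

    x, y, z = 1 + 2**-30, 1 - 2**-30, -1.0
    print(x * y + z)           # two roundings: x*y rounds to 1.0 first
    print(fma_exact(x, y, z))  # one rounding keeps the -2**-60 term
```

The FMA case is the kind of result the "no cheesy tricks" goal is about. With two roundings, the tiny `-2**-60` term disappears entirely. With one rounding, it survives.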

Double precision floating point (FP64) performance is improved tremendously. Peak 64-bit FP execution rate is now 1/2 of the 32-bit FP rate; it used to be 1/8 (AMD's is 1/5). Wow.

NVIDIA isn’t disclosing clock speeds yet, so we don’t know exactly what that rate is.
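Without clocks, the ratios are easiest to compare per clock. The sketch below makes two assumptions. First, it uses the usual convention that one FMA counts as two floating-point operations. Second, it assumes every core can issue one FP32 FMA per clock. The 1.5 GHz shader clock in the usage line is a placeholder, not an NVIDIA figure.

```python
from fractions import Fraction

FLOPS_PER_FMA = 2  # convention: one fused multiply-add = two FLOPs


def flops_per_clock(cores: int, fp64_ratio: Fraction) -> tuple[Fraction, Fraction]:
    """Peak (FP32, FP64) FLOPs per clock, assuming one FP32 FMA per core per
    clock and an FP64 rate that is fp64_ratio times the FP32 rate."""
    fp32 = Fraction(cores * FLOPS_PER_FMA)
    return fp32, fp32 * fp64_ratio


def peak_gflops(per_clock: Fraction, clock_ghz: float) -> float:
    """Scale a per-clock figure by a (hypothetical) shader clock in GHz."""
    return float(per_clock) * clock_ghz


if __name__ == "__main__":
    fermi = flops_per_clock(512, Fraction(1, 2))
    gt200 = flops_per_clock(240, Fraction(1, 8))
    print("Fermi FP32/FP64 FLOPs per clock:", fermi[0], fermi[1])
    print("GT200 FP32/FP64 FLOPs per clock:", gt200[0], gt200[1])
    # Placeholder clock only; NVIDIA has not disclosed Fermi's clocks.
    print("Fermi FP64 GFLOPS at an assumed 1.5 GHz:", peak_gflops(fermi[1], 1.5))
```

Per clock, Fermi's FP64 peak works out to more than 8x GT200's (512 vs. 60 FLOPs) before any clock difference is factored in.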

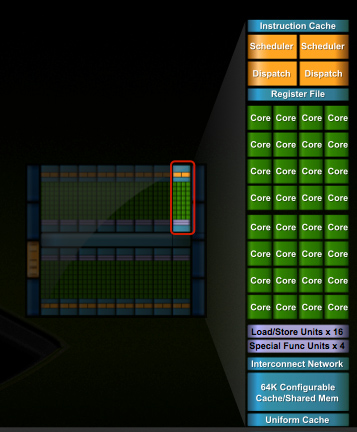

In G80 and GT200, NVIDIA grouped eight cores into what it called an SM (Streaming Multiprocessor). With Fermi, you get 32 cores per SM.

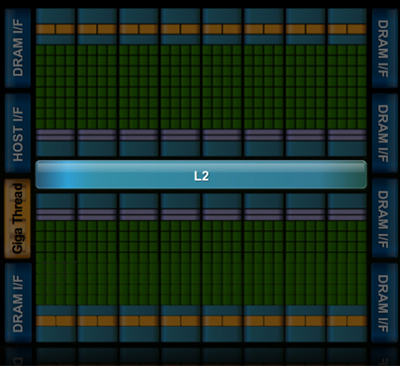

The high-end single-GPU Fermi configuration will have 16 SMs. That’s fewer SMs than GT200, but more cores: 512, to be exact. Fermi has more than twice the core count of the GeForce GTX 285.

| | Fermi | GT200 | G80 |
|---|---|---|---|
| Cores | 512 | 240 | 128 |
| Memory Interface | 384-bit GDDR5 | 512-bit GDDR3 | 384-bit GDDR3 |
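The core counts in the table follow from SMs times cores per SM. The SM counts for GT200 (30) and G80 (16) are not given in the text. They come from those chips' published configurations, so treat this as a cross-check rather than new data.

```python
# (SMs, cores per SM) for each chip. Fermi's figures are from the text;
# GT200 and G80 use their published 30- and 16-SM configurations.
CONFIGS = {
    "Fermi": (16, 32),
    "GT200": (30, 8),
    "G80": (16, 8),
}


def total_cores(name: str) -> int:
    """Total cores = number of SMs * cores per SM."""
    sms, cores_per_sm = CONFIGS[name]
    return sms * cores_per_sm


if __name__ == "__main__":
    for name in CONFIGS:
        print(f"{name}: {total_cores(name)} cores")
    ratio = total_cores("Fermi") / total_cores("GT200")
    print(f"Fermi / GT200 core ratio: {ratio:.2f}")  # a bit over 2x
```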

In addition to the cores, each SM has a Special Function Unit (SFU) used for transcendental math and interpolation. In GT200 this SFU had two pipelines; in Fermi it has four. While NVIDIA increased general math horsepower by 4x per SM, SFU resources only doubled.
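A quick back-of-the-envelope sketch shows what "only doubled" means in practice. It reuses GT200's published 30-SM configuration, which is not stated in the text, and only counts pipelines. Reading the chip-wide count as throughput assumes each SFU pipeline delivers the same results per clock on both chips, which is an assumption rather than a disclosed figure.

```python
from fractions import Fraction

# (SMs, cores per SM, SFU pipelines per SM); GT200's 30 SMs is an outside figure.
GT200 = (30, 8, 2)
FERMI = (16, 32, 4)


def cores_per_sfu_pipe(chip: tuple[int, int, int]) -> Fraction:
    """How many cores share each SFU pipeline within one SM."""
    _, cores, sfu = chip
    return Fraction(cores, sfu)


def sfu_pipes_per_chip(chip: tuple[int, int, int]) -> int:
    """Total SFU pipelines across the whole chip."""
    sms, _, sfu = chip
    return sms * sfu


if __name__ == "__main__":
    for name, chip in (("GT200", GT200), ("Fermi", FERMI)):
        print(name, cores_per_sfu_pipe(chip), "cores per SFU pipe,",
              sfu_pipes_per_chip(chip), "SFU pipes per chip")
```

Each SFU pipeline now serves twice as many cores (8 vs. 4). Chip-wide, the pipeline count barely moves (64 vs. 60), even though the core count more than doubles.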

The infamous missing MUL has been pulled out of the SFU, so we shouldn’t have to quote separate peak single- and dual-issue arithmetic rates for NVIDIA GPUs any longer.

NVIDIA organizes these SMs into TPCs, but the exact hierarchy isn’t being disclosed today. With the launch's Tesla focus, we also don't know specifics on ROPs, texture filtering or anything else related to 3D graphics. Boo.

A Real Cache Hierarchy

Each SM in GT200 had 16KB of shared memory that could be used by all of the cores. This wasn’t a cache, but rather software-managed memory: the application had to knowingly move data in and out of it. The benefit here is predictability; you always know if something is in shared memory because you put it there. The downside is that it doesn’t work so well if the application’s memory accesses aren’t very predictable.
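The "knowingly move data in and out" model is easiest to see in a tiled matrix multiply, the textbook use of shared memory. The Python sketch below runs on the CPU. The `As`/`Bs` tile buffers stand in for an SM's shared memory, and the read counter shows why explicit staging pays off when the access pattern is known in advance. This illustrates the technique only. It is not CUDA code, and the function names are made up for this example.

```python
def matmul_naive(A, B):
    """Plain triple loop over square matrices; returns (C, reads from A and B)."""
    n = len(A)
    C = [[0] * n for _ in range(n)]
    reads = 0
    for i in range(n):
        for j in range(n):
            for k in range(n):
                C[i][j] += A[i][k] * B[k][j]
                reads += 2
    return C, reads


def matmul_tiled(A, B, tile):
    """Same product, but each tile x tile block of A and B is copied once into
    a small local buffer (the stand-in for shared memory) and reused from there.
    Assumes the matrix size is a multiple of tile."""
    n = len(A)
    C = [[0] * n for _ in range(n)]
    reads = 0
    for bi in range(0, n, tile):
        for bj in range(0, n, tile):
            for bk in range(0, n, tile):
                # Explicit staging: the program decides what goes into the buffer.
                As = [[A[bi + i][bk + k] for k in range(tile)] for i in range(tile)]
                Bs = [[B[bk + k][bj + j] for j in range(tile)] for k in range(tile)]
                reads += 2 * tile * tile
                for i in range(tile):
                    for j in range(tile):
                        C[bi + i][bj + j] += sum(As[i][k] * Bs[k][j] for k in range(tile))
    return C, reads


if __name__ == "__main__":
    n = 8
    A = [[i + j for j in range(n)] for i in range(n)]
    B = [[i - j for j in range(n)] for i in range(n)]
    C1, r1 = matmul_naive(A, B)
    C2, r2 = matmul_tiled(A, B, tile=4)
    print(C1 == C2, r1, r2)  # same result, 4x fewer reads with 4x4 tiles
```

The trade-off from the paragraph shows up directly. The tiled version wins only because the code knows exactly which elements it will reuse. An access pattern that is not known ahead of time gets no such help.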

Branch-heavy applications and many of the general-purpose compute applications that NVIDIA is going after need a real cache. So with Fermi at 40nm, NVIDIA gave them a real cache.

Attached to each SM is 64KB of configurable memory. It can be partitioned as 16KB/48KB or 48KB/16KB; one partition is shared memory, the other partition is an L1 cache. The 16KB minimum partition means that applications written for GT200 that require 16KB of shared memory will still work just fine on Fermi. If your app prefers shared memory, it gets 3x the space in Fermi. If your application could really benefit from a cache, Fermi now delivers that as well. GT200 did have an L1 texture cache (one per TPC), but the cache was mostly useless when the GPU ran in compute mode.
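Here is a minimal sketch of the decision the 64KB split implies for a kernel. `choose_split` is a hypothetical helper, not a CUDA API. It encodes only the two partitions described above, plus one rule: a kernel that needs more than 16KB of shared memory must take the 48KB/16KB setting.

```python
KB = 1024
# (shared memory, L1 cache) partitions of the 64KB per-SM array, per the text.
SPLITS = {"prefer_shared": (48 * KB, 16 * KB), "prefer_l1": (16 * KB, 48 * KB)}


def choose_split(shared_bytes_needed: int, wants_cache: bool) -> tuple[int, int]:
    """Return the (shared, L1) byte split for a kernel. Hypothetical helper."""
    if shared_bytes_needed > 48 * KB:
        raise ValueError("kernel needs more shared memory than the 48KB maximum")
    if shared_bytes_needed > 16 * KB or not wants_cache:
        return SPLITS["prefer_shared"]
    return SPLITS["prefer_l1"]


if __name__ == "__main__":
    # A GT200-era kernel that uses its full 16KB still fits, and can get 48KB of L1.
    print(choose_split(16 * KB, wants_cache=True))
    # A kernel that wants 3x GT200's shared memory must take the 48KB/16KB split.
    print(choose_split(48 * KB, wants_cache=True))
```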

The entire chip shares a 768KB L2 cache. The result is a reduced penalty for doing an atomic memory op; Fermi is 5-20x faster here than GT200.

415 Comments


457R4LDR34DKN07 - Thursday, October 1, 2009

A few points I have about this chip. First, it is massive, which leads me to believe it is going to be hot and use a lot of power (depending on frequencies). Second, it is a one-size-fits-all processor and not specifically a graphics processor. Third, it is going to be difficult to make with decent yields, i.e. expensive, and it will be hard to scale performance up. I do believe it will be fast due to the cache, but redesigning the cache will be hard for this monolith.

silverblue - Thursday, October 1, 2009

It should take the performance crown back from ATI, but I'm worried that it's going to be difficult to scale it down for lesser cards (which is where nVidia will make more of its money anyway).

When it's out and we can compare its performance as well as price with the 58x0 series, I'll be happier. Choice is never a bad thing. I also don't want nVidia to be too badly hurt by Larrabee, so it's in their best interests to get this thing out soon.

AnnonymousCoward - Thursday, October 1, 2009

The Atom is for mobile applications, and Intel is still designing faster desktop chips. The "Atom" of graphics is called "integrated", and it has been around forever. There's no reason to believe that PC games of 2010 won't require faster graphics.

The fact that nvidia wants to GROW doesn't mean their bread-and-butter business is going away. Every company wants to grow.

If Fermi's die size is significantly increased by adding stuff that doesn't benefit 3D games, that's a problem, and they should consider 2 different designs for Tesla and gaming. Intel has Xeon chips separate, don't they?

If digital displays overcome their 60Hz limitation, there will be more incentive for cards to render more than 60fps.

Lastly, Anand, you have a recurring grammar problem of separating two complete sentences with a comma. This is hard to read and annoying. Please either use a semicolon or start a new sentence. Two examples, on page 8, are the sentences that begin with "Display resolutions" and "The architecture". Aside from that, excellent article as usual.

Ananke - Thursday, October 1, 2009

Actually, NVidia is a great company, and so is AMD. However, NVidia cards recently tend to be more expensive than their counterparts, so WHY would somebody pay more for the same result?

If and when they bring that Fermi to the market, and if that thing is $200 per card delivered to me, I may consider buying. Most people here don't care if NVidia is capable of building supercomputers. They care if they can buy a decent gaming card for less than $200. Very simple economics.

SiliconDoc - Thursday, October 1, 2009

I'm not sure, other than there's another red raver ready on repeat, but if all that you and your "overwhelming number" of fps freaks care about is fps dollar bang, you still don't have your information correct.

Does ATI have a gaming presets panel, filled with a hundred popular games all configurable with one click of the mouse to get there?

Somehow, when Derek quickly put up the very, very disappointing new ati CCC shell, it was immediately complained about from all corners, and the worst part was lesser functionality in the same amount of clicks. A drop down mess, instead of a side spread nice bookmarks panel.

So really, even if you're only all about fps, at basically perhaps a few frames more at 2560x with 4xaa and 16aa on only a few specific games, less equal or below at lower rez, WHY would you settle for that CCC nightmare, or some other mushed up thing like ramming into atitool and manually clicking and typing in everything to get a gaming profile, or endless jacking with rivatuner ?

Not only that, but then you've got zero PhysX (certainly part of 3d gaming), no ambient occlusion, less GAME support with TWIMTBP dominating the field, and no UNIFIED 190.26 driver, but a speckling hack of various ati versions in order to get the right one to work with your particular ati card ?

---

I mean it's nice to make a big fat dream line that everything is equal, but that really is not the case at all. It's not even close.

-

I find ragin red roosters come back with "I don't run CCC!" To which of course one must ask: "Why not? Why can't you run your 'equal card' panel? Why is it - because it sucks?"

Well it most definitely DOES compared to the NVidia implementation.

--

Something usually costs more because, well, we all know why.

Divide Overflow - Thursday, October 1, 2009

Agreed. I'm a bit worried that this monster will cost an arm and a leg and won't scale well into consumer price points.

Kingslayer - Thursday, October 1, 2009

Silicon duck is the greatest fanboy I've ever seen; maybe less anonymity would quiet his rhetoric. http://www.automotiveforums.com/vbulletin/member.p...

tamalero - Thursday, October 1, 2009

Well, that answers everything. When someone has to spam the "catholic", he must be a Bible-thumper who only spreads a single thing and doesn't believe or accept any other information, even confirmed facts.

SiliconDoc - Thursday, October 1, 2009

What makes you think I'm "catholic"?

And it's interesting that you've thrown out another nutball cliché, anyway.

How is it that you've determined that a "catholic" doesn't accept "any other 'even confirmed' facts" ? ( I rather doubt you know what Confirmation is, so you don't get a pun point, and that certainly doesn't prove I'm anything but knowledgeable. )

Or even a "Bible thumper" ?

Have you ever met a bible thumper?

Be nice to meet one some day, guess you've been sinnin' yer little lying butt off - you must attract them! Not sure what that proves either, other than it is just as confirmed a fact as you've ever shared.

I suppose that puts 95% of the world's population in your idiot bucket, since that's low, giving 5% to atheists, probably not that many.

So in your world, you, the atheist, and your less than 5%, are those who know the facts ? LOL

About what ? LOL

Now aren't you REALLY talking about, yourself, and all your little lying red ragers here ?

Let's count the PROOFS you and yours have failed; I'll be generous:

1. Paper launch definition

2. Not really NVIDIA launch day

3. 5870 is NOT 10.5" but 11.1" IN FACT, and longer than 285 and 295

4. GT300 is already cooked and cards are being tested just not by your red rooster master, he's low down on the 2 month plus totem pole

5. GT300 cores have a good yield

6. ati cores did/do not have a good yield on 5870

7. Nvidia is and has been very profitable

8. ati amd have been losing lots of money, BILLIONS on billions sold BAD BAD losses

9. ati cores as a general rule and NEARLY ALWAYS have hotter running cores as released, because of their tiny chip that causes greater heat density with the same, and more and even less power usage, this is a physical law of science and cannot be changed by red fan wishes.

10. NVIDIA has a higher market share 28% than ati who is 3rd and at only 18% or so. Intel actually leads at 50%, but ati is LAST.

---

Shall we go on, close minded, ati card thumping red rooster ?

---

I mean it's just SPECTACULAR that you can be such a hypocrite.

tamalero - Friday, October 2, 2009

1.- Since when are the yields of the 5870 lower than the GT300's? They use the same tech, and since the 5870 is a less complex, smaller core, it will obviously have HIGHER YIELDS. (Also, where are your sources? I want facts, not your imaginary friend who tells you stuff.)

2.- Nvidia wasn't profitable last year, when they got caught shipping defective chipsets and were forced by ATI to lower the prices of the GT200 series.

3.- Only Nvidia said "everything is OK" while demonstrating no working silicon; that's not the way to show that the yields are "OK".

4.- Only the AMD division is losing money. ATI is earning and will for sure earn a lot more now that the 58XX series is selling like hot cakes.

5.- 50% if you count the INTEGRATED market, which is not the focus of ATI; ATI and NVidia are mostly focused on discrete graphics.

Intel has 0% of the discrete market.

And actually Nvidia would be the one to disappear first, as they don't have exclusivity for their SLI and Core i7/Core i5 chipsets,

while ATI can with no problem produce stuff for their AMD mobos.

And dude, I might be from another country, but at least I'm not trying to spit insults every second like you, especially when proven wrong with facts.

please, do the world a favor and get your medicine.