GF100’s Gaming Architecture

Although it feels like ages ago, NVIDIA announced the Fermi architecture back in September of 2009, focusing on the compute abilities of the GPU that would become GF100. Today’s announcement fills in the blanks: where the graphics hardware fits into the design that NVIDIA revealed earlier.

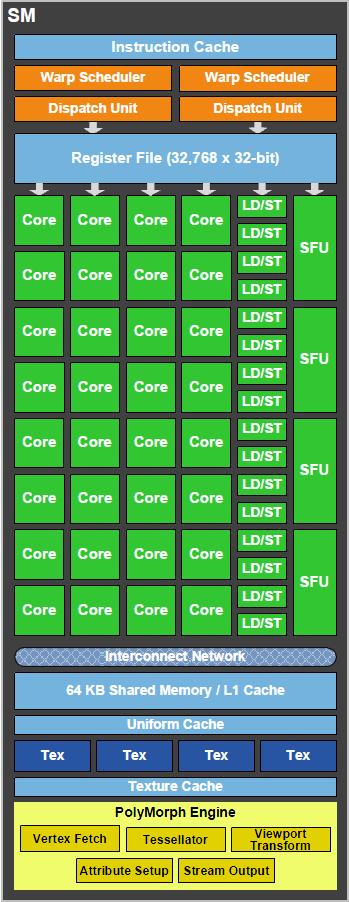

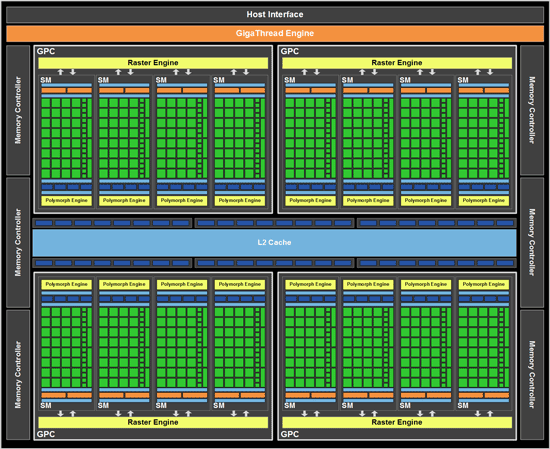

As a quick refresher, GF100 is composed of 512 SPs, which NVIDIA now calls CUDA cores. The CUDA cores are organized into blocks of 32 called Streaming Multiprocessors (SMs), which in turn are grouped 4 to a Graphics Processing Cluster (GPC). Finally, there are 4 GPCs in a single GF100 chip: 32 x 4 x 4 = 512 CUDA cores.
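The hierarchy multiplies out as follows. This is a minimal Python sketch using only the counts given above; the constant and function names are our own, and the function simply multiplies the three levels together.

```python
# GF100 shader hierarchy as described: 32 CUDA cores per SM,
# 4 SMs per GPC, 4 GPCs per chip.
CORES_PER_SM = 32
SMS_PER_GPC = 4
GPCS_PER_CHIP = 4

def cuda_cores(gpcs: int = GPCS_PER_CHIP,
               sms_per_gpc: int = SMS_PER_GPC,
               cores_per_sm: int = CORES_PER_SM) -> int:
    """Total CUDA cores for a chip built from this GPC/SM hierarchy."""
    return gpcs * sms_per_gpc * cores_per_sm

total_sms = GPCS_PER_CHIP * SMS_PER_GPC
print(total_sms, cuda_cores())  # 16 512
```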

| | GF100 | GT200 x2 (GTX 295) | GT200 (GTX 285) | G92 (9800 GTX+) |
|---|---|---|---|---|
| Stream Processors | 512 | 2 x 240 | 240 | 128 |
| Texture Address / Filtering | 64 / 256 | 2 x (80 / 80) | 80 / 80 | 64 / 64 |
| ROPs | 48 | 2 x 28 | 32 | 16 |
| Core Clock | ? | 576MHz | 648MHz | 738MHz |
| Shader Clock | ? | 1242MHz | 1476MHz | 1836MHz |
| Memory Clock | ? GDDR5 | 999MHz (1998MHz data rate) GDDR3 | 1242MHz (2484MHz data rate) GDDR3 | 1100MHz (2200MHz data rate) GDDR3 |
| Memory Bus Width | 384-bit | 2 x 448-bit | 512-bit | 256-bit |
| Frame Buffer | ? | 2 x 896MB | 1GB | 512MB |
| Transistor Count | 3B | 2 x 1.4B | 1.4B | 754M |
| Manufacturing Process | TSMC 40nm | TSMC 55nm | TSMC 55nm | TSMC 55nm |
| Price Point | $? | $500 | $400 | $150 - 200 |

When NVIDIA first unveiled GF100, we knew nothing about the ROPs, texture units, or any of the other fixed-function graphics units that are customary in a GPU. Today we have that information, and can discuss where it all fits.

While GF100 resembles GT200 in a number of ways as a compute GPU, as a gaming GPU it’s very close to being a complete departure from GT200. The big change here is that the single block of fixed-function hardware as we know it is gone. Virtually every piece of that hardware has been split up and moved down a level, and can now be found as part of a GPC or an SM. NVIDIA’s GF100 block diagram does a good job of showing this.

What’s left of the fixed-function hardware is the GigaThread Engine, which is what NVIDIA calls its scheduler. To understand where the rest of the fixed-function hardware went, we need to meet the newest execution units: the PolyMorph Engine and the Raster Engine.

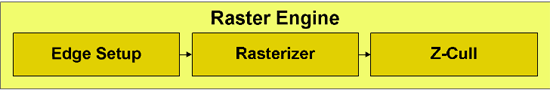

We’ll start with the Raster Engine, which combines all of the raster-related hardware that we would previously have found in the fixed-function pipeline. It performs edge/triangle setup, rasterization, and z-culling in a pipelined manner. NVIDIA has little to say about the Raster Engine, and very little has changed from GT200 besides the fact that there are now 4 of them (one per GPC) instead of 1 larger block. Each Raster Engine can process 8 pixels per clock, for a total of 32 pixels per clock across all of GF100.

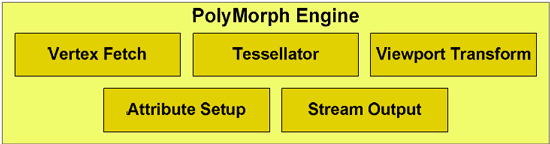

The more important of the two new execution units is the PolyMorph Engine, NVIDIA’s name for the execution unit that handles geometry in GF100. The PolyMorph Engine is responsible for Vertex Fetch, Tessellation, Viewport Transform, Attribute Setup, and Stream Output. This is where the tessellator is located, one of the biggest changes that DX11 brings to GPU design. Unlike the Raster Engine, which exists once per GPC, there is a PolyMorph Engine in every SM, giving GF100 16 PolyMorph Engines altogether.

While the PolyMorph Engine may sound simple in its description, don’t let that fool you. NVIDIA didn’t just move their geometry hardware to a different place, clone it 15 times, and call it a day. This was previously fixed-function hardware, where a single unit sat in a pipeline and did its share of the work. By splitting up the fixed-function pipeline like this, NVIDIA actually created a lot of work for themselves. Why? Out-of-order execution.

Out-of-order (OoO) execution is something we usually associate with CPUs, where high-end designs execute instructions out of order to extract more performance through instruction-level parallelism. OoO is very hard to accomplish, because an instruction can only be executed ahead of others if the result still comes out correct. Execute an add instruction that depends on a previous operation before that operation is done, and you have problems. GF100 isn’t a full OoO design, so we’re not going to cover OoO in depth here, but if you’d like to know more, please see this article.

At any rate, internally each PolyMorph Engine is still a simple in-order design; NVIDIA hasn’t gone so far as to make the PolyMorph Engine an OoO design. But because there are now 16 of them where there used to be just 1, OoO hazards can occur just as they would in an OoO CPU. NVIDIA now has to keep track of what each PolyMorph Engine is doing with respect to the other 15, and put the brakes on any that get too far ahead in order to maintain the integrity of results.

To resolve these OoO hazards, GF100 has a private communication channel just for the PolyMorph Engines that allows them to stay together on a task in spite of being spread apart. The amount of work that goes into making a design like this function correctly is immense, and NVIDIA’s engineers are quite proud of it. They have taken the monolithic geometry units of prior GPUs and made them parallel. We can’t overstate how much of an engineering challenge this is.
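NVIDIA hasn’t described how the PolyMorph Engines coordinate beyond that private channel, so the following is only a conceptual Python sketch of the general technique CPUs use for the same problem (an in-order retirement buffer), not GF100’s actual mechanism. The class name, the `window` limit that stands in for "putting the brakes on" an engine, and the retirement policy are all our own assumptions.

```python
from dataclasses import dataclass, field

@dataclass
class InOrderRetirer:
    """Hypothetical model: results may finish out of order on parallel
    engines, but are released strictly in submission order."""
    window: int                                   # max run-ahead distance (assumed)
    next_to_retire: int = 0                       # oldest unretired primitive
    pending: dict = field(default_factory=dict)   # seq -> finished result
    retired: list = field(default_factory=list)   # results in original order

    def may_start(self, seq: int) -> bool:
        # Stall any engine that would get too far ahead of the oldest unretired work.
        return seq < self.next_to_retire + self.window

    def complete(self, seq: int, result) -> None:
        # Buffer the finished result, then drain everything that is now in order.
        self.pending[seq] = result
        while self.next_to_retire in self.pending:
            self.retired.append(self.pending.pop(self.next_to_retire))
            self.next_to_retire += 1

r = InOrderRetirer(window=4)
print(r.may_start(3), r.may_start(4))  # True False: primitive 4 must wait
for seq in (2, 0, 3, 1):               # four engines finish out of order
    r.complete(seq, f"prim{seq}")
print(r.retired)  # ['prim0', 'prim1', 'prim2', 'prim3']
```

A larger window lets engines run further ahead at the cost of more buffering, which is the same trade-off a CPU makes when sizing its reorder buffer.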

However, all of this work came at a cost, and not just the significant engineering resources NVIDIA threw at GF100. The other cost was time: we believe that the PolyMorph Engine is the single biggest reason that GF100 didn’t make it out last year. It’s the most extensive redesign of any component in GF100, and something NVIDIA had to start virtually from scratch on. When NVIDIA told us that designing a big GPU is hard, this is what they had in mind.

Now why did NVIDIA put themselves through all of this? Because in their eyes, they had to. They considered a fixed-function pipeline a poor choice given the geometric complexity that a tessellator would create, and hence the entire pipeline needed to be rebalanced. By moving to the parallel design of the PolyMorph Engine, NVIDIA’s geometry hardware is no longer bound by the limits of a pipelined fixed-function design (such as a bottleneck in one stage of the pipeline), and for better or for worse, they can scale their geometry and raster abilities with the size of the chip. A smaller GF100 derivative will not have as many PolyMorph or Raster Engines as GF100, and as a result won’t have the same level of geometry performance; G92 derivatives and AMD’s designs both keep the same fixed-function pipeline across all chips, always offering the same level of performance.
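The scaling argument can be made concrete with the per-unit figures given earlier: one Raster Engine per GPC at 8 pixels per clock, and one PolyMorph Engine per SM. In this Python sketch the 2-GPC configuration is purely hypothetical, since NVIDIA has not announced any GF100 derivatives, and we assume a derivative would keep 4 SMs per GPC.

```python
# Per-unit figures from the text: one Raster Engine per GPC (8 pixels/clock each),
# one PolyMorph Engine per SM.
RASTER_PIXELS_PER_CLOCK = 8

def geometry_resources(gpcs: int, sms_per_gpc: int = 4) -> dict:
    """Geometry/raster resources for a chip built from GF100-style GPCs."""
    return {
        "raster_engines": gpcs,
        "polymorph_engines": gpcs * sms_per_gpc,
        "raster_pixels_per_clock": gpcs * RASTER_PIXELS_PER_CLOCK,
    }

full = geometry_resources(gpcs=4)  # GF100 as described
half = geometry_resources(gpcs=2)  # hypothetical half-size derivative
print(full)  # {'raster_engines': 4, 'polymorph_engines': 16, 'raster_pixels_per_clock': 32}
print(half)  # {'raster_engines': 2, 'polymorph_engines': 8, 'raster_pixels_per_clock': 16}
```

A single fixed-function pipeline would return the same numbers regardless of chip size, which is exactly the difference described above.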

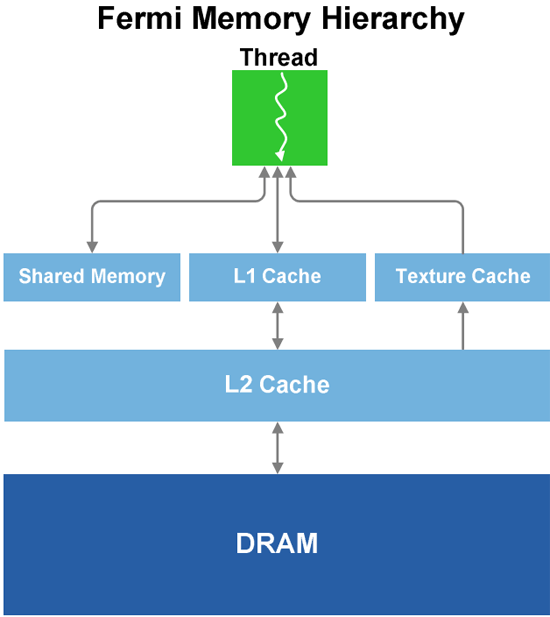

Speaking of performance, we’ll get to that in a bit, but for now we’ll finish our discussion of GF100’s graphics units. Each SM has 4 texture units, which have changed somewhat from GT200. In GT200 the texture units were at the TPC level; here they are per SM. The texture units are also affected by GF100’s cache reorganization: for L1 they still have the same 12KB of texture cache per set of 4 texture units, while they all share GF100’s 768KB L2 cache, which is now used by all execution units and not just textures. Each unit can compute 1 texture address and fetch 4 texture samples per clock, a significantly higher texture fetch rate than on GT200. Furthermore, in line with DX11, NVIDIA’s texture units now support DX11’s new compressed texture formats. Finally, the texture units are now tied to the shader clock rather than the core clock: they run at 1/2 of GF100’s shader clock.
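Those per-unit figures account for the 64 / 256 entry in the table above. The Python sketch below uses only numbers from the text; expressing the rate per shader clock is our own derived figure, based on the texture units running at half the shader clock.

```python
# Figures from the text: 4 texture units per SM, 16 SMs, each unit computing
# 1 address and fetching 4 samples per clock of the GPC clock (1/2 shader clock).
TEXTURE_UNITS = 4 * 16
ADDRESSES_PER_UNIT = 1
SAMPLES_PER_UNIT = 4
SHADER_TO_GPC_DIVIDER = 2

def texture_rates() -> dict:
    """Texture address/sample throughput per GPC clock and per shader clock."""
    addresses = TEXTURE_UNITS * ADDRESSES_PER_UNIT
    samples = TEXTURE_UNITS * SAMPLES_PER_UNIT
    return {
        "per_gpc_clock": (addresses, samples),
        # Two shader clocks elapse per GPC clock, so the per-shader-clock rate halves.
        "per_shader_clock": (addresses / SHADER_TO_GPC_DIVIDER,
                             samples / SHADER_TO_GPC_DIVIDER),
    }

print(texture_rates())
# {'per_gpc_clock': (64, 256), 'per_shader_clock': (32.0, 128.0)}
```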

Last but not least, this brings us to the ROPs. The ROPs have been reorganized: there are now 48 of them in 6 partitions of 8, with a 64-bit memory channel serving each partition. The ROPs now share the L2 cache with the rest of GF100, whereas under GT200 they had their own L2 cache. Each ROP can process 1 regular 32-bit pixel per clock, 1 FP16 pixel over 2 clocks, or 1 FP32 pixel over 4 clocks, giving GF100 the ability to retire 48 regular pixels per clock. The ROPs are clocked together with the L2 cache.
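The partition layout also explains the 384-bit memory bus in the table, and the per-ROP rates give chip-wide figures for each pixel format. In this Python sketch the FP16 and FP32 totals are our own arithmetic, assuming all 48 ROPs work in parallel at the per-ROP rates NVIDIA quoted.

```python
ROP_PARTITIONS = 6
ROPS_PER_PARTITION = 8
CHANNEL_BITS_PER_PARTITION = 64

# Clocks each ROP needs per pixel, by format (from the text).
CLOCKS_PER_PIXEL = {"32-bit": 1, "FP16": 2, "FP32": 4}

rops = ROP_PARTITIONS * ROPS_PER_PARTITION                  # 48 ROPs
bus_width_bits = ROP_PARTITIONS * CHANNEL_BITS_PER_PARTITION  # 6 x 64-bit channels
# Assumes all ROPs run in parallel; rates divide evenly, so integer division is exact.
pixels_per_clock = {fmt: rops // clocks for fmt, clocks in CLOCKS_PER_PIXEL.items()}

print(rops, bus_width_bits)  # 48 384
print(pixels_per_clock)      # {'32-bit': 48, 'FP16': 24, 'FP32': 12}
```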

That leaves us with a final note: clocks. The core clock has been virtually done away with on GF100, as almost every unit now operates at the shader clock or a fraction of it. Only the ROPs and L2 cache operate on a different clock, which is best described as what’s left of the core clock. The shader clock now drives the majority of the chip, including the shaders, the texture units, and the new PolyMorph and Raster Engines. Specifically, the texture units, PolyMorph Engine, and Raster Engine all run at 1/2 the shader clock (which NVIDIA is tentatively calling the "GPC Clock"), while the L1 cache and the shaders themselves run at the full shader clock. Don’t be surprised if GF100 overclocking differs from GT200 overclocking as a result.
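The clock domains described above can be summarized as a small table of ratios. This Python sketch is illustrative only: GF100’s final clocks have not been announced, so the 1400MHz shader clock and 650MHz ROP/L2 clock in the usage line are made-up numbers, and `unit_clocks` is a hypothetical helper of our own.

```python
# Clock domains as described: most units run at the shader clock or the
# "GPC Clock" (1/2 shader clock); the ROPs and L2 keep a separate clock.
SHADER_CLOCK_RATIOS = {
    "shaders": 1.0,
    "L1 cache": 1.0,
    "texture units": 0.5,
    "PolyMorph Engine": 0.5,
    "Raster Engine": 0.5,
}
SEPARATE_CLOCK_UNITS = ("ROPs", "L2 cache")

def unit_clocks(shader_mhz: float, rop_l2_mhz: float) -> dict:
    """Map each unit to its clock in MHz for the given (hypothetical) clocks."""
    clocks = {unit: shader_mhz * ratio for unit, ratio in SHADER_CLOCK_RATIOS.items()}
    clocks.update({unit: rop_l2_mhz for unit in SEPARATE_CLOCK_UNITS})
    return clocks

# Illustrative numbers only; not announced GF100 specifications.
clocks = unit_clocks(shader_mhz=1400, rop_l2_mhz=650)
print(clocks["texture units"], clocks["shaders"], clocks["ROPs"])  # 700.0 1400.0 650
```

Under this model, raising the shader clock moves the shaders, texture units, and geometry/raster engines together, while the ROPs and L2 cache only respond to the separate clock, which is why GF100 overclocking may behave differently from GT200.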

115 Comments

chizow - Monday, January 18, 2010

Looks like Nvidia G80'd the graphics market again by completely redesigning major parts of their rendering pipeline. Clearly not just a doubling of GT200, some of the changes are really geared toward the next-gen of DX11 and PhysX-driven games. One thing I didn't see mentioned anywhere was HD sound capabilities similar to AMD's 5 series offerings. I'm guessing they didn't mention it, which makes me think it's not going to be addressed.

mm2587 - Monday, January 18, 2010

for nvidia to "g80" the market again they would need parts far faster than anything amd had to offer and to maintain that lead for several months. The story is in fact reversed. AMD has the significantly faster cards and has had them for months now. gf100 still isn't here and the fact that nvidia isn't singing the praises of its performance up and down the streets is a sign that they're acceptable at best. (acceptable meaning faster than a 5870, a chip that's significantly smaller and cheaper to make)

chizow - Monday, January 18, 2010

Nah, they just have to win the generation, which they will when Fermi launches. And by "generation", I mean the 12-16 month cycles dictated by process node and microarchitecture. It was similar with G80: R580 had the crown for a few months until G80 obliterated it. Even more recently with the 4870X2 and GTX 295. AMD was first to market by a good 4 months but Nvidia still won the generation with GTX 295.

FaaR - Monday, January 18, 2010

Win schmin. The 295 ran extremely hot, was much MUCH more expensive to manufacture, and the performance advantage in games was negligible for the most part. No game is so demanding the 4870 X2 can't run it well.

The geforce 285 is at least twice as expensive as a radeon 4890, its closest competitor, so how you can say Nvidia "won" this round is beyond me.

But I suppose with fanboy glasses on you can see whatever you want to see. ;)

beck2448 - Monday, January 18, 2010

It's amazing to watch ATI fanboys revise history. The 295 smoked the competition and ran cooler and quieter. Fermi will inflict another beatdown soon enough.

chizow - Monday, January 18, 2010

Funny, the 295 ran no hotter (and often cooler) with a lower TDP than the 4870X2 in virtually every review that tested temps, and was faster as well. Also, the GTX 285 didn't compete with the 4890; the 275 did, in both price and performance. It's obvious Nvidia won the round as these points are historical facts based on mounds of evidence. I suppose with fanboy glasses on you can see whatever you want to see. ;)

Paladin1211 - Monday, January 18, 2010

Hey kid, sometimes less is more. You don't need to post that much just to say "nVidia wins, and will win again". This round AMD has won with 2mil cards drying up the graphics market. You can't change this, and neither could nVidia. Just come out and buy a Fermi, which is 15-20% faster than a HD 5870, for $500-$600. You only have to wait 3 months, and save some bucks until then. I have a HD 5850 here and I'm waiting for a Tegra 2-based smartphone, not Fermi.

Calin - Tuesday, January 19, 2010

Both Tegra 2 and Fermi are extraordinary products - if what NVidia says about them is true. Unfortunately, it doesn't seem like any of them is a perfect fit for the gaming desktop.

Calin - Monday, January 18, 2010

You don't win a generation with a very-high-end card - you win a generation with a mainstream card (as this is where most of the profits are). Also, low-end cards are very high-volume, but the profit from each unit is very small. You might win the bragging rights with the $600, top-of-the-line, two-in-one cards, but they don't really have a market share.

chizow - Monday, January 18, 2010

But that's not how Nvidia's business model works for the very reasons you stated. They know their low-end cards are very high-volume and low margin/profit and will sell regardless. They also know people buying in these price brackets don't know about or don't care about features like DX11 and as the 5670 review showed, such features are most likely a waste on such low-end parts to begin with (a 9800GT beats it pretty much across the board).

The GPU market is broken up into 3 parts: high-end, performance, and mainstream. GF100 will cover high-end and the top tier in performance, with GT200 filling in the rest to compete with the lower-end 5850. Eventually the technology introduced in GF100 will diffuse down to lower-end parts in that mainstream segment, but until then, Nvidia will deliver the cutting-edge tech to those who are most interested in it and willing to pay the premium for it: high-end and performance-minded individuals.