The RV870 Story: AMD Showing up to the Fight

by Anand Lal Shimpi on February 14, 2010 12:00 AM EST, posted in GPUs

TPS Rep...err PRS Documents

At ATI there’s a document called the Product Requirement Specification, PRS for short. It was originally a big text document written in Microsoft Word.

The purpose of the document is to collect all of the features that have to go into the GPU being designed, and try to prioritize them. There are priority 1 features, which are the must-haves in the document. Very few of these get canned. Priority 2, priority 3 and priority 4 features follow. The higher the number, the less likely the feature is to make it into the final GPU.

When Carrell Killebrew first joined ATI, his boss at the time (Dave Orton) tasked him with changing this document. Orton asked Carrell to put together a PRS that didn’t let marketing come up with excuses for failure. This document would be a laundry list of everything marketing wanted in ATI’s next graphics chip. At the same time, the document wouldn’t let engineering do whatever it wanted to do. It would be a mix of what marketing wanted and what engineering could do. Orton wanted this document to be enough of a balance that everyone, whether from marketing or engineering, would feel bought in once it was done.

Carrell joined in 2003, but how ATI developed the PRS didn’t change until 2005.

The Best Way to Lose a Fight - How R5xx Changed ATI

In the 770 story I talked about how ATI’s R520 delay caused a ripple effect impacting everything in the pipeline, up to and including R600. It was during that same period (2005) that ATI fundamentally changed its design philosophy. ATI became very market-schedule driven.

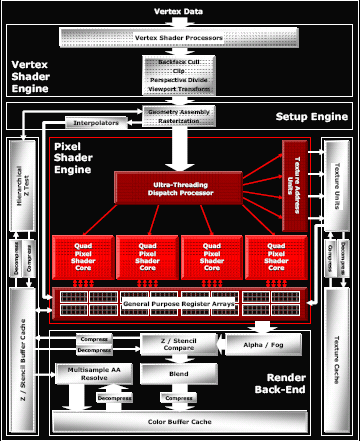

ATI's R520 Architecture. It was delayed.

The market has big bulges and you had better deliver at those bulges. The Q4 holiday season and major DirectX or Windows releases are important bulges in the market, and you want product ready for them. OEM notebook design cycles are also very important to align your products with. You have to deliver at these bulges. ATI’s Eric Demers (now the CTO of AMD's graphics group) put it best: if you don’t show up to the fight, by default, you lose. ATI was going to stop not showing up to the fight.

ATI’s switch to being more schedule driven meant that feature lists had to be kept under control, which meant that Carrell had to do an incredible job drafting that PRS.

What resulted was the 80% rule. The items that made it onto the PRS were features that engineering felt had at least an 80% chance of working on time. Everyone was involved in this process. Every single senior engineer, everyone. Marketing and product managers got their opportunities to request what they wanted, but nothing got committed to without some engineer somewhere believing that the feature could most likely make it without slipping schedule.

This changed a lot of things.

First, it massively increased the confidence level of the engineering team. There’s this whole human nature aspect to everything in life, it comes with being human. Lose confidence and execution sucks, but if you are working towards a realistic set of goals then morale and confidence are both high. The side effect is that a passionate engineer will also work to try and beat those goals. Sly little bastards.

The second change is that features are more easily discarded. Having 200 features on one of these PRS documents isn’t unusual. Getting it down to about 80 is what ATI started doing after R5xx.

In the past ATI would always try to accommodate new features and customer requests. But the R5xx changes meant that if a feature was going to push the schedule back, it wasn’t making it in. Recently Intel changed its design policy, stating that any feature that was going into the chip had to increase performance by 2% for every 1% increase in power consumption. ATI’s philosophy stated that any feature going into the chip couldn’t slip schedule. Prior to the R5xx generation ATI wasn’t really doing this well; serious delays within this family changed all of that. It really clamped down on feature creep, something that’s much worse in hardware than in software (bigger chips aren’t fun to debug or pay for).

132 Comments

AdiQue - Sunday, February 14, 2010

I fully subscribe to the point raised by a few previous posters. Namely, the article is such a worthy read that it actually justifies creating an account for the sheer purpose of expressing appreciation for your fantastic work, which does stand out in the otherwise well saturated market of technology blogs.

geok1ng - Sunday, February 14, 2010

"I almost wonder if AMD’s CPU team could learn from the graphics group's execution. I do hope that along with the ATI acquisition came the open mindedness to learn from one another"

It would be a true concern if based on mere observation, but the hard facts are so much more terrible: AMD fired tons of ATI personnel, hence ATI drivers are years behind NVIDIA. We are still begging for centered timings on ATI cards, a feature that NVIDIA offered 6 generations ago! ATI produces cards that are gameless. DirectX 10.1?! There was a single game with DirectX 10.1 support, and NVIDIA made the game developer REMOVE DirectX 10.1 features with a game patch that "increased" performance. DirectX 11?! ATI has to put money into its driver development team and spend TONS of cash on game development.

I would be a happier customer if the raw performance of my 4870X2 was paired with the seamless driver experience of my previous 8800GT.

And another game that AMD was too late to is the netbook and ultra-low-voltage mobile market. A company with expertise in integrated graphics and HTPC GPUs with ZERO market share in this segment?! Give me a break!

LordanSS - Monday, February 15, 2010

Funny... after the heaps of problems I had with drivers, stability and whatnot with my old 8800GTS (the original one, 320MB), I decided to switch to ATI with a 4870. Don't regret doing that.

My only gripe with my current 5870 is the drivers and the stupid giant mouse cursor. The Catalyst 9.12 hotfix got rid of it, but it came back on the 10.1.... go figure. Other than that, haven't had problems with it and have been getting great performance.

blackbrrd - Monday, February 15, 2010

I think the reason he had issues with the X2 is that it's a dual card. I think most gfx card driver problems come from dual cards in any configuration (dual, crossfire, sli).

The reason you had issues with the 320mb card is that it had some real issues because of the half-memory. The 320mb cards were cards originally intended as gtx cards, but binned as gts cards that again got binned as 320mb cards instead of 640mb cards. Somehow Nvidia didn't test these cards well enough.

RJohnson - Sunday, February 14, 2010

Please get back under your bridge troll...

Warren21 - Sunday, February 14, 2010

Are you kidding me? Become informed before you spread FUD like this. I've been able to choose centered timings in my CCC since I got my 2900 Pro back in fall 2007. Even today on my CrossFire setup you can still use it.

As for your DX10.1 statement, thank NVIDIA for that. You must remember that THEY are the 600lb gorilla of the graphics industry - I fail to see how the exact instance you cite does anything other than prove just that.

As for the DX11 statement, if NVIDIA had it today I bet you'd be singing a different tune. The fact that it's here today is because of Microsoft's schedule which both ATI and NVIDIA follow. NV would have liked nothing more than to have Fermi out in 2009, believe that.

Kjella - Sunday, February 14, 2010

"AMD fired tons of ATI personnel, hence ATI drivers are years behind NVIDIA-"

Wow, you got it backwards. The old ATI drivers sucked horribly, they may not be great now either but whatever AMD did or didn't do the drivers have been getting better, not worse.

Scali - Sunday, February 14, 2010

It's a shame that AMD doesn't have its driver department firing on all cylinders like the hardware department is.

The 5000-series are still plagued with various annoying bugs, such as the video playback issues you discovered, and the 'gray screen' bug under Windows 7.

Then there's OpenCL, which still hasn't made it into a release driver yet (while nVidia has been winning over many developers with Cuda and PhysX in the meantime, and also offers OpenCL support in release drivers, with a wider set of features than AMD and better performance).

And through the months that I've had my 5770 I've noticed various rendering glitches as well, although most of them seem to have been solved with later driver updates.

And that's just the Windows side. Linux and OS X aren't doing all that great either. FreeBSD isn't even supported at all.

hwhacker - Sunday, February 14, 2010

I don't log in and comment very often, but had to for this article.

Anand, these types of articles (Rv770, 'Rv870', and SSD) are beyond awesome. I hope it continues for Northern Islands and beyond. Everything from the RV870 jar tidbit to the original die spec to the SunSpotting info. It's great that AMD/ATi allows you to report this information, and that you have the journalistic chops to inquire/write about it. I cannot provide enough praise. I hope Kendell and his colleagues (like Henri Richard) continue this awesome 'engineering honesty' PR into the future. The more they share, within understandable reason, the more I believe a person can trust a company and therefore support it.

I love the little dropped hints BTW. Was R600 supposed to be 65nm but early TSMC problems caused it to revert to 80nm, as was rumored? Was Cypress originally planned as ~1920 shaders (2000?) with a 384-bit bus? Would sideport have helped the scaling issues with Hemlock? I don't know these answers, but the fact all of these things were indirectly addressed (without upsetting AMD) is great to see explored, as it affirms my belief I'm not the only one interested in them. It's great to learn the informed why, not just the unsubstantiated what.

If I may preemptively pose an inquiry: when NI is briefed, please ask whoever at AMD whether TSMC canceling their 32nm node and moving straight to 28nm had anything to do with redesigns of that chip. There are rumors it caused them to rethink what the largest chip should be, and perhaps revert to the original Cypress design (as hinted in this article?) for that chip, causing a delay from Q2-Q3 to Q3-Q4, not unlike the 30-45 day window you mention about redesigning Cypress. I wonder if NI was originally meant to be a straight shrink?

hwhacker - Sunday, February 14, 2010

I meant Carrell above. Not quite sure why I wrote Kendell.