Understanding the Cell Microprocessor

by Anand Lal Shimpi on March 17, 2005 12:05 AM EST - Posted in CPUs

High Level Overview of Cell

Cell is just as much of a multi-core processor as the upcoming multi-core CPUs from AMD and Intel, the only difference being that Cell's architecture doesn't have an entirely homogeneous set of cores.

Cell's Execution Cores

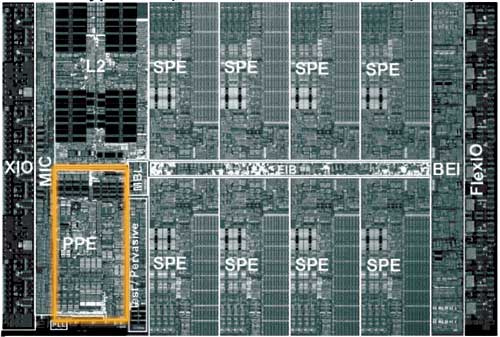

The Cell architecture debuted in a configuration of 9 independent cores: one PowerPC Processing Element (PPE) and eight Synergistic Processing Elements (SPEs). The PPE and SPEs are obviously different, but all eight SPEs are identical to one another.

The PPE is IBM's major contribution to the Cell project; it also appears to be very similar to the core being used in the next Xbox console.

The PPE has a 64KB L1 cache and a 512KB L2 cache, and it supports SMT, similar to Intel's Hyper-Threading. It is a strictly in-order core, something the desktop x86 market hasn't seen since the death of the original Pentium (the Pentium Pro brought out-of-order execution to the x86 market), so the move to an in-order core is an interesting one. The PPE is also only a 2-issue core, meaning that, at best, it can execute two instructions simultaneously. For comparison, the Athlon 64 is a 3-issue core, so immediately, you get the sense that the PPE is a much simpler core than anything that we have on the desktop. IBM's VMX instruction set (aka Altivec) is also supported by the PPE. Much like the rest of the Cell processor, the PPE is designed to run at very high clock speeds.

There's not much that's impressive about the PPE, other than that it's a small, fast, efficient core. Put up against a Pentium 4 or an Athlon 64, the PPE would undoubtedly lose, but the PPE's architecture is one answer to a shift in the performance paradigm. Performance in business/office applications requires a very powerful, very fast general purpose microprocessor, but performance in a game console, for example, does not. The original Xbox used a modified Intel Celeron processor running at 733MHz at a time when the fastest desktops had 2.0GHz Pentium 4s and 1.60GHz Athlon XPs. Given that the first implementation of Cell is supposed to be in Sony's PlayStation 3, the simplicity of the PPE is not surprising. Should Cell ever make its way into a PC, the PPE would definitely have to be beefed up, or at least paired with multiple other PPEs.

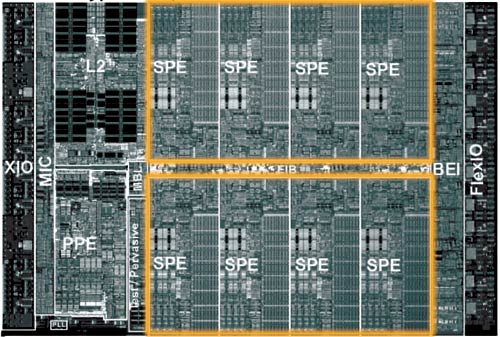

The majority of the Cell's die is composed of the eight Synergistic Processing Elements (SPEs). If you consider the PPE to be a general purpose microprocessor, think of the SPEs as general purpose processors with a slightly more specific focus.

The SPEs have no branch predictor, meaning that they rely solely on software branch prediction. There are ways that the compiler can avoid branches, and the SPE architecture lends itself very well to things like loop unrolling. Any elementary programmer is familiar with a loop, where one or more lines of code are repeated until a certain condition is met. The checking of that condition (e.g. i < 100) often results in a branch, so one way of removing that branch is simply to unroll the loop. If you have a statement in a loop that is supposed to execute 100 times, you could either keep it in the loop and execute it that way, or you could remove the loop and simply copy the statement 100 times. The end result is the same; the only difference is that one version evaluates a branch condition on every iteration, while the other has no loop branch but consists of more lines of code.
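As a rough illustration of the idea, here is a minimal sketch in plain C (not SPE-specific code). The function names `scale_rolled` and `scale_unrolled4` are made up for this example, and the unroll factor of 4 is an arbitrary choice. Both functions multiply every element of an array by a constant; the unrolled version tests its loop condition once per four elements instead of once per element.

```c
#include <stddef.h>

/* Rolled version: the loop condition (a branch) is checked once per element. */
void scale_rolled(float *data, size_t n, float k)
{
    for (size_t i = 0; i < n; i++) {
        data[i] *= k;
    }
}

/* Unrolled by 4 (hypothetical factor): the loop condition is checked
 * once per group of four elements, so there are fewer branches overall. */
void scale_unrolled4(float *data, size_t n, float k)
{
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        data[i]     *= k;
        data[i + 1] *= k;
        data[i + 2] *= k;
        data[i + 3] *= k;
    }
    /* Remainder loop: handles the last n % 4 elements. */
    for (; i < n; i++) {
        data[i] *= k;
    }
}
```

In practice a compiler will often perform this transformation itself at higher optimization levels; writing it by hand here only makes the trade-off visible: fewer branches in exchange for more code.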

The problem with loop unrolling is that you need a large number of registers to unroll some loops, which is one reason that each SPE has 128 registers. Originally, the SPEs were supposed to use the VMX (Altivec) ISA, but because of a need for more than 32 architectural registers, the SPEs implemented a new ISA with support for 128 registers.
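To see why unrolling consumes registers, consider a sketch of a summation loop in plain C. The names `sum_one_acc` and `sum_four_acc` are hypothetical, and integer data is assumed so that both versions return exactly the same result. The unrolled version keeps four independent running totals so that the additions do not all wait on a single value; each of those totals, plus the values being loaded, wants its own register. Deeper unrolling multiplies that demand, which is where a large register file helps.

```c
#include <stddef.h>

/* One accumulator: every addition depends on the previous one. */
long sum_one_acc(const int *v, size_t n)
{
    long total = 0;
    for (size_t i = 0; i < n; i++) {
        total += v[i];
    }
    return total;
}

/* Four accumulators: the four additions in each iteration are
 * independent of one another, but each accumulator needs a register. */
long sum_four_acc(const int *v, size_t n)
{
    long s0 = 0, s1 = 0, s2 = 0, s3 = 0;
    size_t i = 0;
    for (; i + 4 <= n; i += 4) {
        s0 += v[i];
        s1 += v[i + 1];
        s2 += v[i + 2];
        s3 += v[i + 3];
    }
    long total = s0 + s1 + s2 + s3;
    /* Remainder loop: handles the last n % 4 elements. */
    for (; i < n; i++) {
        total += v[i];
    }
    return total;
}
```

With floating point data the two versions could differ slightly in the last bits, because the additions are performed in a different order.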

Each SPE is only capable of issuing two instructions per clock, meaning that at best, each SPE can execute two instructions at the same time. The issue width of a microprocessor can determine a big part of how large the microprocessor will be; for example, the Itanium 2 is a 6-issue core, so being a 2-issue core makes each SPE significantly smaller than most general purpose microprocessors.

In the end, what we see with the SPEs is that they sacrifice some of the normal tricks to improve ILP in favor of being able to cram more SPEs onto a single die, effectively sacrificing some ILP for greater TLP. Given the direction that the industry is headed, a move to a very TLP centric design makes a lot of sense, but at the same time, it will be quite dependent on developers adhering to very specific development models.

Clearly, the architects of Cell saw the SPEs as being used to run a highly parallelizable workload, and as Derek Wilson mentioned in his article about AGEIA's PhysX PPU:

"One of the properties of graphics that made the feature a good fit for a specialized processor inside a PC is the fact that the task is infinitely parallelizable. Hundreds of thousands, and even millions of pixels, need to be processed every frame. The more detailed a rendering needs to be, the more parallel the task becomes. The same is true with physics. As with the visual world, the physical world is continuous rather than discrete. The more processing power we have, the more things we can simulate at once, and the more realistically we can approximate the real world."

With NVIDIA supplying some form of a GPU for PlayStation 3, Cell's array of SPEs has one definite purpose in a gaming console: physics and AI processing. Many have argued that the array of SPEs seems capable of taking over the pixel processing workload of a GPU, but for a high performance console, that's not much of an option. The SPE array could offer better CPU-based 3D rendering, but it would be a tough sell (no pun intended) for this array of SPEs to be the end of dedicated GPU hardware.

70 Comments


PhilAnd - Wednesday, October 5, 2005

Thank you SO MUCH!!! I've been looking for an explanation of the Cell forever and this did it perfectly!! THANK YOU!!! YOU ARE GOD!!!

philpoe - Sunday, July 31, 2005

Under the "High Level Overview of Cell" section, the PPE has 64KB L1 and 512KB L2 cache. On the other hand, under the on-die memory controller section, we see that the XDR memory gives bandwidth of 25.6GB/sec, and the integrated memory controller "significantly reduces memory latencies".

My question then is, what good is the L1 and L2 cache doing? Given the amount of real estate those transistors take up, isn't it more economical to use the system RAM exclusively? The L2 cache takes up about the same amount of space as an SPE, not that it would help but so much to put another one on the die, but what effect on performance would getting rid of the L2 or even L1 cache have on memory with such high bandwidth?

tipoo - Wednesday, December 2, 2015

L1 and L2 latency isn't even approached by the fastest system RAM latencies, XDR included. Nanoseconds vs milliseconds.

jiulemoigt - Saturday, March 26, 2005

Oh #59, it's funnier than that: the PPE does all the work that a modern CPU does with logic, and the easy stuff is done by the extra procs... but that means the messy stuff {think calc equations} cannot be done by the extra procs. So if your game requires more abstract equations rather than simple math {the math understanding simple math}, say AI versus drawing boxes and cubes, your machine will be dependent on the smaller proc. Pipeline length is a game of balance, prediction versus speed: if you can predict a full pipeline, a longer pipe is much faster, but if you miss with the prediction at some point in the pipe, everything after that point is lost, so the longer the pipe is after the miss, the bigger the loss. So a shorter pipe is not necessarily better, as there are tasks the P4 excels at because it has the huge pipe, and the longer the pipe, the higher you can scale the proc speed, which is why Intel chose such a huge pipe knowing the misses would hurt; at the time people still wanted every MHz possible. AMD has a 14 stage pipe because they use decent prediction but better register use, as well as fast pathing, but the biggest reason x86 is fast is that there are reams of working code out there, enough to reach the sun, and a new system will require human hours to clean up so that it can take all the shortcuts that x86 already does. So if the devs are laughing now, it is because they know it's going to be very unfriendly to code for, and they are frustrated that hardware which has years of effort going into its design is not being designed to be easier to code for and to do the hard work for us, instead of doing all the easy work faster, which doesn't help us, and making the hard work harder! And in some cases it will run slower because it was cheaper. I understand how much money M$ lost, which was passed on to NVIDIA, so they won't get away with that this time; they will have to make it cheaper this time around.

AndyKH - Thursday, March 24, 2005

#55, regarding the interview from GameSpot:

He (the guy who is very upset with having to program for in-order cores) states that code will run very crappy on these new cores. Well... I don't know exactly how many pipeline stages the new cores have, but they will without a doubt have a LOT fewer stages than a modern out-of-order core. If you also spend a great amount of design effort to make sure the branch target is calculated very early in the pipeline and couple that with a high clock frequency, you might not even need to fetch your bag of kleenexes to dry your eyes.

Of course, I don't know how long the pipeline in a Cell PPE or in the Xenon's cores is, but everything points to a very short one. Also I don't know how early the branch target is calculated, but I bet it's pretty early.

As an end remark I might add that "computer engineers are not stupid people". In the interview, the guy makes it sound like it will be impossible to run gameplay code on the new console CPUs..... I personally don't think that IBM's and Sony's engineers will design a CPU with so little care.

Regards

Andreas

TheGee - Monday, March 21, 2005

Transputer anyone? The computer on a chip that could be massively paralleled? Difficult to program. This Cell is not such a great leap in ideas, but with the corporate weight behind it, it may succeed where others have failed and break the x86 limitations put on PCs. If the busses are big enough, it would be nice to be able to plug in extra CPUs on a card or suchlike to upgrade or speed up a system without too much difficulty, as long as the software is not CPU limited. But as before, it's best not to hold your breath!

Slaimus - Sunday, March 20, 2005

PS1 was easy to program, so that took off. Sony made PS2 very hard to program if you want to use its vector units efficiently, but since the game developers are already on board, they had to live with it. And Sony will dump the same heap onto developers again with the PS3.

With this kind of complexity, I have a feeling that middleware companies will thrive. Game developers want to create content more than write assembly code, so a few middleware companies will probably supply the libraries while everyone else licenses them. Of course Microsoft has a head start since DirectX already exists and is included in the devkit, but then again, the xbox2 is not as massively parallel.

stephenbrooks - Sunday, March 20, 2005

Ah sod multiple cores. I always preferred playing Tetris anyhow.

knitecrow - Friday, March 18, 2005

GAME DEVELOPER @ GDC RANT ON NEXT GEN CONSOLES

http://www.gamespot.com/news/2005/03/18/news_61204...

All right, here we go. "How Sony and Microsoft are about to screw your game design." These are games in the good old days. We didn't exactly have the best physique, but we were at least a balanced individual, you walk out on the beach, and you were like, you know, pathetic. But you know, you looked like a normal person. These are games today. We've been working really hard--I mean, you can maybe make the argument that this is the game--these are games today. I gotta little more work on that left arm to do, it's going to be as big as our graphics arm soon. This is kind of lame. We really want to be this guy don't we?

Unknown Speaker: No!

[laughter]

Chris Hecker: OK, he was the best guy I could find in like, three seconds in the WiFi network out in the lobby. All right. But how do we get there? Well, I'm going to take a little diversion here. I'm a programmer, so, I have two technical slides, really one technical slide. And that's about it. All right, ready? So there are two kinds of code in a game basically. There's gameplay code and engine code. Engine code, like graphics and physics, takes really giant data structures of homogenous data. I mean, it's all the same, like a lot of vertices are all a big matrix, or whatever, but usually floating point data structures these days. And you have a single small, relatively small hour that grinds away on that. This code is like, wow, it has a lot of math in it, it has to be optimized for super scalar, blah, blah, blah. It's just not actually that hard to write, right? It's pretty well defined what this code does.

The second kind of code we have is AI and gameplay code. Lots of little exceptions. Even if you're doing a simulation-y kind of game, there's tons of tunable parameters, [it's got a lot of interactions], it's a mess. I mean, this code--you look at the gameplay code in the game, and it's crap. Compared to like, my elegant physics simulator or whatever. But this is a code that actually makes the game feel different. This is the kind of code we want to be easy to write and so we can do more experimental stuff. Here is the terrifying realization about the next generation of consoles. I'm about to break about a zillion NDAs, but I didn't sign any NDAs so that's totally cool!

I'm actually a pretty good programmer and mathematician but my real talent is getting people to tell me stuff that they're not supposed to tell me. There we go. Gameplay code will get slower and harder to write on the next generation of consoles. Why is this? Here's our technical slide. Modern CPUs, like the Intel Pentium 4, blah, blah, blah, Pentium [indiscernible] or laptop, whatever is in your desktop, and all the modern PowerPCs, use what's called 'out of order' execution. Basically, out of order execution is there to make really crappy code run fast.

So, they basically--when out of order execution came out on the P6, the Pentium 6 [indiscernible] the Pentium 5, the original Pentium and the one after that. The Pentium Pro I think they called it, it basically annoyed a whole bunch of low level assembly coders, because now all of a sudden, like, the crappiest-ass C code, that like, Joe junior programmer could write, is running as fast as their Assembly, and there's nothing they can do about it. Because the CPU behind their back, is like, reordering that guy's crappy ass C code, to run really well and utilize all the parts of the processor. While this annoyed a whole bunch of people in Scandinavia, it actually…

[laughter]

And this is a great change in the bad old days of 'in order execution,' where you had to be an Assembly language wizard to actually get your CPU to do anything. You were always stalling in the cache, you needed to like--it was crazy. It was a lot of fun to write that code. It wasn't exactly the most productive way of doing experimental programming.

The Xenon and the Cell are both in order chips. What does this mean? The reason they did this, is it's cheaper for them to do this. They can drop a lot of core--you know--one out of order core is about the size of three to four in order cores. So, they can make a lot of in order cores and drop them on a chip, and keep the power down, and sell it for cheap--what does this do to our code?

Well, it makes--it's totally fine for grinding like, symmetric algorithms out of floating point numbers, but for lots of 'if' statements and indirections, it totally sucks. How do we quantify 'totally sucks?' "Rumors" which happen to be from people who are actually working on these chips, are that straight line gameplay code runs at 1/3 to 1/10 the speed at the same clock rate on an in order core as an out of order core.

This means that your new fancy 2 plus gigahertz CPU, in the Xenon, is going to run code as slow or slower than the 733 megahertz CPU in the Xbox 1. The PS3 will be even worse.

This sucks!

[laughter]

There's absolutely nothing you can do about this. Well, you can actually hope that Nintendo uses an out of order core, because they're claiming that they're going to try and make it easy to develop for--except for Nintendo basically totally flailed this generation. So maybe they'll do something next generation. Who knows? You can think about having batchable design simulation-y systems, but like, I'm a huge proponent of simulation in gameplay, but even simulation in gameplay takes kind of messy systems under the hood. And this makes your gameplay harder to write.

You want to just write the gameplay. You don't want to have to like, spend 6 years of a super hardcore engine programmer's time to figure out how to make your gameplay run superscalar. You could do PC games. They are still out of order cores, but a lot of people don't think that's an option nowadays.

tipoo - Thursday, December 3, 2015

It's funny looking back, he wanted them to change the CPU from the Gamecube for the next generation... They ended up using an upclocked Gamecube CPU for the Wii, and a modified tri-core version of it for the Wii U.