ATI's Late Response to G70 - Radeon X1800, X1600 and X1300

by Derek Wilson on October 5, 2005 11:05 AM EST - Posted in GPUs

Pipeline Layout and Details

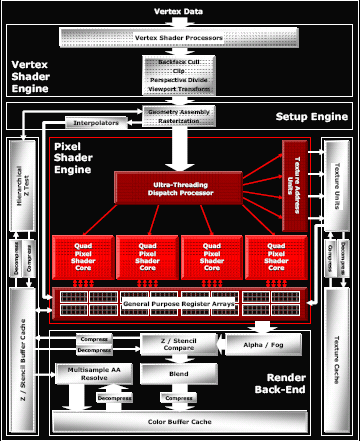

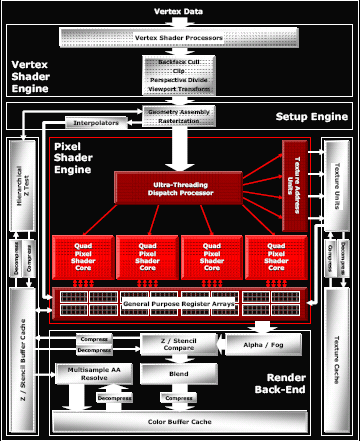

The general layout of the pipeline is very familiar. We have some number of vertex pipelines feeding through a setup engine into a number of pixel pipelines. After fragment processing, data is sent to the back end for things like fog, alpha blending and Z compares. The hardware can easily be scaled down at multiple points; vertex pipes, pixel pipes, Z compare units, texture units, and the like can all be scaled independently. Here's an overview of the high end case.

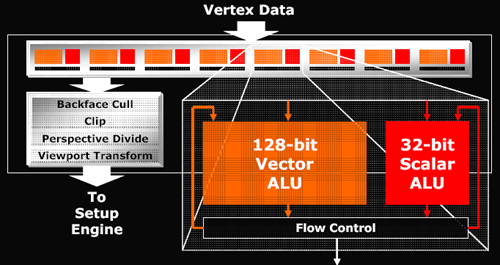

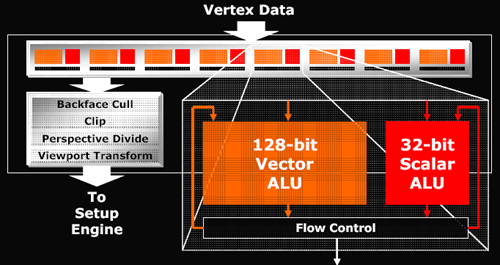

The X1000 series supports a maximum of 8 vertex pipelines. Mid-range and budget parts incorporate 5 and 2 vertex units respectively. Each vertex pipeline is capable of one scalar and one vector operation per clock cycle. The hardware supports shader programs of up to 1024 instructions, but flow control for looping and branching lets those instructions do much more work.

After leaving the vertex pipelines and geometry setup hardware, the data makes its way to the "ultra threading" dispatch processor. This block of hardware is responsible for keeping the pixel pipelines fed and managing which threads are active and running at any given time. Since graphics architectures are inherently very parallel, quite a bit of scheduling work within a single thread can easily be done by the compiler. But as shader code is actually running, some instruction may need to wait on data from a texture fetch that hasn't completed or a branch whose outcome is yet to be determined. In these cases, rather than spin the clocks without doing any work, ATI can run the next set of instructions from another "thread" of data.

Threads are made up of 16 pixels each and up to 512 can be managed at one time (128 in mid-range and budget hardware). These threads aren't exactly like traditional CPU threads, as programmers do not have to create each one specifically. With graphics data, even with only one shader program running, a screen is automatically divided into many "threads" running the same program. When managing multiple threads, rather than requiring a context switch to process a different set of instructions running on different pixels, the GPU can keep multiple contexts open at the same time. To give each of up to 512 threads a workable number of registers, the hardware needs to manage a huge internal register file. But keeping as many threads, pixels, and instructions in flight as possible is key to effectively hiding latency.
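To make the latency-hiding idea concrete, here is a toy model of a dispatcher that issues one instruction per cycle and moves on to another thread whenever the current one is waiting on a texture fetch. This is only a sketch under our own assumptions: the `Thread` class, the `simulate` function, the first-ready scheduling policy, and the latency figures are all hypothetical and are not a description of how ATI's dispatch processor actually works.

```python
from dataclasses import dataclass


@dataclass
class Thread:
    """One batch of pixels; fields are illustrative, not ATI's design."""
    instructions_left: int   # instructions still to issue
    ready_at: int = 0        # first cycle this thread may issue again


def simulate(num_threads, instructions_per_thread, fetch_every, fetch_latency):
    """Return (total_cycles, busy_cycles) for a simple dispatcher.

    Every `fetch_every`-th instruction of a thread is treated as a texture
    fetch that stalls that thread alone for `fetch_latency` cycles.
    """
    threads = [Thread(instructions_per_thread) for _ in range(num_threads)]
    cycle = busy = 0
    while any(t.instructions_left for t in threads):
        # Pick the first thread that has work and is not waiting on a fetch.
        runnable = next((t for t in threads
                         if t.instructions_left and t.ready_at <= cycle), None)
        if runnable is not None:
            issued = instructions_per_thread - runnable.instructions_left + 1
            runnable.instructions_left -= 1
            busy += 1
            if issued % fetch_every == 0:
                # The thread cannot issue again until the fetch returns.
                runnable.ready_at = cycle + 1 + fetch_latency
        cycle += 1  # an idle cycle if nothing was runnable
    return cycle, busy


# Assumed numbers: 8 instructions per thread, a fetch every 4th instruction,
# and a 20-cycle fetch latency.
for n in (1, 2, 8):
    total, busy = simulate(n, 8, 4, 20)
    print(n, "thread(s):", busy, "busy cycles out of", total)
```

With a single thread, the pipeline sits idle for the whole fetch latency. As more threads are available, the same stall is covered by work from other threads, which is the effect the dispatch processor is designed to exploit.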

NVIDIA doesn't explicitly talk about hardware analogous to ATI's "ultra threading dispatch processor", but they must certainly have something to manage active pixels as well. We know from our previous NVIDIA coverage that they are able to keep hundreds of pixels in flight at a time in order to hide latency. It would not be possible or practical to give the driver complete control of scheduling and dispatching pixels as too much time would be wasted deciding what to do next.

We won't be able to answer specifically the question of which hardware is better at hiding latency. The hardware is so different, and instructions will end up running through different paths on NVIDIA and ATI hardware. Scheduling quads, pixels, and instructions is one of the most important tasks that a GPU can do. Latency can be very high for some data, and there is no excuse to let the vast parallelism of the hardware and dataset go to waste rather than using it to hide that latency. Unfortunately, we currently have no test that can determine which hardware's method of scheduling is more efficient. All we can really do for now is look at the final performance offered in games to see which design appears "better".

One thing that we do know is that ATI is able to keep loop granularity smaller with their 16 pixel threads. Dynamic branching is dependent on the ability to do different things on different pixels. The efficiency of an algorithm breaks down if hardware requires that too many pixels follow the same path through a program. At the same time, the hardware gets more complicated (or performance breaks down) if every pixel is treated completely independently.

On NVIDIA hardware, programmers need to design shader programs so that about a thousand pixels at a time can take the same path through a shader. Performance is reduced if different directions through a branch need to be taken in small blocks of pixels. With ATI, every block of 16 pixels can take a different path through a shader. On G70-based hardware, blocks of a few hundred pixels should optimally take the same path. NV4x hardware requires larger blocks still - nearer to 900 in size. The tighter granularity possible on ATI hardware gives developers more freedom in how they design their shaders and take advantage of dynamic branching and flow control. Designing shaders to handle 32x32 blocks of pixels is more difficult than only needing to worry about 4x4 blocks of pixels.
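The effect of granularity can be illustrated with a small calculation. The sketch below counts how many tiles of a given size contain pixels that disagree on a branch, using the 4x4 and 32x32 block sizes mentioned above. The function name `divergent_fraction`, the 64x64 test surface, and the assumption that a mixed tile pays for both sides of the branch are ours, chosen purely for illustration.

```python
def divergent_fraction(mask, block):
    """Fraction of block x block tiles whose pixels disagree on a branch.

    `mask` is a list of rows of booleans: True where a pixel takes the branch.
    In this simplified model, a tile holding both True and False pixels has
    to execute both sides of the branch.
    """
    height, width = len(mask), len(mask[0])
    tiles = divergent = 0
    for top in range(0, height, block):
        for left in range(0, width, block):
            values = {mask[y][x]
                      for y in range(top, min(top + block, height))
                      for x in range(left, min(left + block, width))}
            tiles += 1
            divergent += len(values) > 1
    return divergent / tiles


# Hypothetical 64x64 surface where the branch is taken to the left of a
# vertical edge at x = 18 (think of a shadow boundary).
mask = [[x < 18 for x in range(64)] for _ in range(64)]

print(divergent_fraction(mask, 4))    # 4x4 tiles:   0.0625
print(divergent_fraction(mask, 32))   # 32x32 tiles: 0.5
```

In this example, only the narrow column of 4x4 tiles straddling the edge is mixed, while half of the 32x32 tiles are. Real shaders and real hardware costs are far more complicated, but the trend is the same: the coarser the block, the more pixels end up doing work for a path they did not need.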

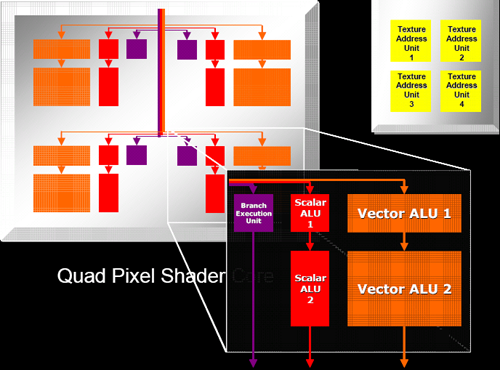

After the code is finally scheduled and dispatched, we come to the pixel shader pipeline. ATI tightly groups pixel shaders in quads and is calling each block of pixel pipes a quad pixel shader core. This language indicates the tight grouping of quads that we already assumed existed on previous hardware.

Each pixel pipe in a quad is able to handle 6 instructions per clock. This is basically the same as R4xx hardware except that ATI is now able to accommodate dynamic branching on their dedicated branch hardware. The 2 scalar, 2 vector, 1 texture per clock arrangement has evidently worked well enough for ATI in the past that they are sticking with it, adding only 1 branch operation that can be issued in parallel with the other 5 instructions.

Of course, branches won't happen nearly as often as math and texture operations, so this hardware will likely be idle most of the time. In any case, having separate hardware for branching that can work in parallel with the rest of the pipeline does make relatively tight loops more efficient than they would be if no other work could be done while a branch was being handled.
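A back-of-the-envelope model shows why this matters for tight loops. The cost model below is deliberately crude and entirely our own assumption: each iteration has some cycles of math and texture work plus some cycles of branch handling, and the two either overlap fully or not at all. The function `loop_cycles` and the cycle counts are hypothetical, not measured values.

```python
def loop_cycles(iterations, body_cycles, branch_cycles, parallel_branch):
    """Total cycles for a loop under a very simple cost model.

    If the branch unit works in parallel with the rest of the pipe, an
    iteration costs whichever of the two takes longer. Otherwise the
    branch handling is added on top of the loop body.
    """
    if parallel_branch:
        per_iteration = max(body_cycles, branch_cycles)
    else:
        per_iteration = body_cycles + branch_cycles
    return iterations * per_iteration


# Assumed tight loop: 100 iterations, 4 cycles of body, 1 cycle of branching.
print(loop_cycles(100, 4, 1, parallel_branch=False))  # 500
print(loop_cycles(100, 4, 1, parallel_branch=True))   # 400
```

The shorter the loop body, the larger the share of time that serialized branch handling would take, which is why the benefit shows up mostly in tight loops.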

All in all, one of the more interesting things about the hardware is its modularity. ATI has been very careful to make each block of the chip independent of the rest. With high end hardware, as much of everything is packed in as possible, but with their mid-range solution, they are much more frugal. The X1600 line will incorporate 3 quads with 12 pixel pipes alongside only 4 texture units and 8 Z compare units. Contrast this to the X1300 and its 4 pixel pipes, 4 texture units and 4 Z compare units and the "16 of everything" X1800 and we can see that the architecture is quite flexible on every level.
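The unit counts quoted on this page can be collected into a small table for comparison. The sketch below simply records the figures from the text; the `GpuConfig` class and its field names are our own, and the "pixels in flight" value is just the thread limit multiplied by the 16-pixel thread size, so it should be read as an upper bound implied by those numbers rather than a measured figure.

```python
from dataclasses import dataclass

PIXELS_PER_THREAD = 16  # thread size quoted in the article


@dataclass(frozen=True)
class GpuConfig:
    """Unit counts as quoted in the article; the class itself is illustrative."""
    name: str
    vertex_pipes: int
    pixel_pipes: int
    texture_units: int
    z_compare_units: int
    max_threads: int

    @property
    def quads(self):
        # Pixel pipes are grouped four to a quad pixel shader core.
        return self.pixel_pipes // 4

    @property
    def pixels_in_flight(self):
        # Upper bound: every managed thread holding a full 16 pixels.
        return self.max_threads * PIXELS_PER_THREAD


CONFIGS = [
    GpuConfig("X1800", 8, 16, 16, 16, 512),  # high end, "16 of everything"
    GpuConfig("X1600", 5, 12, 4, 8, 128),    # mid-range
    GpuConfig("X1300", 2, 4, 4, 4, 128),     # budget
]

for cfg in CONFIGS:
    print(f"{cfg.name}: {cfg.quads} quad(s), "
          f"{cfg.texture_units} texture units, "
          f"up to {cfg.pixels_in_flight} pixels in flight")
```

Laying the numbers out this way makes the X1600's unusual balance easy to see: three quads of shader hardware fed by the same number of texture units as the single-quad X1300.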

103 Comments

DerekWilson - Friday, October 7, 2005 - link

Hello,

Rather than update this article with the tables as we had planned, we decided to go all out and collect enough data to build something really interesting.

http://anandtech.com/video/showdoc.aspx?i=2556

Our extended performance analysis should be enough to better show the strengths and weaknesses of the X1x00 hardware in all the games we tested in this article plus Battlefield 2.

I would like to apologize for not getting more data together in time for this article, but I hope the extended performance tests will help make up for what was lacking here.

And we've got more to come as well -- we will be doing an in-depth follow up on new feature performance and quality as well.

Thanks,

Derek Wilson

MiLLeRBoY - Thursday, October 6, 2005 - link

If NVIDIA puts out a 7800XT with a bigger cooler, which makes the video card dual slots, instead of just one slot. This would allow them to increase the speeds of the RAM and GPU. And if they increase it to 512MB ram, they will knock ATI’s X1800XT off the map completely.

MiLLeRBoY - Thursday, October 6, 2005 - link

oops, 7800 GTX, I mean, lol.

stephenbrooks - Thursday, October 6, 2005 - link

Maybe a solution for all the complaints about review-quality would be for AnandTech to put its reviews through "beta"? :p

waldo - Thursday, October 6, 2005 - link

So, I am back, and as always confused!

Where are we now? We have at THG the same card beating the 7800GTX hands down in several instances....and here at Anand, we have the ATI version barely holding its head above water.....talk about weird inconsistencies....someone is tweaking the numbers or the machines....one or the other.

Some of me would like to give the nod to THG because they have a history of doing more accurate more complete video card reviews, but this is just crazy....can someone at Anand please explain, cause well, I know THG won't.

tomoyo - Thursday, October 6, 2005 - link

In terms of pricing, I think Nvidia has Ati beaten in every category of card currently.

I think the competition that ATI is marketing each card against is as follows (even if the prices have a huge disparity currently):

X1800XT vs 7800GTX

X1800XL vs 7800GT

X1600XT vs 6800/6600GT

X1600Pro vs 6600GT/6600

x1300Pro vs 6600

x1300 vs 6200

From what I've seen of the reviews from anandtech, techreport, and a couple other sources it looks like the X1800XT/XL are pretty competitive with their competition, however I really dislike the extra power consumption and of course the cost of the card. I think the 7800 is a far better solution in terms of most categories except a few minor features like having HDR/AA at the same time. It looks like it's possible the X1800 might have some gains in future games because of the better memory controller and threading pixel shader, but it seems rather useless for now.

The x1600 looks like the biggest disappointment by far. It's nowhere near the league of the 6800 cards and barely outperforms the 6600gt, which has a huge price advantage. The x800gto2 looks like a far better card than the x1600 here. Personally I'm hoping nvidia does what's expected and puts out a 90nm 7600 that has a decent performance gain over the 6600gt. That might be one of the best silent computing cards around when it comes out. (I'm hoping to replace my 6600 with this now that the x1600 is no upgrade for me)

The x1300 actually looks like the most promising chip to me. It's obviously not worthwhile for gamers, but I think it might turn out to be a pretty good drop-in card for non-gaming systems. It's all dependent on whether it can hit the price point for the under $100(or is that under $70) market well. It certainly looks like it'll outperform the 6200 and x300 and be the new standard for entry level systems... until nvidia's next entry card. Not to mention most of the x1x00 generation features are still included with the x1300 card.

AtaStrumf - Thursday, October 6, 2005 - link

Totally disappointed in both ATi and AT.

As for X1300 don't forget this is the best version out of X1300 family and I can't help but remember the FX 5200 Ultra, which looked great but was never really available, because they could not produce it at low enough price point. I think same will happen here.

bob661 - Thursday, October 6, 2005 - link

Very nice summary.

andyc - Wednesday, October 5, 2005 - link

So what card is the "real" competitor to the 7800GT, because frankly, I'm totally confused which card ATI is trying to use to compete against it.

Pete - Wednesday, October 5, 2005 - link

X1800XL.