AMD's Radeon HD 5870 Eyefinity 6 Edition Reviewed

by Anand Lal Shimpi on March 31, 2010 12:01 AM EST

Posted in: GPUs

2GB vs. 1GB: Does it Matter?

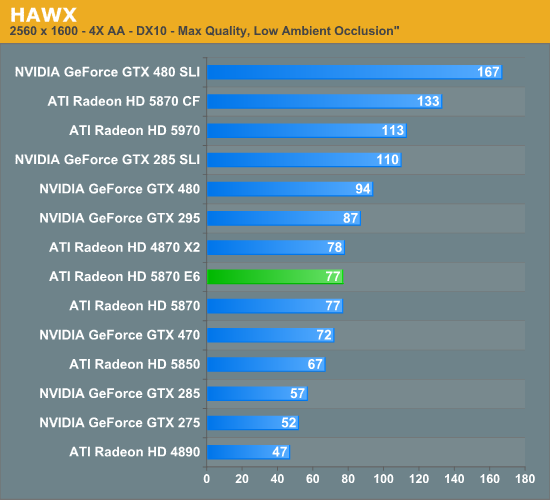

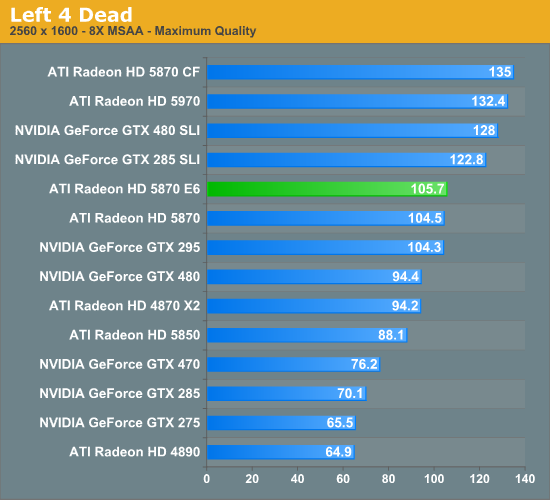

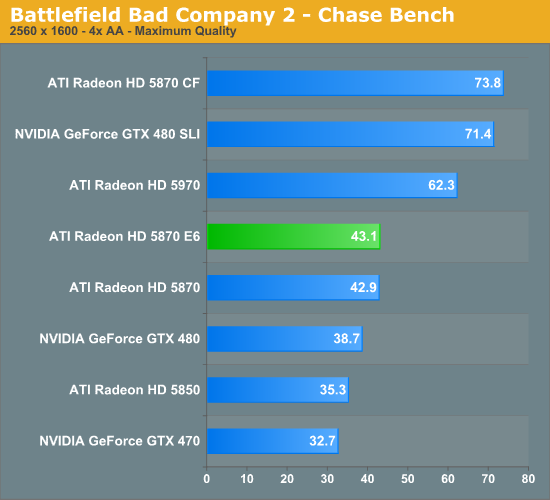

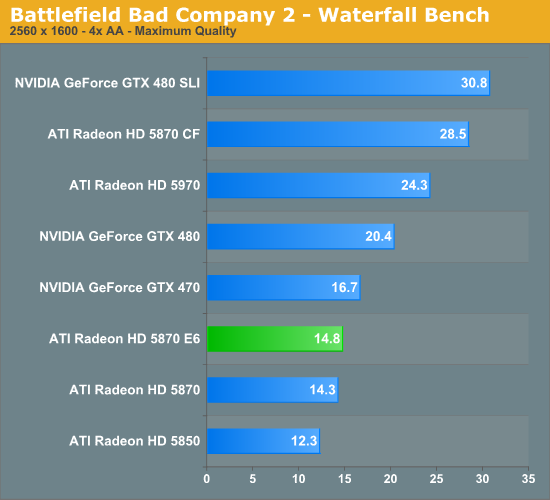

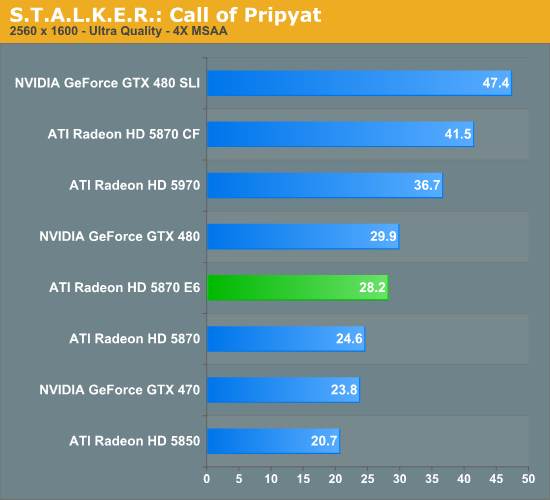

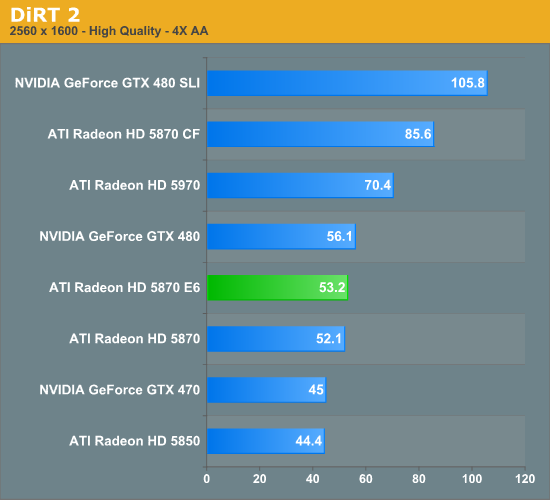

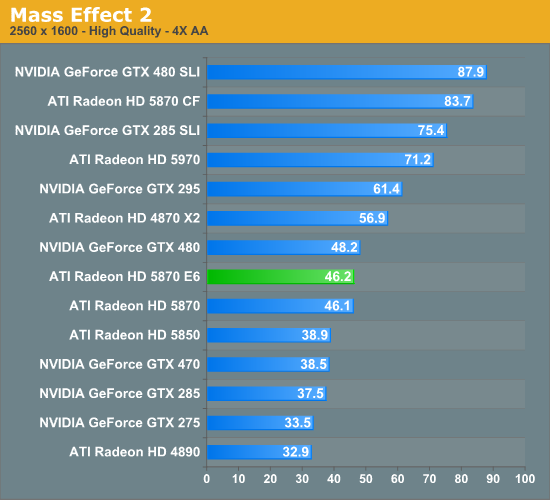

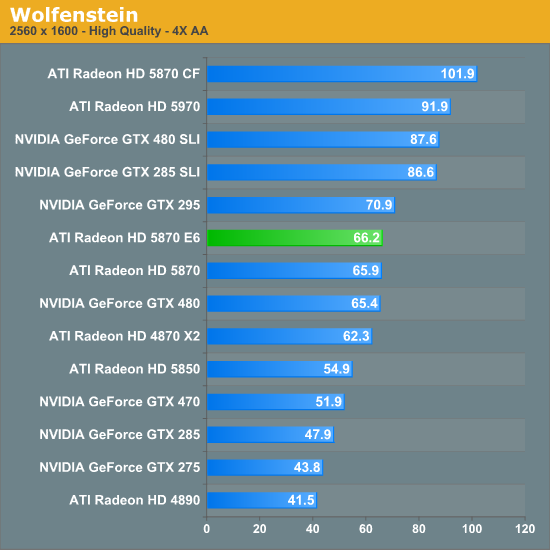

AMD equipped the Eyefinity Edition cards with 2GB of GDDR5 to deal with the increased memory requirements that come with pushing 2x the pixels of a standard 5870. But does the added memory improve performance in single-monitor scenarios?
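To put the pixel counts in perspective, here is a rough sketch. It assumes 1920 x 1080 panels and a 32-bit color buffer, neither of which is stated above, and the helper names are purely illustrative. It compares a three-display group with a six-display group and estimates the size of a single color buffer; textures, render targets and anti-aliasing take far more memory than this, so treat it as a lower bound only.

```python
# Rough pixel-count comparison; panel size and bytes per pixel are assumptions.
PANEL = (1920, 1080)     # assumed per-display resolution
BYTES_PER_PIXEL = 4      # assumed 32-bit color buffer


def total_pixels(displays, panel=PANEL):
    """Pixels in a display group made of identical panels."""
    width, height = panel
    return displays * width * height


def color_buffer_mib(pixels, bytes_per_pixel=BYTES_PER_PIXEL):
    """Size of one color buffer in MiB for the given pixel count."""
    return pixels * bytes_per_pixel / 2**20


three_up = total_pixels(3)
six_up = total_pixels(6)
single_2560 = 2560 * 1600  # the highest single-monitor resolution tested

print(f"3 displays: {three_up:,} pixels")
print(f"6 displays: {six_up:,} pixels ({six_up / three_up:.0f}x)")
print(f"2560 x 1600: {single_2560:,} pixels")
print(f"one color buffer at 6 displays: {color_buffer_mib(six_up):.1f} MiB")
```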

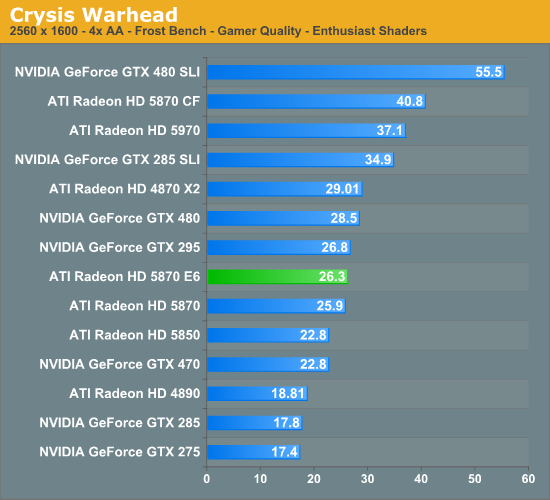

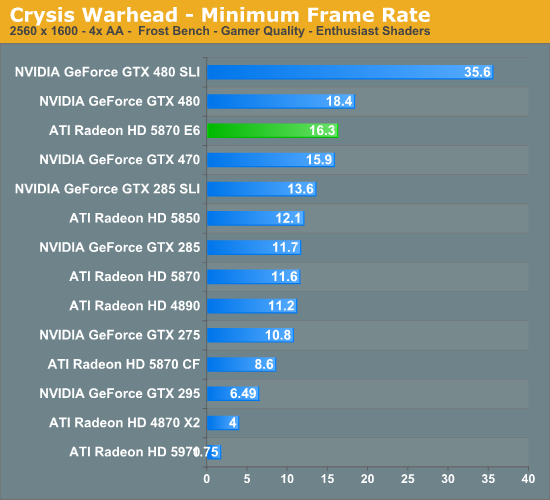

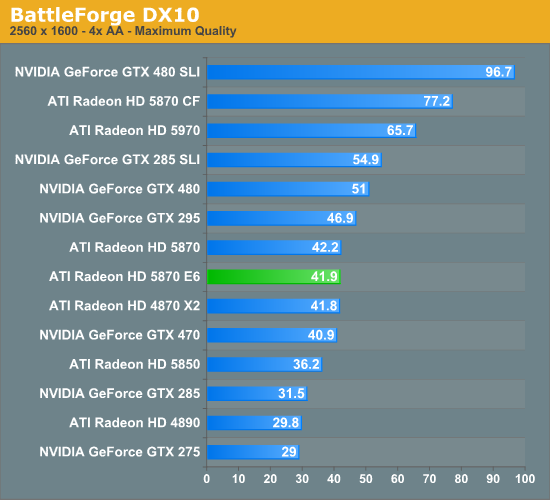

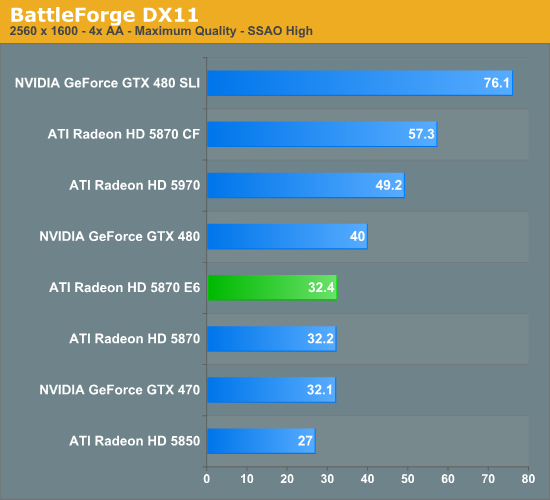

We ran through our standard GPU test suite (the same suite from the GTX 480 review) with our Eyefinity 6 Edition card and found that there's no performance advantage to having a 2GB frame buffer at 2560 x 1600 or below.

Performance did improve in our Crysis Warhead benchmark, but only in minimum frame rate. There we saw a 40% improvement in minimum frame rates at 2560 x 1600, but the dips that benefit don't occur often enough to really increase the average. The rest of our benchmarks don't produce repeatable enough minimum frame rates for us to draw any meaningful conclusions.
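A toy example shows why a large gain in the minimum can leave the average almost untouched. The samples below are invented for illustration and are not measurements from our Crysis Warhead runs: a mostly steady 30 fps trace with a handful of dips, where the dips are 40% shallower in the second trace.

```python
from statistics import mean

# Hypothetical per-second fps samples; these are NOT benchmark results.
steady = [30.0] * 995
trace_a = steady + [10.0] * 5   # a few deep dips
trace_b = steady + [14.0] * 5   # the same dips, 40% shallower


def percent_gain(new, old):
    """Relative improvement of new over old, in percent."""
    return (new / old - 1) * 100


min_gain = percent_gain(min(trace_b), min(trace_a))
avg_gain = percent_gain(mean(trace_b), mean(trace_a))

print(f"minimum: +{min_gain:.0f}%   average: +{avg_gain:.2f}%")
```

With only five dips in a thousand samples, the minimum moves a lot while the average changes by well under a tenth of a percent.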

For single monitor users, I'm not sure that the 2GB frame buffer is worth it for today's games.

78 Comments


BoFox - Wednesday, March 31, 2010

If I wanted huge screen real estate, I'd definitely go for a 1080p projector that can do anywhere from 100" to 20'. Of course, a top-of-the-line one would cost upwards of $10000, but a really nice one would only be a bit over $1000. Give me this over "jail" bars of bezels anytime!

I'm a bit puzzled at why ATI is doing a 2GB version to counter the GTX 480, and not a slightly faster version. Right now is AMD/ATI's real chance to seize the bull's horns with a death grip. By all means they should release a 950-1000MHz version of the 5870, named 5890! Even if the power consumption is 25-50W more, it would still be considerably lower than the GTX 480, and actually pwning it in nearly all game benchmarks. Even better would be to release a 512-bit version just like they did 4 generations ago with HD2900XT. With up to 100% greater memory bandwidth, there would be roughly 20% more performance at 1000MHz core clock across all benchmarks, if not more.

I say this with mercy.. if AMD does not truly seize the moment with a death grip by the horns, AMD will regret it for a long time, if not forever.

bunnyfubbles - Wednesday, March 31, 2010

Why not go with 3 cheaper projectors and use them with eyefinity? One of the oft neglected advantages to Eyefinity is that a properly supported game can actually provide a player with a FOV advantage - they can actually see more of the game world than other players without distorting the image.

This was never a counter to the GTX480, the E6 edition card had been planned long before we knew anything concrete about Fermi. And considering the benches, it's quite obvious that 2GB is not needed for today's games. If ATI was going to introduce a counter to Fermi it would simply be a higher clocked 5870, but even that's not necessary save for bragging rights.

And a 512bit memory interface is the last thing I'd expect. It's actually bizarre you bring up the HD 2900XT as if it was something ATI should look back on for inspiration. If anything the HD 2900XT was ATI's own GTX480 debacle.

1reader - Wednesday, March 31, 2010

I hadn't thought about it that way, but the 2900XT situation was very similar to nVidia's 480GTX situation now. Like you said, definitely something ATI doesn't need to look back on for inspiration. That's why (I believe) ATI switched to GDDR5 as quickly as possible, to get as much throughput through that 256 bit memory interface.

On the other hand though, I have a 2600XT with GDDR3 that makes a perfectly satisfying backup card. It definitely wouldn't have enough power to drive 6 displays though.

Also, what's up with AnandTech? I don't check back for two days, and the site disappears, only to be replaced by this sexy tech website. ;)

brysoncg - Wednesday, March 31, 2010

Here's a thought: get a theater room with 6 hi-def projectors, and set them up in the eyefinity 6 setup. If you spent a little bit of time with it, you would be able to perfectly line up the edges of the projections from each projector, and you then have the eyefinity 6 setup, without the need for bezel correction (no bezel!), and therefore no crosshair problem. The only problem would be the cost....

erple2 - Friday, April 2, 2010

I think that the other problem would be the space. If a 1080p can comfortably drive a 100" screen, having a large enough wall to put 3x2 surfaces on it would become problematic, I'd think. I don't know too many people that have a 21' wide by 8' tall room where they could reasonably project onto...

Plus the screen for that would be ... pricey.

However, some cheaper 720p projectors would be an interesting proposition, particularly projecting on a smaller wall - maybe 1/2 the size? so about 11' wide by 4' tall?
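As a rough check of the wall sizes mentioned above, the sketch below works out the footprint of a 3x2 grid of 16:9 projections from the diagonal alone. It assumes edge-to-edge images with no overlap or gaps, and the function names are only illustrative.

```python
import math


def screen_size_inches(diagonal_in, aspect=(16, 9)):
    """Width and height in inches of a screen with the given diagonal."""
    aw, ah = aspect
    scale = diagonal_in / math.hypot(aw, ah)
    return aw * scale, ah * scale


def wall_size_feet(diagonal_in, cols=3, rows=2):
    """Footprint in feet of a cols x rows grid of identical projections."""
    width_in, height_in = screen_size_inches(diagonal_in)
    return cols * width_in / 12, rows * height_in / 12


for diagonal in (100, 50):
    width_ft, height_ft = wall_size_feet(diagonal)
    print(f'{diagonal}" screens: {width_ft:.1f} ft wide x {height_ft:.1f} ft tall')
```

Six 100" images come out close to the 21' by 8' estimate, and halving the diagonal lands near the 11' by 4' figure.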

Xpl1c1t - Wednesday, March 31, 2010

the ring bus is definitely worth looking back upon

Calin - Thursday, April 1, 2010

Also, unlike the computing units (which you can mostly disable at will in a finished product), any bad transistor in that ring bus would brick the entire chip

BoFox - Thursday, April 1, 2010

True.. 3 cheaper projectors with eyefinity would be an ideal solution.. and the screen could be a bit curved like at many cinema movie theaters today!

On the same day Nvidia released GTX 480, AMD released this 2GB version to counter Nvidia's offering. Of course, AMD promised this 2GB version a long while ago, so it's about time. Perhaps it won't be long before AMD releases the faster 5890.

About the 512-bit bus: It is certainly do-able on a 40nm process, compared to when it was done on 80nm process with a 1024-bit ringbus a while ago on that HD2900XT (I will agree with you here in that it was redundant for the 2900XT)..

____

""Does a 512-bit bus require a die size that's going to be in the neighbourhood (or bigger) of R600 going forward?"

No, through multiple layers of pads, or through distributed pads or even through stacked dies, large memory bit widths are certainly possible. Certainly a certain size and a minimum number of consumers is required to enable this technology, but it's not required to have a large die."

-(Sir Eric Demers, architecture lead on R600, which is still the basis of the 5870 today)

http://www.beyond3d.com/content/interviews/39/5

If a 4890 simply performs around 19% better overall than a 5770 in all games except when using DX11, what shall we point at as the cause of the difference? The GPU cores are nearly identical in terms of clock speed, shaders, ROP's, etc.. with perhaps a slightly better optimization in the R800 architecture and better drivers. The main "obvious" difference is a 62.5% increase in memory bandwidth over the 5770. A 5870 is basically 2x 5770's in one GPU with everything doubled. It has been shown that a 5870 certainly does benefit from greater memory bandwidth.. let's say about 0.2% increase in performance per 1% increase in bandwidth.
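The bandwidth figures behind this argument can be sketched as follows. The bus widths and per-pin data rates are the commonly cited reference specifications for these cards, not numbers given in this thread, so treat them as assumptions; the 0.2 scaling factor is the rule of thumb proposed above, not a measured value.

```python
def bandwidth_gb_s(bus_bits, gbps_per_pin):
    """Peak memory bandwidth in GB/s: bus width in bytes times per-pin data rate."""
    return bus_bits / 8 * gbps_per_pin


# (bus width in bits, effective GDDR5 data rate in Gbps per pin); assumed reference specs
CARDS = {
    "HD 4890": (256, 3.9),
    "HD 5770": (128, 4.8),
    "HD 5870": (256, 4.8),
}

bandwidth = {name: bandwidth_gb_s(*spec) for name, spec in CARDS.items()}
gain_4890_vs_5770 = (bandwidth["HD 4890"] / bandwidth["HD 5770"] - 1) * 100

SCALING = 0.2  # rule of thumb from the comment: % performance per 1% bandwidth


def estimated_perf_gain(bandwidth_gain_pct, scaling=SCALING):
    """Performance gain in percent predicted by the linear rule of thumb."""
    return bandwidth_gain_pct * scaling


for name, value in bandwidth.items():
    print(f"{name}: {value:.1f} GB/s")
print(f"4890 over 5770: +{gain_4890_vs_5770:.1f}% bandwidth")
print(f"512-bit 5870 (+100% bandwidth): about +{estimated_perf_gain(100):.0f}% performance")
```

Under those assumptions the 62.5% figure checks out, and doubling the 5870's bus at the same data rate gives the roughly 20% estimate.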

By the way, Nvidia made quite an interesting statement on the memory bus a short while ago:

"With 3-D interconnects, it can vertically connect two much smaller die. Graphics performance depends in part on the bandwidth for uploading from a buffer to a DRAM. "If we could put the DRAM on top of the GPU, that would be wonderful," Chen said. "Instead of by-32 or by-64 bandwidth, we could increase the bandwidth to more than a thousand and load the buffer in one shot."

Based on any defect density model, yield is a strong function of die size for a complicated manufacturing process, Chen said. A larger die normally yields much worse than the combined yield of two die with each at one-half of the large die size. "Assuming a 3-D die stacking process can yield reasonably well, the net yield and the associated cost can be a significant advantage," he said. "This is particularly true in the case of hybrid integration of different chips such as DRAM and logic, which are manufactured by very different processes.""

http://www.semiconductor.net/article/print/438968-...

Nvidia's own John Chen mentioned increasing the bandwidth from "by-32 or by-64" per chip to "more than a thousand". This translates to 8x1024, which is an 8192-bit bus. Hopefully vertically stacked dies are the future. It would effectively reduce the need for increasingly larger buffer size, and act just like embedded RAM that can instantly load the buffer in one shot. ..a bit like SSD's today (small, but "instant"), and thought to be a pipe-dream a few years ago.
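The "8x1024" arithmetic is just the per-chip interface width multiplied by the number of memory chips. The sketch below assumes eight chips, which is what the multiplication above implies rather than anything stated in the quoted article.

```python
CHIPS = 8  # assumed number of memory chips, implied by "8x1024"


def total_bus_bits(per_chip_bits, chips=CHIPS):
    """Aggregate memory bus width for identical chips wired in parallel."""
    return per_chip_bits * chips


for per_chip in (32, 64, 1024):
    print(f"by-{per_chip} x {CHIPS} chips = {total_bus_bits(per_chip)}-bit bus")
```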

Ramon Zarat - Wednesday, March 31, 2010

The 2900XT used a dual 512bit ring bus topology. The fact that neither ATI nor Nvidia uses this technology today is a hint that it was not efficient enough or too complex to be commercially viable. In that sense, it was not a classic 512bit wide bus, as used by Nvidia's previous generation, or the 256/384/448bit buses in use today.

A 512bit bus would be impossible to implement on the 5000 series simply because the memory controller is physically limited in hardware to "talk" to a 256bit bus. You need twice the traces on the PCB to go from 256 to 512bit and those traces must be, one way or the other, physically linked to the GPU. The only way to speed up the memory access on the 5000 series would be to use faster GDDR5 chips.

SoCalBoomer - Wednesday, March 31, 2010

Unfortunately, a 1080p projector just won't get you the pixels that this thing will.

I use a 2x2 setup on my desk at work and it has far more pixels (at far FAR less price) than a 1080p projector has (which is what? 1920x1080? something like that? - I'm working on 2560x2048)

My question would be if you can set these up as individual monitors just extending the desktop or if you HAVE to use eyefinity? I'd love to be able to do this instead of running dual cards, with the limitations on the motherboard that brings. . .
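For reference, the pixel comparison in this comment works out as below. The 2560x2048 desktop and the 1920x1080 projector resolution are taken from the comment; nothing else is assumed.

```python
def pixel_count(width, height):
    """Total pixels for a given resolution."""
    return width * height


desktop_2x2 = pixel_count(2560, 2048)   # the 2x2 monitor desktop
projector = pixel_count(1920, 1080)     # a single 1080p projector
ratio = desktop_2x2 / projector

print(f"2x2 desktop: {desktop_2x2:,} pixels")
print(f"1080p projector: {projector:,} pixels")
print(f"ratio: {ratio:.2f}x")
```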