AMD Comments on GPU Stuttering, Offers Driver Roadmap & Perspective on Benchmarking

by Ryan Smith on March 26, 2013 2:28 AM EST

Just What Is Stuttering?

Now that we’ve seen a high-level overview of the rendering pipeline, we can dive into the subject of stuttering itself.

What is stuttering? In practice it’s any rendering anomaly that causes the time between frames to vary noticeably. This is admittedly a very generic definition, but it has to be in order to encompass all the different causes of stuttering.

We’ll get into specific scenarios of single-GPU and multi-GPU stuttering in the following pages, but briefly, stuttering can occur at several different points in the rendering pipeline. If the GPU takes longer to render a frame than expected – keeping in mind it’s impossible to accurately predict rendering times ahead of time – then that would result in stuttering. If a driver takes too long to prepare a frame for the GPU, backing up the rendering pipeline, that would result in stuttering. If a game simulation step takes too long and dispatches a frame later than it would have, or simply finds itself waiting too long before Windows lets it submit the next frame, that would result in stuttering. And if the CPU/OS is too busy to service an application or driver as soon as it would like, that would result in stuttering. The point of all of this being that stuttering and other pacing anomalies can occur at different points of the rendering pipeline, and become the responsibility of different hardware and software components.

Complicating all of this is the fact that Windows is not a real-time operating system, meaning that Windows cannot guarantee that it will execute any given command within a certain period of time. Essentially, Windows will get around to it when it can. In order to achieve the kind of millisecond-level response time that applications and drivers need to ensure smoothness, Windows has to be overprovisioned to make sure it has excess resources. This is part of the reason the context queue exists in the first place: to serve as a buffer for when Windows can’t get the next frame passed down quickly enough.

Ultimately, while Windows will make a best effort to get things done on time, the fact of the matter is that between the OS and the fact that PCs are composed of widely varied hardware, the software/hardware stack makes it virtually impossible to eliminate stuttering. Through careful profiling and optimization it’s possible to get very close, but as the PC is not a fixed platform, developers cannot count on any frame or any specific draw call being completed within a certain amount of time. For that kind of rendering pipeline consistency we’d have to look towards fixed platforms such as game consoles.

Moving on, stuttering is usually – though not always – a problem particular to gaming with v-sync disabled. When v-sync is enabled it places a hard floor on the interval between frames presented to the user. For a typical 60Hz monitor this means an interval of no shorter than 16.7ms (1000ms / 60), with any longer interval being a multiple of 16.7ms.

The significance of this is that if a game can consistently simulate and render at more than 60fps, v-sync effectively limits it to 60fps. The end result is that once the context queue fills up, the application is blocked from submitting any further frames until the next scheduled frame is displayed. This fixed 16.7ms cycle makes it very easy to schedule frames and will typically minimize any stuttering. Of course v-sync also adds latency to the process, since we’re now waiting on the GPU buffer to swap.
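To make the v-sync arithmetic concrete, here is a minimal sketch of how frame presentation snaps to refresh boundaries. It is a deliberately simplified model of our own: it assumes a frame is shown at the first refresh after it is ready, with at most one new frame per refresh, and it ignores the context queue and any back-pressure on the application. The function names and the sample timestamps are hypothetical.

```python
import math

REFRESH_HZ = 60
REFRESH_MS = 1000.0 / REFRESH_HZ  # roughly 16.67 ms per refresh on a 60Hz display


def vsync_display_times(ready_times_ms, refresh_ms=REFRESH_MS):
    """Return the refresh time at which each frame would be shown.

    Simplified model: a finished frame waits for the next refresh
    boundary, and at most one new frame is shown per refresh.
    """
    shown = []
    last_slot = -1
    for t in ready_times_ms:
        slot = math.ceil(t / refresh_ms)  # first refresh at or after the frame is ready
        slot = max(slot, last_slot + 1)   # only one new frame per refresh
        shown.append(slot * refresh_ms)
        last_slot = slot
    return shown


def intervals(times):
    """Differences between consecutive timestamps."""
    return [b - a for a, b in zip(times, times[1:])]


# Hypothetical times (ms) at which four frames finish rendering.
# The third frame is late and misses a refresh.
ready = [10, 20, 55, 60]
shown = vsync_display_times(ready)

# Express each displayed interval as a whole number of refreshes.
print([round(i / REFRESH_MS) for i in intervals(shown)])  # [1, 2, 1]
```

The late frame shows up as a doubled interval (about 33.3ms) rather than an arbitrary one, which is the "multiples of the refresh interval" behavior described above.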

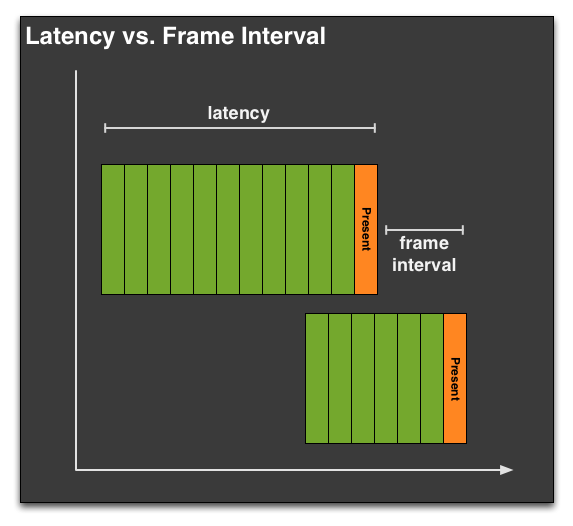

Throwing a few more definitions out before we move on, it’s important we differentiate between latency and the frame interval. Though latency gets thrown around as the time between frames, within the world of computer science and graphics that is not accurate, as latency has a different definition. Latency in this case is how long the entire rendering pipeline takes from start to end – from the moment the user clicks to the moment the first frame showing a response is displayed to the user. Most readers are probably more familiar with this concept as input lag, as latency in the rendering pipeline is a significant component of input lag.

Latency is closely related to, but not identical to, the frame interval. Unlike latency, the frame interval is merely the time between frames, typically defined as the time (interval) between frames being displayed at the end of the rendering pipeline by the GPU performing a buffer swap. The two usually track each other, but thanks to the context queue it’s possible (and sometimes even likely) for a frame to go through the rendering pipeline with a high latency, while still being displayed at a consistent frame interval. For that matter the opposite can also happen.

When we’re looking at stuttering, what we’re really looking at is the frame interval rather than the latency. It’s possible to measure the latency separately, but whether it’s a software tool like FRAPS or something brute-force such as using a high-speed camera to measure the time between frames, what we’re seeing is the frame interval or a derivation thereof. The context queue means that the frame interval is not equivalent to the latency.

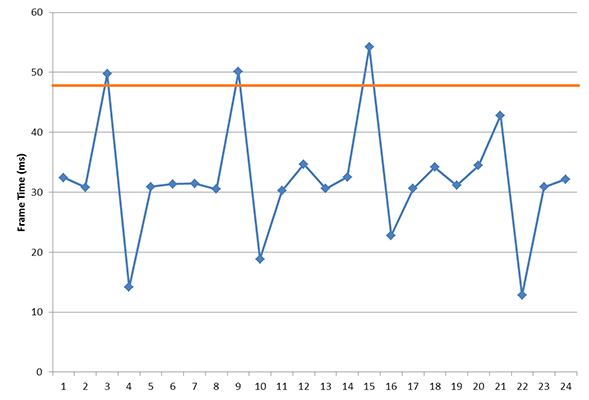

Finally, in our definition of stuttering we also need to somehow define when stuttering becomes apparent. Like input lag and other visual phenomena, there exists a point where stuttering is or isn’t visible to any given user. As we’ve already established that it’s virtually impossible to eliminate stuttering entirely on a variable platform like the PC, stuttering will always be with us to some degree, particularly if v-sync is disabled.

The problem is that this threshold is going to vary from person to person, and as such the idea of what an acceptable amount of stuttering would be is also going to vary depending on who you ask. If a frame takes 5ms longer than the previous, is that going to be noticeable? 10ms? 30ms? And what if this is at 30fps versus 60fps?

The $64K question: where is the cutoff for "good enough" stutter?
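To see why a single cutoff is hard to pick, consider a minimal sketch that flags frames taking noticeably longer than the one before. The thresholds and frame times here are arbitrary values chosen for illustration; nothing in this sketch says where the perceptual cutoff actually lies.

```python
def flag_stutter(frame_times_ms, threshold_ms):
    """Return indices of frames that took more than threshold_ms
    longer than the frame immediately before them."""
    return [
        i
        for i in range(1, len(frame_times_ms))
        if frame_times_ms[i] - frame_times_ms[i - 1] > threshold_ms
    ]


def relative_increase(base_ms, extra_ms):
    """Extra frame time as a fraction of the baseline frame time."""
    return extra_ms / base_ms


# Hypothetical frame times in milliseconds.
frame_times = [16.7, 16.9, 27.0, 16.5, 16.8, 48.0]

print(flag_stutter(frame_times, 5))   # [2, 5]
print(flag_stutter(frame_times, 30))  # [5]

# The same 5 ms of extra frame time is a larger relative change at
# 60fps than at 30fps.
print(round(relative_increase(1000 / 60, 5), 2))  # 0.3
print(round(relative_increase(1000 / 30, 5), 2))  # 0.15
```

Changing the threshold changes which frames count as stutter, and the same absolute delay is a different proportion of the frame time at different framerates, so the measurement alone does not settle what a user will notice.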

In our discussion with AMD, AMD brought up a very simple but very important point: while we can objectively measure instances of stuttering with the right tools, we cannot objectively measure the impact of stuttering on the user. We can make suggestions for what’s acceptable and set common-sense guidelines for how much of a variance is too much – similar to how 60fps is the commonly accepted threshold for smooth gameplay – but nothing short of a double-blind trial will tell us whether any given instance of stuttering is noticeable to any given individual.

AMD didn’t have all of the answers to this one, and frankly neither do we. Variance will always exist and so some degree of stuttering will always be present. The only point we can really make is the same point AMD made to us, which is that stuttering is only going to matter when it impacts the user. If the user cannot see stuttering then stuttering should no longer be an issue, even if we can measure some small degree of stuttering still occurring. Like input lag, framerates, and other aspects of rendering, there is going to be a point where stuttering can become “good enough” for most users.

103 Comments

eezip - Tuesday, March 26, 2013 - link

First one! Wow, I'm lame. Thanks Ryan - keep 'em coming!

B3an - Tuesday, March 26, 2013 - link

Yes very lame. You should sit down and think what you're doing with your life and what kind of sad person you are.

xaml - Tuesday, March 26, 2013 - link

It reads as if you didn't, so why do you point a finger.

stickmansam - Tuesday, March 26, 2013 - link

I agree with the double blinding idea. Techreport had some videos on the Skyrim stuttering and I showed it to my bro with the card names covered and he actually preferred the AMD card. Personally I thought both of them were playable since the 240fps video exaggerated any stuttering issues. If you can't tell the difference in a 60hz or 120hz video/monitor there is no difference.

It would be nice if someone would develop a tool to measure the frames as they are being displayed, like as they are actually being viewed.

krumme - Tuesday, March 26, 2013 - link

The benefit of a blind test is twofold:

It removes all the complexity involved in testing, and gets to the point where it matters.

Secondly, we get an opinion as to what benefit the game gets from going to higher quality settings.

Anand for much good, have the same staff, and we will get to know Ryan preferences in just a few rounds of testing.

Then he can have a nice assistant changing the cards for him :)

DanNeely - Tuesday, March 26, 2013 - link

While not blind, HardOCP's maximum playable settings testing is done to capture this. They report min/avg/max/graphed FPS; but at whatever combination of settings gave the most eye candy while still being fast and smooth enough to be enjoyable. At times this has resulted in observations that "while the raw FPS numbers imply that turning on X should be doable the gameplay results indicated otherwise" (generally due to stuttering problems).

Havor - Tuesday, March 26, 2013 - link

I always liked HardOCP's maximum playable settings approach.

But now I think that Ryan Shrout from PC Perspective is doing the best testing there is, by actually capturing all the frames with a capture card.

So no testing at the start as FRAPS does, or somewhere in the middle, but real-world frame output; you can't do better than that.

http://www.pcper.com/reviews/Graphics-Cards/Frame-...

http://www.pcper.com/reviews/Graphics-Cards/Frame-...

http://www.pcper.com/reviews/Graphics-Cards/Frame-...

It's a really interesting read, and I hope they will start doing testing real soon, as hard numbers are hard to come by, as there is still no perfect way of testing frame times.

Sabresiberian - Tuesday, March 26, 2013 - link

"Playable" and "optimal" are different things; for the most part no one is suggesting the games and cards that have more problems with stuttering are "unplayable".

And, some people don't notice what bugs the fire out of others. Stuttering is one of those things. I think this has a lot to do with the fact that these problems have existed for quite awhile and people just got used to them, so kind of automatically ignore them.

So, I agree, if you don't notice it then it's not important. But if you do, then it is. :) I noticed this phenomenon years ago, and am very excited to see numbers that people can show quantifying the situation so that it can be discussed on more than a seat-of-the-pants level.

Soulwager - Tuesday, March 26, 2013 - link

The problem with double blinding is that some people notice more than others. If you're used to high end equipment on a 120hz monitor, you'll notice a hell of a lot more problems than dude off the street that normally plays on his laptop.

medi01 - Wednesday, March 27, 2013 - link

Last time I've checked on toms, AMD's GPUs were better in this regard.

Looks like yet another article to "compensate" for 7790.